We run Claude rollouts for a living, so we watch Claude Code account bans the way ops teams watch incident channels. The pattern over the past few months is clear: enforcement is automated, the appeal path is a Google Form, and resolution takes anywhere from several days to several months.

Two events made this concrete for the Australian developer community.

Tom's Hardware reported on a 60-employee company cut off from Claude with no warning and a vague 'usage policy violation' message. The only recourse was submitting a form. That outcome is no longer an edge case. It's the expected model.

On March 31, 2026, Anthropic accidentally pushed Claude Code's source maps to npm. Researchers extracted the harness logic, internal flags, and references to abuse-detection signals. None of it is official documentation, but it gave the community a much sharper picture of what the automated systems actually watch.

If your team depends on Claude Code for delivery, you can't take account access for granted. The seven rules below are how we configure Claude infrastructure for clients across Sydney and Melbourne. They draw on Anthropic's official docs, the post-leak community analysis, and a long tail of public ban reports.

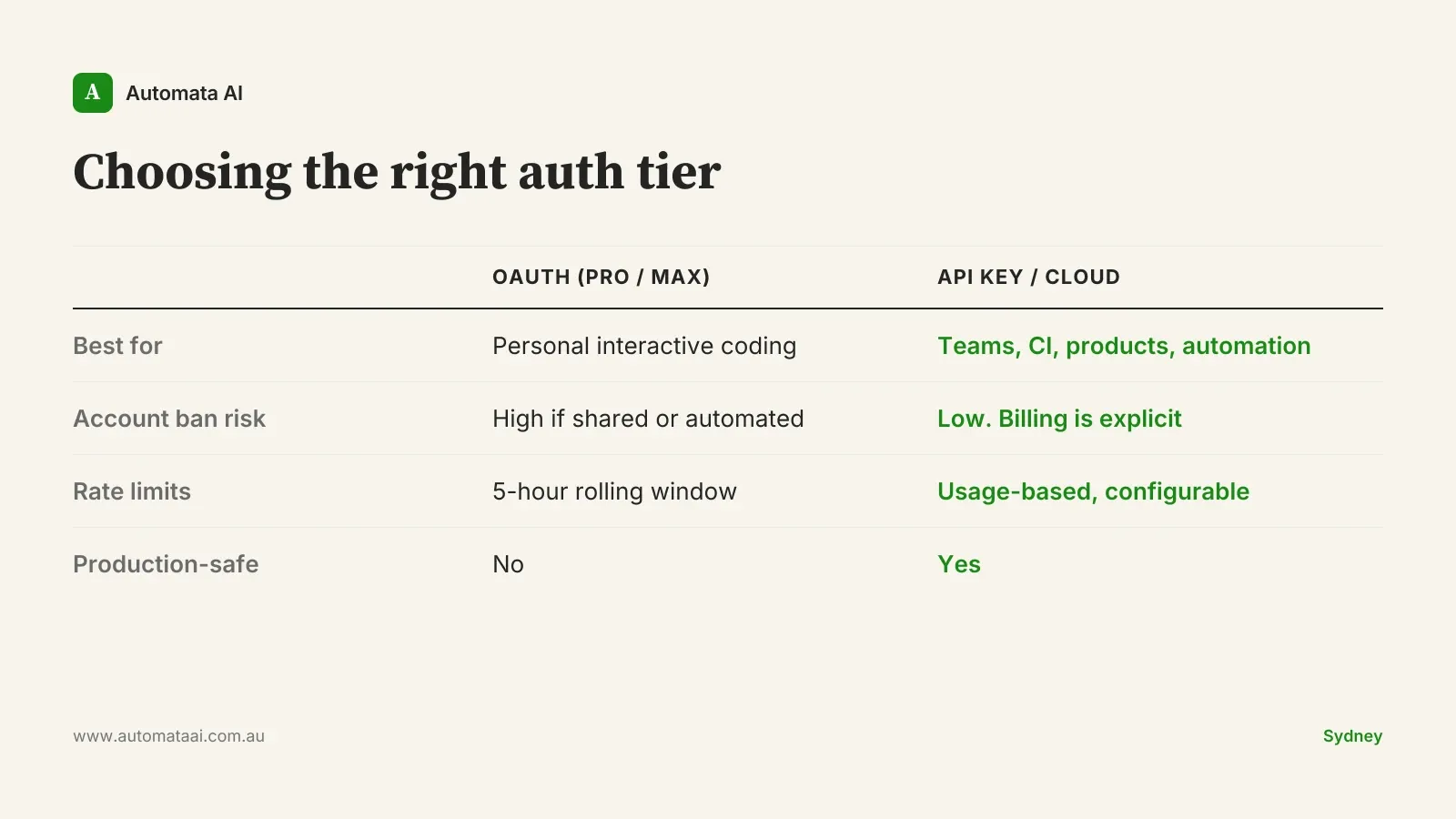

One principle ties all seven together: one human, one subscription, one beneficiary. That's not Anthropic's exact wording; it's our shorthand for their actual policy. OAuth (Pro and Max) is scoped to the person who bought it. Everything else belongs on an API key, AWS Bedrock, or Google Cloud Vertex.

Personal coding. OAuth (Pro or Max) is the right tool.

Team bot, shared agent, or contractor work. API key.

Product or SaaS calling Claude. API key.

Production systems. Bedrock or Vertex.
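The mapping above comes down to one question: who benefits from the calls? Here is a minimal Python sketch of that test. The `Auth` enum and `recommended_auth` function are hypothetical names for illustration, and the logic covers only the four cases listed, not every edge of Anthropic's terms.

```python
from enum import Enum


class Auth(Enum):
    OAUTH = "OAuth (Pro or Max)"
    API_KEY = "API key"
    CLOUD = "Bedrock or Vertex"


def recommended_auth(seat_holder: str, beneficiaries: set[str], production: bool) -> Auth:
    """One human, one subscription, one beneficiary (illustrative only)."""
    if production:
        return Auth.CLOUD  # Production systems belong on Bedrock or Vertex.
    if beneficiaries - {seat_holder}:
        return Auth.API_KEY  # Someone else benefits: team bot, SaaS, contractor work.
    return Auth.OAUTH  # Personal coding by the seat-holder.
```

For example, `recommended_auth("alice", {"alice", "client"}, production=False)` returns `Auth.API_KEY`, because the client benefits from Alice's calls.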

Rule 1: Fix the auth precedence trap

This is the single most common setup error we find in client environments, and it silently routes traffic to the wrong billing tier.

In Claude Code, ANTHROPIC_API_KEY takes priority over CLAUDE_CODE_OAUTH_TOKEN. If you exported an API key in your shell at any point, even buried in a .zshrc you haven't opened in months, Claude Code will use it instead of your Max subscription. You think you're on Max. You're burning API credits, often under tighter rate limits.

To verify: open Claude Code, run /status, and check two fields. apiKeySource should show none. rateLimitType should show 5h_limit. If apiKeySource shows anything else, find the key in your shell config, remove it, and recheck.
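Because the stray key usually lives in a shell startup file, it can help to search those files directly as well as running /status. The sketch below is our own helper, not an Anthropic tool: it looks for ANTHROPIC_API_KEY in the current environment and in a handful of common bash and zsh startup files. The `SHELL_FILES` list is an assumption; fish, direnv, or a secrets manager would need separate checks.

```python
import os
import re
from pathlib import Path

# Common bash/zsh startup files. This list is an assumption; fish, direnv,
# or a secrets manager would need separate checks.
SHELL_FILES = [".zshrc", ".zprofile", ".zshenv", ".bashrc", ".bash_profile", ".profile"]

# Matches `export ANTHROPIC_API_KEY=...` or `ANTHROPIC_API_KEY=...`.
# Commented-out lines start with `#`, so they don't match.
KEY_PATTERN = re.compile(r"^\s*(?:export\s+)?ANTHROPIC_API_KEY\s*=")


def find_api_key_lines(text: str) -> list[int]:
    """Return 1-based line numbers in `text` that set ANTHROPIC_API_KEY."""
    return [n for n, line in enumerate(text.splitlines(), start=1) if KEY_PATTERN.match(line)]


def find_api_key_sources(env: dict[str, str], home: Path) -> list[str]:
    """List every place ANTHROPIC_API_KEY is set: the environment and shell files."""
    sources = []
    if env.get("ANTHROPIC_API_KEY"):
        sources.append("current environment")
    for name in SHELL_FILES:
        path = home / name
        if path.is_file():
            for n in find_api_key_lines(path.read_text(errors="ignore")):
                sources.append(f"{path}:{n}")
    return sources


if __name__ == "__main__":
    hits = find_api_key_sources(dict(os.environ), Path.home())
    if hits:
        print("ANTHROPIC_API_KEY is set here; Claude Code will prefer it over your subscription:")
        for hit in hits:
            print(f"  {hit}")
    else:
        print("No ANTHROPIC_API_KEY found in the environment or common shell files.")
```

After removing any hits you didn't intend, open a fresh shell so the old environment is gone, then confirm with /status.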

Rule 2: Don't share a seat

Anti-sharing detection runs on combined signals, not a single trigger. Based on the post-leak analysis and public ban reports, the system appears to weigh several factors: the same account active across multiple devices in a short window, different IP addresses or OS fingerprints stacked together, token consumption well above typical interactive use, and billing metadata that doesn't match the account profile.

No single signal trips the alarm. Several together do.
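To make "several together do" concrete, here is a toy scoring model. Everything in it is invented for illustration: the signal names paraphrase the list above, and the weights and threshold are arbitrary placeholders. This is not Anthropic's detection logic, which is not public.

```python
# Toy model only: signal names, weights, and threshold are invented placeholders
# and do NOT describe Anthropic's actual detection system.
WEIGHTS = {
    "many_devices_short_window": 0.3,
    "mixed_ips_or_os_fingerprints": 0.3,
    "token_use_far_above_interactive": 0.3,
    "billing_metadata_mismatch": 0.3,
}
THRESHOLD = 0.5


def risk_score(signals: set[str]) -> float:
    """Sum the weights of the signals that are present."""
    return sum(WEIGHTS[s] for s in signals)


def flagged(signals: set[str]) -> bool:
    """An account is flagged only when the combined score crosses the threshold."""
    return risk_score(signals) >= THRESHOLD
```

With these placeholder numbers, any one signal scores 0.3 and stays under the 0.5 threshold, while any two together cross it. The real inputs and weighting are unknown; the point is only the shape of the logic.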

If a team wants Claude Code, the answer is a Team Plan or API billing, not one Max seat split between three engineers. A Team Plan currently costs roughly $25 AUD per user per month. An unexplained ban during a release cycle costs considerably more than those extra seats.

Rule 3: Never run a product on personal OAuth

These are the highest-risk patterns we encounter in production environments:

Shipping a product that authenticates with an OAuth token. Fastest path to a permanent ban.

Multi-tenant SaaS signing into Claude on behalf of users. Every call is a shared-seat violation.

Reselling Claude access in any form. Explicitly prohibited in the usage policy.

Pulling tokens from ~/.claude.json and routing them through a gateway. Treated as credential misuse.

Creating a new account immediately after a ban. Compounds the violation rather than resetting it.

The test is simple. If any human other than the seat-holder benefits from the Claude calls, you need an API key. That's not because Anthropic is hostile to teams (it actively supports team use through proper channels), but because the detection logic is built around the OAuth contract, and it doesn't negotiate.
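One quick way to apply that test to a codebase is to search it for personal OAuth credentials. This sketch flags references to CLAUDE_CODE_OAUTH_TOKEN and to the ~/.claude.json file. The pattern list, the file-suffix filter, and the function names are assumptions to adapt, and a clean result does not prove compliance.

```python
import re
from pathlib import Path

# Strings that suggest code is using personal OAuth credentials. The list is an
# illustrative assumption; extend it with whatever your own tooling uses.
RISKY_PATTERNS = {
    "CLAUDE_CODE_OAUTH_TOKEN": re.compile(r"CLAUDE_CODE_OAUTH_TOKEN"),
    "~/.claude.json": re.compile(r"\.claude\.json"),
}
SOURCE_SUFFIXES = {".py", ".ts", ".js", ".go", ".java", ".yml", ".yaml", ".toml", ".json"}
SKIP_DIRS = {".git", "node_modules", ".venv"}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern label) for each risky reference in `text`."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in RISKY_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, label))
    return hits


def scan_repo(root: Path) -> list[str]:
    """Scan source and config files under `root`, skipping vendored directories."""
    findings = []
    for path in root.rglob("*"):
        if SKIP_DIRS.intersection(path.relative_to(root).parts) or not path.is_file():
            continue
        if path.suffix in SOURCE_SUFFIXES:
            for lineno, label in scan_text(path.read_text(errors="ignore")):
                findings.append(f"{path}:{lineno}: references {label}")
    return findings
```

Calling `scan_repo(Path("."))` from a pre-merge check and failing the build when it returns anything keeps the question from being rediscovered in production.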

Rule 4: Use API keys for CI and automation

Running CI through Claude is not against the rules. The risk emerges when your traffic stops looking like a developer working interactively and starts looking like backend infrastructure consuming tokens at machine speed.

CI pipelines, cron jobs, background agents, scheduled tasks. API key.

Local interactive Claude Code on your own machine. OAuth.

The closer your usage profile resembles an always-on process running at scale, the more important it is to be on API billing, where that pattern is the explicitly intended model.
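In CI, a cheap guardrail is to fail the job early if it is set up to authenticate with a personal OAuth token. The sketch below assumes your runner sets `CI=true` (GitHub Actions, GitLab CI, and CircleCI do) and that credentials arrive through the two environment variables discussed in Rule 1; `ci_auth_problem` is a hypothetical helper.

```python
from typing import Mapping, Optional


def ci_auth_problem(env: Mapping[str, str]) -> Optional[str]:
    """Return a reason to fail the CI job, or None if auth looks right.

    Assumes the runner sets CI=true and that credentials arrive via the
    ANTHROPIC_API_KEY and CLAUDE_CODE_OAUTH_TOKEN environment variables.
    """
    if env.get("CI", "").lower() not in {"true", "1"}:
        return None  # Local run: interactive OAuth is fine here.
    if env.get("CLAUDE_CODE_OAUTH_TOKEN"):
        return "CLAUDE_CODE_OAUTH_TOKEN is set in CI; remove it and use an API key."
    if not env.get("ANTHROPIC_API_KEY"):
        return "No ANTHROPIC_API_KEY configured for this CI job."
    return None
```

At the start of the pipeline step, call `ci_auth_problem(os.environ)`; if it returns a message, print it and exit non-zero. The check flags an OAuth token even when an API key is also present, since a personal credential has no business sitting in CI secrets.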

When these rules don't apply

If you're a solo developer using Claude Code interactively on your own machine, for your own work, with no CI integration and no automation, rules 2 through 4 don't apply to you. OAuth is exactly the right tool for personal interactive use. Those rules exist for teams, products, and anyone whose usage pattern could read as infrastructure rather than a person working through a problem.

Rules 1 and 5 through 7 apply regardless: auth precedence, content moderation, and ban handling don't depend on how many people use the account. For a solo developer on OAuth, rules 2 through 4 are noise.

Rule 5: Content minefields are pattern problems, not intent problems

Automated moderation reacts to topic clusters, not purpose. Legitimate research can hit the same signals as bad-faith requests when the subject area involves chemicals, aerosols, weapons, malware, credentials, bulk scraping, or high-volume output extraction.

Community reports include people flagged for translating kanji engraved on a kitchen knife, and for asking about aerosol droplet sizes in an agricultural context. We can't verify each case individually, but the direction is consistent: when a topic pattern reads as sensitive, automated thresholds apply regardless of actual intent.

Two habits reduce exposure. First, lead with context when the topic is borderline: one sentence establishing professional purpose shifts the framing and costs nothing. Second, keep sessions focused, because stacking multiple sensitive-adjacent requests into one conversation amplifies the signal.

Rule 6: Don't treat a ban as a reset opportunity

The instinct after a ban is to create a new account and keep moving. That instinct makes the situation worse.

Anthropic's detection systems flag account creation immediately following a termination event. Post-leak community analysis suggests evasion attempts are scored as an additional signal, not treated as separate incidents. A new account doesn't give you a clean slate.

The correct response is to file an appeal through the official channel and wait. If you're running production workloads, you should already have API-key-based fallback in place before any ban happens.

Rule 7: Build the fallback before you need it

If any production workflow depends on Claude Code's OAuth path, the question isn't whether to have fallback access. It's whether you've built it before something breaks.

The Anthropic API, AWS Bedrock, and Google Cloud Vertex all provide Claude access with service agreements, billing controls, and no shared-seat risk. For anything client-facing or revenue-critical, OAuth is the wrong foundation. The cost difference between a Max subscription and Bedrock at typical Australian mid-market production volumes is usually under $200 AUD per month. The cost of an unplanned outage during a client delivery is a multiple of that.
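The fallback itself can be as simple as an ordered list of providers tried in turn. This is a minimal sketch: the provider callables are hypothetical wrappers you would write around the Anthropic API, Bedrock, or Vertex SDKs, and a production version would add timeouts, retries with backoff, and logging.

```python
from typing import Callable, Sequence, Tuple

# A provider is a (name, call) pair. Each call is a hypothetical wrapper you would
# write around the Anthropic API, Bedrock, or Vertex SDK: prompt in, text out.
Provider = Tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order and return (provider name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # Real code should catch each SDK's specific error types.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("All providers failed: " + "; ".join(errors))
```

Returning the provider name alongside the response means you can log which path served each request and notice when traffic has quietly shifted to the fallback.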

The appeal path exists, but it's not a recovery strategy. Set up the right auth tier now, document your production fallback, and treat account access as infrastructure rather than an assumption. The organisations that get through a ban without losing delivery time are the ones that planned for it before it happened.