Most Australian enterprise risk teams have an AI compute concentration concern sitting somewhere in their vendor risk register. For Claude deployments specifically, the concern usually comes down to one thing: what happens to model availability if the primary cloud running Anthropic's infrastructure runs into serious trouble.

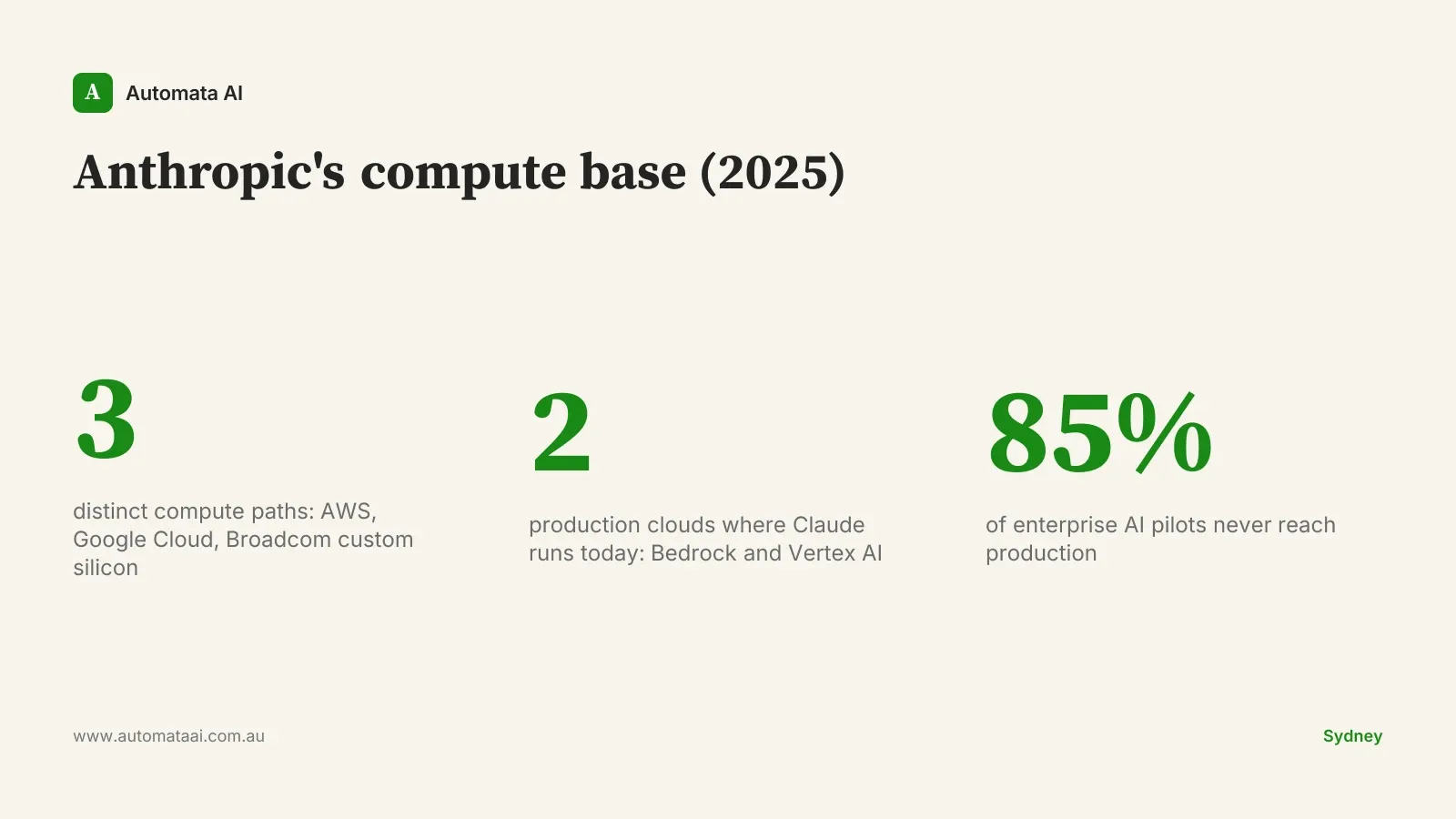

Anthropic's recent compute agreements with Google and Broadcom change that risk profile. Three implications are worth working through for Australian enterprises evaluating Claude as a long-term platform.

Why compute partnerships are capability decisions

The binding constraint on frontier AI model development isn't talent or algorithms. It's chips. More precisely, it's the data centre capacity to train and run them at scale. Every major lab is in a multi-year race to lock in enough compute for next-generation models.

Anthropic already had a deep capital and infrastructure relationship with AWS. The Google Cloud partnership adds Vertex AI as a second production inference platform, backed by a compute agreement. The Broadcom deal adds a third path: purpose-built AI accelerator silicon, not renting hyperscaler GPU capacity but commissioning dedicated chips with a chip-design partner.

For Australian enterprises picking an AI foundation model platform for 2025 through 2030, that spread matters more than the headline deal figures.

Implication 1: Multi-cloud Claude becomes defensible procurement

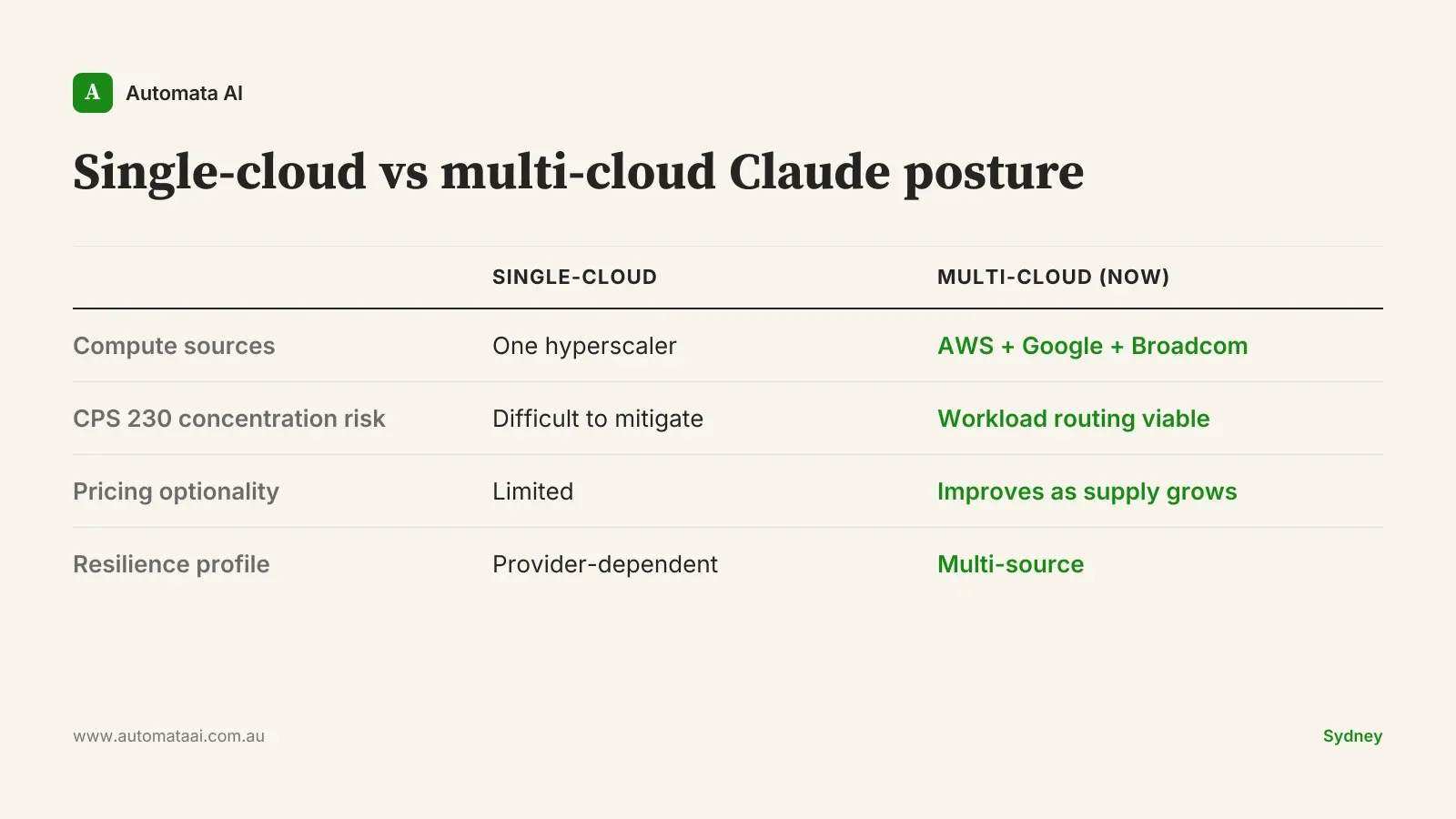

Claude is available on AWS Bedrock and Google Cloud Vertex AI today. The question facing Australian enterprise procurement teams has been whether those two deployments would maintain feature parity, or whether one cloud would consistently trail the other on capability updates, forcing a de facto single-cloud decision.

Deeper compute commitments across both platforms raise the likelihood of parity. That matters for Australian financial services firms operating under APRA CPS 230. CPS 230 requires entities to manage concentration risk across material service providers, and it applies directly to cloud infrastructure supporting critical business services. If all AI workloads run through one cloud, that is a concentration exposure in your operational risk framework. Most AU financial services risk teams are already modelling cloud concentration. A credible multi-cloud Claude story, routing workloads to Bedrock or Vertex based on data residency requirements, existing cloud contracts, or resilience architecture, is now a defensible position rather than a procurement workaround.

A mid-sized Melbourne-based wealth management firm running document automation, for instance, can now route different workflows to different clouds based on its CPS 230 operational risk framework. Before these deeper partnerships, the quality differential between platforms created real risk of lock-in regardless of procurement intent.

Implication 2: Compute concentration risk shifts from your problem to Anthropic's

The fragility in single-cloud AI infrastructure is straightforward: your vendor's compute reliability becomes your model availability. When a lab trains and serves primarily on one cloud, a significant infrastructure event at that provider is a model event for every customer simultaneously.

The Broadcom partnership is notable because it represents dedicated silicon rather than shared hyperscaler capacity. Anthropic is commissioning purpose-built AI accelerator chips, not buying blocks of existing GPU inventory. Combined with AWS and Google Cloud commitments, there are now three meaningfully different compute paths underpinning Claude.

That's not a guarantee against disruption. The infrastructure buildout across these partnerships will take years to fully deliver, and purpose-built silicon programs carry their own timeline risk. But for risk teams building their AI vendor risk register, the multi-source posture is a materially different entry than single-source. If your current framework penalises vendors with single-cloud compute dependency, the Claude picture has changed.

Implication 3: Long-term pricing optionality in enterprise contracts

Capacity constraints drive pricing. When supply is tight and demand is growing, enterprise AI pricing reflects that scarcity. More compute sources don't immediately lower prices; the market is still supply-constrained. But they do create the structural conditions for more competitive pricing as capacity increases over the next two to three years.

Australian enterprise procurement teams negotiating multi-year Claude commitments, typically $80,000 to $250,000 annually for meaningful production deployments, should factor this into their contract structures. The case for shorter initial commitment terms, where your commercial framework allows it, is to retain pricing optionality as the compute supply picture matures.

This isn't an argument to delay deployment. The ROI on Claude-powered automation for the right processes, $30,000 to $150,000 build cost with two to six months payback on high-volume knowledge work, is strong enough that waiting 18 months for better pricing is usually the wrong trade. It's an argument about contract structure, not deployment timing.

When this analysis doesn't apply

If your organisation is evaluating Claude for a single, defined operational workflow, whether that's contract review, document classification, or customer query routing, none of this infrastructure analysis should enter your evaluation. The relevant question is whether the model solves your problem, not whether Anthropic has signed a Broadcom deal. Infrastructure durability matters at scale; for a $30,000 to $50,000 single-process automation, the risk calculation is simply different.

The infrastructure picture matters for three specific situations:

Multi-year platform commitments at $100,000+ annual spend. At that scale, infrastructure durability is a load-bearing variable in your vendor decision, not a nice-to-have.

APRA CPS 230 or compliance-driven vendor assessments. The multi-cloud Claude story now has a more defensible answer for compute concentration scoring than it did 12 months ago.

Board-level AI platform decisions. CIOs selecting a foundation model vendor for the next five years should treat the compute partnerships as a structural indicator, not just a press announcement.

There's an honest caveat worth stating plainly. These compute commitments are expansion bets, not delivered capacity. Multi-year infrastructure buildouts with new silicon partners carry execution risk. Treat the multi-source posture as a credible direction of travel, not an achieved state.

The question worth asking before any platform commitment

The right question isn't whether this vendor has compute today. It's whether this vendor has a credible structural path to scale compute through 2028 to 2030, as model sizes grow and enterprise inference demand increases.

Anthropic's partnership pattern, with deep deals spanning Amazon, Google, and Broadcom, is the most diversified answer to that question among the frontier labs right now. That doesn't make it a certainty. It makes it the most structurally durable bet on the table.

If your vendor risk assessment was flagging compute concentration as an unresolved concern, update it. The multi-cloud Claude posture across Bedrock and Vertex AI, backed by the compute commitments now in place, gives your procurement and risk teams something defensible to document.