A development team ships a Claude application in mid-2024. The prompt engineering is careful, the retry loops are dialled in, the scaffolding manages context reliably across long sessions.

Six months later, the model they built for has been superseded. Half of what the harness was managing, the new Claude can do natively. The harness doesn't know that. It's still doing the work, burning tokens and adding latency to solve problems that no longer exist.

That's the failure mode worth planning around. Not applications built badly. Applications built well, for a model that has since been replaced by something meaningfully more capable, with a harness that never got the memo.

Three patterns make the difference.

Pick the stack Claude already understands

Build with tools and frameworks Claude has seen extensively in training. Standard languages, conventional APIs, well-documented CLIs. This matters more than it sounds.

The upgraded Claude 3.5 Sonnet (October 2024) scored roughly 49% on SWE-bench Verified. Current Claude models clear that by a substantial margin, partly because Anthropic invested in the toolchain the model can use natively. When a model knows a framework deeply, you need less scaffolding to get reliable output, and the scaffolding you do build breaks less often as models update.

For Australian teams building Claude applications, the temptation is the elegant edge choice: a bespoke internal DSL, a niche orchestration library, a framework the wider engineering community hasn't standardised around. Claude will work with it. It will also produce less reliable output, require more correction prompts, and cost more in harness complexity than if you'd chosen the well-known option. Each model update becomes a regression risk rather than a free capability upgrade.

A Melbourne-based professional services firm cut their prompt-failure rate by roughly 40% after moving a document retrieval step from a custom graph format to standard JSON over HTTP. Same data, different tooling. The exotic stack was the liability.

Harness as technical debt: the quarterly prune

In 2023 and early 2024, harnesses needed to do real work. Limited context windows meant teams built manual chunking and summarisation layers. Inconsistent instruction-following meant elaborate retry loops with validation checks. Weak tool use meant custom routing and fallback logic.

Most of those constraints have dissolved. The harness code has not.

The numbers aren't trivial. A re-prompting layer that adds 3-4 seconds per call and $0.02 per query looks very different at 100,000 queries a month: $2,000 a month in unnecessary API cost, and measurable latency overhead for every user. Over a year, that's $24,000 spent compensating for a problem that no longer exists.
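The arithmetic is easy to rerun with your own figures. The sketch below uses the numbers from the paragraph above; the function name is illustrative, and the 3.0 second latency is simply the lower end of the 3-4 second range.

```python
def harness_overhead(cost_per_query, queries_per_month, added_latency_s):
    """Estimate what one harness layer costs in API spend and user wait time."""
    monthly_cost = round(cost_per_query * queries_per_month, 2)
    annual_cost = round(monthly_cost * 12, 2)
    # Total seconds of added latency across all queries, expressed in hours.
    added_wait_hours = round(added_latency_s * queries_per_month / 3600, 1)
    return {
        "monthly_cost": monthly_cost,
        "annual_cost": annual_cost,
        "added_wait_hours_per_month": added_wait_hours,
    }


# Figures from the text: $0.02 per query, 100,000 queries a month.
overhead = harness_overhead(cost_per_query=0.02, queries_per_month=100_000, added_latency_s=3.0)
print(overhead)
```

Swapping in real per-query cost and volume from your billing data is the quickest way to see whether a given layer is worth reviewing at all.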

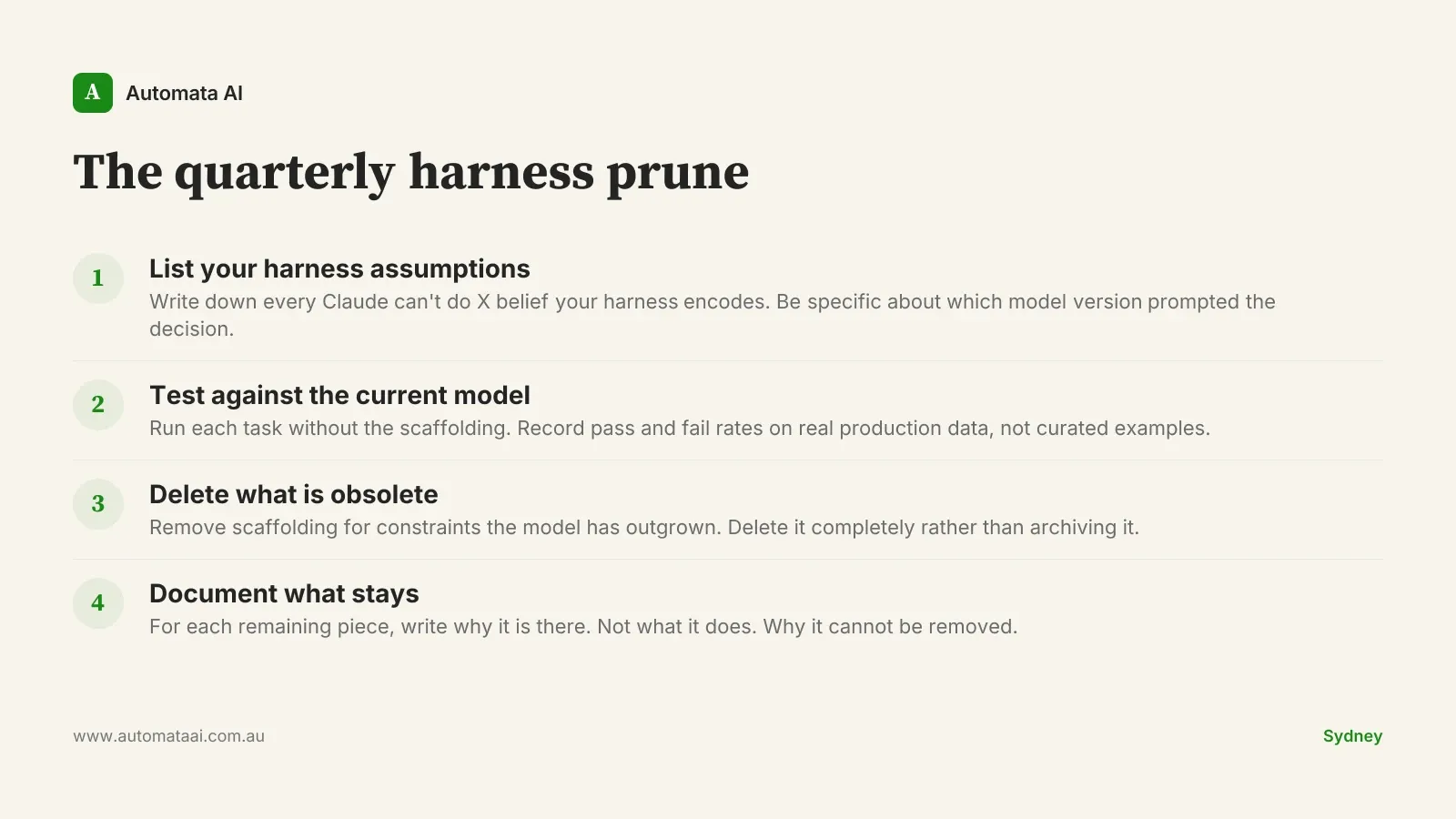

The pattern is a quarterly review of three to five harness assumptions. Pick the ones you'd put money on, test them against the current model on real production data, and delete the scaffolding when they're wrong. "Claude can't do X" assumptions from six months ago have a poor survival rate.
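A review like this can be as small as a list of assumptions and a function that replays production samples without the scaffolding. The sketch below is one possible shape, not a prescribed tool: `HarnessAssumption`, `review_assumptions`, and the 95% pass threshold are all assumptions for illustration, and the lambdas stand in for real calls to the current model.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class HarnessAssumption:
    claim: str        # the "Claude can't do X" belief being tested
    scaffolding: str  # the harness component that exists because of it
    model_succeeds_unaided: Callable[[str], bool]  # True if the bare model handles a sample


def review_assumptions(assumptions: Iterable[HarnessAssumption],
                       samples: Iterable[str],
                       pass_threshold: float = 0.95) -> list:
    """Replay samples without scaffolding and flag components that look deletable."""
    samples = list(samples)
    if not samples:
        raise ValueError("need at least one production sample")
    report = []
    for a in assumptions:
        passed = sum(1 for s in samples if a.model_succeeds_unaided(s))
        pass_rate = passed / len(samples)
        report.append({
            "claim": a.claim,
            "scaffolding": a.scaffolding,
            "pass_rate": pass_rate,
            "delete_candidate": pass_rate >= pass_threshold,
        })
    return report


# Stub checks; in practice each would call the current model and validate the output.
assumptions = [
    HarnessAssumption("Needs a retry loop for valid JSON", "json_retry_loop", lambda s: True),
    HarnessAssumption("Can't parse tables unaided", "table_preprocessor", lambda s: "table" not in s),
]
samples = ["invoice text", "contract text", "scanned table"]
report = review_assumptions(assumptions, samples)
for row in report:
    print(row["scaffolding"], row["pass_rate"], row["delete_candidate"])
```

Keeping the list to three to five entries per quarter keeps the review cheap enough to actually happen.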

Most harnesses aren't protecting the business from Claude. They're protecting the team from having to update their understanding of Claude. That's the uncomfortable version of this pattern, and it's worth sitting with.

Encode your constraints deliberately, and only yours

There are two reasons to put logic in the harness rather than leaving it to the model. Most teams treat them as the same reason. They're not, and conflating them is what makes harnesses brittle.

The first reason is compensating for model limitations. Claude couldn't reliably do X when you built this, so the harness does X instead. This is the quarterly prune's territory. Prune it when the model catches up.

The second reason is encoding deliberate business constraints. Hard guardrails, regulatory requirements, audit trails, escalation thresholds. These do not belong in a prompt. Prompts drift; harness logic is reviewable, testable, and auditable. If a constraint matters enough to enforce, it belongs in code.
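As a minimal sketch of what "in code" can look like, the example below applies an escalation threshold to a model recommendation and records the outcome. Everything here is hypothetical: the threshold value, the field names, and the in-memory list standing in for a real audit store.

```python
from datetime import datetime, timezone

# Hypothetical business rule: anything at or above this amount goes to a human.
ESCALATION_THRESHOLD = 10_000


def enforce_escalation(model_output: dict, audit_log: list) -> dict:
    """Apply the escalation rule to a model recommendation and record the result."""
    amount = model_output["amount"]
    recommended = model_output["recommended_action"]
    escalate = amount >= ESCALATION_THRESHOLD
    decision = {
        # The rule wins over the model's judgement whenever it applies.
        "action": "escalate_to_human" if escalate else recommended,
        "model_recommendation": recommended,
        "rule_applied": "escalation_threshold" if escalate else None,
    }
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "amount": amount,
        **decision,
    })
    return decision


audit_log = []
large = enforce_escalation({"amount": 25_000, "recommended_action": "approve"}, audit_log)
small = enforce_escalation({"amount": 500, "recommended_action": "approve"}, audit_log)
print(large["action"], small["action"])
```

The model's recommendation is kept alongside the enforced action, so a reviewer can see where judgement and rule diverged.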

For APRA-regulated financial services teams (Australian banks, super funds, and insurers operating under CPS 230), this boundary is not an engineering preference. It's the operational risk story. Claude is making judgements; your harness is enforcing the rules. If you can't separate those two things cleanly in your architecture, document the distinction before your next audit conversation.

For every piece of logic in your harness, ask one question: is this here because Claude couldn't do it when we built this, or because it represents a deliberate business constraint? The first category is a candidate for deletion. The second should be documented, reviewed on any change, and never touched without sign-off.
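One lightweight way to make that question stick is to tag every harness component with its reason for existing. The inventory below is a sketch: the component names are drawn from examples earlier in this piece, while the `Reason` enum and helper functions are illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class Reason(Enum):
    MODEL_LIMITATION = "model_limitation"        # Claude couldn't do it when we built this
    BUSINESS_CONSTRAINT = "business_constraint"  # a deliberate rule we choose to enforce


@dataclass(frozen=True)
class HarnessComponent:
    name: str
    reason: Reason


INVENTORY = [
    HarnessComponent("manual_chunking", Reason.MODEL_LIMITATION),
    HarnessComponent("validation_retry_loop", Reason.MODEL_LIMITATION),
    HarnessComponent("custom_tool_routing", Reason.MODEL_LIMITATION),
    HarnessComponent("audit_trail", Reason.BUSINESS_CONSTRAINT),
    HarnessComponent("escalation_threshold", Reason.BUSINESS_CONSTRAINT),
]


def prune_candidates(inventory):
    """Components to test against the current model at the next review."""
    return [c.name for c in inventory if c.reason is Reason.MODEL_LIMITATION]


def requires_sign_off(inventory):
    """Components that should never change without documented approval."""
    return [c.name for c in inventory if c.reason is Reason.BUSINESS_CONSTRAINT]


print(prune_candidates(INVENTORY))
print(requires_sign_off(INVENTORY))
```

A component nobody can confidently tag is usually the first one worth investigating.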

When these patterns are the wrong frame

Three situations where the above reasoning breaks down.

Early-stage exploration. If you're still running experiments to understand what Claude can do for your domain, don't prune. Build. The harness-as-debt argument assumes you know what you're building. If you're still finding that out, a messy harness is the right call.

Proprietary tooling as a moat. If the bespoke DSL or unusual framework is genuinely hard to replicate and forms part of your competitive advantage, "use standard tooling" is bad advice. The rule applies to default choices, not every choice.

Low-volume processes. If the process runs a few hundred times a month and a quarterly review costs $2,000-$3,000 in engineering time, the maths won't justify a formal cadence. The prune-and-review approach earns its keep at roughly $30,000 or more per year in API costs.
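One back-of-envelope way to read that threshold (a sketch, not necessarily the derivation behind the figure) is to ask what share of API spend the reviews would need to eliminate just to pay for themselves. The function name is illustrative.

```python
def required_savings_fraction(review_cost_per_quarter, annual_api_cost):
    """Share of annual API spend that pruning must remove to cover four reviews a year."""
    annual_review_cost = review_cost_per_quarter * 4
    return annual_review_cost / annual_api_cost


# Review cost range from the text, evaluated at the $30,000 per year threshold.
low = required_savings_fraction(2_000, 30_000)
high = required_savings_fraction(3_000, 30_000)
print(f"{low:.0%} to {high:.0%}")
```

On this reading, a formal cadence at $30,000 a year only breaks even if pruning removes somewhere between about a quarter and two-fifths of API spend; below that volume, the bar rises quickly.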

Any Claude application built more than twelve months ago is worth a second look. Not because it's broken, but because the assumptions it encoded are probably outdated enough that it's spending real money compensating for problems that no longer exist. The question isn't whether to revisit. The question is whether you have a process for it.