Your agent demo ran in four minutes. The model found the right documents, called the right tools, returned the right answer. Everyone in the room was convinced. Three months into production, the system is handling 15% of the volume you projected, your team is managing a steady stream of edge-case exceptions, and nobody can explain why it works on some inputs and not others.

That pattern has a name. And a fix.

Workflows vs agents: pick the right tool first

Most of what gets built as an agent is actually a workflow in agent clothing. These aren't interchangeable choices, and the distinction matters more than the tooling.

Workflows are predetermined sequences of LLM calls with fixed inputs and outputs. They're predictable, debuggable, and cheaper per run. Agents are systems where the model decides what to call and in what order. They're necessary when the path can't be determined in advance.

A Melbourne financial services firm running APRA CPS 230 compliance checks doesn't need a model dynamically deciding which documents to examine. It needs a reliable sequence: ingest the policy document, run structured extraction, flag exceptions, generate the report. That's a workflow. Build it as a workflow. The compliance audit trail is clean, the behaviour is predictable, and the cost per run is fixed.

Reach for agents when the problem genuinely requires dynamic decision-making: multi-step research tasks where the right next search depends on what the previous one returned, customer escalations that branch across multiple systems, or processes where the correct path isn't predictable at build time. Not because agents are more impressive. Because they're the right tool.

The augmented LLM is the building block, not the agent

The foundational unit isn't the agent. It's the augmented LLM: a model with retrieval, tools, and memory. Get this primitive right and everything built on top of it becomes more reliable. Skip it and you'll spend months debugging architectural problems by adjusting prompts, which is the wrong layer entirely.

Reliable retrieval means tested against adversarial queries. Not the clean examples from development. The uglier inputs your actual users will send. Well-designed tools means typed inputs, documented failure modes, and test coverage on both the happy path and the error path. Scoped memory means the agent knows what it can carry between calls and what gets reset.

A team that invests two weeks getting this primitive right before building anything else will outperform a team that skips ahead to multi-agent orchestration. The cost of fixing retrieval at the agent level, after four layers are built on top of it, isn't linear. It compounds.

Composable patterns over bespoke architecture

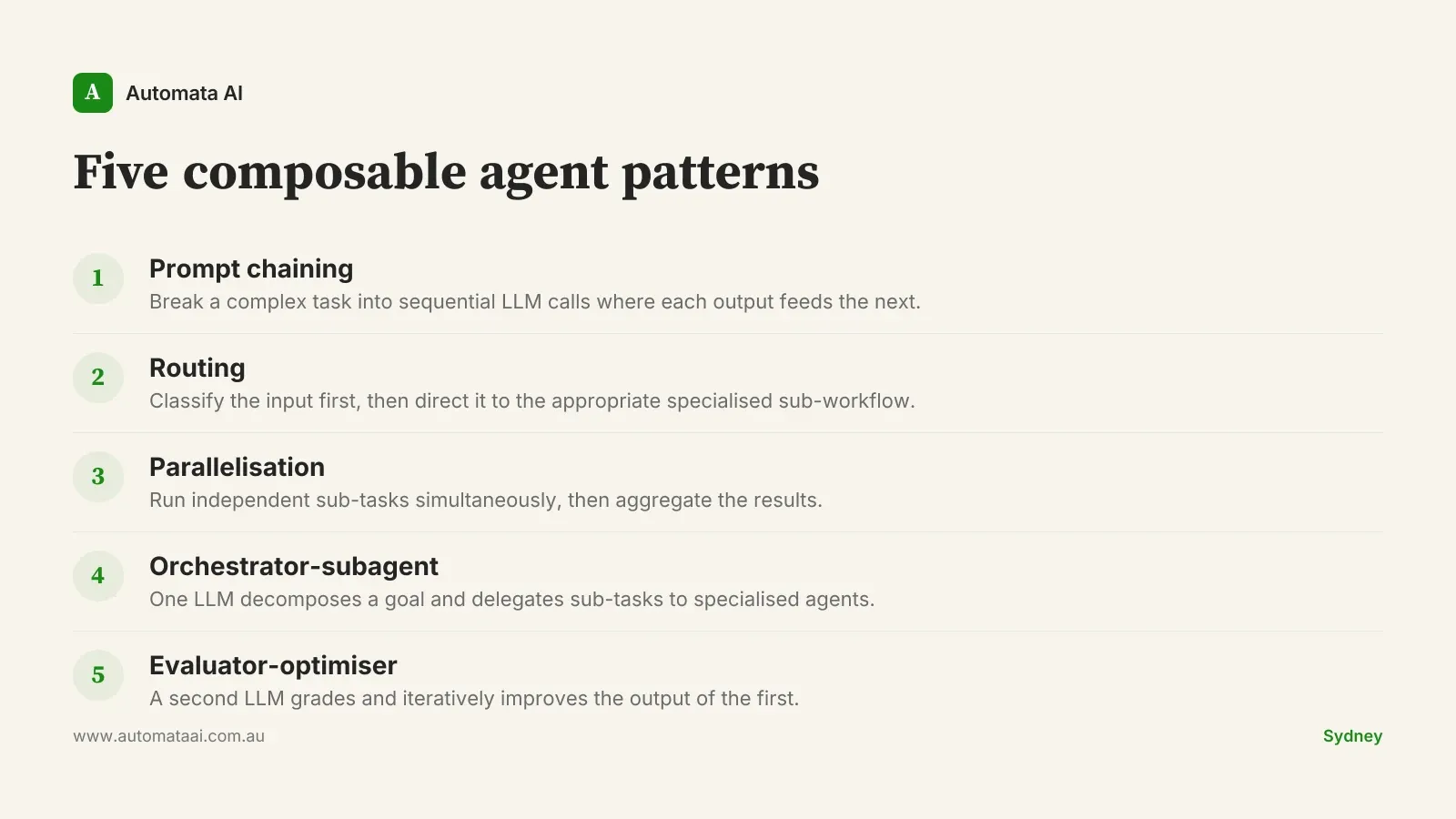

Anthropic's engineering guidance identifies five core workflow patterns: prompt chaining, routing, parallelisation, orchestrator-subagent, and evaluator-optimiser. Most real production systems are compositions of two or three of these.

The temptation is to design something bespoke. Teams draw custom architecture diagrams and convince themselves the problem is unique. Usually it isn't. A document processing pipeline is routing plus parallelisation. A compliance checker is chaining plus evaluator-optimiser. A customer triage system is routing plus orchestrator-subagent.

Before drawing a new architecture, ask whether it can be expressed as a composition of those five patterns. Usually the answer is yes. The composition is easier to debug, easier to test, and easier to hand to someone else on the team.

Defensive tool use is non-negotiable in production

Tools fail in production. Networks time out. Third-party APIs return 500s. The CRM returns a partial record because a user hit save mid-entry. An external service changes its response format without notice.

The question isn't whether this will happen. It will. The question is what your agent does when it does.

Every tool integration needs a failure-mode design pass before shipping. What happens on a 500? What happens on a partial response? What happens on a timeout? At $85-$100 per hour fully loaded for a developer, an agent built with no failure-mode design will cost more in incident response over 12 months than it saved in the first six.

When not to build an agent

This is where the pitch needs to stop for a moment.

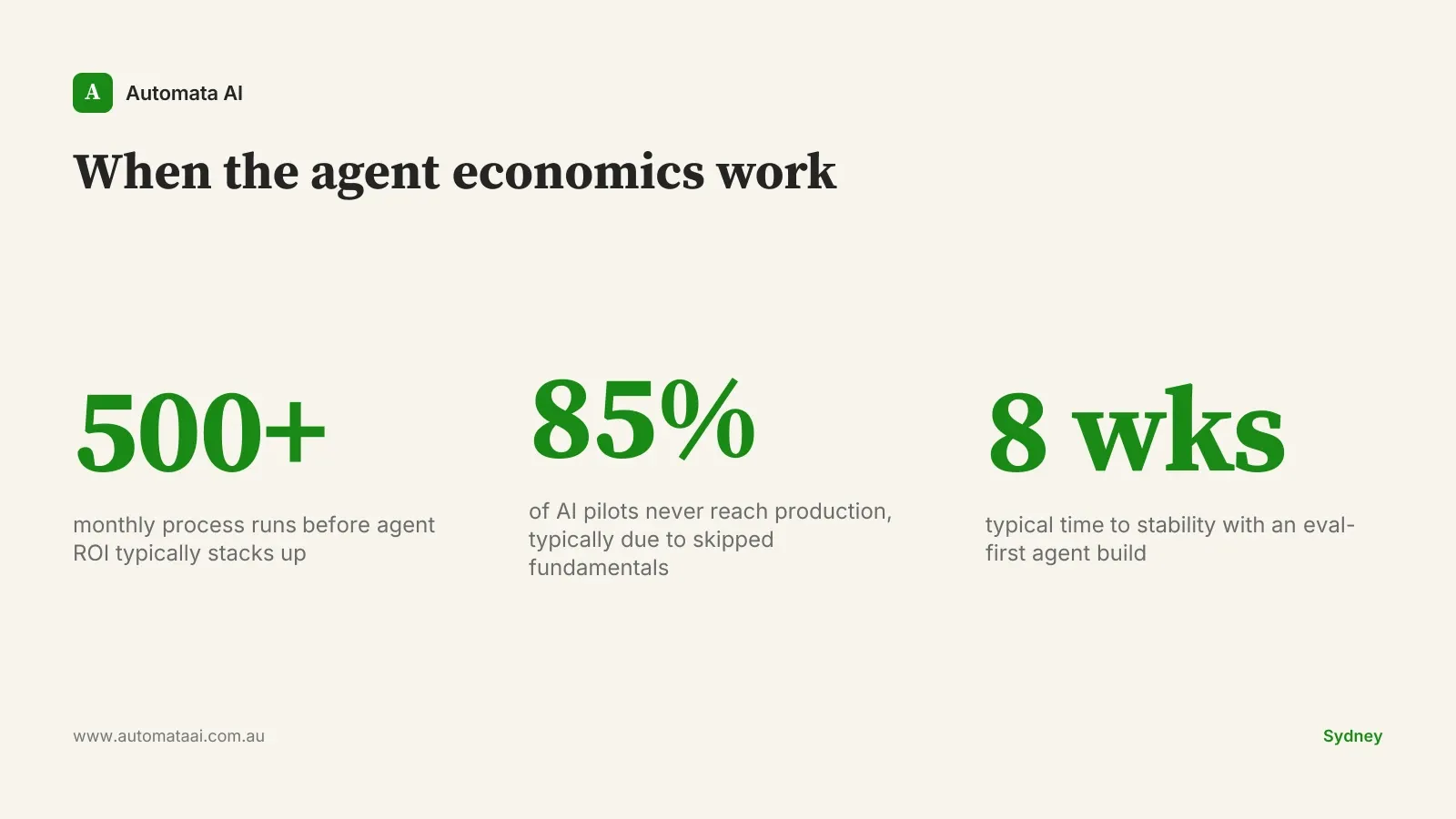

Agents aren't the right answer for most of the use cases they get proposed for in Australian mid-market businesses. If a process runs fewer than 50 times a month, a properly instrumented agent probably won't generate the ROI to justify the build and ongoing maintenance. If the rules change every quarter, the maintenance burden erodes the savings. If the team can't write evals, they can't safely run an agent in production.

High-volume repetitive work. The ROI case depends on frequency. 500 or more runs per month, with consistent inputs and expected outputs, is where the economics typically work.

Complex branching logic. Too many conditional paths to hard-code reliably as a workflow. If mapping the logic takes more than a whiteboard session, an agent is probably the right call.

Information gathering at scale. Research tasks where a human would spend 30-60 minutes pulling together information from multiple sources. The time savings compound at volume.

A Sydney professional services firm pulling together pre-deal research across six data sources is a real case for an agent. A five-person business processing 20 invoices a month isn't.

Eval-driven development

An eval is a test suite for your agent. It tells you whether a change to the prompt, the model version, the tool configuration, or the retrieval layer improved or degraded output on a defined set of test cases. Without evals, every change is a guess. And you're making those guesses on a live system.

The pattern most production agent builds skip is writing evals before the build. By the time you have a misbehaving agent in production, you're patching without feedback. You don't know if a fix helped or made something else worse.

If you can't write five concrete test cases for what the agent should do in normal operation, and at least two for failure modes, you don't understand the problem well enough to automate it yet. Write the eval suite first. Treat it as the specification.

An agent built without evals rarely makes it through its first year without a significant rework. That rework typically costs $60,000-$120,000 in developer and implementation time. An agent built eval-first typically stabilises within eight weeks of deployment and holds for 18-24 months of normal operation.

Audit one current or planned agent build against these five patterns. Most teams find two gaps. Usually the augmented LLM primitive and evals. Fix those two first. The architecture debates can wait.