Your compliance team signed off. Your CTO said yes. Your Claude pilot produced results the board actually paid attention to. Then procurement opened a ticket asking for data residency documentation, a SOC 2 Type II report, and evidence of CPS 234 alignment.

Three months later, you're still in evaluation. This is the recurring pattern in Australian financial services AI adoption. It's also the main reason the two most instructive Claude production deployments published recently both run on Amazon Bedrock rather than the Anthropic API. NBIM (Norges Bank Investment Management), which manages Norway's sovereign wealth fund, and Brex, the US corporate card company, each built their agent architectures on Bedrock. The patterns they landed on translate directly to the environment Australian banks, super funds, and insurers operate in.

Bedrock is a control-plane choice, not just a hosting choice

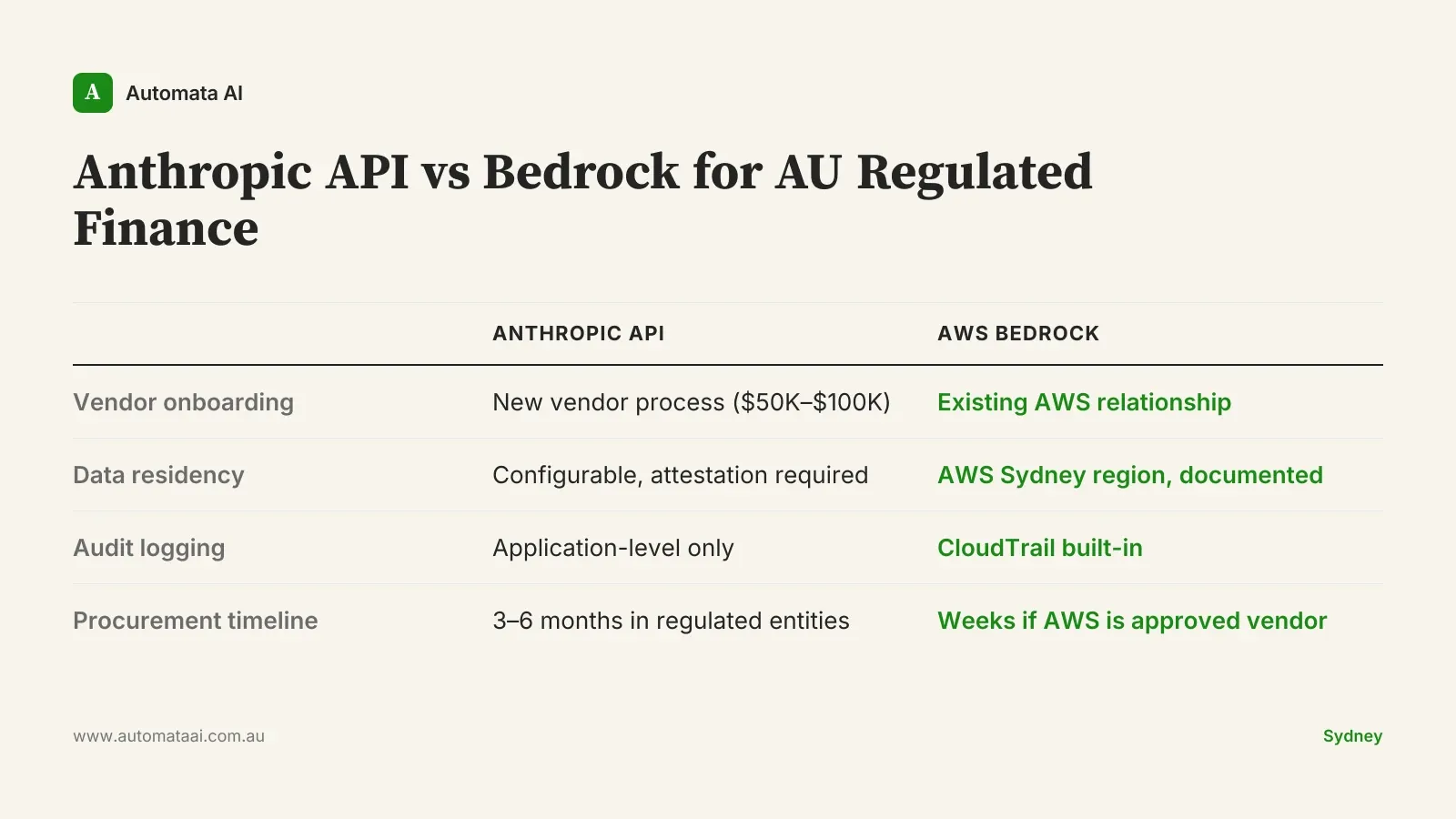

The Claude model on Amazon Bedrock is the same model you get through the Anthropic API: the same model versions, the same capability, the same inference output. What Bedrock adds is an AWS-managed control plane: data residency within AWS's Asia Pacific regions, including Sydney (ap-southeast-2), plus AWS KMS key management, CloudTrail audit logging, and VPC network isolation. For most Australian financial institutions, this is what clears the procurement gate.
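As a rough sketch of what region pinning can look like in code, the snippet below builds a request for the Bedrock Runtime `converse` call in boto3 and refuses to run outside an allow-list of Australian regions. The allow-list, the `build_request` and `ask_claude` helpers, and the model ID placeholder are assumptions for illustration, not a reference implementation. Check which Claude models are offered in your region, and whether any cross-region inference profile you use keeps traffic onshore, before relying on a guard like this.

```python
from typing import Any

# Assumption: the institution only permits the two Australian AWS regions.
ALLOWED_REGIONS = {"ap-southeast-2", "ap-southeast-4"}  # Sydney, Melbourne

# Placeholder, not a real model ID: look up the exact value in the Bedrock console.
MODEL_ID = "YOUR_CLAUDE_MODEL_ID"


def build_request(prompt: str, region: str, max_tokens: int = 512) -> dict[str, Any]:
    """Return keyword arguments for bedrock-runtime `converse`.

    Raises ValueError if the region is outside the residency allow-list.
    """
    if region not in ALLOWED_REGIONS:
        raise ValueError(f"region {region!r} is outside the residency allow-list")
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask_claude(prompt: str, region: str = "ap-southeast-2") -> str:
    """Send the prompt through Bedrock in the given region and return the reply text."""
    import boto3  # imported lazily so build_request works without boto3 installed

    request = build_request(prompt, region)
    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**request)
    return response["output"]["message"]["content"][0]["text"]
```

A client-side check like this is only one layer. The KMS, CloudTrail, and VPC controls are configured on the AWS side and are what an auditor will actually ask to see.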

Under APRA's CPS 234 (Information Security), regulated entities need documented controls over data location and access. Under CPS 230 (Operational Risk Management), they need vendor due diligence and operational resilience evidence. AWS already sits on approved vendor lists at most major Australian banks, super funds, and insurers. For many procurement teams, Anthropic is not yet on that list. Bedrock lets you run Claude inside an existing approved relationship rather than opening a new one.

The cost difference is concrete. Onboarding a new AI vendor into an APRA-regulated environment involves third-party risk assessments, legal review, board-level sign-off, and integration with existing security frameworks. That process typically runs $50,000–$100,000 in fully loaded analyst and legal time. If AWS is already your cloud provider, that cost is largely sunk. You're adding a new service, not a new vendor.

What NBIM's architecture shows the industry

NBIM, which manages Norway's Government Pension Fund Global, uses Claude to synthesise investment research at a volume no analyst team could replicate manually. In this architecture, agents ingest structured data (filings, financial statements) alongside unstructured text (earnings transcripts, analyst notes), then produce summaries with explicit citations. Every claim links back to a source document.

The citation requirement isn't an add-on. It's the design constraint that makes the system auditable and therefore useful inside a regulated institution. AI-generated investment analysis that can't show its sources isn't analysis. It's inference. ASIC's market integrity expectations and most internal risk frameworks won't accommodate unsourced inference. For Australian teams building Claude agents in investment, lending, or compliance functions: design citation into the output schema from day one.
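To make "citation in the output schema" concrete, here is a minimal sketch of one possible shape. It is not NBIM's schema; the class names, fields, and the `uncited_claims` check are all hypothetical. The idea is that a summary is a list of claims, each claim carries its citations, and a validation step rejects any claim that cannot point at a known source document.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Citation:
    document_id: str  # hypothetical identifier for a filing, transcript, or note
    locator: str      # where in the document, e.g. a page or paragraph reference
    quote: str        # the supporting passage, copied verbatim


@dataclass
class Claim:
    text: str
    citations: list[Citation] = field(default_factory=list)


@dataclass
class ResearchSummary:
    title: str
    claims: list[Claim]


def uncited_claims(summary: ResearchSummary, known_documents: set[str]) -> list[str]:
    """Return the text of every claim with no citation to a known document."""
    problems = []
    for claim in summary.claims:
        valid = [
            c for c in claim.citations
            if c.document_id in known_documents and c.quote.strip()
        ]
        if not valid:
            problems.append(claim.text)
    return problems


summary = ResearchSummary(
    title="Example Co quarterly review",
    claims=[
        Claim(
            "Revenue grew year on year.",
            [Citation("filing-001", "p. 4", "Revenue increased compared with the prior year.")],
        ),
        Claim("Margins will expand next year."),  # no source attached
    ],
)
print(uncited_claims(summary, {"filing-001"}))  # ['Margins will expand next year.']
```

Running a check like this before a summary reaches an analyst turns "every claim links back to a source" from a prompt instruction into an enforced property of the output.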

What Brex's architecture shows the industry

Brex runs Claude across its corporate spend platform for transaction categorisation, fraud signal detection, and customer support triage. Their architecture is built around tight feedback loops: the agent proposes a decision, a human confirms or overrides, the system logs both. Over time, the override log becomes a dataset that reveals exactly where model performance degrades in production.
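The propose, confirm-or-override, log loop can be sketched in a few lines. This is an illustration under assumed names (`Review`, `override_rates`, and the sample categories are hypothetical), not Brex's implementation. Each record keeps both the agent's proposal and the human's final decision, and the override rate per category shows where the model is being corrected most often.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    category: str  # the kind of item the agent handled
    proposed: str  # what the agent proposed
    final: str     # what the human reviewer confirmed or entered instead

    @property
    def overridden(self) -> bool:
        return self.proposed != self.final


def override_rates(log: list[Review]) -> dict[str, float]:
    """Fraction of agent proposals overridden by a human, per category."""
    totals: dict[str, int] = defaultdict(int)
    overrides: dict[str, int] = defaultdict(int)
    for review in log:
        totals[review.category] += 1
        if review.overridden:
            overrides[review.category] += 1
    return {category: overrides[category] / totals[category] for category in totals}


log = [
    Review("travel", proposed="airfare", final="airfare"),
    Review("travel", proposed="lodging", final="lodging"),
    Review("software", proposed="saas", final="hardware"),
    Review("software", proposed="saas", final="saas"),
]
print(override_rates(log))  # {'travel': 0.0, 'software': 0.5}
```

Because both the proposal and the final decision are stored, the same log serves as the audit trail and as the evaluation dataset.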

This matters for compliance more than it matters for accuracy. Australian financial services businesses carry responsible lending obligations, the best interests duty, and licensing conditions that sit with the entity, not the AI vendor. An agent that makes consequential decisions without a human confirmation step creates a liability structure your legal team will veto. The Brex pattern is not a design preference. For APRA-regulated entities, it's the only architecture that passes a governance review.

Three practical moves for Australian financial services teams

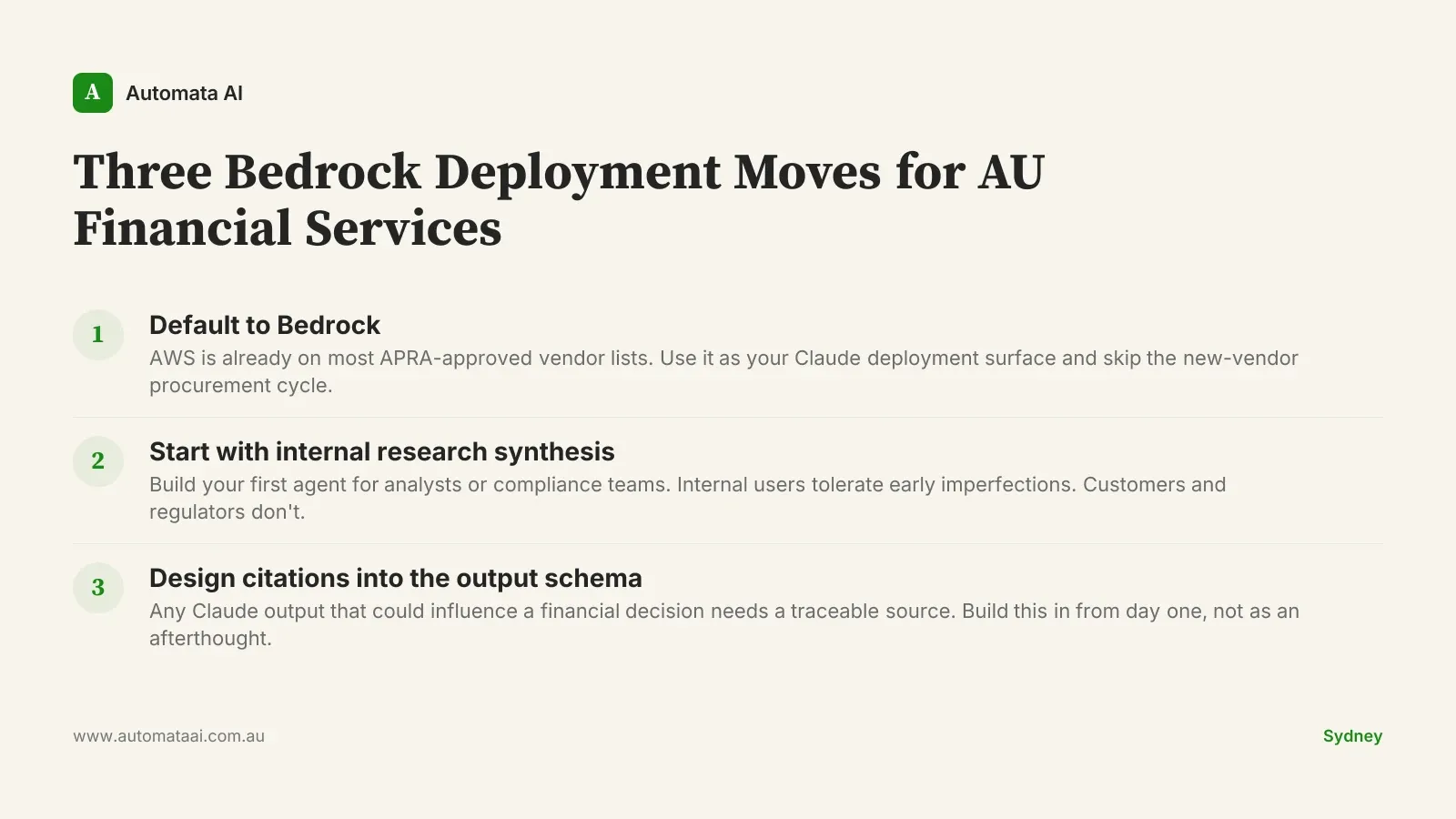

Default to Bedrock. Use it as your Claude deployment surface unless there is a specific architectural reason not to. The procurement and compliance overhead is materially lower than onboarding the Anthropic API as a new vendor.

Start with internal research synthesis, not customer-facing automation. Build your first agent for an analyst or compliance function. Internal users tolerate v1 imperfections. Customers and regulators do not.

Design citations into the output schema before you design anything else. Any output that could influence a financial decision needs a traceable source. Build that into the first sprint.

When this is the wrong approach

Not every financial services business should be building Claude agents right now. If your first process candidate runs fewer than 200 cycles per month, the ROI case is hard to close. An APRA-aligned Bedrock deployment done properly runs $60,000–$120,000 at the implementation stage. You need volume to reach a 6-month payback. If the process involves sensitive personal information that your privacy counsel hasn't assessed under the Australian Privacy Principles, that review comes before any build work.

The harder problem: most teams frame this as 'let's try AI on this process' without first quantifying what the process actually costs. Identify the process. Count the annual hours. Multiply by the fully loaded rate, typically $120–$150 per hour for a senior analyst in Sydney or Melbourne. If the answer is under $80,000 per year, there's a better candidate to start with.
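The arithmetic is simple enough to script. The sketch below multiplies annual hours by the fully loaded rate, then estimates payback against an implementation cost. The `share_automated` parameter (the fraction of the process cost the agent actually removes) is an assumption introduced here, as are the example inputs; substitute your own figures.

```python
def annual_process_cost(hours_per_year: float, loaded_rate: float) -> float:
    """Annual cost of a process: hours spent times the fully loaded hourly rate."""
    return hours_per_year * loaded_rate


def payback_months(implementation_cost: float, annual_cost: float,
                   share_automated: float) -> float:
    """Months to recover the build cost, assuming a fixed share of the
    process cost is saved once the agent is live."""
    if not 0 < share_automated <= 1:
        raise ValueError("share_automated must be in (0, 1]")
    monthly_saving = annual_cost * share_automated / 12
    return implementation_cost / monthly_saving


# Hypothetical inputs: 2,000 analyst hours a year at $135 per hour,
# a $60,000 build, and an assumed 50% of the work automated.
cost = annual_process_cost(2_000, 135)
print(cost)                                      # 270000
print(round(payback_months(60_000, cost, 0.5), 1))  # 5.3
```

With these inputs the process clears the $80,000 threshold comfortably and pays back in under six months. Halve the hours and the payback roughly doubles, which is why quantifying the process comes before choosing it.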

Where the Australian market sits now

CommBank, Westpac, NAB, ANZ, and Macquarie all have active Claude evaluations underway, most of them in internal analytics and compliance functions rather than customer products. Smaller regional banks and credit unions are mostly watching. The gap between institutions that are building and those still evaluating is widening. Every 12 months of production data compounds into training signal, override logs, and agent architectures that become progressively harder to replicate from a standing start.

The businesses that move first are not taking more risk. They're accumulating operational knowledge. Pick one process: research synthesis, compliance reporting, or document review. Quantify it. Run the Bedrock procurement path. If your institution already runs on AWS, the hard part is mostly done.