A senior engineer running three Claude Code sessions simultaneously. That's the promise. But the actual arc, for most Australian teams we've seen try it, goes differently: they enable auto-mode, something moves faster than expected, they find a change to a system they weren't watching, and auto-mode goes back off.

That's the wrong call. The instinct to be cautious is right. The problem is that caution without a policy just means supervised mode forever, and supervised mode forever means you're paying a human tax on every action Claude takes.

What auto-mode actually does

In auto-mode, Claude Code executes task sequences without per-step approval. The agent plans the work, runs the commands, edits the files, verifies the output, and reports back. You set the goal. The agent handles the steps. You review what came out.

The trade is simple: faster execution and lower cognitive overhead, in exchange for reduced visibility into what happened between start and finish. For some tasks, that trade is obviously worth making. For others, the reduced visibility is exactly what you cannot give up. The challenge is knowing which is which, consistently enough that you can build a team norm around it rather than deciding case by case every time someone opens a session.

Three categories where auto-mode is the right default

These categories aren't arbitrary. They share three properties that make a task safe to run without step-by-step supervision: a clear definition of done, output that's trivially verifiable, and a bounded blast radius if something goes sideways.

Test generation and execution. Test code has all three properties. It's high-volume, the success criterion is unambiguous, and a broken test fails immediately rather than shipping silently. Running test generation in auto-mode is almost pure throughput gain.

Refactoring with bounded contracts. When the task has a fixed contract. Rename a function across the codebase, extract a module with a known interface. The output is mechanically verifiable: if the build passes and the tests are green, the refactor worked. Auto-mode handles this faster than any supervised workflow.

Bug fixes with reproducible failures. A failing test is a spec. Claude knows what done looks like and iterates until it gets there. Auto-mode here is faster than supervised mode, and the verification is structural rather than subjective.

If your team is still running test generation in supervised mode, you're paying a supervision tax on the lowest-risk work you do. That's not caution. That's overhead.

When supervision isn't optional

The categories above share a property most tasks don't have: a failure is obvious and contained. That's not true of the following, and treating them like it is will cost you. The cost usually arrives in one of two forms: a production incident, or a compliance event.

Anything touching auth, payments, or PII. A bug in a test fails loudly. A bug in an auth flow ships. For financial services firms operating under APRA CPS 230, or any business subject to the Australian Privacy Principles, the accountability for what gets deployed doesn't transfer to the tool that wrote the code.

First integrations with an external system. Until you've seen how Claude handles your specific API's edge cases, error states, and retry behaviour, you don't have enough signal to run it unsupervised. Build that signal in supervised mode first. Auto-mode can come later.

Architecture-level decisions. Extracting a microservice, redesigning a data model, moving from REST to GraphQL. These decisions carry consequences that extend months past the code change. They deserve human review not because Claude can't execute them, but because the decision-making process needs to be visible.

The compliance dimension matters here in a way that's specific to Australia. A reduced-supervision session that unexpectedly logs personal information to the wrong location isn't just a code mistake. Under the Privacy Act (1988) and the Australian Privacy Principles, it's a potential notifiable data breach. Under APRA CPS 230, regulated entities carry operational risk accountability that doesn't dissolve because a tool generated the code. The cost of catching a problem in a code review is a few hours. The cost of catching it after it ships, particularly for a mid-market professional services or financial services firm in Sydney or Melbourne, is a different order of magnitude.

A tiered policy that actually works

The mistake most teams make is treating auto-mode as an on/off switch for the entire engineering workflow. The teams that get the most out of it use it as a tiered system where the default changes by category of work, not by mood or recency of a bad experience.

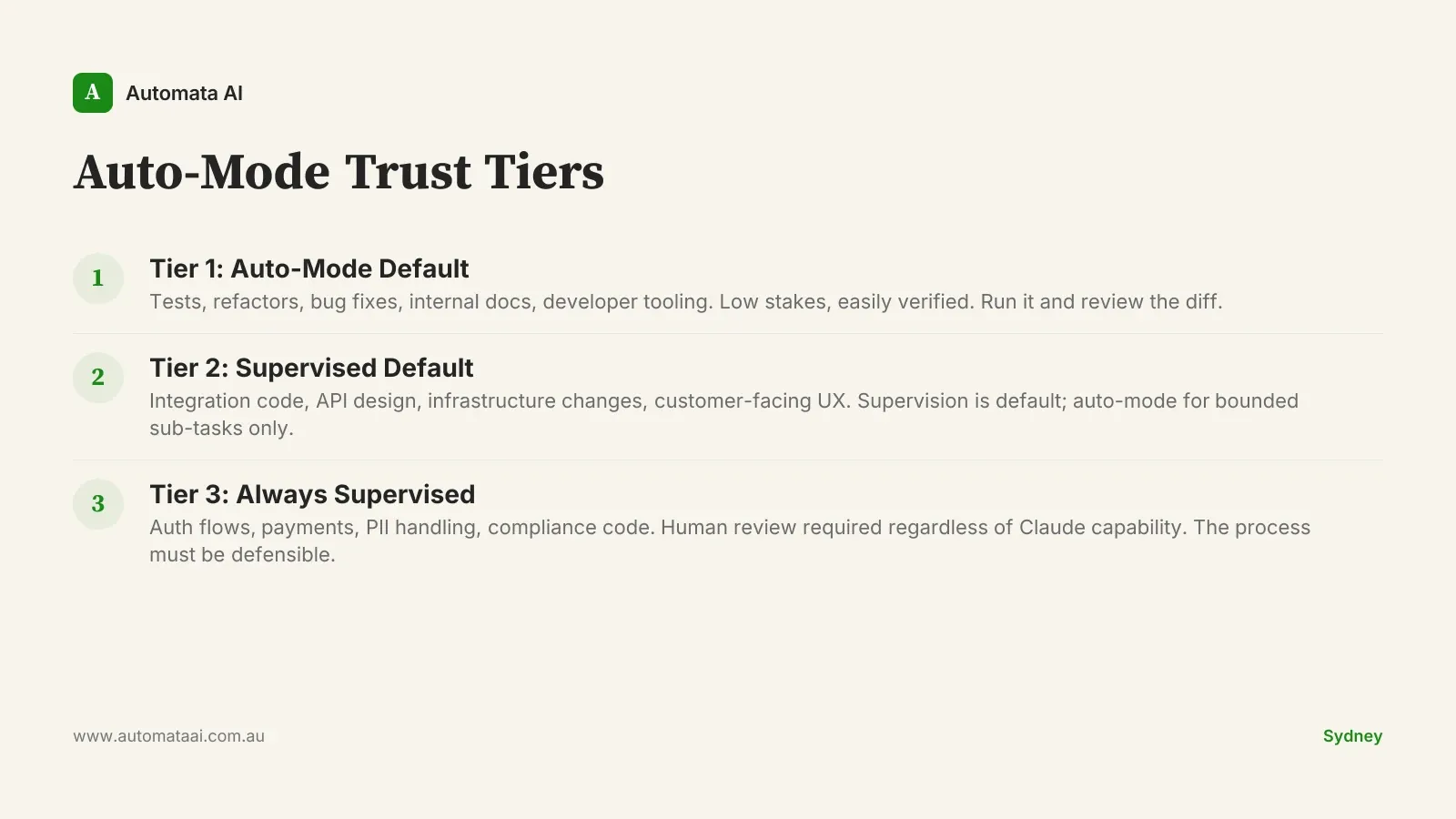

Tier 1, auto-mode default: tests, refactors, bug fixes, internal documentation, developer tooling. Low stakes, easily verified. Run it and review the diff.

Tier 2, supervised default: integration code, API design, infrastructure changes, customer-facing UX. Supervision is the default; auto-mode can be used for bounded sub-tasks where the success criteria are clear.

Tier 3, always supervised with a second reviewer: auth flows, payment handling, PII logic, compliance code. No exceptions, regardless of how capable Claude gets. The second reviewer isn't there because the code is technically hard. It's there because the process needs to be defensible.

Write this in your engineering handbook, not just as a verbal norm. The teams where the policy exists only in institutional memory are the ones where it erodes as people leave and new engineers join with different defaults. One page. Three tiers. Examples of what goes in each. It doesn't need to be a complex document. The simpler it is, the more likely it gets used.

Revisit it quarterly. Claude's capability isn't fixed. Work that sat in Tier 2 six months ago may be safely in Tier 1 now. The policy should evolve with the tool, not stay locked to the day it was written.

The economics of getting this right

A supervised Claude Code workflow running at every-step approval delivers roughly 1.5–2x throughput per engineer. A well-calibrated auto-mode policy running Tier 1 work across a team of five is closer to 3–5x. At $150,000–$180,000 fully loaded per senior engineer in Sydney or Melbourne, the gap between those multipliers is not marginal. It's the difference between a slightly faster team and a structurally leaner one.

The senior-engineer-as-orchestrator model — one experienced engineer steering several Claude Code sessions — only works if those sessions can run Tier 1 tasks without constant interruption. Auto-mode is the mechanism. The tiered policy is what makes leaving the sessions running feel like a considered decision rather than a gamble.

The teams that won't capture this upside are the ones that turned auto-mode off after the first unexpected output and never built the policy that would let them turn it back on. Write down what belongs in Tier 1. Start there. The rest follows from watching what actually goes wrong.