Your security team approved the Claude Code pilot. Three developers have been running it for two weeks. Last Tuesday, one of them ran it against a repo that had a .env file sitting in the working directory. Nobody knows what the agent read, what it sent upstream, or whether it logged anything. The CISO finds out on Friday.

That is not a hypothetical. That is the conversation happening across Australian financial services engineering teams right now.

The five questions a CISO actually asks

The good news: Claude Code has a permissions and sandboxing architecture that can genuinely meet the audit-trail expectations of an APRA CPS 234 review. Most Australian financial services firms are already running CPS 230 assessments for operational resilience, and CPS 234 is the information security counterpart. It is specific about what information security capability means: the ability to demonstrate controls, log events, and show evidence of ongoing management. For an agentic coding tool, satisfying it means showing that you can detect anomalies, respond to incidents, and produce records on request. Claude Code can be configured to support all three. The hard news is that none of it happens by default.

Any CISO signing off on an agentic coding tool is asking five specific questions. If the platform team cannot answer all five in 90 seconds each, the organisation does not have a Claude Code rollout. It has a side project waiting for a security incident.

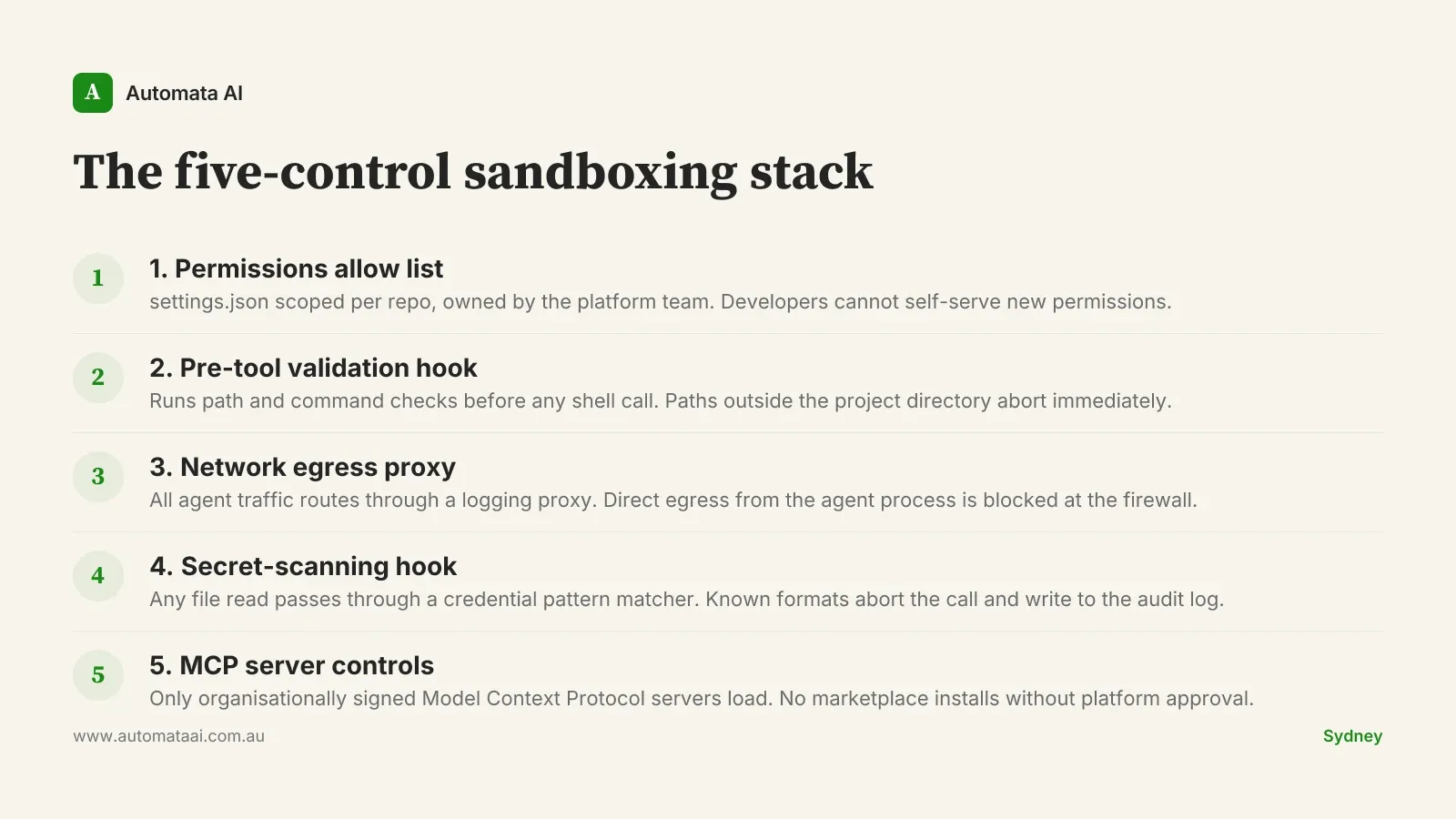

Execution scope. What can the agent run on a developer's machine, and how do I prove it cannot run anything else?

Network egress. What outbound connections is the agent allowed to make, and is every one of them logged?

Secret exposure. What happens when the agent reads a file containing credentials? Does it abort? Does it log?

Revocation path. If a contractor's credential is compromised, how do I pull access organisation-wide in under an hour?

Audit trail. Is there a log that would satisfy an APRA CPS 234 review, and can I produce it within 24 hours of a request?
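For the secret-exposure question, one way to get to "yes, it aborts" is a PreToolUse hook that refuses tool calls targeting credential-like files. This is a minimal sketch, assuming Claude Code's hook contract as documented at the time of writing: the pending tool call arrives as JSON on stdin with `tool_name` and `tool_input`, and exit code 2 blocks the call and returns stderr to the agent. `SECRET_PATTERNS` is a hypothetical starter list, not a complete taxonomy of secrets.

```python
#!/usr/bin/env python3
"""PreToolUse hook sketch: refuse tool calls that target credential-like files.

Assumes Claude Code's hook contract: the pending tool call arrives as JSON on
stdin, and exit code 2 blocks it and returns stderr to the agent.
SECRET_PATTERNS is a hypothetical starter list, not a complete taxonomy.
"""
import fnmatch
import json
import os
import sys

SECRET_PATTERNS = [".env", ".env.*", "*.pem", "*.key", "id_rsa*", "credentials*"]


def is_secret_path(path: str) -> bool:
    """True if the file name matches any credential-like pattern."""
    name = os.path.basename(path)
    return any(fnmatch.fnmatch(name, pattern) for pattern in SECRET_PATTERNS)


def check(payload: dict) -> tuple[int, str]:
    """Return (0, "") to allow the call or (2, reason) to block it."""
    tool_input = payload.get("tool_input") or {}
    # Read and Edit pass "file_path"; search tools such as Grep pass "path".
    path = tool_input.get("file_path") or tool_input.get("path") or ""
    if path and is_secret_path(path):
        return 2, f"Blocked {payload.get('tool_name')} on credential-like file: {path}"
    return 0, ""


def main() -> None:
    raw = sys.stdin.read()
    if not raw.strip():
        return  # no payload, nothing to check
    code, reason = check(json.loads(raw))
    if code:
        print(reason, file=sys.stderr)  # the agent sees this message
    sys.exit(code)


if __name__ == "__main__":
    main()
```

Register it under `hooks.PreToolUse` in `settings.json` with a matcher such as `Read|Edit|Grep`. It only inspects file-path arguments, so `cat .env` through the Bash tool needs separate handling, and the "does it log?" half of the question is the audit log's job.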

In practice, teams that arrive without answers get one of two outcomes. Either the CISO sends the project back to engineering with a list of requirements and the rollout stalls for three to six months. Or it proceeds without proper controls, and the organisation discovers the gaps the hard way. The teams that close the CISO meeting in one session are the ones that walk in with a written sandboxing design, a sample audit log, and an answer to the revocation question.

The answers are not unknowable. They require deliberate configuration.

The five-control sandboxing stack

A financial services or professional services team running Claude Code in production needs five controls before the CISO conversation goes anywhere useful. Not three, not ten. Each control maps directly to one of the five questions above, and a gap in any one of them is a gap in your answer.

Standing these up takes roughly two months of combined platform and security engineering time. Fully loaded for an Australian team, that is around $120,000–$150,000. That number looks modest next to a mandatory breach notification under the Privacy Act's Notifiable Data Breaches scheme and the reputational cost that follows in a regulated sector.

Automata AI ships the permissions and audit layer for Australian Claude Code rollouts. If your team is scoping this work, our Claude Code rollout services walk through the engagement tiers and what is included at each.

Start with the audit log, not the allow list

Most teams start with the permissions allow list because it feels like a positive control. Block the dangerous paths. Restrict the shell commands. The problem is that a fence only defines what the agent is prevented from doing. Without logs, it says nothing about what the agent actually did inside the permitted scope, which is precisely what an auditor will ask.

The scenario that surfaces this is not sophisticated: a developer runs the agent over a repository that contains a credentials file, the session closes normally, and three weeks later a support ticket suggests those credentials were used somewhere unexpected. Without the audit log, the forensics conversation ends in minutes with no conclusions.

Ship the audit log first. Structured events from every tool call, every file read, every shell execution, piped into whatever observability stack the platform team already runs. Splunk, Datadog, or a plain PostgreSQL table all work. Completeness matters more than format.
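A minimal sketch of the hook end of that pipeline, assuming Claude Code's PostToolUse hook payload as documented at the time of writing (`session_id`, `transcript_path`, `cwd`, `hook_event_name`, `tool_name`, `tool_input`, `tool_response` on stdin). The event schema, the `AUDIT_SPOOL` variable and the default spool path are hypothetical. A local spool file tailed by a log shipper is the simplest wiring, though it leaves the tampering question discussed below unanswered.

```python
#!/usr/bin/env python3
"""PostToolUse hook sketch: append one structured audit event per tool call.

Assumes Claude Code's hook payload on stdin (session_id, transcript_path, cwd,
hook_event_name, tool_name, tool_input, tool_response). The event schema,
AUDIT_SPOOL variable and default path are hypothetical.
"""
import json
import os
import sys
from datetime import datetime, timezone

MAX_RESULT_CHARS = 2000  # hypothetical cap; Read results can contain whole files


def build_event(payload: dict) -> dict:
    """Flatten a hook payload into one queryable audit record."""
    result_text = json.dumps(payload.get("tool_response"), default=str)
    return {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": os.environ.get("USER", "unknown"),
        "session_id": payload.get("session_id"),
        "cwd": payload.get("cwd"),
        # Input context: a pointer to the session transcript.
        "transcript_path": payload.get("transcript_path"),
        "event": payload.get("hook_event_name"),
        # The tool call and its arguments.
        "tool": payload.get("tool_name"),
        "args": payload.get("tool_input") or {},
        # The tool's result, capped in size.
        "result": result_text[:MAX_RESULT_CHARS],
    }


def main() -> None:
    raw = sys.stdin.read()
    if not raw.strip():
        return  # no payload, nothing to record
    event = build_event(json.loads(raw))
    spool = os.environ.get("AUDIT_SPOOL", "/var/log/claude-audit/events.jsonl")
    with open(spool, "a", encoding="utf-8") as f:
        f.write(json.dumps(event) + "\n")


if __name__ == "__main__":
    main()
```

Capping the result keeps events small, but a short `.env` fits well inside the cap, so redaction before shipping still matters if the SIEM must not hold secrets.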

Good logs capture three things: the agent's input context, the tool call and its arguments, and the result including any errors. Logs you cannot query are logs you cannot use under time pressure. Most Australian teams pipe these into their existing SIEM rather than building a separate store.
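To make "queryable under time pressure" concrete for the credentials scenario above, here is a sketch that loads JSON-lines events into a table and answers "which files did the agent read in this session?". It uses Python's built-in `sqlite3` as a stand-in for the plain PostgreSQL table option, and assumes a hypothetical event shape with `ts`, `session_id`, `tool` and `args` fields.

```python
"""Forensics query sketch: which files did the agent read in a session?

Uses the standard-library sqlite3 module as a stand-in for a PostgreSQL
table. The event fields (ts, session_id, tool, args) are a hypothetical
schema, not a format Claude Code produces on its own.
"""
import json
import sqlite3


def load_events(conn: sqlite3.Connection, lines: list[str]) -> None:
    """Insert JSON-lines audit events into an audit_events table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS audit_events "
        "(ts TEXT, session_id TEXT, tool TEXT, args TEXT)"
    )
    rows = []
    for line in lines:
        event = json.loads(line)
        rows.append((
            event.get("ts"),
            event.get("session_id"),
            event.get("tool"),
            json.dumps(event.get("args") or {}),
        ))
    conn.executemany("INSERT INTO audit_events VALUES (?, ?, ?, ?)", rows)
    conn.commit()


def files_read(conn: sqlite3.Connection, session_id: str) -> list[str]:
    """Every file path passed to the Read tool in one session, oldest first."""
    cursor = conn.execute(
        "SELECT args FROM audit_events "
        "WHERE session_id = ? AND tool = 'Read' ORDER BY ts",
        (session_id,),
    )
    return [json.loads(args).get("file_path", "") for (args,) in cursor]
```

Filtering the output, for example `[p for p in files_read(conn, session_id) if ".env" in p]`, turns the three-weeks-later support ticket into a single query. In a real SIEM the same question would be a saved search.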

The audit log also has to be more than audit theatre. Logs written by the agent itself can be tampered with if the agent has write access to the same store. The right pattern is write-once: events flow directly from the hook layer to the SIEM without passing back through the agent process. That separation is what gives the audit trail its evidential weight in a CPS 234 review.
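A sketch of the write-once pattern: the hook posts each event straight to a collector instead of writing it anywhere the agent can reach. `AUDIT_COLLECTOR_URL`, `AUDIT_INGEST_TOKEN` and the JSON body are hypothetical; Splunk HEC and Datadog's log intake each define their own ingest formats. The `send` parameter exists only so the transport can be swapped out in tests.

```python
"""Write-once forwarding sketch: ship each audit event straight to a collector.

AUDIT_COLLECTOR_URL, AUDIT_INGEST_TOKEN and the JSON body are hypothetical;
real SIEM ingest endpoints (Splunk HEC, Datadog logs) define their own formats.
"""
import json
import os
import urllib.request
from typing import Callable, Optional

COLLECTOR_URL = os.environ.get(
    "AUDIT_COLLECTOR_URL", "https://audit-collector.example.internal/events"
)


def make_request(event: dict, url: str, token: str) -> urllib.request.Request:
    """Build a POST carrying one event as JSON."""
    return urllib.request.Request(
        url,
        data=json.dumps(event).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


def forward(
    event: dict,
    send: Optional[Callable[[urllib.request.Request], object]] = None,
) -> None:
    """Send the event off-host; nothing is written to local disk."""
    token = os.environ.get("AUDIT_INGEST_TOKEN", "")
    request = make_request(event, COLLECTOR_URL, token)
    if send is not None:
        send(request)  # injected transport, e.g. for tests
        return
    # urlopen raises on error statuses, so a failed send surfaces as a hook error.
    with urllib.request.urlopen(request, timeout=5) as response:
        response.read()
```

Hooks inherit the same environment as the agent's shell, so assume the agent could read the ingest token. Scoping it to append-only at the collector keeps the worst case to added noise rather than edited or deleted history.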

Before scoping the full rollout, an AI Readiness Assessment is a fast way to identify which controls your team has partially in place and where the actual gaps are.

When this is the wrong approach

Not every team needs the full five-control stack. If your organisation has fewer than ten developers and they work exclusively on internal tooling with no access to production customer data, the overhead of standing up a full sandboxing layer may genuinely outweigh the risk profile.

This stack targets regulated environments: financial services firms with APRA obligations, healthcare organisations governed by the Australian Privacy Principles, or any team where a credential leak triggers a mandatory breach notification. If that describes your organisation, the financial services AI guide covers the compliance context specific to that sector.

A small team on internal tooling can start with a well-configured .claudeignore and a minimal allow list in settings.json. That is not a permanent answer, but it is a defensible starting point while the risk assessment catches up.
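For that starting point, here is a sketch that writes a minimal project-level `.claude/settings.json`. The `permissions.allow` / `permissions.deny` structure and rule syntax follow Claude Code's settings format as documented at the time of writing; the specific commands and paths are hypothetical placeholders for your own repos.

```python
"""Sketch: write a minimal project-level .claude/settings.json.

The permissions.allow / permissions.deny structure follows Claude Code's
settings format; every command and path listed is a hypothetical placeholder.
"""
import json
from pathlib import Path

SETTINGS = {
    "permissions": {
        # Shell commands the agent may run without prompting.
        "allow": [
            "Bash(npm run lint)",
            "Bash(npm run test:*)",
        ],
        # Tool calls refused outright, whatever the prompt says.
        "deny": [
            "Read(./.env)",
            "Read(./.env.*)",
            "Read(./secrets/**)",
            "WebFetch",
        ],
    }
}


def render_settings() -> str:
    """Serialise SETTINGS as pretty-printed JSON."""
    return json.dumps(SETTINGS, indent=2) + "\n"


def write_settings(project_root: Path) -> Path:
    """Write .claude/settings.json under project_root and return its path."""
    target = project_root / ".claude" / "settings.json"
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(render_settings(), encoding="utf-8")
    return target
```

Deny rules such as `Read(./.env)` govern Claude Code's built-in file tools. A shell command like `cat .env` is a separate path, which is one more reason to keep the Bash allow list short.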

Australian regulators are not going to grow less interested in AI agent behaviour over the next three years. The teams that build the permissions layer before the audit requests arrive are the ones that can say yes to the next capability, instead of spending another quarter explaining a side project to the security committee.