If Claude Code felt slower, more forgetful, or oddly terse between early March and mid-April, it wasn't your imagination. Three separate changes shipped in a six-week window. Each was individually defensible. Together they degraded the tool in ways that were genuinely hard to track down from the outside.

On April 23 Anthropic published an engineering postmortem naming what happened, when, and why. Development teams in Sydney and Melbourne who have Claude Code wired into build workflows should read it, not just for the specific bugs, but for what the failure pattern reveals about running any serious production agent stack.

The three bugs, in order

1. Reasoning effort quietly downgraded (4 March to 7 April)

The default reasoning effort in Claude Code was lowered from high to medium on March 4. The rationale was legitimate: high-effort mode was making the interface feel frozen on certain tasks, and the latency was real. The change shipped quietly. Users noticed almost immediately on tasks where deep reasoning is the whole point: complex debugging, architecture decisions, anything that requires holding multiple constraints in context.

The default was reverted on April 7. Opus 4.7 now defaults to xhigh effort, with high as the standard below it. That's five weeks where the tool was running at a lower reasoning level than users expected, with no public notice when the change first shipped.

2. A caching change that erased session memory (26 March to 10 April)

On March 26 Anthropic shipped a change to prune stale thinking blocks from sessions idle for more than an hour. The intent was sound. The bug caused that pruning to fire on every subsequent turn for the rest of any affected session, not just once. Claude appeared to forget context mid-session, repeated work it had already done, and burned through token budgets faster than expected. The fix landed April 10 in version 2.1.101.

This one deserves close attention. The change passed multiple rounds of human code review, automated review, unit tests, end-to-end tests, and internal dogfooding before it shipped. It only manifested on sessions that had been idle, and it was difficult to reproduce in a local environment. This is precisely the failure profile that clears every standard pre-merge check.
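Here is a toy Python model of the described behaviour that shows why this kind of bug slips past tests. It is not Anthropic's code. The `Session` class, the `IDLE_THRESHOLD_S` constant and the assumed mechanism (an idle flag that is set and never reset) are hypothetical. They were chosen only to reproduce the symptom the postmortem describes: pruning that should fire once instead fires on every later turn.

```python
from dataclasses import dataclass, field

# Hypothetical toy model, not Anthropic's implementation.
IDLE_THRESHOLD_S = 3600  # "idle for more than an hour"


@dataclass
class Session:
    thinking_blocks: list = field(default_factory=list)
    last_turn_at: float = 0.0
    idle_flag: bool = False  # only the buggy variant uses this


def start_turn_intended(s: Session, now: float) -> None:
    # Prune stale thinking once, on the first turn after a long idle gap.
    if now - s.last_turn_at > IDLE_THRESHOLD_S:
        s.thinking_blocks.clear()
    s.last_turn_at = now


def start_turn_buggy(s: Session, now: float) -> None:
    # Assumed bug: the idle flag is set but never reset, so pruning
    # fires on every later turn and wipes fresh context too.
    if now - s.last_turn_at > IDLE_THRESHOLD_S:
        s.idle_flag = True
    if s.idle_flag:
        s.thinking_blocks.clear()
    s.last_turn_at = now


def run(start_turn, timestamps) -> int:
    """Play turns at the given times (seconds); return thinking blocks retained."""
    s = Session()
    for i, t in enumerate(timestamps):
        start_turn(s, t)
        s.thinking_blocks.append(f"thinking from turn {i}")
    return len(s.thinking_blocks)


no_idle = [0, 60, 120, 180]            # every gap under an hour
with_idle = [0, 60, 4000, 4060, 4120]  # one gap of 3,940 s, then normal turns
print(run(start_turn_intended, no_idle), run(start_turn_buggy, no_idle))      # 4 4
print(run(start_turn_intended, with_idle), run(start_turn_buggy, with_idle))  # 3 1
```

A test suite built only from short, continuous sessions like `no_idle` passes both versions. Only a case with a real idle gap tells them apart.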

3. A word-count limit placed on the coding agent (16 April to 20 April)

A verbosity-control instruction was added to the Claude Code system prompt. The exact text, as quoted in the postmortem, was: 'Length limits: keep text between tool calls to ≤25 words. Keep final responses to ≤100 words unless the task requires more detail.'

The word-count constraint might have made sense for a customer support chatbot. It was the wrong limit for a coding agent, where the reasoning that appears between tool calls is the mechanism, not the noise. The instruction was reverted four days later after users reported responses that felt clipped and incomplete.

Why three regressions looked like one problem

Each change affected a different slice of usage on a different schedule. The aggregate signal from users was consistent ('Claude feels worse'), but it didn't map cleanly to a single cause. From the outside it looked like persistent, inconsistent degradation across March and April. From the inside it was three separate regressions on staggered schedules. The caching bug arrived while the reasoning downgrade was still active, and the word-count limit landed six days after the caching fix.
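Laying the three windows side by side makes the overlap explicit. The sketch below uses only the start and fix dates given in the postmortem and treats each window as inclusive. Dates are `(month, day)` tuples, so no year has to be assumed. Tuples compare element by element, so plain `max` and `min` give the intersection.

```python
from itertools import combinations

# Start and fix dates as (month, day); windows are treated as inclusive.
WINDOWS = {
    "reasoning effort lowered": ((3, 4), (4, 7)),
    "idle-session pruning bug": ((3, 26), (4, 10)),
    "word-count limit in prompt": ((4, 16), (4, 20)),
}


def overlap(a, b):
    """Return the shared (start, end) of two inclusive windows, or None."""
    start = max(a[0], b[0])
    end = min(a[1], b[1])
    return (start, end) if start <= end else None


for x, y in combinations(WINDOWS, 2):
    print(f"{x} / {y}: {overlap(WINDOWS[x], WINDOWS[y])}")
```

Only the first pair overlaps, from 26 March to 7 April. The word-count limit arrived after the other two had already been fixed.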

The diagnostic detail worth noting from the postmortem: Anthropic back-tested its own Code Review tool against the pull requests that introduced the caching bug. Opus 4.7 found the defect. Opus 4.6 didn't. It's one of the few times Anthropic has published a direct model-to-model comparison on the same real-world debugging task, and a concrete data point on where reliability improved between versions.

What this means if you're running Claude in a production workflow

Three things worth taking seriously, whether you're using Claude Code directly or building on the Agent SDK.

The harness is part of the model. Quality regressions in agentic workflows are rarely explained by 'the model got dumber.' They almost always trace to the orchestration layer: caching strategy, system prompts, default parameters, token budgets. None of the three regressions came from a change to the underlying Claude models. Three configuration-layer decisions caused them. If each senior developer costs $120,000 to $180,000 a year fully loaded, six weeks of degraded tooling has a real dollar cost.
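A back-of-envelope calculation puts a number on it. The salary range and the six-week duration come from the text. The 10% productivity loss and the five-person team are illustrative assumptions, not figures from the postmortem, so substitute your own.

```python
WEEKS_PER_YEAR = 52


def degraded_tooling_cost(annual_cost: float, weeks: float,
                          productivity_loss: float, developers: int = 1) -> float:
    """Value of developer time lost while tooling underperformed.

    productivity_loss is an assumed fraction (0 to 1) of output lost per developer.
    """
    if not 0 <= productivity_loss <= 1:
        raise ValueError("productivity_loss must be between 0 and 1")
    time_cost = annual_cost * weeks / WEEKS_PER_YEAR  # cost of `weeks` of one developer
    return time_cost * productivity_loss * developers


# Illustrative only: a five-person team losing 10% of output for six weeks.
for salary in (120_000, 180_000):
    print(f"${salary:,}: ${degraded_tooling_cost(salary, 6, 0.10, developers=5):,.0f}")
```

At that salary range, six weeks of one developer's time is worth roughly $13,800 to $20,800. The loss fraction is the number you actually have to estimate.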

'It passed our evals' is not a guarantee. The caching bug cleared multiple layers of automated and human review because its trigger, a session left idle for over an hour, is a condition standard CI pipelines rarely reproduce. Soak periods and gradual rollouts catch this class of bug. They expose a change to real usage patterns, idle sessions included, on a small share of traffic before it reaches everyone.
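For teams applying the same discipline to their own agents, a gradual rollout can be simple. Hash each session ID into a stable bucket, and widen the share of sessions that see a change only after it has soaked at each stage. This is a minimal sketch. The stage percentages, the 48-hour minimum soak and the single `regression_detected` signal are assumptions. A real system would feed that signal from monitoring.

```python
import hashlib

# Hypothetical rollout schedule: percent of sessions exposed at each stage.
STAGES = [1, 10, 50, 100]


def bucket(session_id: str) -> int:
    """Map a session ID to a stable bucket in [0, 100)."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def in_rollout(session_id: str, percent: int) -> bool:
    # The same session always lands in the same bucket, so it sees a
    # consistent configuration for its whole lifetime.
    return bucket(session_id) < percent


def next_stage(stage: int, hours_at_stage: float, min_soak_hours: float,
               regression_detected: bool):
    """Return the next stage index, or None to roll the change back entirely."""
    if regression_detected:
        return None
    if hours_at_stage < min_soak_hours:
        return stage  # keep soaking; idle-triggered bugs need time to show up
    return min(stage + 1, len(STAGES) - 1)


sessions = [f"session-{i}" for i in range(10_000)]
exposed = sum(in_rollout(s, STAGES[1]) for s in sessions)
print(exposed / len(sessions))        # roughly 0.10
print(next_stage(1, 12, 48, False))   # 1: still soaking
print(next_stage(1, 60, 48, False))   # 2: widen to 50%
print(next_stage(2, 60, 48, True))    # None: roll back
```

Hashing rather than random sampling keeps each session on one side of the rollout. That matters when a failure depends on a session's history, as the caching bug did.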

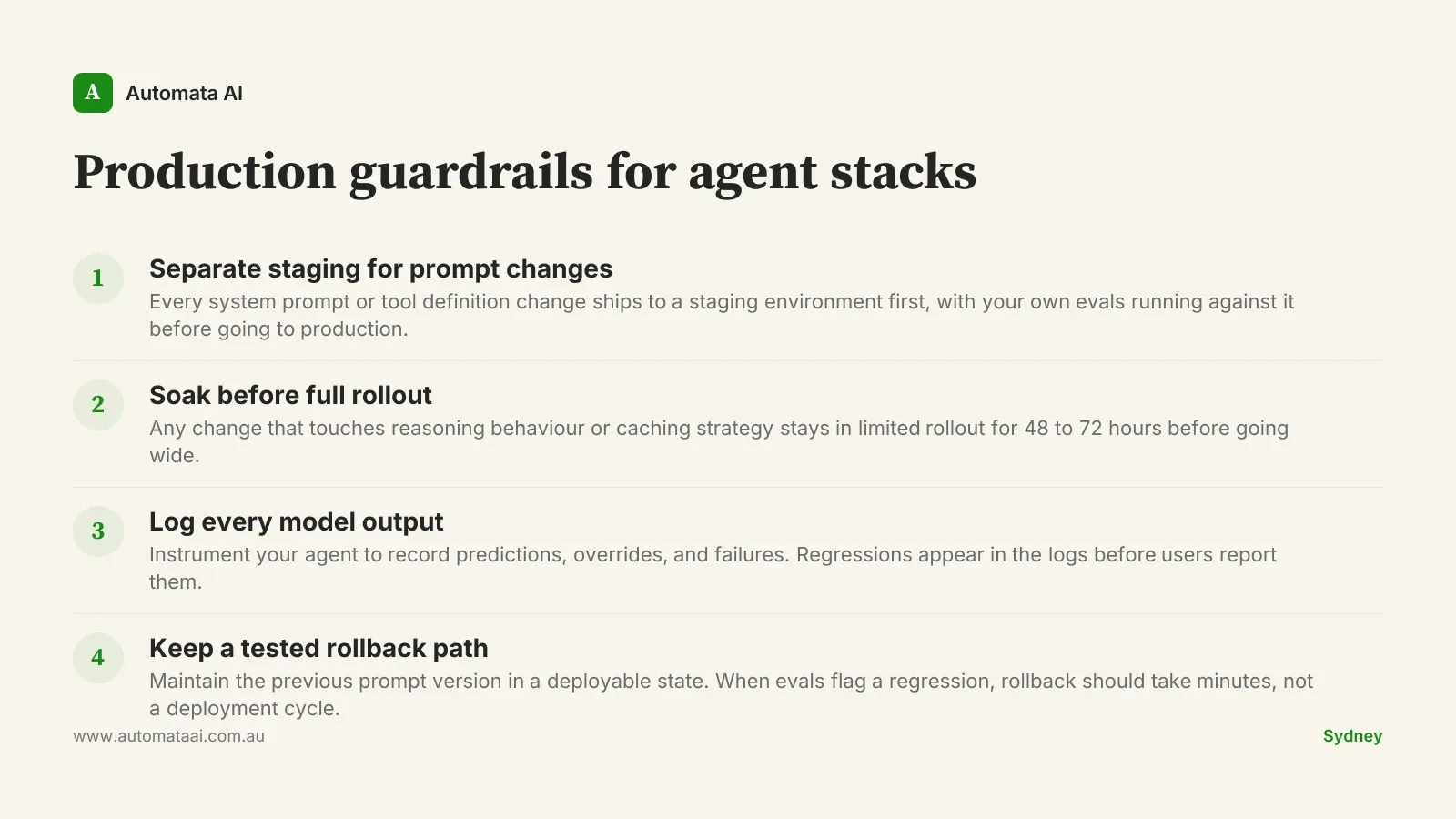

Watch Anthropic's going-forward commitments. The postmortem lists specific operational improvements: broader eval suites before system-prompt changes, tighter controls, soak periods, gradual rollouts. If you're shipping internal prompts or tool definitions to your own production agents, you've inherited the same operational problem. The same disciplines apply.

When a postmortem isn't the protection you need

Anthropic got this one right. The postmortem names dates, owns the decisions, and credits the users who flagged the degradation. Anthropic also reset usage limits for the affected period. That's a high bar for platform accountability, and it matters when you're defending a platform recommendation to a board.

But a postmortem is published after the regression has ended. If your customer-facing agent was making worse decisions for six weeks, 'we published a postmortem' doesn't close the loop. The same holds in a regulated sector, where changes in model behaviour may have compliance implications under APRA CPS 230 or the Australian Privacy Principles. Three situations carry the most exposure.

High-frequency automation. If your agent processes thousands of tasks per day, six weeks of degraded quality multiplies across a large volume of outputs before any platform fix arrives.

Customer-facing workflows. When model quality regressions affect what users see or receive directly, the business impact doesn't wait for a retrospective.

Regulated environments. Material changes in model behaviour may trigger disclosure or review obligations under sector-level frameworks. Financial services, healthcare, and insurance carry the most exposure.

The answer isn't to pull Claude out of production. It's to build your own evaluation layer that runs against every prompt change, and to have a rollback path you've actually tested.
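A pre-deployment eval gate can be small. The sketch below scores a baseline and a candidate system prompt on the same fixed cases. It keeps the baseline unless the candidate holds up, so the gate doubles as the rollback path. Everything here is hypothetical. `fake_agent` stands in for a real model call and simply truncates its answer when the prompt contains a word limit, mimicking the clipping users reported in April. The scorer and the 0.02 tolerance are placeholders.

```python
from statistics import mean
from typing import Callable, List, Tuple

# An eval case pairs a task with a scorer that rates the agent's output 0..1.
EvalCase = Tuple[str, Callable[[str], float]]
Agent = Callable[[str, str], str]  # (system_prompt, task) -> output


def score(agent: Agent, prompt: str, cases: List[EvalCase]) -> float:
    return mean(check(agent(prompt, task)) for task, check in cases)


def choose_prompt(agent: Agent, baseline: str, candidate: str,
                  cases: List[EvalCase], tolerance: float = 0.02) -> str:
    """Ship the candidate only if it scores within `tolerance` of the baseline;
    otherwise keep the baseline (the rollback path)."""
    base = score(agent, baseline, cases)
    cand = score(agent, candidate, cases)
    return candidate if cand >= base - tolerance else baseline


def fake_agent(prompt: str, task: str) -> str:
    # Hypothetical stand-in for a real model call that obeys a word limit by truncating.
    answer = (f"Diagnosis for {task}. The pruning step runs on every turn once a "
              "session has been idle, so earlier reasoning is dropped. The agent "
              "then repeats finished work and spends extra tokens. Suggested fix: "
              "reset the idle flag after pruning.")
    return " ".join(answer.split()[:25]) if "25 words" in prompt else answer


def has_fix(out: str) -> float:
    return 1.0 if "Suggested fix" in out else 0.0


cases = [("bug-1", has_fix), ("bug-2", has_fix)]
baseline = "You are a coding agent."
limited = baseline + " Keep text between tool calls to ≤25 words."
print(choose_prompt(fake_agent, baseline, limited, cases) == baseline)  # True: limit rejected
```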

Why we're watching this closely at Automata AI

Automata AI builds on Claude. That means our clients' production reliability sits downstream of how Anthropic operates the platform. The April postmortem is a useful precedent: specific, dated, honest about what slipped through review, and paired with concrete operational changes. That's the level of transparency we expect from a platform we put in front of Australian enterprise clients.

The question for any organisation running Claude in production isn't whether the platform will ever regress. It will. The question is whether you have the instrumentation to detect it quickly, and the architecture to absorb it without it becoming your incident.
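Detection can start with something as plain as a rolling average. This sketch assumes two things: each agent task already gets a quality score between 0 and 1 (an eval pass, a reviewer rating, or similar), and you recorded a baseline during a period you trust. The window size and the allowed drop are placeholder values to tune.

```python
from collections import deque
from statistics import mean


class QualityMonitor:
    """Flag a regression when the rolling mean of per-task quality scores
    falls more than `max_drop` below a known-good baseline."""

    def __init__(self, baseline: float, window: int = 200, max_drop: float = 0.05):
        self.baseline = baseline
        self.max_drop = max_drop
        self.scores = deque(maxlen=window)  # oldest scores fall off automatically

    def record(self, score: float) -> bool:
        """Add one task's score; return True if the current window has regressed."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # wait for a full window before judging
        return mean(self.scores) < self.baseline - self.max_drop


monitor = QualityMonitor(baseline=0.90, window=4, max_drop=0.05)
flags = [monitor.record(s) for s in [0.9, 0.9, 0.9, 0.9, 0.6, 0.6]]
print(flags)  # [False, False, False, False, True, True]
```

The baseline is fixed rather than moving on purpose. Otherwise a slow, weeks-long slide like the one described here would become the new normal.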