Most Claude Code rollouts in Australian engineering teams follow the same pattern. One engineer tries it on a side task. Within a week, three more are using it. Within a month, it's part of the standard workflow on production codebases.

The sandboxing conversation usually comes after that.

For teams in non-regulated environments building internal tools, that order is probably fine. For teams in APRA-regulated financial services, government agencies handling sensitive data, or any organisation covered by the Privacy Act 1988, it's a problem waiting to be discovered at audit.

Claude Code is not just an autocomplete tool. It can read files, write files, run shell commands, and make network calls. Each of those capabilities needs a boundary.

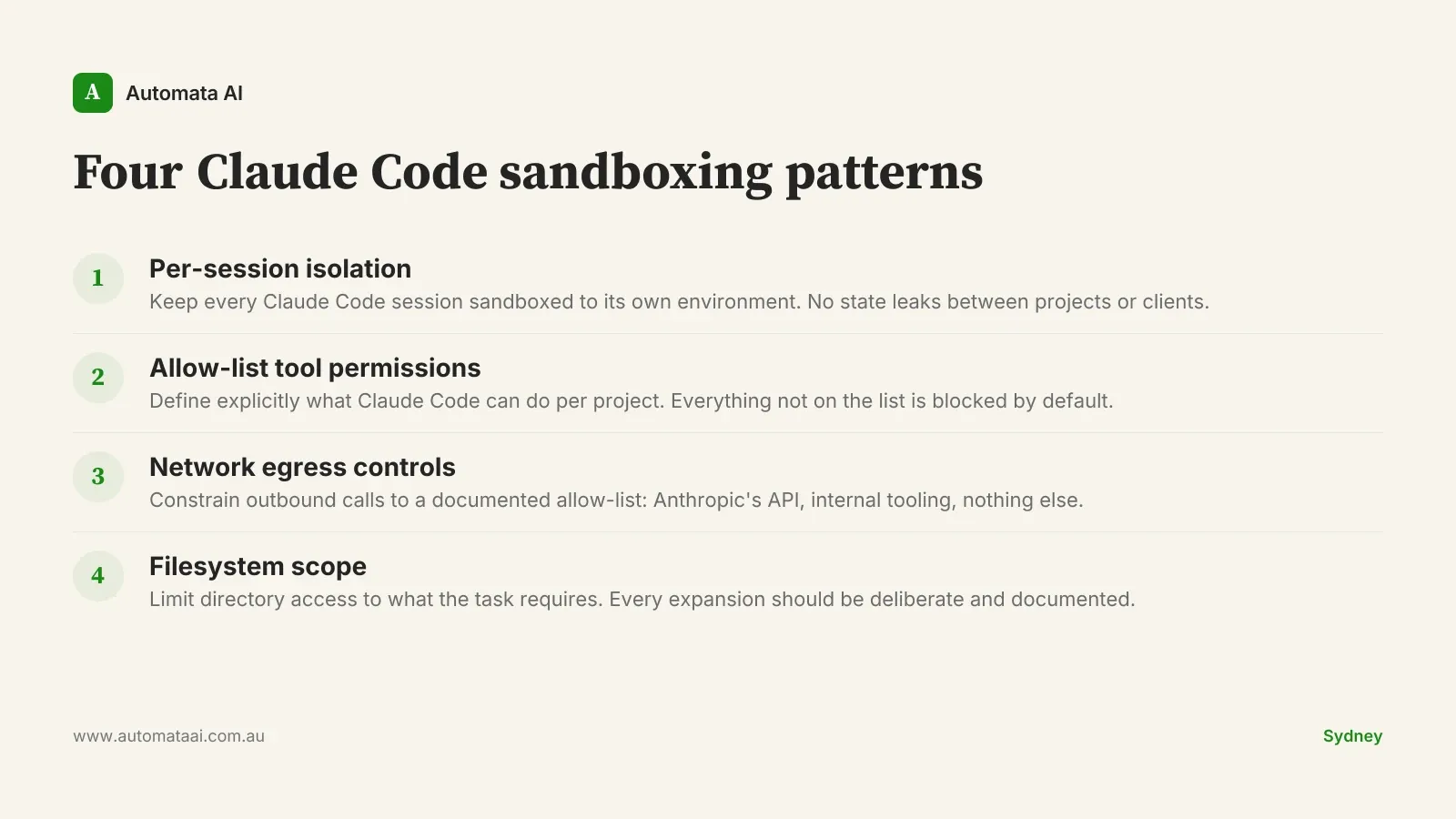

Pattern 1: Per-session sandbox isolation

Every Claude Code session should run in an isolated execution environment. No shared state between sessions, no cross-project contamination.

For a single-product startup, this feels academic. For an Australian consultancy running Claude Code across ten client projects simultaneously, or a government department where analysts work across different data classifications on the same day, it's not.

The practical implication: if you're running Claude Code on client A's codebase in the morning and client B's in the afternoon, those sessions should have no way to bleed into each other. The default configuration provides this. Teams that customise their setup with shared tooling, shared context files, or shared memory directories can inadvertently break it.

Audit your setup. Verify isolation is maintained when your customisations are applied, not just in the default configuration.
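
One way to make that audit concrete is to list every directory each session can reach and check the lists for overlap. The following is a minimal sketch, not a Claude Code feature: the function name, the directory layout, and the idea of a per-client path list are all assumptions for illustration.

```python
from pathlib import Path


def shared_paths(session_a: list[str], session_b: list[str]) -> list[tuple[Path, Path]]:
    """Return pairs of paths where one session can see into the other's tree."""
    overlaps = []
    for a in (Path(p).resolve() for p in session_a):
        for b in (Path(p).resolve() for p in session_b):
            # Overlap means identical paths, or one path nested inside the other.
            if a == b or a in b.parents or b in a.parents:
                overlaps.append((a, b))
    return overlaps


# Hypothetical inventory: every directory each client session can reach,
# including customisations such as shared context or memory directories.
client_a = ["/work/client-a/repo", "/work/shared/prompts"]
client_b = ["/work/client-b/repo", "/work/shared/prompts/team"]

for a, b in shared_paths(client_a, client_b):
    print(f"isolation broken: {a} overlaps {b}")
```

Here the two repositories are cleanly separated, but the shared prompts directory is reachable from both sessions, which is exactly the kind of customisation that quietly breaks isolation.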

Pattern 2: An allow-list posture, not a deny-list

Claude Code's tool permissions can be configured as "deny what's dangerous" or "allow only what's needed." The correct posture is the second one.

Deny-lists fail open. When something new is introduced (a new shell command, a new integration, a new file path), it bypasses the deny-list until someone notices and adds it. Allow-lists fail closed. Anything not explicitly permitted is blocked.

For regulated environments, this isn't a preference. The ASD's Essential Eight, which APRA now expects organisations to address under CPS 234, includes application control, and application control is an allow-list by design: only approved software runs and everything else is blocked. Claude Code's permission model should align with that expectation.

In practice: for each project or environment, define the minimum tool set Claude Code needs. Read access to the project directory. Write access to the same. The specific shell commands required for the build and test pipeline. Nothing else. Document the justification for any expansion.
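
The fail-closed behaviour can be sketched in a few lines. This is an illustration of the posture only, not Claude Code's actual permission engine: the command list, the function name, and the metacharacter filter are assumptions, and a real policy would need more careful parsing than this.

```python
import shlex

# Hypothetical minimum tool set for one project: each entry is a command prefix.
ALLOWED_COMMANDS = [
    ["npm", "test"],
    ["npm", "run", "build"],
    ["git", "status"],
]

# Characters that could chain or substitute a second command onto an allowed one.
SHELL_METACHARACTERS = set(";&|`$<>()\n")


def is_permitted(command_line: str, allowed=ALLOWED_COMMANDS) -> bool:
    """Fail closed: permit a command only if it starts with an allowed prefix."""
    if SHELL_METACHARACTERS & set(command_line):
        return False
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        return False  # unparseable input is rejected, not waved through
    return any(tokens[: len(prefix)] == prefix for prefix in allowed)


print(is_permitted("npm test"))                  # True
print(is_permitted("curl https://example.com"))  # False
print(is_permitted("npm test && curl x"))        # False
```

Nothing in the function names a dangerous command. A tool that appears next quarter is blocked on day one because nobody has approved it yet, which is the property a deny-list cannot offer.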

Pattern 3: Network egress controls, the gap most teams don't see

This is the pattern that most often surprises engineering teams when they look at it.

Claude Code can make outbound network calls. Not just to Anthropic's API. Depending on the task and the tools configured, it can reach external endpoints.

For teams operating under APRA CPS 234 or the Privacy Act, uncontrolled outbound data egress is not just a configuration gap. If the wrong data leaves the environment, it can become a notifiable incident. An incident response engagement in Australia typically runs at $200–$400/hr for specialist legal and security counsel, plus OAIC notification preparation. A single uncontrolled egress event on a production codebase can cost $50,000–$150,000 to investigate and remediate properly.

The fix: constrain Claude Code sessions to a defined allow-list of destinations. Anthropic's API endpoint, your internal registries and tooling, nothing else. Most teams haven't implemented this because it's not configured by default and it requires infrastructure work. That's exactly why it's the first gap worth closing.
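
The decision logic behind a destination allow-list is simple, even though enforcing it belongs in infrastructure (an egress proxy or firewall) rather than in application code. A minimal sketch of that logic, assuming `api.anthropic.com` as the API host and a hypothetical internal registry hostname:

```python
from urllib.parse import urlsplit

ALLOWED_HOSTS = {
    "api.anthropic.com",          # Anthropic's API
    "registry.internal.example",  # hypothetical internal package registry
}


def egress_permitted(url: str, allowed_hosts=ALLOWED_HOSTS) -> bool:
    """Permit an outbound request only to an exact, explicitly listed HTTPS host."""
    try:
        parts = urlsplit(url)
    except ValueError:
        return False  # malformed URLs are rejected
    if parts.scheme != "https":
        return False
    # Exact match on the parsed hostname, so look-alike domains do not pass.
    return (parts.hostname or "") in allowed_hosts


print(egress_permitted("https://api.anthropic.com/v1/messages"))    # True
print(egress_permitted("https://api.anthropic.com.evil.example/"))  # False
print(egress_permitted("https://pastebin.com/raw/abc"))             # False
```

Matching on the parsed hostname rather than a substring of the URL matters: a naive "contains api.anthropic.com" check would wave the second example through.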

Pattern 4: Filesystem scope

Claude Code's default scope is the current working directory. That's sensible. The risk is in what happens when that default gets expanded without review.

Engineers legitimately need Claude Code to access adjacent directories sometimes: shared libraries, monorepo siblings, config files in parent directories. Each expansion should be deliberate and reviewed, not granted because someone ran Claude Code from a higher directory in the tree.

For teams with secrets in environment files, AWS credentials, or config with embedded API keys, the question is straightforward: can Claude Code read those files? By default, it can if they're in the working directory. Configuring explicit exclusions for sensitive file patterns is a five-minute safeguard. It's not a substitute for proper secrets management, but it's a useful second line.
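
The two checks described here, staying inside the project root and skipping sensitive files, can be sketched as follows. The pattern list, function name, and example paths are assumptions for illustration, and this is not how Claude Code itself implements its exclusions.

```python
from fnmatch import fnmatch
from pathlib import Path

# Hypothetical exclusion patterns; tune these to the secrets your repos actually hold.
SENSITIVE_PATTERNS = [".env", ".env.*", "*.pem", "credentials", "*.tfvars"]


def read_permitted(path: str, project_root: str, patterns=SENSITIVE_PATTERNS) -> bool:
    """Allow a read only inside the project root and outside the exclusion patterns."""
    root = Path(project_root).resolve()
    target = (root / path).resolve()  # resolving collapses any "../" segments
    if target != root and root not in target.parents:
        return False  # the path escapes the project root
    relative = target.relative_to(root)
    # Check every path component, so ".aws/credentials" is caught by "credentials".
    return not any(fnmatch(part, pat) for part in relative.parts for pat in patterns)


print(read_permitted("src/app.py", "/work/repo"))         # True
print(read_permitted(".env.production", "/work/repo"))    # False
print(read_permitted("../other-client/x", "/work/repo"))  # False
```

Resolving the path before checking it is the important design choice: it means a request cannot climb out of the project with `../` segments or an absolute path.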

When these patterns add more cost than they remove

Not every team needs all four patterns implemented immediately.

If you're a 12-person startup with a single product, no customer PII in the codebase, and no regulatory obligations beyond standard consumer law, patterns 1 and 4 are a reasonable starting point. Add network egress controls when customer data enters the codebase.

The full setup earns its cost quickly in a few specific situations:

Multiple client projects. Any agency or consultancy running Claude Code across client engagements. Isolation is not optional.

APRA-regulated entities. CPS 234 creates documented expectations around technology controls. 'Our developers used Claude Code without egress controls' is an audit finding waiting to happen.

SOCI Act operators. Engineering tooling with access to operational systems is an active audit question for critical infrastructure operators.

Teams with offshore developers. Data residency and cross-jurisdiction access controls become audit questions fast.

If none of those apply, calibrate accordingly. Sandboxing has an implementation cost. It's worth paying where the regulatory exposure is real.

The compliance dividend

APRA CPS 234, the Privacy Act, and the SOCI Act all create technology operator obligations that now include engineering tooling. "Our developers were running Claude Code on production codebases without documented controls" is exactly the kind of finding that surfaces at your next external audit.

Good sandboxing posture flips this. A documented allow-list, network egress policy, and filesystem boundary review give your security team something concrete to show. "Every Claude Code session operates under a documented sandbox policy, reviewed quarterly" is the sentence your CISO wants to write in the next board risk report.

The engineering work is not particularly complex. The ongoing effort sits in the oversight: documenting, reviewing, and updating the allow-lists as your tooling evolves. That work belongs in your existing change management process, not in a separate Claude Code compliance exercise.

The teams that get this right aren't doing more work. They're doing the security review before the incident rather than after it.