Most Australian enterprise AI deployments look successful at the six-month review. Adoption is up. The tools are being used. Someone has put a productivity slide in the board deck. But the gains are thin, and they are flattening rather than compounding.

The enterprises pulling ahead are not doing wildly different things. They are executing on three specific pillars consistently, and using a deployment surface that supports all three simultaneously. Here is what each pillar looks like when it is working, and where most Australian organisations are actually sitting.

Pillar one: Cross the agentic thinking divide

Most AI rollouts stall not because the model is bad but because the workforce treats it like an improved search engine. Ask a question, get an answer, close the tab. No process changes. The tool is marginally faster than Google.

That is not compounding. That is a better notepad.

Compounding gains require a different mental model: thinking in agents. The question is not “what can I ask Claude?” but “what task can run autonomously overnight, what workflow can be encoded once and reused ten thousand times, what recurring process should never touch a human hand again?” The mental model shift is harder than the technical one. It is also the one most enterprises skip.

A handful of Sydney financial services firms and a few mid-market retailers have made this shift. The diagnostic is straightforward: if Claude is helping your teams do the same tasks better one query at a time, you are in the typewriter phase. If Claude is running recurring workflows (summarising overnight, drafting before someone opens their laptop, filtering before anyone’s time is spent), you are in the compounding phase. The gap between those two groups is growing faster than anyone admits at industry events.

Claude Cowork is built around this frame. Teams build and share Skills, which are reusable, context-aware agent behaviours tied to specific workflows, rather than firing ad-hoc queries. That structural difference is what separates a tool your team uses from a system your team builds on.

In Cowork terms, a Skill is a reusable, shareable agent configuration. A contract review Skill, for example, does not just answer questions about contracts. It runs through a standard checklist, flags the clauses your lawyers care about, and outputs a review in your firm’s format. Once one person has built it, the whole team uses it. That is how institutional knowledge compounds: not by asking the same question better, but by encoding the question once and never having to ask it again.

Pillar two: Encode institutional knowledge into systems

Generic AI knows how work is supposed to happen. Your organisation knows how your work actually happens. That delta is where competitive advantage lives, and most enterprise deployments throw it away by defaulting to generic off-the-shelf implementations.

Encoding institutional knowledge means more than uploading a policy document and hoping Claude reads it. It means custom Skills built around your actual processes. MCP servers wired to your internal tools and data sources so the agent has live context. Agent definitions that capture the real judgment calls your senior people make: how your risk team actually prioritises, not how a generic compliance framework says they should.

Generic implementations don’t compound. Bespoke ones do. The closer the implementation is to how your organisation actually works, the more it compounds, and the gap between the two widens every quarter.

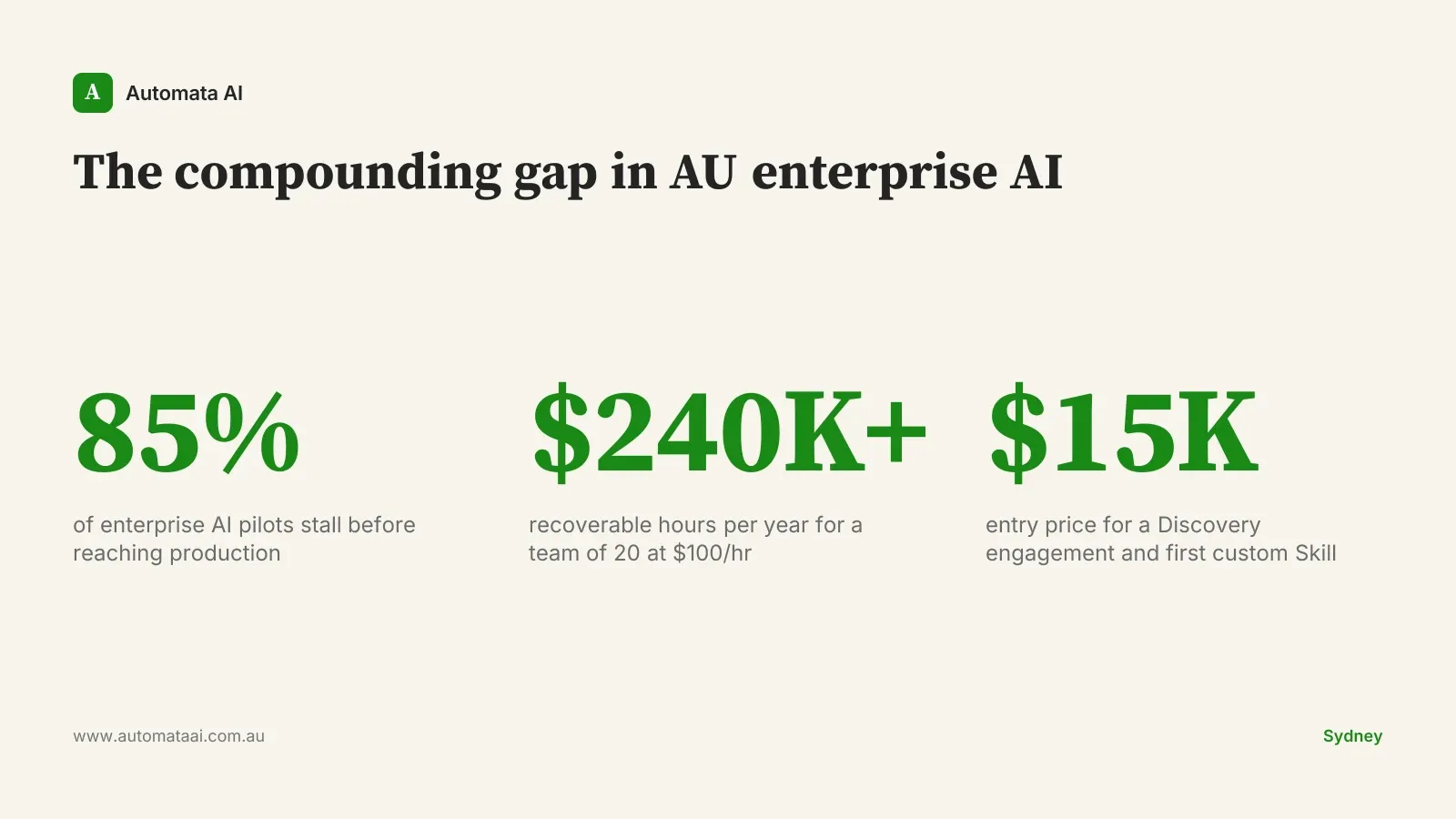

The cost model makes this concrete. A team of 20 knowledge workers each spending 30 minutes per day on recoverable tasks (document review, status updates, manual lookups) loses about 10 hours a day in total, which comes to roughly $240,000 to $280,000 per year at a fully loaded rate of $100 to $120 per hour. A Discovery engagement to map one core process and build the first custom Skill starts at $15,000 to $20,000. The payback window on the first well-chosen process is typically two to four months.
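The arithmetic behind the cost model can be sketched in a few lines of Python. This is a rough illustration, not a pricing tool: the function names are hypothetical, and two inputs are assumptions not stated above, namely the number of working days per year (235 here) and the share of recoverable time that the first Skill actually recovers (a quarter here).

```python
# Illustrative sketch only: the function names, the working-days figure,
# and the capture share are assumptions, not values from the article.

def annual_recoverable_value(workers, minutes_per_day, working_days, hourly_rate):
    """Dollar value of recoverable time across a team for one year."""
    hours_per_year = workers * (minutes_per_day / 60) * working_days
    return hours_per_year * hourly_rate


def payback_months(engagement_cost, annual_value, capture_share):
    """Months to recoup the engagement cost if the first Skill recovers
    `capture_share` (between 0 and 1) of the annual recoverable value."""
    if not 0 < capture_share <= 1:
        raise ValueError("capture_share must be greater than 0 and at most 1")
    monthly_recovered = annual_value * capture_share / 12
    return engagement_cost / monthly_recovered


WORKING_DAYS = 235  # assumed; adjust for leave and public holidays

low = annual_recoverable_value(20, 30, WORKING_DAYS, 100)
high = annual_recoverable_value(20, 30, WORKING_DAYS, 120)
print(f"Recoverable value: ${low:,.0f} to ${high:,.0f} per year")

# Assumed: the first Skill recovers a quarter of the recoverable time.
months = payback_months(15_000, 240_000, capture_share=0.25)
print(f"Payback: {months:.1f} months")
```

Under these assumptions the recoverable value comes out at $235,000 to $282,000 per year, close to the range quoted above, and a $15,000 engagement pays back in 3.0 months. Changing the capture share is the quickest way to test how sensitive the payback window is to the choice of first process.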

Pillar three: Build new revenue, not just cut existing costs

The biggest strategic miss in Australian AI deployments is the fixation on cost takeout.

Every CFO in Sydney and Melbourne loves a cost-reduction story. It is measurable, attributable, and presents well at a board meeting. It also gets copied quickly. If you save $200,000 a year by automating a compliance review process, your competitors are three months behind you and then they catch up. Cost reduction gives you a temporary lead. It is the floor of what AI can do for an organisation, not the ceiling.

The compounding plays are new product capabilities. Services your business can now offer because AI is in the stack. Not cost stories. Revenue stories. The organisations that have worked this out are not debating whether AI justifies the spend. They are debating how fast to scale.

Most Australian mid-market businesses have not reached this pillar. They are still modelling year-one cost savings and have not projected what years two and three look like when the capability is embedded in client-facing products, pricing models, or services they could not previously offer.

When this approach is the wrong first move

Not every Australian enterprise is in the right position to deploy Claude Cowork and see compounding results. Three situations where a different path makes more sense:

No baseline AI literacy. If teams are still being briefed on what a large language model does, Cowork is the wrong surface. The first six weeks will go to change management. A structured AI literacy program comes before the deployment platform.

No one who will own the Skills. If there is no internal person who can write a clear prompt, document a process, and iterate a Skill when the workflow changes, the initial lift will stall within a quarter. The tool compounds when someone tends it.

Your highest-ROI opportunity is one narrow task. Invoice reconciliation at scale, contract clause extraction on a fixed template. These are purpose-built integration problems, not Cowork problems. Build a targeted automation and deploy the workforce platform when the problem is broader.

A six-month deployment frame for Australian enterprise

For Australian enterprises with solid AI literacy, functional governance, and at least one internal champion who understands the agentic model, Cowork is the fastest deployment surface to get most teams active and building.

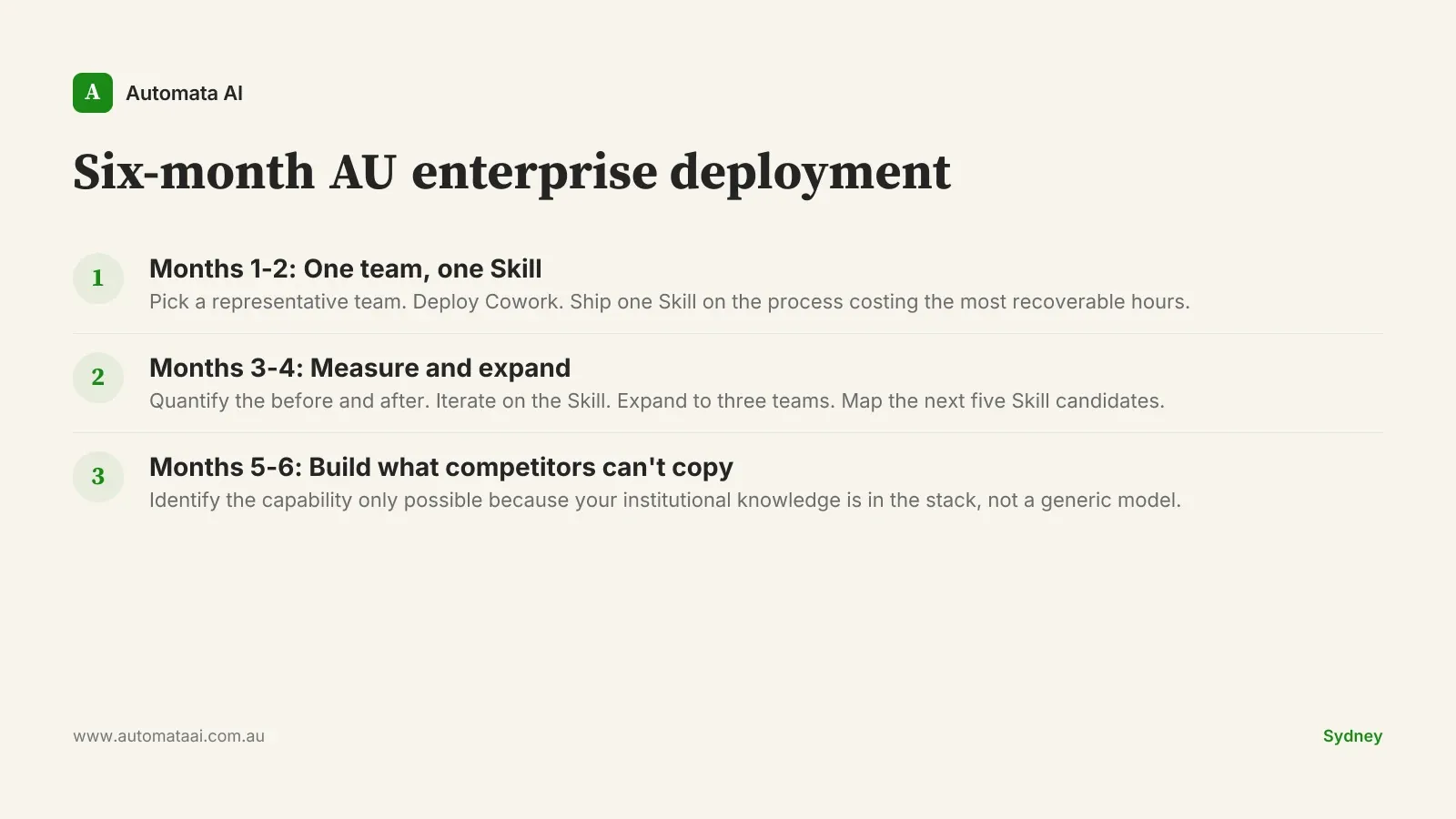

Months one and two: pick one team (not necessarily the most tech-literate in the organisation, but a representative one), deploy Cowork, and ship one custom Skill built around the process costing the most recoverable time. Measure before and after. Not impressions. Time per task, error rate, volume processed.

Months three and four: iterate on the Skill based on what the data shows, expand to three teams, and map which processes across those teams are candidates for Skills two through five.

Months five and six: identify the capability that competitors cannot copy because it is built on your institutional context, not a generic model.
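The before-and-after measurement in months one and two does not need special tooling. A minimal sketch, assuming you can count tasks, errors, and average handling time for the same process before and after the Skill ships, might look like the following. The class name, field names, and sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TaskMetrics:
    """One measurement window for a single process (hypothetical shape)."""
    minutes_per_task: float
    errors: int
    tasks_processed: int

    @property
    def error_rate(self) -> float:
        # Share of processed tasks that contained an error.
        if self.tasks_processed == 0:
            return 0.0
        return self.errors / self.tasks_processed


def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, as a percentage."""
    if before == 0:
        raise ValueError("baseline must be non-zero to compute a change")
    return (after - before) / before * 100


def compare(before: TaskMetrics, after: TaskMetrics) -> dict:
    """Percentage change in the three measures named in the text."""
    return {
        "minutes_per_task": percent_change(before.minutes_per_task, after.minutes_per_task),
        "error_rate": percent_change(before.error_rate, after.error_rate),
        "tasks_processed": percent_change(before.tasks_processed, after.tasks_processed),
    }


# Made-up sample figures, for illustration only.
baseline = TaskMetrics(minutes_per_task=40, errors=10, tasks_processed=200)
with_skill = TaskMetrics(minutes_per_task=25, errors=6, tasks_processed=300)

for name, change in compare(baseline, with_skill).items():
    print(f"{name}: {change:+.1f}%")
```

Keeping the three measures separate matters: a Skill that cuts time per task while raising the error rate is not a win, and a single blended score would hide that.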

APRA-regulated financial services businesses have the most to gain from moving early on pillar two. The compliance surface area is high enough that custom Skills built on your own risk frameworks carry genuine defensibility. A generic AI deployment does not have your policies. Yours does.

The businesses that treat AI as a cost lever in year one and a capability lever in year two compound. The ones that stop at cost reduction hit a ceiling, and that ceiling typically arrives within twelve months. The next decision is not whether to deploy more AI. It is what you want to be able to do in year two that you cannot do today.