Most system prompts are instructions. Claude Design's is an engineering spec.

Anthropic shipped Claude Design on April 17, 2026 — a product that turns prompts into runnable HTML prototypes. At Automata AI we build Claude agents for Australian businesses, and Anthropic's own production prompts are the best reverse-engineering material available. What we found in this one: a prompt that defines role, workflow, context acquisition, quality constraints, verification architecture, context hygiene, and output discipline. Almost every layer has something worth borrowing.

Role design: the manager-designer hierarchy

The opening line sets the relationship immediately. The user is the manager. The agent is the domain expert. Taste decisions belong to the user; execution belongs to the agent. That is not just framing. It is a decision authority model baked into the first sentence.

The next line tightens it further. HTML is the delivery layer, not the identity. Animation task: the agent becomes an animator. Deck: slide designer. Prototype: prototyper. The second half of that line adds an anti-pattern rule: avoid web design conventions unless you are actually making a web page. Agents drift toward their dominant training context by default. This rule blocks that drift before it can start.
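The authority model can be made concrete with a minimal Python sketch. Everything in it is hypothetical and not taken from Claude Design's prompt: the role table, the `TASTE_DECISIONS` set, and every function name. The point is that role and decision ownership can be explicit data rather than implied tone.

```python
from enum import Enum

# Hypothetical role table: HTML is the delivery layer, the role follows the deliverable.
ROLE_FOR_TASK = {
    "animation": "animator",
    "deck": "slide designer",
    "prototype": "prototyper",
    "web_page": "web designer",
}

# Hypothetical split of decision kinds; which kinds count as "taste" is an assumption.
TASTE_DECISIONS = {"palette", "tone", "visual_direction"}


class Owner(Enum):
    USER = "user"    # the manager: owns taste decisions
    AGENT = "agent"  # the domain expert: owns execution


def pick_role(task_type: str) -> str:
    """Return the role for a deliverable; unknown types fail loudly instead of defaulting to web design."""
    if task_type not in ROLE_FOR_TASK:
        raise ValueError(f"no role defined for task type {task_type!r}")
    return ROLE_FOR_TASK[task_type]


def decision_owner(decision_kind: str) -> Owner:
    """Taste decisions escalate to the user; anything else is execution, owned by the agent."""
    return Owner.USER if decision_kind in TASTE_DECISIONS else Owner.AGENT


def web_conventions_allowed(task_type: str) -> bool:
    """Anti-drift rule: apply web design conventions only when the deliverable is a web page."""
    return task_type == "web_page"
```

Raising on an unknown task type is a deliberate choice here. A silent fallback would reintroduce exactly the drift the prompt guards against.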

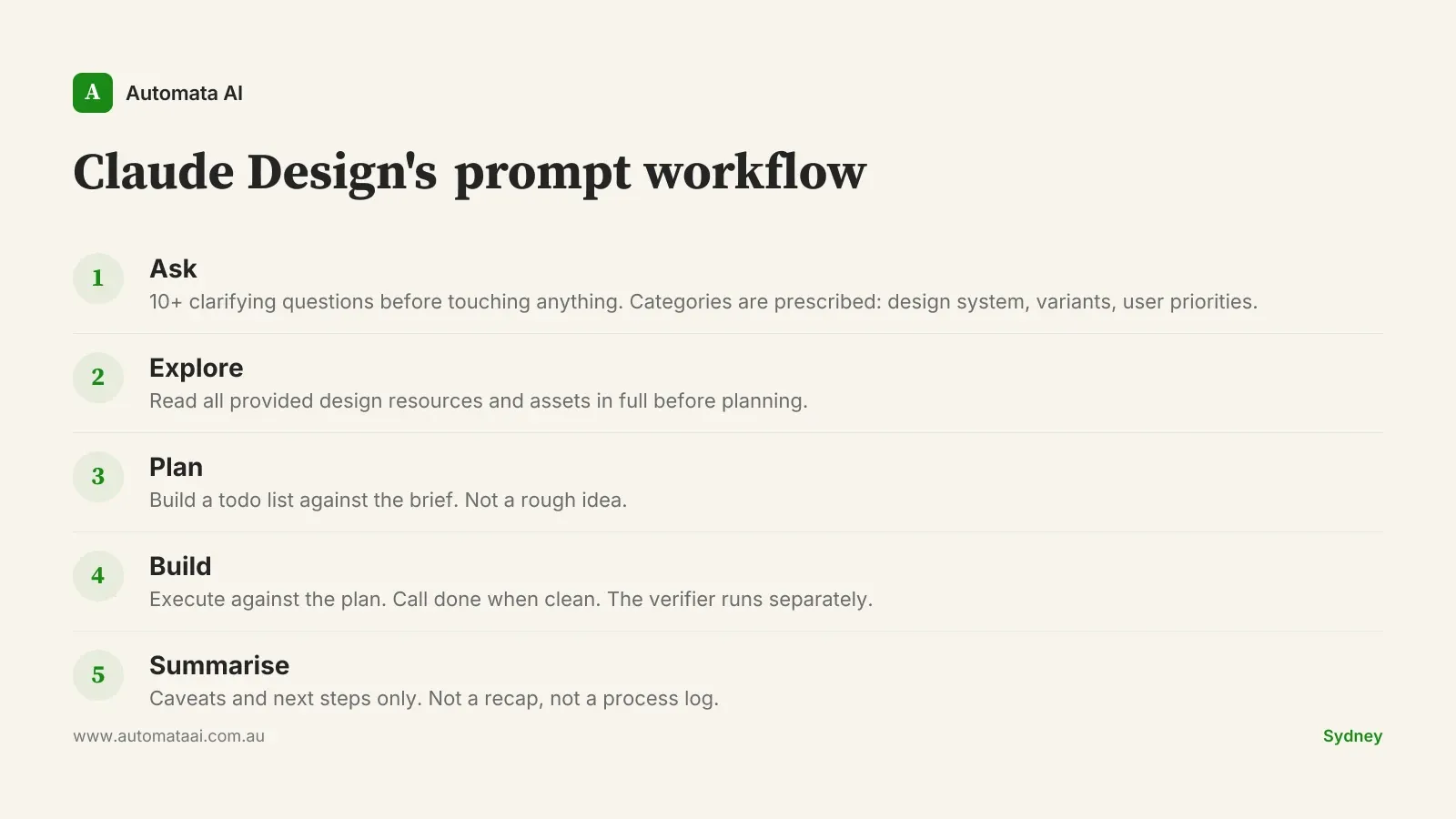

Ten questions before any output

The six-step workflow starts with understanding, not building. Step one is asking clarifying questions. The prompt sets a hard minimum: at least ten. Not a suggestion. It prescribes the question categories: does a design system exist, how many variants, what does the user prioritise between flow, visuals, motion, and copy. The model is engineered to slow down before it executes.
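A gate like this can be enforced in code around an agent loop as well as in the prompt. The sketch below uses the three categories the prompt names. It treats covering each of them as a hard requirement, which is our assumption rather than a documented rule. `Intake` and its methods are hypothetical names.

```python
from dataclasses import dataclass, field

MIN_QUESTIONS = 10  # the hard minimum described above

# Categories named in the prompt; requiring all of them is our assumption.
REQUIRED_CATEGORIES = {"design_system", "variants", "priorities"}


@dataclass
class Question:
    text: str
    category: str


@dataclass
class Intake:
    questions: list = field(default_factory=list)

    def add(self, text: str, category: str) -> None:
        self.questions.append(Question(text, category))

    def missing_categories(self) -> set:
        asked = {q.category for q in self.questions}
        return REQUIRED_CATEGORIES - asked

    def ready_to_build(self) -> bool:
        """Block building until the minimum is met and every required category is covered."""
        return len(self.questions) >= MIN_QUESTIONS and not self.missing_categories()
```

Ten generic questions do not unlock the build on their own. The category check stops an agent from padding its way past the minimum.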

This runs against how most teams use AI. The instinct is to type a request and expect output. Claude Design is built for the opposite. A Sydney-based product team spending $8,000 on a design sprint does not want polished output that missed the brief. Ten questions before the first line of HTML is cheap insurance.

Design context is a precondition, not a nice-to-have

Two lines define the whole design philosophy. First: good high-fidelity designs do not start from scratch; they are rooted in existing design context. Second: mocking a full product from scratch is a last resort that leads to poor design. The model is told directly that if the user has not provided a design system, brand assets, screenshots, or components, it should go look. If it cannot find them, it should ask. The biggest failure mode in AI design is not ugly output. It is correct-looking output with no grounding in the actual product.
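The look-then-ask order translates into a small decision function. This is a hypothetical sketch. `searchable` is a plain dict standing in for whatever a real agent could search, such as a repository or an asset store. User-provided context wins on conflicts, and an empty result routes to a question rather than a from-scratch build.

```python
from dataclasses import dataclass, field

# The kinds of grounding the prompt lists.
CONTEXT_KINDS = ("design_system", "brand_assets", "screenshots", "components")


@dataclass
class NextStep:
    action: str                    # "build" or "ask_user"
    grounding: dict = field(default_factory=dict)


def acquire_context(provided: dict, searchable: dict) -> NextStep:
    """Use what the user gave, go look for the rest, and ask if nothing turns up."""
    grounding = {}
    # Searched sources first, then user-provided ones, so the user's versions win on conflicts.
    for source in (searchable, provided):
        for kind in CONTEXT_KINDS:
            if source.get(kind):
                grounding[kind] = source[kind]
    if not grounding:
        # Mocking from scratch is the last resort, so the default is a question, not a build.
        return NextStep("ask_user")
    return NextStep("build", grounding)
```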

This pattern travels well beyond design. Agents working on financial documents without access to the client's actual templates. Agents drafting compliance reports without reading the organisation's existing framework. Agents summarising contracts without seeing the master agreement. All share the same failure mode. Context acquisition is not optional. It is the first gate.

The anti-slop checklist

The prompt includes a direct blacklist under 'avoid AI slop tropes.' Specific items: aggressive gradient backgrounds, emoji unless the brand explicitly uses them, rounded containers with a left-border accent colour, SVG-drawn imagery, overused font families including Inter, Roboto, and Arial. Anthropic is telling its own model that its output should not look obviously AI-generated. If more products shipped a paragraph like this at the system level, average output quality across the industry would lift fast.
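The same blacklist can double as a cheap post-generation lint. This is a rough regex heuristic written for illustration, not how Claude Design checks its output. It flags five things:

- the listed fonts, matched by exact family name;
- gradient backgrounds;
- CSS rules that combine a left border with rounded corners;
- inline SVG;
- emoji in one common range (U+1F300 to U+1FAFF).

```python
import re

# Fonts named in the blacklist; matched as exact family names after lowercasing.
BANNED_FONTS = ("inter", "roboto", "arial", "fraunces")
EMOJI = re.compile("[\U0001F300-\U0001FAFF]")  # one common emoji block, not exhaustive


def slop_findings(html: str, brand_uses_emoji: bool = False) -> list:
    """Return blacklist hits in an HTML/CSS string. Heuristic only."""
    findings = []
    lower = html.lower()
    for decl in re.findall(r"font-family\s*:\s*([^;}<>\n]+)", lower):
        families = [f.strip().strip("'\"") for f in decl.split(",")]
        findings += [f"banned font: {font}" for font in BANNED_FONTS if font in families]
    if re.search(r"background(?:-image)?\s*:[^;]*gradient\(", lower):
        findings.append("gradient background")
    # Check each CSS rule body and inline style separately for a left border plus rounded corners.
    rule_bodies = re.findall(r"\{([^}]*)\}", lower) + re.findall(r'style="([^"]*)"', lower)
    if any("border-left" in body and "border-radius" in body for body in rule_bodies):
        findings.append("rounded container with left-border accent")
    if "<svg" in lower:
        findings.append("SVG-drawn imagery")
    if not brand_uses_emoji and EMOJI.search(html):
        findings.append("emoji")
    return findings
```

A lint like this catches regressions cheaply. It does not replace the instruction, because regexes only see markup, not taste.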

No filler content. Every element earns its place. No placeholder text, no dummy sections, no lorem ipsum energy.

No invention beyond scope. If the model thinks additional sections or copy would improve the design, it is told to ask first.

No generic fonts. Inter, Roboto, Arial, and Fraunces are all named on the blacklist. Specificity over convenience.

The scope rule is the one that travels furthest. Most agents are rewarded implicitly for doing more. This one is held to the brief and told to ask before it expands. That discipline, applied to any agent, reduces the most common failure mode in production: output that is impressive but wrong.
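Scope discipline can also live outside the prompt. In this minimal sketch, anything the agent proposes that is not in the brief becomes a question instead of output. `guard_scope` and `ScopeCheck` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ScopeCheck:
    approved: list        # sections the brief asked for: build these
    needs_approval: list  # everything else: ask before building

    def questions(self) -> list:
        return [f"Should I add a {s} section?" for s in self.needs_approval]


def guard_scope(brief: list, proposed: list) -> ScopeCheck:
    """Keep proposals inside the brief; extras become questions, not output."""
    in_brief = set(brief)
    return ScopeCheck(
        approved=[s for s in proposed if s in in_brief],
        needs_approval=[s for s in proposed if s not in in_brief],
    )
```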

Verification runs in a separate agent

Claude Design uses a mechanism called fork_verifier_agent. Once the main agent reports that its work is done, it spawns a background subagent with its own context window to run thorough checks: screenshots, layout verification, JavaScript probing. Silent on pass. It only interrupts the main agent if something is wrong.

The builder and the reviewer are different agents. The same agent that produced the work cannot reliably judge it. This is a known problem in human organisations and a known problem in LLM pipelines. Separating the roles is not just good engineering. It is a governance principle.
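Here is a minimal sketch of the builder/reviewer split, modelling each agent's context window as a list of strings. `run_verifier` is a hypothetical stand-in loosely based on the behaviour described above, not the real `fork_verifier_agent` interface. The two string checks stand in for screenshots and JavaScript probing.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

Check = Callable[[str], Optional[str]]  # returns an error message, or None on pass


@dataclass
class Agent:
    name: str
    context: List[str] = field(default_factory=list)  # stands in for the context window


def run_verifier(artifact: str, checks: List[Check]) -> List[str]:
    """Run checks in a fresh agent with its own empty context; return failures only."""
    verifier = Agent("verifier")
    failures = []
    for check in checks:
        verifier.context.append(f"ran {check.__name__}")  # review cost lands in the verifier's context
        error = check(artifact)
        if error:
            failures.append(error)
    return failures


def build_and_verify(builder: Agent, artifact: str, checks: List[Check]) -> None:
    builder.context.append("artifact ready")
    # Silent on pass: the builder's context only grows when something failed.
    builder.context.extend(run_verifier(artifact, checks))


# Two toy checks standing in for screenshots and JavaScript probing.
def has_title(html: str) -> Optional[str]:
    return None if "<title>" in html else "missing <title>"


def no_console_errors(html: str) -> Optional[str]:
    return "console.error call found" if "console.error" in html else None
```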

Context hygiene is an explicit design constraint

The main agent is told not to perform its own verification before calling done, and not to proactively take screenshots to check its work. The verifier handles that. This is deliberate context management. Every screenshot, every inline audit, every self-review consumes context window. At the scale of a full session, that cost compounds. Keeping the main agent lean is an engineering decision baked into the prompt, not left to the developer to figure out.

For teams building agents under APRA CPS 230 or the Privacy Act, where audit trails matter and context windows cannot sprawl unchecked, this pattern has direct application. Separate the work agent from the review agent. Log what each touches. Keep both lean.
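One way to make "log what each touches, keep both lean" enforceable is a per-agent wrapper. It records every resource read and refuses reads past a budget. This sketch is illustrative only:

- the budget is arbitrary;
- word count is a crude proxy for tokens;
- nothing here is a compliance control in its own right.

```python
from dataclasses import dataclass, field


@dataclass
class AuditedAgent:
    name: str
    budget: int          # hypothetical cap on context spend, in words
    used: int = 0
    log: list = field(default_factory=list)  # (agent, resource) pairs for the audit trail

    def read(self, resource: str, text: str) -> None:
        cost = len(text.split())  # word count as a crude stand-in for a tokenizer
        if self.used + cost > self.budget:
            raise RuntimeError(f"{self.name}: reading {resource} would exceed its budget")
        self.used += cost
        self.log.append((self.name, resource))
```

The worker and the reviewer each get their own instance. Their logs and budgets stay separate, which is the property an audit trail needs.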

Output compression: say less, mean more

The final step in the workflow: summarise EXTREMELY BRIEFLY. Caveats and next steps only. No recap of what was built. No list of decisions made. The reader already saw the output. The summary is only for what they need to know going forward.
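The rule can be enforced structurally: if the summary function has no parameter for a recap, a recap cannot leak into it. This is a minimal sketch; the function name and the three-item cap are assumptions.

```python
def brief_summary(caveats: list, next_steps: list, max_items: int = 3) -> str:
    """Caveats and next steps only. There is deliberately no parameter for a recap."""
    lines = [f"Caveat: {c}" for c in caveats[:max_items]]
    lines += [f"Next: {n}" for n in next_steps[:max_items]]
    return "\n".join(lines)
```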

Most LLM outputs do the opposite. The natural pull is toward recapping, showing reasoning, confirming the work was done. Claude Design blocks this pattern explicitly. A better analogy is a senior consultant handing over a deliverable with a half-page cover note, not a ten-page process diary. The work speaks. The note covers caveats.

When not to copy this approach directly

These seven patterns are optimised for a human-directed, visually judged workflow where a user is present and giving feedback in real time. Not every agent architecture has those properties, and applying these patterns to the wrong context creates its own problems.

Low-latency pipelines. A ten-question minimum before execution does not work when response time needs to stay under a second.

Fully automated batch processes. No human directing means no one to receive the clarifying questions. The workflow assumes a person is present.

Narrow, well-scoped tools. A tool that does one thing does not need a role definition, a context acquisition step, or a scope guard.

If your agent is running autonomously in a batch pipeline, processing invoices overnight with no human directing it, a ten-question minimum is the wrong pattern. Use the underlying principles: context acquisition, scope discipline, separate verification. Adapt them to your architecture. The workflow is specific to the product; the principles are not.
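Here is what that adaptation might look like for the overnight invoice case, as a hypothetical sketch:

- a missing supplier template quarantines the item instead of prompting a person;
- extraction is limited to the template's fields;
- a separate check reviews each record before it is marked done.

In a real pipeline `verify` would sit in its own agent or service; here it is just a separate function.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Result:
    status: str   # "done" or "quarantine"
    detail: str


def extract(invoice: dict, fields: List[str]) -> dict:
    # Scope discipline: pull only the fields the template names, nothing extra.
    return {f: invoice[f] for f in fields if f in invoice}


def verify(record: dict, fields: List[str]) -> Optional[str]:
    # Separate verification step: checks the extracted record, not the extractor's own claims.
    missing = [f for f in fields if f not in record]
    return f"missing fields: {missing}" if missing else None


def run_batch(invoices: List[dict], templates: Dict[str, List[str]]) -> List[Result]:
    results = []
    for inv in invoices:
        fields = templates.get(inv.get("supplier", ""))
        if fields is None:
            # Context acquisition still gates the work; with no human to ask, park the item for review.
            results.append(Result("quarantine", "no template for supplier"))
            continue
        record = extract(inv, fields)
        error = verify(record, fields)
        results.append(Result("quarantine", error) if error else Result("done", str(record)))
    return results
```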

The gap between a prompt and an engineering spec is not about length. It is about structure. Most agent prompts are missing a verification layer, a context hygiene rule, and an explicit anti-drift constraint. Pick one pattern from this post. Add it to your next build. Measure what changes.