Most state and federal agencies running citizen-facing information services have already made the same call: keep AI away from anything election-adjacent. The political risk looks asymmetric. The compliance questions feel unanswerable. If the model gives a citizen bad information about a candidate, it ends up on the front page.

That instinct might be right for some specific use cases. It is the wrong default applied to all of them.

What Anthropic's election safeguards actually say

In late April, Anthropic released an update to Claude's election safeguards, covering the policies, training choices, and product controls that govern how Claude responds to questions about candidates, voting logistics, parties, and politically contested issues. The update was framed around the US context, but the design decisions apply to any deployment, including Australian government and civic-tech services.

The core principle: when users ask Claude about political topics, the model gives balanced, accurate responses. Responses designed to help users reach their own conclusions rather than steer them toward a particular view. This is encoded in character training, not layered on at the prompt level.

Two examples that clarify the boundary. A citizen asking how the Senate count works gets a factual explanation of Australia's preferential voting system. A user asking Claude to write a campaign speech arguing that voters should choose a particular party gets a refusal. The model is designed to inform without influencing.

For AU government buyers evaluating AI for citizen services, this framing matters more than the features list.

Balance, not avoidance

The instinct inside government communications teams is to configure AI for maximum avoidance on anything politically sensitive. That instinct is understandable. It also produces a worse outcome than most teams realise. A system that refuses all election queries forces the citizen to the AEC website, the call centre, or a search result that may be outdated. The AI is still touching their decision-making process. Just via the absence of useful information rather than the presence of it.

A citizen using a government information service during an election cycle has legitimate questions. How does preferential voting work? When do pre-poll booths open? What ID do I need at a polling place? What does the major parties' housing policy actually say? An AI that refuses to engage with any question touching a political party or policy position is useless for a meaningful share of the queries you actually deployed it to handle.

Anthropic's approach separates these cleanly. Factual, administrative, and procedural political questions are handled. Claude is trained to decline requests to generate campaign content, produce voter-influence analysis, or amplify one party's messaging over another. That distinction is the right one for AU government buyers to build their governance framework around. It also tells you where the genuine risk lives: not in the information-delivery use case, but in the persuasion use case.

Three things AU buyers need to act on now

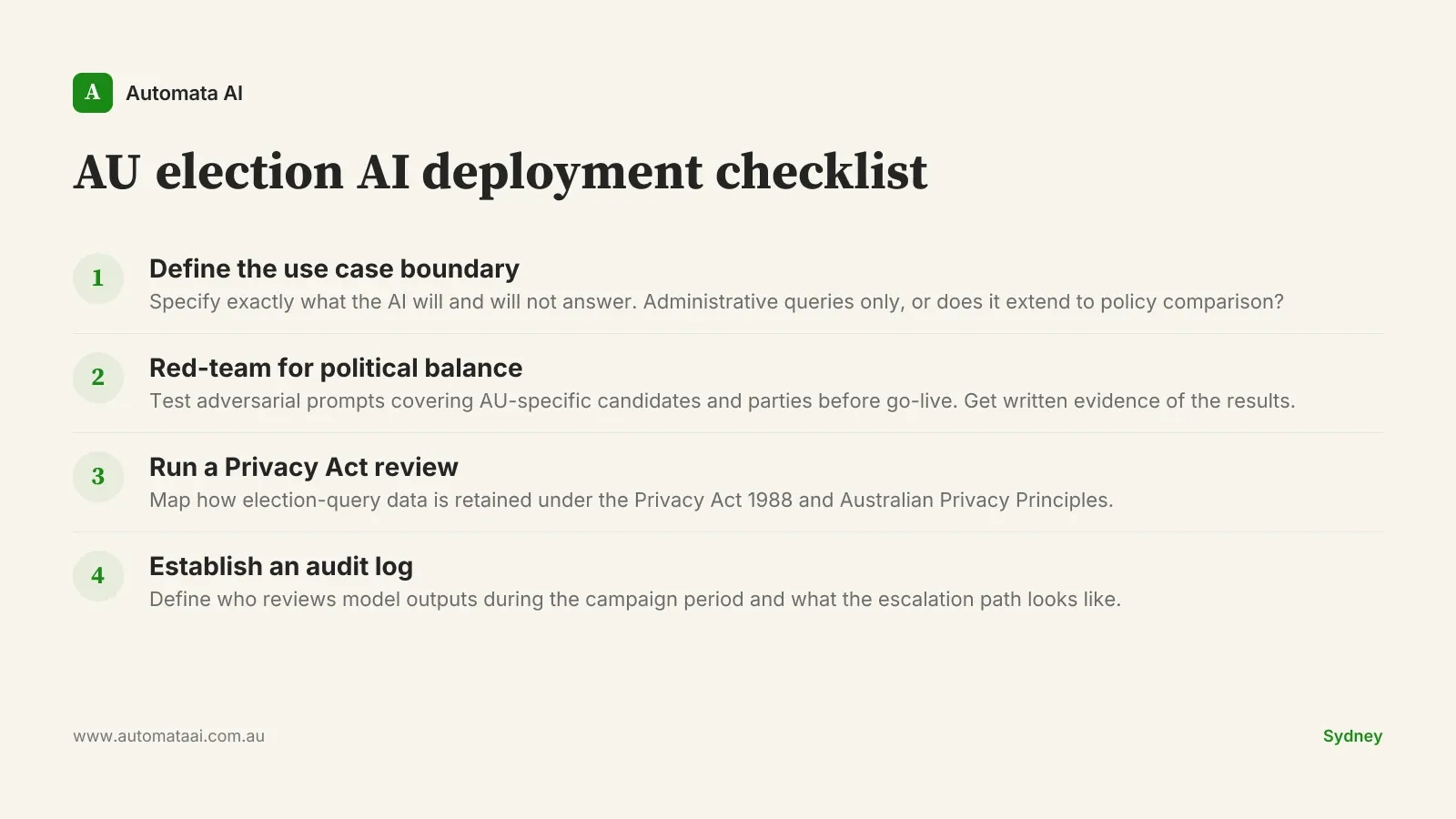

Election logistics is a production-ready use case. Preferential voting mechanics, polling booth locations, pre-poll opening times, how-to-vote card basics, and what counts as informal. These are administrative questions. A well-configured deployment handles them better than a static FAQ page and at a fraction of call-centre volume. An agency with prior chatbot experience can run this as an Accelerator engagement ($60,000–$120,000) and have it live for the campaign period.

Political balance needs to be tested, not assumed. Anthropic publishing a principle is step one. What procurement should require is evidence: red-teaming logs showing how the model responds to partisan prompts, adversarial test cases covering AU-specific political topics, documented override behaviour when users push toward advocacy. If a vendor cannot produce this, treat the principle as aspirational.

The Privacy Act creates a real constraint on voter interaction data. If a civic-tech product captures queries about political topics from identified users, that data has implications under the Privacy Act 1988 and the Australian Privacy Principles. Any deployment handling election-related queries at scale needs a data handling review before go-live. A privacy impact assessment for a new AI service typically runs $8,000–$20,000 when conducted by external counsel.

When this is the wrong deployment

There are clear cases where AI in an election context is the wrong call. They are worth naming directly, because the governance discussion tends to focus on defensible use cases rather than the ones that should not proceed.

If the use case involves generating content for a political party, drafting voter-targeting copy, or creating anything designed to persuade rather than inform, these safeguards are not built for that purpose. Deploying Claude in that context produces unreliable results. Anthropic has trained against persuasion use cases deliberately.

If your organisation cannot run a red-team exercise before launch, the deployment is not ready. Not because the model is unsafe by default, but because election-related AI deployments without adversarial testing are, in practice, untested products. The reputational exposure of a model outputting something imbalanced about a candidate during a campaign period is not recoverable. An independent red-teaming engagement runs $15,000 to $40,000. That is the price of knowing before you go live.

And if your agency's risk appetite does not include residual uncertainty, that is a legitimate decision. Know that you are choosing a higher-cost, lower-capability alternative, not a safer one.

The procurement question that actually matters

Government agencies evaluating AI for election-adjacent services are often asking the wrong question. Asking whether AI is safe for political content leads to either a blanket ban or a compliance checkbox exercise. Neither is useful.

The right question: which specific use cases, with which governance controls, under which audit regime, are appropriate for this technology in this context?

Anthropic's election safeguards documentation is one input to that analysis. The AEC's guidance on election information integrity is another. Your agency's own privacy impact assessment process is a third.

The organisations deploying AI well in this cycle are treating governance as a first-class deliverable, built in parallel to the technical work. Not reviewed after the demo looks good.

Procurement and integration timelines for a federal election cycle are shorter than they look. If you are evaluating Claude for election-adjacent deployment, the governance framework needs to be running alongside the technical proof-of-concept. The time to resolve Privacy Act questions, red-team requirements, and use case boundaries is before the campaign period opens. Not during it.