Anthropic published its no-ads commitment in February. Three paragraphs. No fanfare. In most of the Australian enterprise AI evaluations I've sat in on, the announcement hasn't come up at all.

That's a gap worth closing. What looks like a minor product policy is a long-term incentive-alignment statement, and it has implications that procurement teams aren't yet asking about.

The incentive alignment underneath the announcement

Every successful free-or-freemium technology product faces the advertising question eventually. Search did. Email did. Social platforms did. The pressure scales with the user base, and Claude's user base in 2026 is substantial. By publishing an on-the-record commitment that Claude will carry no advertising, no sponsored responses, no product placements, and no third-party influence on what surfaces, Anthropic is making a specific claim about whose interests the model serves.

That claim is: the user's. Not the advertiser's.

For consumer Claude users, that's a reassuring product feature. For enterprise buyers, it's something more specific: a structural guarantee about what the model won't be optimising for when your team uses it to draft contracts, analyse suppliers, summarise compliance documents, or support internal decisions.

The risk an ads-enabled AI platform creates isn't a banner in the sidebar. It's that responses get subtly weighted toward outcomes that serve third-party commercial interests. In a tool embedded in business processes, that kind of drift is not a minor interface problem.

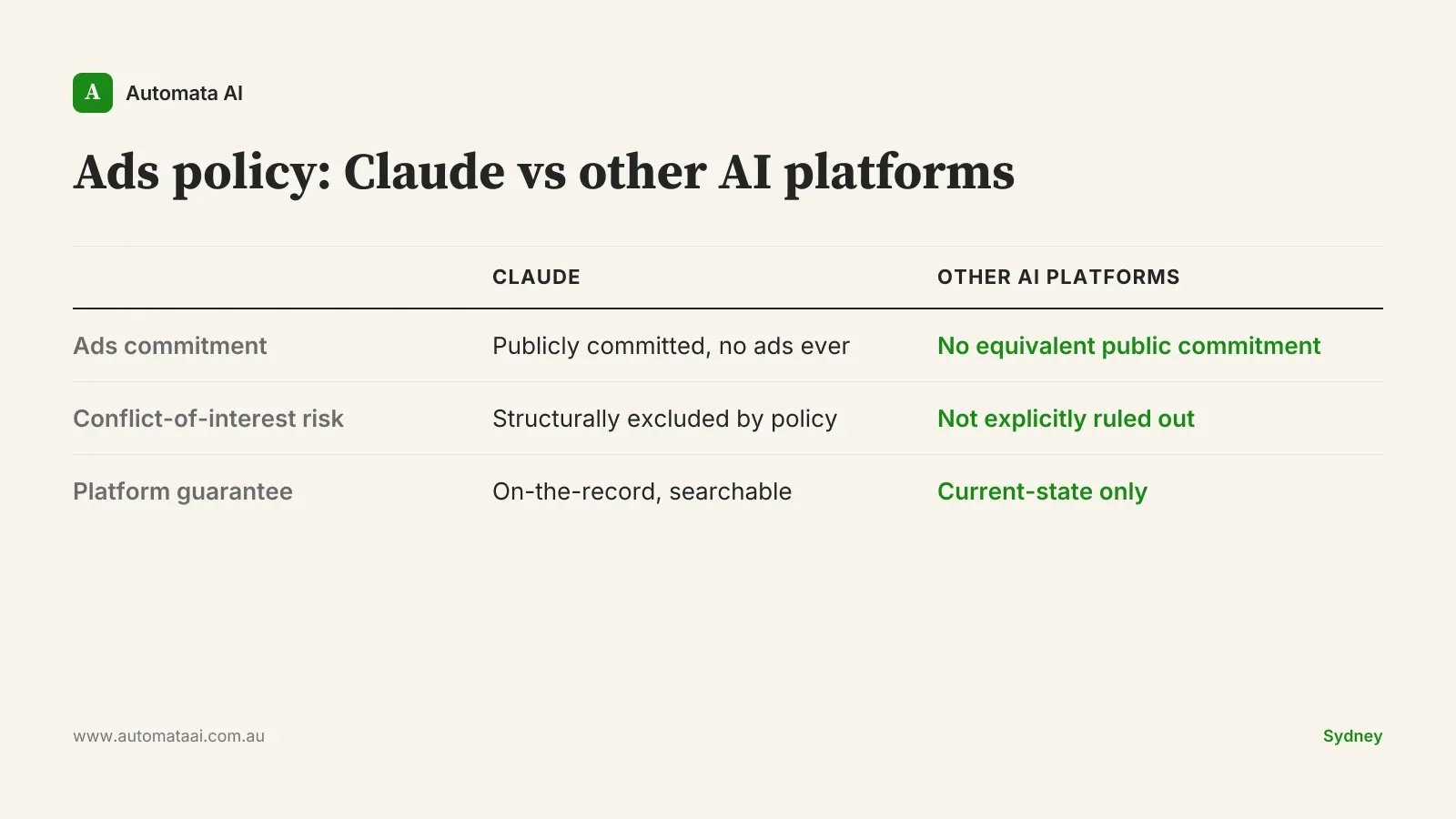

The competitive comparison that now exists

The AI vendor landscape includes platforms that have not made this commitment. Some already run ad-supported consumer tiers. Others have monetisation roadmaps that have not publicly ruled out sponsored content. That's not an accusation. It's an observation that the on-the-record statement Anthropic made is not an industry norm.

For an Australian enterprise running a multi-year platform evaluation, the distinction between 'we don't have ads today' and 'we've publicly committed to never having ads' is material. Written commitments made publicly are substantially harder to walk back than internal product decisions made without fanfare.

Anthropic's statement is searchable and dated. If they reverse it, the reversal is also on the record. That asymmetry has procurement value.

Three procurement questions this changes for Australian enterprise

1. Ask for the policy directly

When evaluating AI platforms, include the ads question explicitly: does your platform carry, or reserve the right to carry, advertiser-influenced responses? Is that a binding product commitment or a statement of current intent?

Anthropic's answer is on the public record. You can reference it in your vendor assessment. Not every platform can say the same.

2. Regulated sectors face a specific governance exposure

For Australian financial services firms operating under APRA CPS 230, which strengthened operational risk requirements for third-party arrangements, and for healthcare and professional services organisations subject to Australia's Privacy Act 1988, the AI vendor's commercial model is not a peripheral detail. It's a governance question.

An AI tool that is structurally incentivised to surface certain information over other information creates a conflict-of-interest exposure. Not a theoretical one. The kind that an internal audit committee eventually notices, and that the CIO who chose the platform has to answer for.

3. Platform-level investments carry platform-level risk

Building serious Claude capability (MCP servers, agent workflows wired to your systems of record, integrations embedded in client-facing processes) costs $60,000–$150,000 in year one and scales from there. You're not just choosing a model. You're choosing an architecture you'll be maintaining for years.

Knowing the platform won't introduce an advertiser incentive into that architecture removes a category of risk from the investment thesis. Not the most likely risk. But a real one, and one that's now explicitly off the table with Claude.

When the no-ads argument doesn't change your decision

Not every enterprise needs to weight this heavily.

If you're at the early evaluation stage, running a $10,000–$20,000 discovery engagement to understand which processes are worth automating, you're not yet making a platform bet. Explore on capability and fit. Come back to governance questions when you're closer to commitment.

If your use case is low-stakes internal tooling, knowledge management, or a non-regulated function with no conflict-of-interest exposure, the ads question matters less. A model that writes better internal memos is a model that writes better internal memos, regardless of its commercial structure.

The no-ads signal carries the most weight when three conditions apply:

You're making a multi-year platform commitment. Running a time-limited pilot is a different decision.

Your sector is regulated. Financial services, healthcare, legal, insurance, and government have the most direct exposure to conflict-of-interest governance.

Your integrations are deep. Workflows that touch compliance, client advice, or procurement decisions are where commercial incentive misalignment does the most damage.

If those three don't describe your current situation, evaluate on capability and cost for now. The ads question will matter later, once you're closer to a platform commitment.
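For teams that keep their vendor assessments in a script or notebook, the three conditions above can be written down as a simple check. This is a minimal sketch, not a scoring model: the `Evaluation` class, its field names, and the `weigh_ads_policy_heavily` function are all hypothetical, and it assumes the signal only carries its full weight when all three conditions hold at once.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evaluation:
    """Hypothetical snapshot of where a platform evaluation stands."""
    multi_year_commitment: bool  # a platform bet, not a time-limited pilot
    regulated_sector: bool       # e.g. financial services, healthcare, legal
    deep_integrations: bool      # workflows touching compliance, client advice, procurement


def weigh_ads_policy_heavily(ev: Evaluation) -> bool:
    """Return True only when all three conditions apply (an assumption of this sketch)."""
    return ev.multi_year_commitment and ev.regulated_sector and ev.deep_integrations


# Illustrative inputs only; neither describes a real organisation.
pilot = Evaluation(multi_year_commitment=False, regulated_sector=True, deep_integrations=False)
platform_bet = Evaluation(multi_year_commitment=True, regulated_sector=True, deep_integrations=True)

print(weigh_ads_policy_heavily(pilot))         # False
print(weigh_ads_policy_heavily(platform_bet))  # True
```

A regulated firm running a short pilot comes out as `False` here, which matches the advice above: evaluate on capability and cost first, and revisit the vendor's commercial model before the commitment deepens.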

The implication for businesses building seriously on Claude

Australian businesses that treat AI as a point product, swappable if something better arrives, don't need to think hard about platform governance. When nothing is deeply integrated, platform-level trust matters less.

For businesses making genuine architectural bets (agent harnesses wired to the CRM, automated workflows touching compliance systems, AI embedded in day-to-day operations), the platform's commercial incentives are part of the investment case. Not a footnote. Part of the case.

The no-ads commitment doesn't make Claude better at the task in front of it. It does mean Anthropic's incentive structure isn't pulling in a different direction from your business's.

The organisations that end up with durable AI advantage won't be the ones that evaluated model benchmarks most carefully in 2026. They'll be the ones that made sound platform bets, understood what their vendor's commercial model would mean for their workflows over five years, and built on infrastructure they could trust.