The model config update takes thirty seconds. Most teams who stop there leave the upgrade's value on the table.

Anthropic moved Claude Opus 4.7 to general availability in April. It's a material step up from Opus 4.6 on the hardest engineering tasks: advanced code reasoning, multi-file refactors, agentic workflows that run for minutes before surfacing a result. For Australian engineering teams with real Claude deployments, here are the five things worth examining this week.

1. The hand-off threshold has moved

The practical value of a model upgrade is not the benchmark score. It's the change in where you can safely remove human supervision from a task. On Opus 4.6, many teams kept a senior engineer in the review loop for harder work: multi-file refactors across a codebase they'd partially described to the model, API integrations where the model had to infer contract behaviour from partial documentation, dependency analysis where a wrong assumption downstream could break three other services. The work was possible on 4.6. It was just risky enough to require a second set of eyes before merge, and that risk assessment was usually correct.

Opus 4.7 narrows that category meaningfully. Teams running it on the same class of tasks report stepping back from review cycles that were previously non-negotiable. At $150 to $180 per hour fully loaded for a senior engineer in Sydney or Melbourne, each supervised review cycle you remove from a repeating task compounds quickly. Assuming each cycle takes about an hour, four removed review cycles a week on a single workflow is roughly $31,000 to $37,000 a year in reclaimed capacity. Even a process that runs once a week, with one review removed per run, returns about 52 hours a year: a little over one full engineer-week from a single workflow change.
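The arithmetic is easy to rerun with your own numbers. A minimal sketch, assuming each removed review cycle costs about one hour of senior time and a 52-week year; both values are assumptions for illustration, and `reclaimed_capacity` is a hypothetical helper name:

```python
def reclaimed_capacity(cycles_per_week, hourly_rate, hours_per_cycle=1.0, weeks_per_year=52):
    """Return (hours, dollars) reclaimed per year by removing review cycles.

    hours_per_cycle and weeks_per_year are assumptions; adjust them to your team.
    """
    hours = cycles_per_week * hours_per_cycle * weeks_per_year
    return hours, hours * hourly_rate


for rate in (150, 180):
    hours, dollars = reclaimed_capacity(cycles_per_week=4, hourly_rate=rate)
    print(f"${rate}/h: {hours:.0f} hours, ${dollars:,.0f} a year")
# $150/h: 208 hours, $31,200 a year
# $180/h: 208 hours, $37,440 a year
```

If your review cycles run shorter or longer than an hour, `hours_per_cycle` is the number that moves the result most.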

2. Self-verification changes the QA equation

Opus 4.7 devises ways to check its own outputs before surfacing a result. This is different from self-correction after feedback. It's pre-emptive: the model tests its own reasoning before reporting back.

The operational effect is fewer false-confident outputs. The failure mode that burns sprint capacity (the output passes a quick review, gets merged, and breaks in staging) is less common on 4.7. For engineering teams where 'looked right on the first pass' has been a recurring sprint drain, this is the most practically valuable change in the release. It's not that 4.7 never gets things wrong. It's that it's less likely to get things confidently wrong.

How to test it before committing to migration: take your five hardest failure cases from Opus 4.6 and run them on 4.7. Not your standard success cases. Your documented failures and edge cases. The quality delta on hard cases tells you more than any synthetic benchmark.
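One way to structure that test is a small harness that runs the same documented failures against both models and reports what changed. This is a sketch, not a prescribed method: `FailureCase`, `run_cases`, `quality_delta`, and the model labels are hypothetical names, and `fake_call` is a stub standing in for whatever API client you actually use.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FailureCase:
    name: str
    prompt: str
    passes: Callable[[str], bool]  # True when the output is acceptable


def run_cases(cases, call_model, model):
    """Return {case_name: passed} for one model."""
    return {c.name: bool(c.passes(call_model(model, c.prompt))) for c in cases}


def quality_delta(old, new):
    """Split cases into those the new model fixed and those it broke."""
    fixed = sorted(n for n in old if new[n] and not old[n])
    broken = sorted(n for n in old if old[n] and not new[n])
    return {"fixed": fixed, "broken": broken}


# Stub standing in for a real API client, so the sketch runs offline.
def fake_call(model, prompt):
    return "42" if model == "new-model" else "41"


cases = [FailureCase("sum", "What is 40 + 2?", lambda out: out.strip() == "42")]
old = run_cases(cases, fake_call, "old-model")
new = run_cases(cases, fake_call, "new-model")
print(quality_delta(old, new))  # {'fixed': ['sum'], 'broken': []}
```

Keeping the pass check as a plain function per case means each documented failure carries its own definition of "fixed", rather than relying on a reviewer's impression.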

3. Vision is genuinely better, and most teams haven't retested it

Opus 4.7 processes images at greater resolution. If your team set up visual workflows when Opus 3 was current and hasn't retested since, you're likely working from outdated assumptions about what's achievable.

The use cases that matter for Australian deployments: accessibility audits on UI screenshots under WCAG 2.1 guidance (relevant for government-facing products), architecture diagram review, form digitisation from scanned PDFs, and document layout extraction. For financial services teams handling scanned client onboarding documents under Australian Privacy Act requirements, vision quality has been a practical ceiling on automation scope. That ceiling limited how much document-heavy work could be pushed through Claude without human re-keying. It's now higher.
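Retesting a visual workflow mostly means resending the same images with the same questions. The sketch below only builds the request message and makes no network call. It follows the base64 image content-block shape of Anthropic's Messages API as I understand it, so check the current API reference before relying on it; `build_image_message` is a hypothetical helper and the bytes are a placeholder, not a real screenshot.

```python
import base64


def build_image_message(image_bytes, media_type, question):
    """Build one user message containing an image block and a text block."""
    encoded = base64.standard_b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {
                "type": "image",
                "source": {"type": "base64", "media_type": media_type, "data": encoded},
            },
            {"type": "text", "text": question},
        ],
    }


# Placeholder bytes; in practice read the screenshot or scanned page from disk.
message = build_image_message(
    b"\x89PNG placeholder bytes",
    "image/png",
    "List any WCAG 2.1 contrast issues in this screenshot.",
)
print(message["content"][1]["text"])
```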

4. Professional output quality has lifted

Structured deliverables come out cleaner and better organised: investor updates, statements of work, compliance reports, client-facing documentation. The polish floor is higher, which means review cycles shorten.

For Australian consultancies and agencies using Claude to produce client deliverables, the math is straightforward. If a deliverable that previously required 45 minutes of post-processing polish now requires 20, and your team produces eight a week, that's 200 minutes a week, or about 173 hours a year. At $120 to $180 per hour for senior consulting time, that's roughly $21,000 to $31,000 a year in returned capacity on one task.

5. Plan around 4.7 as your production model

4.7 is Anthropic's strongest generally available model for production use. Research previews of future capabilities exist and some teams have early access to them, but the correct planning frame for your production architecture this quarter is Opus 4.7. Don't hold off migration waiting for something further along the pipeline. Ship on 4.7. When the next GA release lands, you'll be better positioned to migrate from a working 4.7 baseline than from a 4.6 deployment that was never fully optimised.

One practical consequence: prompts tuned for Opus 4.6 may be over-engineered for 4.7. Explicit chain-of-thought scaffolding, manual verification steps baked into the prompt, and guard-rails that compensated for 4.6 limitations all cost tokens on 4.7 without delivering equivalent value. Stripping them out requires testing, but the payoff is lower latency and lower inference cost on every call. For high-volume deployments running thousands of calls a day, the token savings from a single simplified prompt can be material at the end of the month.
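To judge whether a given prompt is worth simplifying, a rough estimate of the monthly saving helps. A minimal sketch under stated assumptions: the call volume, the token count removed, and the price are all illustrative placeholders (the price in particular is not a quoted rate), and `monthly_prompt_savings` is a hypothetical helper. It counts input tokens only and ignores any change in output length.

```python
def monthly_prompt_savings(calls_per_day, tokens_removed_per_call,
                           usd_per_million_input_tokens, days_per_month=30):
    """Estimated monthly input-token spend avoided by a shorter prompt."""
    tokens = calls_per_day * tokens_removed_per_call * days_per_month
    return tokens / 1_000_000 * usd_per_million_input_tokens


# Illustrative inputs only: 5,000 calls a day, 400 tokens of scaffolding removed,
# and a placeholder price of 10 USD per million input tokens.
print(monthly_prompt_savings(5_000, 400, 10.0))  # 600.0
```

Substitute the per-token price from your own invoice before drawing conclusions from the result.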

When not to migrate this week

4.7 is not the right move for every workflow right now.

Your volume is high and complexity is low. Opus 4.7 is priced at the Opus tier. Classification, short-document summarisation, and high-frequency extraction tasks are cheaper on Haiku or Sonnet. Don't pay for reasoning depth you don't need.

Your evals are weak or missing. Migrating model versions without a reliable test set means you have no signal on whether quality went up or down. Build the evals first, then migrate.

Your prompts are locked in systems no one currently owns. Migrating model versions without being able to adjust the prompts means you get the new model running against old assumptions. Half an upgrade is often worse than none.
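The first of those conditions, high volume with low complexity, can be made explicit as a routing rule in code rather than left to habit. The sketch below is illustrative only: the tier names are placeholders rather than real model identifiers, and the task labels and the 1,000-calls-a-day threshold are assumptions you would replace with your own.

```python
# Task labels and tier names are placeholders, not real model identifiers.
LOW_COMPLEXITY_TASKS = {"classification", "short_summary", "extraction"}


def pick_tier(task_type, calls_per_day, high_volume_threshold=1_000):
    """Route cheap, repetitive work to a smaller tier; keep hard work on Opus."""
    if task_type in LOW_COMPLEXITY_TASKS:
        # Simple work at high volume goes to the cheapest tier.
        if calls_per_day >= high_volume_threshold:
            return "haiku-tier"
        return "sonnet-tier"
    return "opus-tier"


print(pick_tier("classification", 50_000))    # haiku-tier
print(pick_tier("multi_file_refactor", 20))   # opus-tier
```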

What to do this week

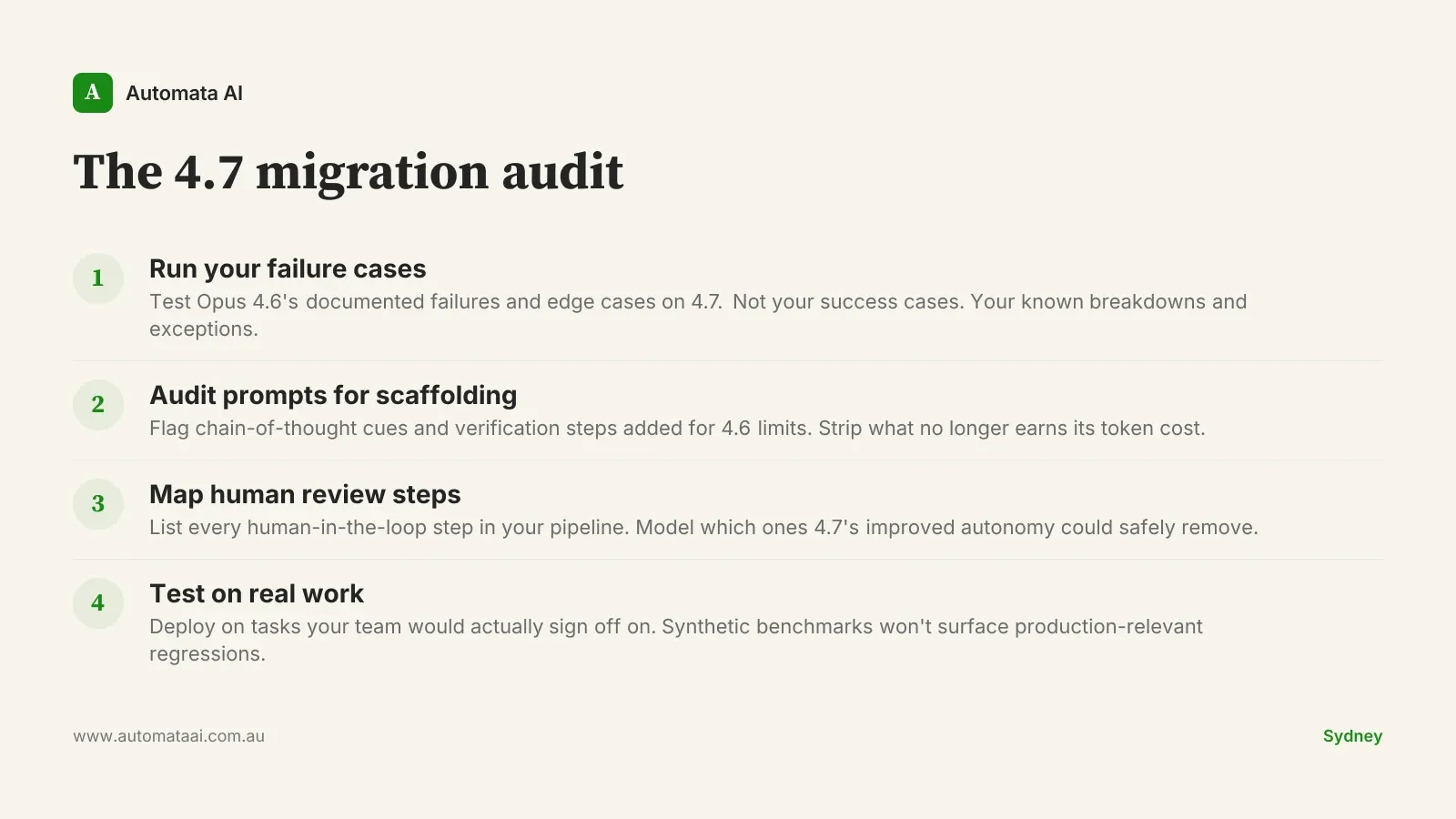

Run failure cases first. Take your five hardest Opus 4.6 failures and run them on 4.7 before touching your success cases. Document the quality delta.

Audit prompts for 4.6 scaffolding. Flag every chain-of-thought cue, verification step, or guard-rail added to compensate for the previous model's limits. These are candidates for removal.

Map your review cycles. List every human-in-the-loop step in your current pipeline and assess which ones 4.7's improved autonomy could safely remove.

Test on real work. If you're on Claude Code, update the model config and run a task your team would actually sign off on. Synthetic prompts won't surface production-relevant regressions.
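The prompt audit in that list can start as a simple text scan. This is a rough first pass, not a complete detector: the patterns are illustrative phrases only, `audit_prompt` is a hypothetical helper, and every hit still needs a human decision and a test run before anything is removed.

```python
import re

# Illustrative phrases only; extend with the cues your own prompts use.
SCAFFOLDING_PATTERNS = {
    "chain_of_thought": re.compile(r"think\s+step[\s-]+by[\s-]+step|reason\s+through", re.I),
    "verification": re.compile(r"double[\s-]?check|verify\s+your", re.I),
    "guard_rail": re.compile(r"do\s+not\s+guess|never\s+assume", re.I),
}


def audit_prompt(text):
    """Return (line_number, category, line) for each line that matches a cue."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for category, pattern in SCAFFOLDING_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, category, line.strip()))
    return findings


sample = """You are a code reviewer.
Think step by step before answering.
Double-check every import you add.
Return a unified diff."""
for finding in audit_prompt(sample):
    print(finding)
# (2, 'chain_of_thought', 'Think step by step before answering.')
# (3, 'verification', 'Double-check every import you add.')
```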

The teams that get full value from model upgrades are the ones who treat each release as an audit trigger. Not just a config update. A reason to find where the previous model forced workarounds that the new one no longer needs. That audit takes a few hours. The savings from removing a single unnecessary human-in-the-loop step usually pay for it inside a month.