We dropped Opus 4.7 into two client environments in the week after Anthropic shipped it on April 16, 2026. The headline improvements are genuine: better long-run reliability on coding tasks and a measurable lift on multi-step agentic work.

What the release notes don't surface clearly are the five places the swap quietly breaks things. None are catastrophic. Most are fixable in a day. But if you're running Claude in a production agentic loop, or you're a Sydney or Melbourne professional services firm with Claude baked into a compliance or document workflow, you want to find them before Monday.

Gotcha 1: Literal instruction-following replaced flexible interpretation

Earlier Claude models had a kind of helpful imprecision. If you said 'summarise in three bullet points' and the source material warranted four, Claude gave you four. Opus 4.7 takes the instruction at face value. Three means three.

Anthropic recommends prompt re-tuning on the new model, and it's in the migration guide. But re-tuning sounds like optional polish. It isn't. If your prompts were written for a model that smoothed over loose phrasing, Opus 4.7 will surface every rough edge. Vague field names in your system prompt, ambiguous output format instructions, underspecified fallback conditions: these all become failure cases.
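One cheap way to see where this bites is to check outputs against the literal count your prompt asked for. The sketch below is an illustration only: `count_bullets` and `bullet_count_matches` are hypothetical helper names, and the regular expression assumes your outputs use `-`, `*`, `•` or numbered markers at the start of a line.

```python
import re

# Assumption: bullets start a line with -, *, • or a number followed by . or )
BULLET_RE = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s+\S")


def count_bullets(text: str) -> int:
    """Count lines that look like list items."""
    return sum(1 for line in text.splitlines() if BULLET_RE.match(line))


def bullet_count_matches(text: str, expected: int) -> bool:
    """True when the output has exactly the number of bullets the prompt requested."""
    return count_bullets(text) == expected


if __name__ == "__main__":
    sample = "- Revenue grew\n- Costs fell\n- Headcount flat\n- Outlook unchanged"
    # The prompt asked for three bullets; this output has four.
    print(count_bullets(sample), bullet_count_matches(sample, 3))
```

Running a check like this over saved outputs from both models shows which prompts relied on the older, looser interpretation.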

For Australian financial services teams running document summarisation or compliance extraction under APRA CPS 230 obligations, this can look like degraded output quality on day one. The model isn't worse. The instructions just stopped working the way they used to.

Gotcha 2: The tokenizer change adds up to 35 percent to your input token count

Opus 4.7 ships with an updated tokenizer. The same prompt text maps to more tokens, roughly 1.0x to 1.35x depending on content type. Code-heavy prompts sit at the upper end of that range. Natural language prompts tend toward the lower end.

If you're running 5,000 API calls per day at an average of 3,000 input tokens each, that's 15 million input tokens a day. A 25 percent increase adds about 3.75 million input tokens daily, or roughly 112 million over a 30-day month. At Opus pricing, that compounds before you notice. The offsetting argument: Opus 4.7 resolves harder problems in fewer iterations, so agentic loops can run shorter. That may net out flat or better on total tokens. But that's a measure-it claim, not an assume-it one.
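The projection is easy to script against your own volumes. This is a minimal sketch, assuming a 30-day month and treating the tokenizer multiplier as something you measure on your own prompts, not a known constant. The function name and parameters are illustrative, not part of any SDK, and pricing is deliberately left out so you can apply your own rate.

```python
def projected_extra_tokens(
    calls_per_day: int,
    avg_input_tokens: int,
    multiplier: float,
    days: int = 30,
) -> dict:
    """Project additional input tokens caused by a tokenizer multiplier.

    multiplier is new_token_count / old_token_count for the same text,
    e.g. 1.25 for a 25 percent increase.
    """
    baseline_daily = calls_per_day * avg_input_tokens
    extra_daily = baseline_daily * (multiplier - 1.0)
    return {
        "baseline_daily": baseline_daily,
        "extra_daily": extra_daily,
        "extra_monthly": extra_daily * days,
    }


if __name__ == "__main__":
    # Low end, the 25 percent example, and the high end of the quoted range.
    for m in (1.0, 1.25, 1.35):
        result = projected_extra_tokens(5_000, 3_000, m)
        print(f"{m:.2f}x: +{result['extra_daily']:,.0f}/day, "
              f"+{result['extra_monthly']:,.0f}/month")
```

With 5,000 calls per day at 3,000 input tokens each and a 1.25x multiplier, this gives 3.75 million extra tokens per day and 112.5 million over 30 days.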

Gotcha 3: xhigh is now the default effort level in Claude Code

Claude Code v2.1.117 introduced a new effort tier: xhigh, sitting between high and max. On Opus 4.7, xhigh is now the default. The prior default on Opus 4.6 was high. On Sonnet 4.6, the default stays high.

More thinking per turn is better for hard problems. It also costs more per turn and increases per-turn latency. If your team uses Claude Code for routine tasks alongside complex ones, the cost profile has shifted without anyone making a deliberate decision to shift it. Worth checking before the month-end invoice.

Gotcha 4: Vision pipeline sizing logic may now work against you

Opus 4.7 raises the maximum image resolution from 1,568px on the long edge to 2,576px. For screenshot analysis, document processing, or computer-use workflows, that's a meaningful change.

Higher resolution means the model sees more detail, which is usually better. But if your pipeline pre-processes or resizes images before sending them, your existing sizing logic may now be working against you. Images you were scaling down to fit under the old 1,568px ceiling could now be sent at or near native resolution, and the model will process them differently. Run your vision evals before assuming the resolution change is net positive.
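If your pipeline hard-codes the old ceiling, the change is usually a one-constant fix, but it helps to see what it does to real image sizes. A minimal sketch of a long-edge fit that only ever downscales: the two constants come from the figures above, while `fit_long_edge` is a hypothetical helper, not a function from any imaging library.

```python
OLD_MAX_LONG_EDGE = 1568  # previous ceiling, per the figures above
NEW_MAX_LONG_EDGE = 2576  # Opus 4.7 ceiling, per the figures above


def fit_long_edge(width: int, height: int, max_long_edge: int) -> tuple[int, int]:
    """Return dimensions scaled so the long edge fits the cap.

    Preserves aspect ratio and never upscales: images already within
    the cap are returned unchanged.
    """
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    scale = max_long_edge / long_edge
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    # A 3000x2000 screenshot under each ceiling.
    print(fit_long_edge(3000, 2000, OLD_MAX_LONG_EDGE))  # (1568, 1045)
    print(fit_long_edge(3000, 2000, NEW_MAX_LONG_EDGE))  # (2576, 1717)
```

The same screenshot carries roughly 2.7 times as many pixels under the new cap, which is why outputs (and image token counts) can shift even when nothing else in the pipeline changes.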

Gotcha 5: Direct-swapping without an eval pass on production prompts

This is the meta gotcha. The four above are all findable with a decent eval pass. The fifth is skipping that pass entirely.

Direct-swapping two models without a side-by-side run is the agentic equivalent of deploying a database schema change without a migration test. You find out what's different when your users do.

We've seen Australian businesses running $50,000 to $150,000 annual Claude API budgets treat model upgrades like a minor version bump. They are not minor version bumps. Opus 4.7 is a different model with different behaviour on the same inputs. The release number obscures that.

When not to migrate yet

If any of the following apply, stay on Opus 4.6 until you have time to test properly.

Production Claude spend under $10,000 per year. The performance improvement probably isn't worth the prompt re-tuning effort at this scale. Use the migration as an excuse to clean up your prompts when you have a slow week.

No eval suite in place. Run your real production prompts through both models first. If you can't do that comparison, you're effectively running your first test on live traffic.

Hard latency requirements. xhigh effort increases per-turn thinking time. If your use case is customer-facing or real-time, benchmark it before switching.

None of these are permanent reasons to avoid the upgrade. They're checkboxes. Once you've got a representative sample of production prompts running through both models, the comparison takes a day.
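For the latency checkbox, a rough benchmark is enough to tell you whether the switch is safe. The sketch below times any zero-argument callable and reports the median and a nearest-rank 95th percentile. `measure_latency` is a hypothetical helper, and the callable you pass in (a wrapper around one representative request to each model) is something you supply.

```python
import math
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[], object], runs: int = 20) -> dict:
    """Time `call` over several runs and summarise wall-clock latency in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append(time.perf_counter() - start)
    samples.sort()
    # Nearest-rank percentile: smallest sample with at least 95% of runs at or below it.
    p95_index = max(0, math.ceil(0.95 * len(samples)) - 1)
    return {
        "median_s": statistics.median(samples),
        "p95_s": samples[p95_index],
        "max_s": samples[-1],
    }


if __name__ == "__main__":
    # Stand-in workload; replace with a wrapper around a real request.
    print(measure_latency(lambda: time.sleep(0.01), runs=5))
```

Run it once per model with the same prompt set, and compare the p95 figures, not the medians, if your use case is customer-facing.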

How to run the migration without surprises

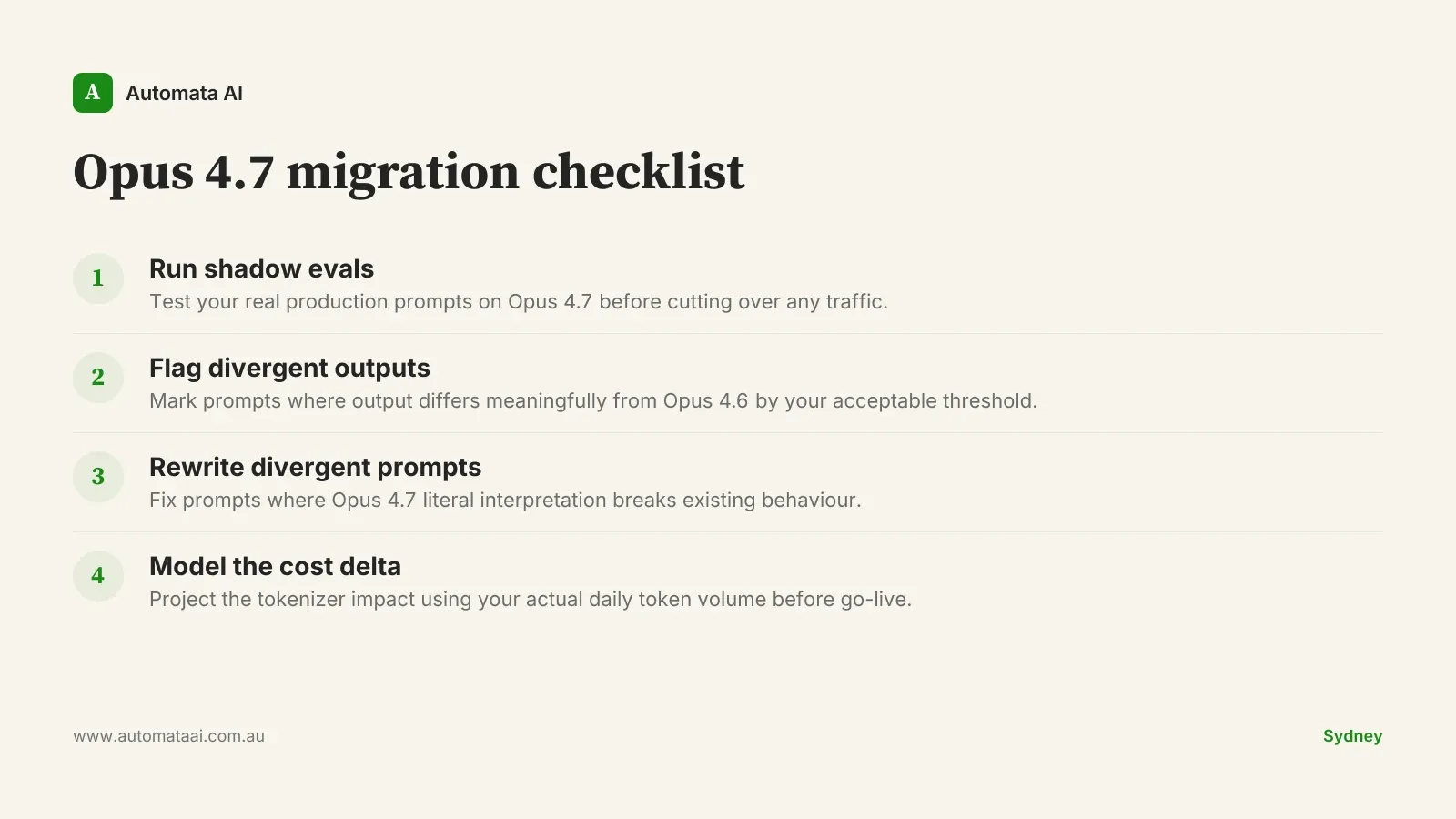

Run your existing prompts on Opus 4.7 in a shadow environment before cutting over any traffic. Flag outputs that diverge meaningfully from Opus 4.6. Rewrite the prompts that diverge. Then project the tokenizer cost impact using your actual daily token volume before you make the switch.
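The flagging step can start as something very simple. This is a minimal sketch, assuming you already have two callables that send a prompt to each model and return the text. `run_old`, `run_new`, `flag_divergent` and the 0.8 threshold are all illustrative choices, and character-level similarity from the standard library's `difflib` is a crude first filter, not a substitute for task-specific checks.

```python
from difflib import SequenceMatcher
from typing import Callable, Iterable


def flag_divergent(
    prompts: Iterable[str],
    run_old: Callable[[str], str],
    run_new: Callable[[str], str],
    threshold: float = 0.8,
) -> list[dict]:
    """Return prompts whose outputs differ noticeably between two models.

    Similarity is difflib's ratio in [0, 1]; anything below `threshold`
    is queued for human review.
    """
    flagged = []
    for prompt in prompts:
        old_out = run_old(prompt)
        new_out = run_new(prompt)
        similarity = SequenceMatcher(None, old_out, new_out).ratio()
        if similarity < threshold:
            flagged.append({
                "prompt": prompt,
                "similarity": round(similarity, 3),
                "old": old_out,
                "new": new_out,
            })
    return flagged


if __name__ == "__main__":
    # Stub callables standing in for real API wrappers.
    old = lambda p: "- point one\n- point two\n- point three\n- point four"
    new = lambda p: "- point one\n- point two\n- point three"
    for item in flag_divergent(["Summarise in three bullet points"], old, new, threshold=0.9):
        print(item["prompt"], item["similarity"])
```

A low similarity score does not mean the new output is worse, only that it changed enough to deserve a look before cutover.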

The upgrade is worth it for teams doing complex multi-step agentic work. Tighter instruction-following and the xhigh effort level genuinely move the needle on hard problems. But that improvement has to be earned with a pre-cutover eval pass. It doesn't arrive automatically with the model ID change.