Anthropic's launch post for Claude Security put one sentence in plain language: the next generation of AI models "will be particularly effective at autonomously exploiting" software flaws. That sentence applies to both sides of the table.

For Australian enterprise security teams sitting on an unscanned codebase and an AppSec headcount that cannot grow fast enough, this is not a product announcement to bookmark. It is a deployment decision to make this quarter.

The threat model has shifted

AI is accelerating vulnerability discovery for defenders. It is accelerating it for attackers too, and there is no structural reason to think the current balance between the two will hold.

The specific dynamic Anthropic named at launch: today's models already excel at finding software flaws at scale. The next generation will be capable of exploiting them autonomously. This is not a generalised warning about AI risk. It is a product roadmap implication: the manual effort required to discover and exploit vulnerabilities will decrease for both defenders and attackers over the next two to three years.

Australian organisations carrying several years of accumulated technical debt are sitting on codebases that get harder to defend as offensive AI capability improves. The defensive window is now, not next financial year.

What Claude Security actually does

Claude Security entered public beta on 30 April for Claude Enterprise customers. It scans codebases for vulnerabilities at scale and generates proposed fixes, powered by Opus 4.7. Three points matter for an AU enterprise evaluation:

It is a production product, not a research prototype. Project Glasswing, Anthropic's separate initiative using Claude Mythos for elite vulnerability research, is distinct. Claude Security is the general-purpose tool built for enterprise AppSec teams at scale.

Access is direct or via partners. It is available through the Claude Platform directly, and through technology and services partners building on the Claude Security API.

The output feeds your existing team. Claude Security surfaces vulnerabilities and proposes fixes. A human AppSec engineer still makes the call on what to remediate and when. A senior engineer reviewing AI-surfaced findings ships more remediations per week than one processing undifferentiated false positives from a legacy scanner.

The Australian case for moving this quarter

APRA CPS 234 is a board question, not a compliance form

Australian financial services firms have operated under CPS 234 since 2019. The board conversation has moved well beyond whether a vulnerability management programme exists. Board members at ASX-listed banks and major regionals are asking about AI-augmented security capability specifically. A Claude Security pilot run this quarter gives your CISO findings from your own codebase by mid-year: concrete evidence, not a vendor shortlist.

The SOCI Act is pushing continuous assurance, not annual pen-tests

The Security of Critical Infrastructure Act amendments have created genuine obligations across energy, water, telco, transport, and finance in Australia. Annual penetration-testing cycles were never designed to satisfy a continuous-assurance posture. AI-augmented scanning, run regularly against active codebases, is one component of the continuous model regulators are pointing toward. Organisations that build this workflow in 2025 are ahead of it. Organisations that wait until 2026 are responding to it.

The AppSec talent market is not going to fix itself

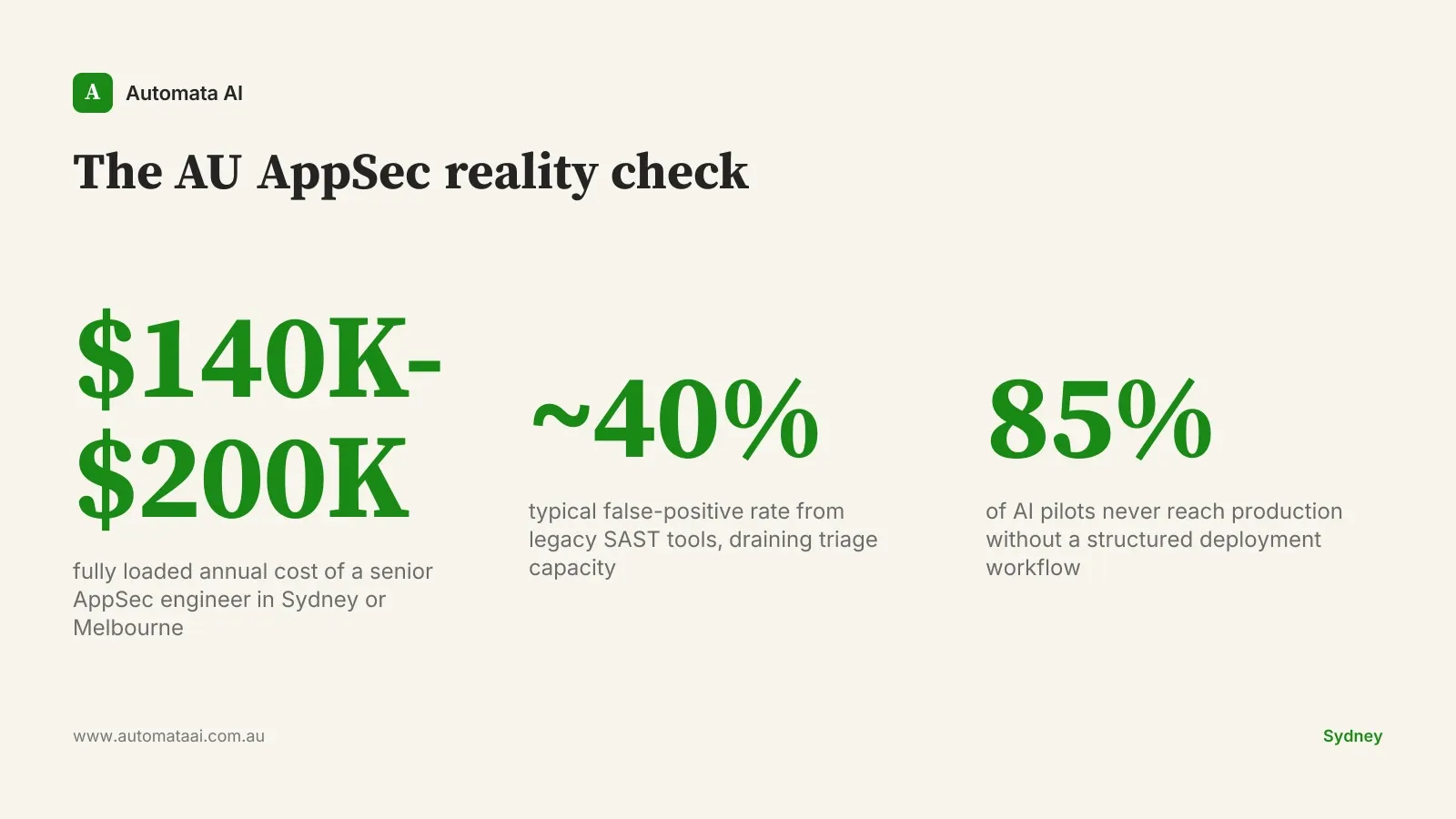

A senior application security engineer in Sydney or Melbourne costs $140,000-$200,000 fully loaded and, when you can find one, typically takes four to six months to hire. The candidate pipeline is not improving. Claude Security does not solve the hiring problem. It changes the shape of the problem: one strong engineer reviewing AI-surfaced findings from a large codebase delivers more value than two engineers producing the same analysis manually. For organisations running lean AppSec functions, which is most of the Australian mid-market, that productivity shift is material.

When not to pilot this quarter

Not every Australian enterprise is ready to extract value from Claude Security right now.

Your codebase lacks basic version control discipline. If development workflows are not consistent enough to produce a scannable repository, no AI tool will produce useful signal.

You have no capacity to triage findings. Claude Security surfaces issues. Someone has to review them. If your existing AppSec team is already fully committed, adding a queue of AI-generated findings without dedicated triage time creates noise, not improvement.

More fundamental gaps exist in your security posture. If MFA is not enforced across your perimeter and core SaaS applications, start there. AI vulnerability scanning is a force multiplier for a functioning AppSec programme. It is not a substitute for the basics.

The honest version: Claude Security is not the right first step for organisations whose AppSec maturity is low. It is the right next step for organisations with a working AppSec function that cannot scale fast enough.

How to run the pilot

Pick one repository with known technical debt. Not your most sensitive system, and not a project that has been abandoned. An actively maintained codebase with a history of bug reports gives you useful signal.

Run Claude Security against it. Have your existing team triage the findings against their backlog. Measure three things: the percentage of findings that were already known and tracked, the percentage that were genuinely new, and the false-positive rate.

Together, those three numbers determine your rollout pace. If 60% of the findings are valid and were previously unknown to your team, the case for broader deployment is strong. If the tool is surfacing primarily known issues and noise, you have data to calibrate before scaling.
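As a rough illustration, the three pilot measurements could be tallied with a few lines of Python once your team has triaged each finding. This is a minimal sketch under stated assumptions: the label names (`known`, `new_valid`, `false_positive`), the function names, and the sample counts are all hypothetical, the labels are assigned by hand during triage rather than taken from any Claude Security output format, and the 60% threshold is simply the example figure used above, measured here as a share of all triaged findings.

```python
from collections import Counter

# Hypothetical triage labels assigned by your AppSec team during review.
# These are illustrative names, not a Claude Security export format.
KNOWN = "known"                    # valid, already tracked in the backlog
NEW_VALID = "new_valid"            # valid, previously unknown to the team
FALSE_POSITIVE = "false_positive"  # not a real issue


def pilot_metrics(labels):
    """Return each triage bucket as a fraction of all triaged findings."""
    if not labels:
        raise ValueError("no triaged findings to measure")
    unexpected = set(labels) - {KNOWN, NEW_VALID, FALSE_POSITIVE}
    if unexpected:
        raise ValueError(f"unrecognised triage labels: {sorted(unexpected)}")
    counts = Counter(labels)
    total = len(labels)
    return {
        "known_share": counts[KNOWN] / total,
        "new_valid_share": counts[NEW_VALID] / total,
        "false_positive_rate": counts[FALSE_POSITIVE] / total,
    }


def strong_case(metrics, threshold=0.60):
    """True when the share of new, valid findings meets the chosen threshold."""
    return metrics["new_valid_share"] >= threshold


if __name__ == "__main__":
    # Made-up sample: 20 triaged findings from one pilot repository.
    triaged = [NEW_VALID] * 12 + [KNOWN] * 5 + [FALSE_POSITIVE] * 3
    metrics = pilot_metrics(triaged)
    for name, value in metrics.items():
        print(f"{name}: {value:.0%}")
    print("strong case for broader rollout:", strong_case(metrics))
```

Keeping the labels in a flat list means the same tally works whether triage happens in a spreadsheet, a ticketing system, or a text file. Set the threshold to whatever your own risk appetite and triage capacity support.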

Automata AI helps Australian enterprises run Claude Security pilots with structure: integration with existing AppSec tooling, triage protocols, and board-level reporting frameworks aligned to CPS 234 and SOCI obligations. The difference between a pilot that produces a rollout decision and one that produces a report nobody reads is the workflow built around it.