The Claude agent ran without issues in staging. It processed 400 support tickets, passed the QA review, and got signed off for production. Six weeks later, the team was manually reviewing 60% of outputs and nobody could explain why. Nobody changed the model. Nobody rewrote the prompt. The underlying task the agent was asked to perform had not changed at all.

The cause was context bloat. For most Australian teams running Claude agents in production right now, it is the failure mode that never appears on the initial build checklist.

What context engineering actually is

Prompt engineering is about how you write instructions. Context engineering is about what information surrounds those instructions at runtime: what is in the window at each step, what has been compressed, what has been retrieved, and what has been evicted. The two disciplines are related but distinct. You can have a well-crafted system prompt and a broken context strategy, and the broken context strategy will determine the output.

A well-engineered context contains exactly what the agent needs for the next step. A poorly engineered context contains everything that ever happened in the session. As history accumulates, the model allocates attention across content that has no bearing on the current task. Output quality degrades. Token costs inflate. Neither shows up in standard observability until the degradation is severe.

Most teams that hit this problem diagnose it as a model limitation or a prompt failure. They spend weeks iterating on system prompts, adjusting few-shot examples, tweaking temperature. The prompt is usually fine. The context is broken.

Three ways production agents fail on context

1. Context bloat

Conversation history accumulates with every agent turn. A workflow that processes a 40-page document across 30 tool calls might carry 120,000 tokens of prior history by the time it reaches page 30, when the actual task at each step needs fewer than 8,000. The model cannot reliably ignore that history. It attends over the full window, spreading attention across irrelevant prior turns and relevant current content alike.
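A back-of-envelope sketch of that growth, assuming each of the 30 tool calls adds a uniform 4,000 tokens of history (120,000 divided by 30) and that the current step needs 8,000 tokens. The uniform per-turn figure is an illustrative assumption, not a measurement.

```python
# Back-of-envelope model of naive history accumulation (no compaction or eviction).
# Assumption: every tool call appends the same number of tokens; real turns vary.
TOKENS_PER_TURN = 4_000   # hypothetical: 120,000 tokens spread over 30 tool calls
NEEDED_PER_STEP = 8_000   # tokens the current step actually needs


def history_tokens(turns_completed: int) -> int:
    """Prior-history tokens carried into the next step when nothing is dropped."""
    return turns_completed * TOKENS_PER_TURN


def needed_share(turns_completed: int) -> float:
    """Fraction of the window that the current step actually needs."""
    window = history_tokens(turns_completed) + NEEDED_PER_STEP
    return NEEDED_PER_STEP / window


for turns in (5, 15, 30):
    print(turns, history_tokens(turns), round(needed_share(turns), 3))
```

Under these assumptions, after 30 calls the step needs about 6% of a 128,000-token window, and that share only shrinks as the run continues.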

A CPS 230 compliance review workflow we audited for a Sydney-based financial services firm had a measured context relevance ratio of 18% by step 25 of a 40-step run. Eighty-two percent of tokens in the window were prior history the model did not need for that step. Quality degradation was measurable from step 15 onward.

2. Stale context

Information loaded at session start becomes outdated mid-session. A pricing agent that loaded a product catalogue at 9am is operating on stale data by 2pm if prices update intraday. The same problem appears in document-processing workflows where the agent cached version 1.2 of a contract at session start, then a revision was published mid-run. The model has no mechanism to detect staleness. It treats old context and fresh retrieval identically, and produces confidently wrong outputs.
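Because the model cannot detect staleness, the agent harness has to. A minimal sketch, assuming each cached context segment records when it was loaded and which source version it came from, and that the harness can ask the source for its current version. `ContextSegment`, `is_stale`, `refresh_stale`, and the source IDs are hypothetical names for illustration.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ContextSegment:
    source_id: str    # hypothetical source key, e.g. "catalogue" or "contract-123"
    version: str      # source version at load time, e.g. "1.2"
    loaded_at: float  # epoch seconds when the segment entered the context
    content: str


def is_stale(segment: ContextSegment,
             current_version: Callable[[str], Optional[str]],
             max_age_s: float,
             now: Optional[float] = None) -> bool:
    """Stale if older than max_age_s, or if the source now reports a different version."""
    now = time.time() if now is None else now
    if now - segment.loaded_at > max_age_s:
        return True
    return current_version(segment.source_id) != segment.version


def refresh_stale(segments: list[ContextSegment],
                  current_version: Callable[[str], Optional[str]],
                  reload: Callable[[str], ContextSegment],
                  max_age_s: float,
                  now: Optional[float] = None) -> list[ContextSegment]:
    """Swap stale segments for fresh reloads before the next model call."""
    return [reload(s.source_id) if is_stale(s, current_version, max_age_s, now) else s
            for s in segments]


# Usage: contract 1.2 was cached at session start; version 1.3 has since been published.
published = {"contract-123": "1.3"}
cached = ContextSegment("contract-123", "1.2", loaded_at=0.0, content="...")
print(is_stale(cached, published.get, max_age_s=3600, now=60.0))  # True: version changed
```

The age check catches intraday drift such as the pricing example; the version check catches a mid-run revision even when the cached copy is only minutes old.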

3. Insufficient retrieval

The inverse problem: the agent does not pull in what it needs when it needs it. Instead of retrieving the relevant contract clause at the moment of analysis, it works from context loaded three steps earlier, or extrapolates from trained-in knowledge that does not reflect the actual document. This is where hallucinations that look like reasoning failures originate. The model is not guessing randomly. It is extrapolating from insufficient information.

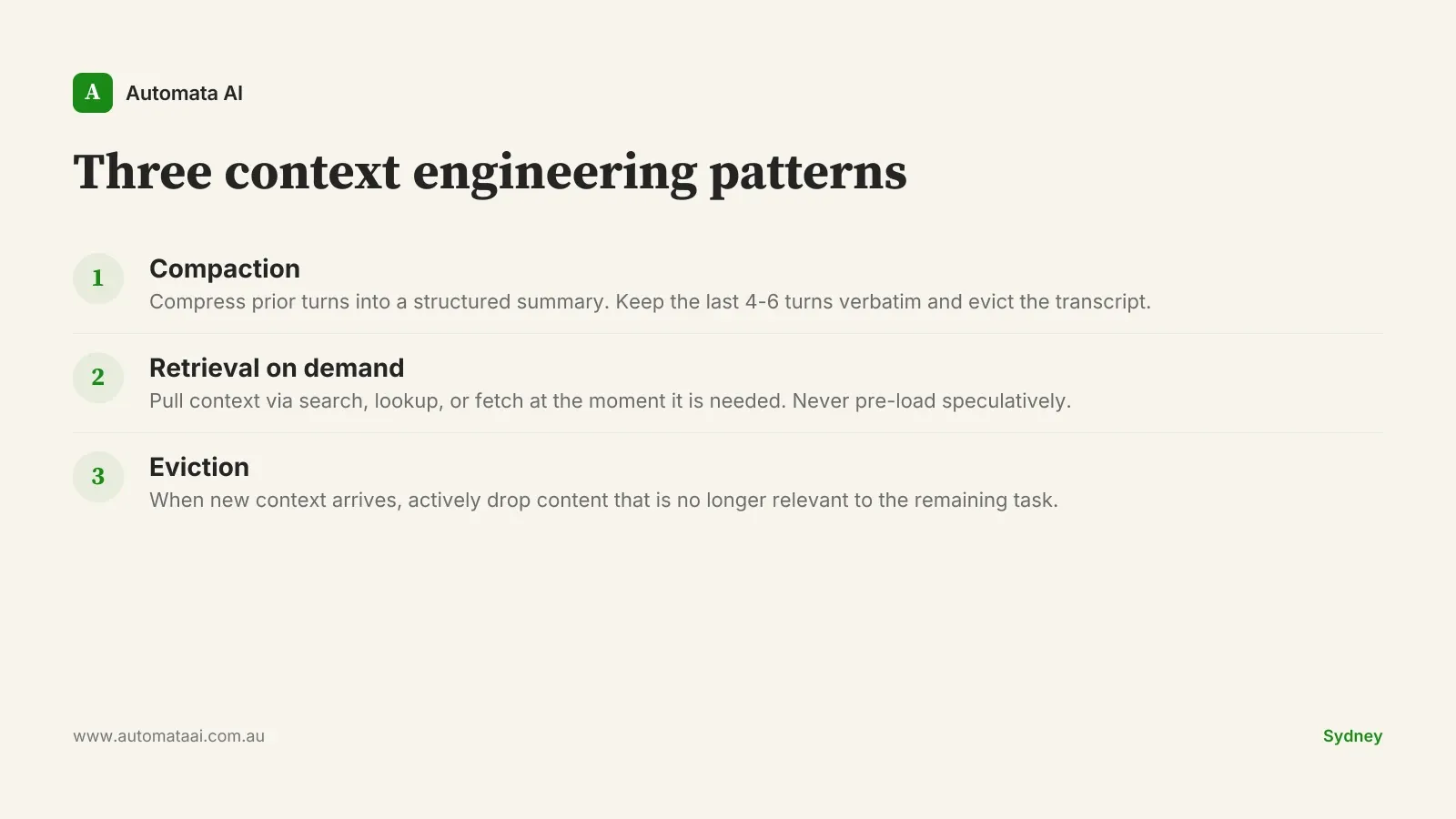

Three patterns that address this

Compaction

Keep the last four to six turns verbatim and compress the rest into a structured summary. The summary captures decisions made, facts established, and current task state. Any open threads the agent needs to track stay in the summary. You evict the transcript. You keep the information.
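A minimal sketch of compaction, assuming turns are stored as role/content dicts and that a summariser (in practice usually a separate model call) returns the structured fields described above. `compact`, `CompactSummary`, and the `summarise` callable are hypothetical; the stub summariser exists only so the sketch runs.

```python
from dataclasses import dataclass, field
from typing import Callable

Turn = dict  # {"role": "user" | "assistant", "content": str}


@dataclass
class CompactSummary:
    decisions: list[str] = field(default_factory=list)
    facts: list[str] = field(default_factory=list)
    task_state: str = ""
    open_threads: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the summary as one text block to place ahead of the recent turns."""
        lines = ["Session summary"]
        for title, items in (("Decisions", self.decisions),
                             ("Facts", self.facts),
                             ("Open threads", self.open_threads)):
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        if self.task_state:
            lines.append(f"Current task state: {self.task_state}")
        return "\n".join(lines)


Summariser = Callable[[list[Turn], CompactSummary], CompactSummary]


def compact(history: list[Turn], prior: CompactSummary, summarise: Summariser,
            keep_last: int = 6) -> tuple[CompactSummary, list[Turn]]:
    """Keep the last `keep_last` turns verbatim; fold older turns into the summary."""
    if len(history) <= keep_last:
        return prior, history
    older, recent = history[:-keep_last], history[-keep_last:]
    return summarise(older, prior), recent


# Stub summariser so the sketch runs; a real one would extract decisions and facts
# with a model call rather than copying assistant text.
def stub_summarise(turns: list[Turn], prior: CompactSummary) -> CompactSummary:
    facts = prior.facts + [t["content"] for t in turns if t["role"] == "assistant"]
    return CompactSummary(prior.decisions, facts, prior.task_state, prior.open_threads)


history = [{"role": "user" if i % 2 == 0 else "assistant", "content": f"turn {i}"}
           for i in range(10)]
summary, recent = compact(history, CompactSummary(), stub_summarise, keep_last=6)
print(summary.render())
print([t["content"] for t in recent])  # turns 4 to 9 kept verbatim
```

Passing the previous summary back in lets each compaction fold new evictions into the running state instead of re-summarising the whole transcript.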

A compaction implementation we built for a Melbourne professional services firm reduced input token consumption by 67% on their document-review agent, with no measurable quality loss on their benchmark tasks. The build took two weeks of engineering effort. At their call volume, annual token cost savings were $22,000, so the savings covered the build cost within five months.

Retrieval on demand

Do not pre-load context speculatively. Build explicit retrieval primitives into the agent (search, lookup, fetch) and let the agent pull what it needs at the moment it needs it. This is architecturally different from loading everything at session start and expecting the model to identify the relevant signal in a dense, accumulated context.
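One way to expose search, lookup, and fetch is as tool definitions the model can call. A sketch assuming the tool shape the Anthropic Messages API expects (`name`, `description`, and a JSON Schema `input_schema`) and a hypothetical in-memory document store. `DOCS`, the tool names, and `dispatch` are illustrative; in practice the store would be your real index. When a response contains a `tool_use` block, you would pass its `name` and `input` to `dispatch` and send the returned string back in a `tool_result` block.

```python
import json

# Hypothetical in-memory store standing in for a real document index.
DOCS = {
    "contract-123": {
        "title": "Master services agreement",
        "clauses": {
            "7.2": "Either party may terminate with 30 days' notice.",
            "9.1": "Liability is capped at fees paid in the previous 12 months.",
        },
    },
}

# Tool definitions in the Messages API shape: name, description, JSON Schema input.
TOOLS = [
    {"name": "search_documents",
     "description": "Return IDs of documents whose title or clauses contain the query.",
     "input_schema": {"type": "object",
                      "properties": {"query": {"type": "string"}},
                      "required": ["query"]}},
    {"name": "lookup_clause",
     "description": "Return the text of a single clause from one document.",
     "input_schema": {"type": "object",
                      "properties": {"doc_id": {"type": "string"},
                                     "clause": {"type": "string"}},
                      "required": ["doc_id", "clause"]}},
    {"name": "fetch_document",
     "description": "Return a whole document by ID. Prefer lookup_clause when possible.",
     "input_schema": {"type": "object",
                      "properties": {"doc_id": {"type": "string"}},
                      "required": ["doc_id"]}},
]


def search_documents(query: str) -> list[str]:
    q = query.lower()
    return [doc_id for doc_id, doc in DOCS.items()
            if q in doc["title"].lower()
            or any(q in text.lower() for text in doc["clauses"].values())]


def lookup_clause(doc_id: str, clause: str) -> str:
    return DOCS.get(doc_id, {}).get("clauses", {}).get(clause, "not found")


def fetch_document(doc_id: str) -> dict:
    return DOCS.get(doc_id, {})


HANDLERS = {"search_documents": search_documents,
            "lookup_clause": lookup_clause,
            "fetch_document": fetch_document}


def dispatch(name: str, tool_input: dict) -> str:
    """Run one tool call and serialise the result for a tool_result block."""
    return json.dumps(HANDLERS[name](**tool_input))


print(dispatch("lookup_clause", {"doc_id": "contract-123", "clause": "7.2"}))
```

Offering a narrow `lookup_clause` alongside a broad `fetch_document` gives the agent a cheap default, so a single clause does not drag a whole contract into the window.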

The practical constraint is latency. For a batch workflow where response time is measured in minutes, retrieval overhead is acceptable. For a synchronous customer-facing agent where users expect sub-five-second responses, retrieval strategy needs careful design: what can run asynchronously, what needs a short TTL pre-fetch, and what must be synchronous.
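A sketch of the split between synchronous and best-effort retrieval using Python's `asyncio`, assuming each retrieval is an async callable. Required retrievals are always awaited; optional ones share a fixed latency budget and are dropped if they miss it. `gather_context` and the fetcher names are hypothetical.

```python
import asyncio
from typing import Awaitable, Callable

Fetcher = Callable[[], Awaitable[str]]


async def gather_context(required: dict[str, Fetcher],
                         optional: dict[str, Fetcher],
                         budget_s: float) -> dict[str, str]:
    """Await all required fetches; keep only optional fetches that finish within budget_s."""
    # Start everything at once so required and optional retrievals overlap.
    req_tasks = {name: asyncio.create_task(f()) for name, f in required.items()}
    opt_tasks = {name: asyncio.create_task(f()) for name, f in optional.items()}

    results: dict[str, str] = {}
    if opt_tasks:
        done, pending = await asyncio.wait(opt_tasks.values(), timeout=budget_s)
        for task in pending:
            task.cancel()
        await asyncio.gather(*pending, return_exceptions=True)  # let cancellations settle
        for name, task in opt_tasks.items():
            if task in done and task.exception() is None:
                results[name] = task.result()
    # Required retrievals are never dropped, even if they exceed the budget.
    for name, task in req_tasks.items():
        results[name] = await task
    return results


async def demo() -> dict[str, str]:
    async def account_record() -> str:   # must be synchronous: always awaited
        await asyncio.sleep(0.02)
        return "account"

    async def recent_orders() -> str:    # fast optional lookup
        await asyncio.sleep(0.01)
        return "orders"

    async def knowledge_base() -> str:   # slow optional lookup that misses the budget
        await asyncio.sleep(1.0)
        return "kb"

    return await gather_context({"account": account_record},
                                {"orders": recent_orders, "kb": knowledge_base},
                                budget_s=0.2)


print(asyncio.run(demo()))  # "kb" is dropped after the 0.2 s budget
```

Whether a given lookup belongs in `required` or `optional` is the design decision the paragraph above describes; the code only enforces it.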

Eviction

When new context arrives, decide what to drop. If content from step 5 is no longer relevant to the task at step 25, evict it. Agents that lack explicit eviction logic grow their context linearly. The ceiling, when it arrives, is abrupt: context overflow errors, or sudden quality collapse, depending on how the model handles the limit.
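A minimal eviction sketch, assuming each context segment records its token count and the last step at which the agent used it. The policy here is one simple choice among many: drop segments idle for more than `max_idle_steps`, then drop the least recently used until the window fits a token budget. `Segment`, `evict`, and the thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    tokens: int
    last_used_step: int
    pinned: bool = False  # e.g. system instructions that must never be evicted


def evict(segments: list[Segment], step: int,
          max_idle_steps: int, token_budget: int) -> list[Segment]:
    """Return the segments to keep at `step`. Pinned segments may still exceed the budget."""
    # 1. Drop unpinned segments that have not been used recently.
    kept = [s for s in segments
            if s.pinned or step - s.last_used_step <= max_idle_steps]
    # 2. If still over budget, drop least recently used unpinned segments first.
    total = sum(s.tokens for s in kept)
    drop: set[int] = set()
    for s in sorted((s for s in kept if not s.pinned), key=lambda s: s.last_used_step):
        if total <= token_budget:
            break
        drop.add(id(s))
        total -= s.tokens
    return [s for s in kept if id(s) not in drop]


segments = [
    Segment("system prompt", 1_000, last_used_step=0, pinned=True),
    Segment("step 5 tool output", 3_000, last_used_step=5),
    Segment("step 20 tool output", 4_000, last_used_step=20),
    Segment("step 24 tool output", 2_000, last_used_step=24),
]
kept = evict(segments, step=25, max_idle_steps=10, token_budget=5_000)
print([s.name for s in kept])  # ['system prompt', 'step 24 tool output']
```

Because the window is bounded by `token_budget` rather than by run length, context stops growing linearly and the abrupt ceiling never arrives.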

When context engineering is not the problem

Context engineering matters for long-running, multi-step agents. For stateless or short-session agents, the patterns above add complexity without a corresponding benefit.

The agent is stateless. If each invocation starts with a clean context, accumulation cannot happen, however many times the agent is invoked.

Sessions terminate quickly. An agent that completes in fewer than 10 tool calls is unlikely to reach context-engineering limits under most architectures.

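Volume is low. If the agent runs 100 times per day at 80,000 tokens per call, it consumes roughly 240 million input tokens a month. Even unoptimised, that costs a few hundred dollars a month: about US$240 at US$1 per million input tokens (Haiku-tier list pricing), or about US$720 at US$3 per million (Sonnet-tier). The engineering investment pays back slowly, if at all, at that scale.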

Context engineering is an investment. It makes sense when the agent is long-running, high-volume, or both. Applied as a default pattern to every agent a team builds, it is engineering overhead that does not earn its keep.

Measuring before you fix anything

Before changing any architecture, instrument the context window. At each agent step, log the total token count, which context segments the output actually drew on, and the proportion of accumulated history versus current-turn content. You can approximate which segments the output drew on by checking which prior blocks the model paraphrased or cited. The share of context tokens that sit in those referenced segments is your context relevance score.
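One rough way to automate that check, assuming context is logged as named text segments, is to use word 5-gram overlap between each segment and the output as a stand-in for "paraphrased or cited". The n-gram heuristic, the whitespace token counter, and the function names are assumptions. The heuristic will miss loose paraphrase, so it is worth calibrating against a hand-labelled sample.

```python
import re
from typing import Callable


def shingles(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Lower-cased word n-grams; a crude proxy for 'the same wording appears'."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def referenced(segment: str, output: str, n: int = 5) -> bool:
    """Heuristic: a segment counts as referenced if it shares any n-gram with the output."""
    return bool(shingles(segment, n) & shingles(output, n))


def relevance_score(segments: dict[str, str], output: str,
                    count_tokens: Callable[[str], int] = lambda t: len(t.split())) -> float:
    """Share of context tokens sitting in segments the output appears to draw on.

    The default counter is a whitespace word count; swap in real token counts
    from your usage logs for anything beyond a rough estimate.
    """
    total = sum(count_tokens(text) for text in segments.values())
    used = sum(count_tokens(text) for text in segments.values() if referenced(text, output))
    return used / total if total else 0.0


segments = {
    "step 1: customer request": "Customer asked about invoice formatting and logo placement on page two.",
    "step 3: clause lookup": "The termination clause allows either party to exit with thirty days notice.",
}
output = "Summary: the termination clause allows either party to exit with notice."
print(round(relevance_score(segments, output), 2))  # 0.52
```

Logged per step, the score shows where in a run the window starts filling with history the agent no longer uses.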

For most production agents we audit, that ratio sits between 15% and 35%. The remaining 65% to 85% of the window is billed at full input-token price and contributes nothing to output quality. Take a team running an agent at 100,000 input tokens per call with 10,000 calls a month: that is 1 billion input tokens a month. At Sonnet-tier list pricing of about US$3 per million input tokens, that comes to roughly US$36,000 per year in input costs alone. Cutting input tokens by 60% through compaction and retrieval drops that to around US$14,400. Those savings typically exceed the build cost within three to four months.
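The cost arithmetic is easier to audit as a small function with its assumptions exposed. A sketch in which the per-million-token input price is a parameter you should set from the current pricing page for your model. US$3 is used below as an assumed Sonnet-tier figure, and the function names are hypothetical.

```python
def annual_input_cost(tokens_per_call: int, calls_per_month: int,
                      usd_per_million: float) -> float:
    """Yearly input-token spend; the price argument is an assumption you supply."""
    monthly_tokens = tokens_per_call * calls_per_month
    return monthly_tokens / 1_000_000 * usd_per_million * 12


def payback_months(build_cost: float, yearly_savings: float) -> float:
    """Months until cumulative savings cover a one-off build cost."""
    return build_cost / (yearly_savings / 12)


PRICE = 3.0  # assumed US$ per million input tokens (Sonnet-tier list price)

baseline = annual_input_cost(100_000, 10_000, PRICE)  # 1 billion tokens a month
after = baseline * (1 - 0.60)                          # 60% fewer input tokens
print(baseline, round(after))                          # 36000.0 14400

# The low-volume case: 100 calls a day (about 3,000 a month) at 80,000 tokens.
print(annual_input_cost(80_000, 3_000, PRICE) / 12)   # 720.0 per month
```

Plugging your own build estimate into `payback_months` with `baseline - after` as the yearly savings turns the go/no-go decision into a single number.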

A 20% context relevance score is not a model problem. It is not a prompt problem. The instrumentation takes a day. Fix what the data points to.