A lot of Australian enterprise roadmaps treat 2026 as the year to get serious about AI agents. Anthropic and consultancy Material published a survey of more than 500 technical leaders this year. The findings are worth holding against your own plan before you finalise Q3 priorities.

The survey focused on actual deployment patterns, not intentions. Not what organisations plan to build, but what they have running today. The methodology matters here. The gap between a survey that asks "do you plan to use AI agents" and one that asks "what percentage of your current deployments are multi-step" is the gap between a sentiment poll and a benchmark. This one is the latter.

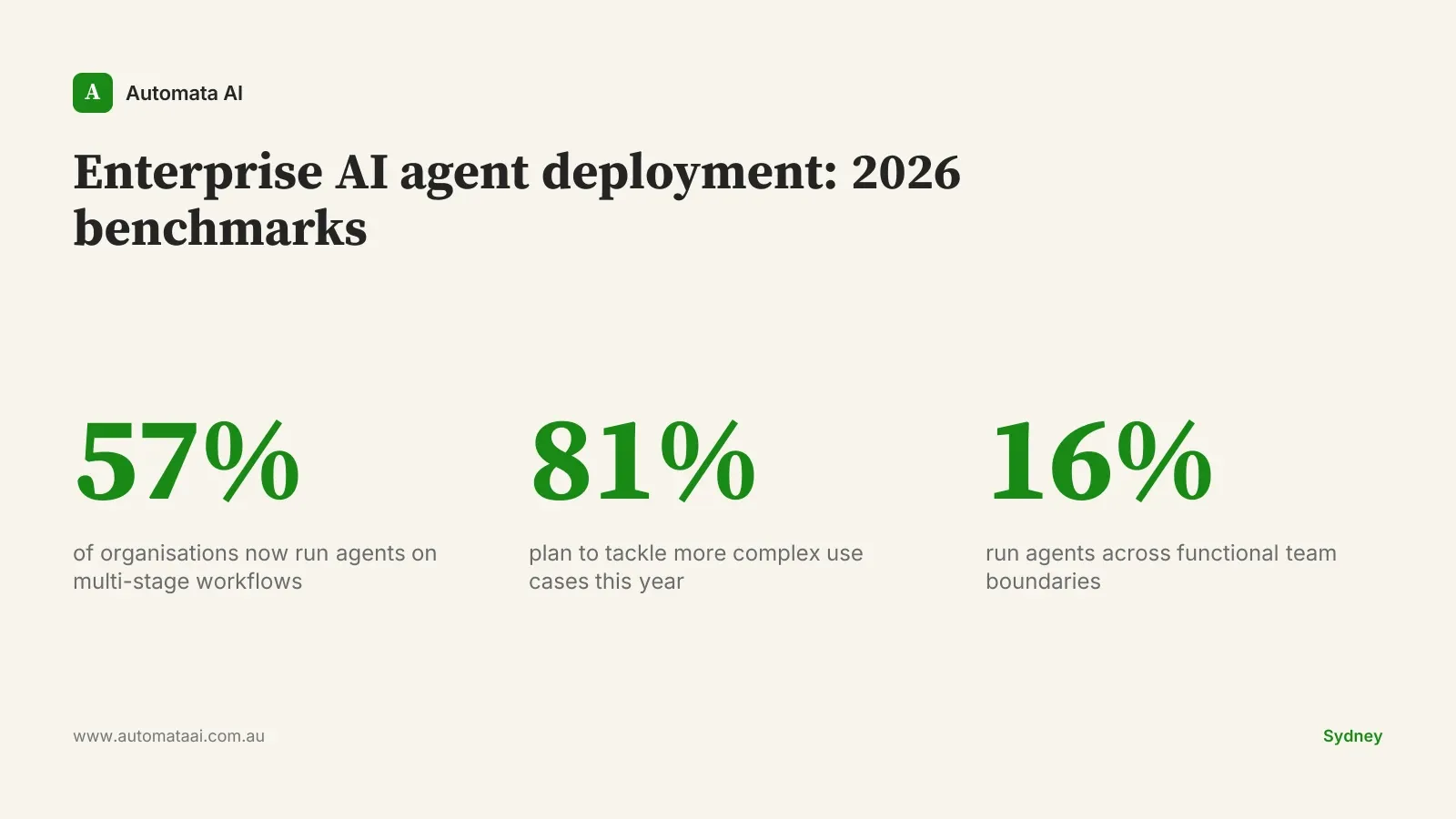

Multi-stage workflows are already the majority deployment pattern

57% of surveyed organisations now run agents on multi-stage workflows. An agent that only reads an invoice is single-task. An agent that reads the invoice, matches it against purchase order records, flags discrepancies, and routes anomalies to an analyst for review is multi-stage. The organisations in the majority are running the second type.
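To make the single-task versus multi-stage distinction concrete, here is a minimal Python sketch of the invoice example. Everything in it is an illustrative assumption rather than anything the survey prescribes: the `Invoice` and `Result` types, the in-memory `PURCHASE_ORDERS` table, the tolerance value, and the routing labels are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Invoice:
    invoice_id: str
    po_number: str
    amount: float


@dataclass
class Result:
    invoice_id: str
    discrepancies: list[str] = field(default_factory=list)
    routed_to: str = "auto-approve"


# Hypothetical purchase order records: PO number -> approved amount.
PURCHASE_ORDERS = {"PO-1001": 500.00, "PO-1002": 1200.00}


def read_invoice(raw: dict) -> Invoice:
    """Stage 1: turn raw extracted fields into a typed invoice."""
    return Invoice(raw["id"], raw["po"], float(raw["amount"]))


def match_and_flag(invoice: Invoice, tolerance: float = 0.01) -> list[str]:
    """Stages 2 and 3: match against the PO record and list discrepancies."""
    approved = PURCHASE_ORDERS.get(invoice.po_number)
    if approved is None:
        return [f"no purchase order {invoice.po_number}"]
    if abs(invoice.amount - approved) > tolerance:
        return [f"amount {invoice.amount:.2f} differs from PO {approved:.2f}"]
    return []


def process(raw: dict) -> Result:
    """Run all stages; stage 4 routes anomalies to an analyst."""
    invoice = read_invoice(raw)
    result = Result(invoice.invoice_id, match_and_flag(invoice))
    if result.discrepancies:
        result.routed_to = "analyst-review"
    return result
```

A single-task agent would stop after `read_invoice`. The multi-stage version is the whole of `process`, and most of the production risk sits in the later stages.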

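The benchmark question for your organisation: what percentage of your deployed agents are genuinely multi-stage? If the answer is none, you sit outside the survey's majority. If it is under half, single-task automation is still your dominant pattern, and the majority has already moved past it.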

The complexity trajectory is accelerating, not plateauing

81% of respondents plan to take on more complex use cases in 2026. The most useful reading of that number isn't that everyone is optimistic. It's that the leaders are compounding. They shipped their first multi-step deployment, learned what breaks in production, and are immediately loading a harder problem into the pipeline. The capability accumulates. The distance between these organisations and the ones still evaluating vendor options is not a fixed one quarter. It widens every week, and it is the kind of distance that does not close with a single sprint.

39% are actively building, not planning

39% of organisations are actively developing agents for multi-step processes right now. This is your near-peer cohort. The gap between this group and the 57% already deployed is typically one to two build quarters. It is closeable.

The gap between an organisation that is evaluating and one that is building is a culture gap. Culture gaps close more slowly.

16% have agents running across team boundaries

This is the leading edge. An agent that handles inbound sales qualification stays inside one team. An agent that qualifies the lead, books the discovery call, populates the CRM record, triggers a contract draft in legal, and alerts finance to a projected deal value spans four functions, four data systems, and four different approval authorities. When those boundaries are crossed, failure modes multiply: one team's data model clashes with another's, approval logic breaks at the handoff, and debugging requires context across systems that rarely talk to each other. The implementation complexity is real. So is the compounding return.
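One way to catch handoff failures before they reach production is to declare what each step needs and what it supplies, then check the chain end to end. The sketch below is a simplified illustration under stated assumptions: the `Step` type, the team names, and the field names are hypothetical, and the chain only loosely follows the lead-to-contract example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    team: str                  # function that owns the step
    requires: tuple[str, ...]  # fields the step needs from earlier steps
    produces: tuple[str, ...]  # fields the step adds to the shared record


# Hypothetical chain; team and field names are illustrative only.
CHAIN = [
    Step("qualify_lead", "sales", ("lead_email",), ("lead_score",)),
    Step("populate_crm", "sales_ops", ("lead_score",), ("crm_id",)),
    Step("draft_contract", "legal", ("crm_id", "deal_value"), ("contract_id",)),
    Step("alert_finance", "finance", ("deal_value",), ()),
]


def find_broken_handoffs(chain: list[Step], initial: set[str]) -> list[str]:
    """Return a message for every step whose inputs nobody upstream supplies."""
    available = set(initial)
    problems = []
    for step in chain:
        missing = [f for f in step.requires if f not in available]
        if missing:
            problems.append(f"{step.team}.{step.name} is missing {', '.join(missing)}")
        available.update(step.produces)
    return problems
```

Calling `find_broken_handoffs(CHAIN, {"lead_email"})` reports that legal and finance both expect a `deal_value` that no upstream step produces. That is the kind of data model mismatch that otherwise surfaces only at the handoff.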

The benchmark question here is binary: do you have any agent running in production today that crosses a team boundary? Not in planning. In production.

29% are crossing organisational boundaries entirely

The most ambitious tier in the survey. Agents that operate between organisations: your system connected to a vendor's, or to a customer's. The technical complexity is manageable. The governance requirements are not trivial. Cross-org agent deployments need clear data-sharing agreements, liability allocation, and audit trails that satisfy Privacy Act 1988 (Cth) obligations on both sides of the relationship.

For most Australian enterprises, this sits on the 2027 roadmap. That is a reasonable position. But if your 2027 roadmap is silent on cross-org agents entirely, that is not a timing choice. It is a planning gap.

When not to chase these benchmarks

The survey measured organisations running agents at scale. If you are running fewer than three automated processes in production today, targeting cross-functional agents is not closing a gap. It is skipping a step, and skipping steps in agent architecture creates failure modes that are expensive to untangle later. Not every finding in this survey should change your next quarter. Some should change your 2027 planning. Hold off on a more ambitious build if any of the following applies.

Process volume is too low. An agent built on three transactions per day will not recover a $40,000 to $80,000 build cost within a reasonable payback period. Volume matters.

Data quality hasn't been addressed. Multi-step agents fail noisily when upstream data is inconsistent. Fix the data layer before stacking agent steps on top of it.

The first production deployment isn't stable. A second multi-step build before the first one is operating reliably is how organisations accumulate technical debt in their AI stack.
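The volume condition above comes down to simple arithmetic. Here is a rough sketch using the build cost range and the three-transactions-per-day figure from the text. The $20 saving per transaction and the 250 working days per year are illustrative assumptions, not survey data, so substitute your own numbers.

```python
def payback_years(build_cost: float, transactions_per_day: float,
                  saving_per_transaction: float,
                  working_days_per_year: int = 250) -> float:
    """Years needed for per-transaction savings to cover the build cost."""
    annual_saving = (transactions_per_day * saving_per_transaction
                     * working_days_per_year)
    if annual_saving <= 0:
        raise ValueError("annual saving must be positive")
    return build_cost / annual_saving


# ASSUMPTION: $20 saved per transaction is illustrative only.
low = payback_years(40_000, 3, 20.0)   # cheapest build in the quoted range
high = payback_years(80_000, 3, 20.0)  # most expensive build in the range
```

Under those assumed figures the annual saving is $15,000, so payback lands between roughly 2.7 and 5.3 years. The same build at 300 transactions per day would pay back in weeks, which is why volume belongs at the top of the checklist.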

The Australian lag and what it costs

Australian enterprise AI deployment runs roughly 6 to 12 months behind comparable organisations in the US on these benchmarks. The leaders here are a handful of major banks, large retailers, and parts of the federal government. They sit roughly where US laggards sit. The mid-market, which is most of the Australian enterprise economy, sits further back. For a business turning over $50 million to $300 million, the benchmark gap is likely closer to 12 months than 6.

That lag has a compounding cost. An enterprise that ships a multi-stage compliance reporting process in 2025 rather than 2026 captures roughly $120,000 to $180,000 in analyst time annually, fully loaded at senior rates, while competitors run the same process manually. That saving, reinvested into the next build, is what creates the capability gap between leaders and the peer cohort by 2027.

Your next two quarters

Run each of the five survey findings as a benchmark against your current state. Identify the biggest gap. If you have no multi-stage agents, that is your answer. If you have multi-stage but nothing cross-functional, that is your answer.
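The gap check above can be sketched as an ordered ladder, where the first unmet rung is the answer. This is a simplification that covers only the three deployment findings (multi-stage, cross-team, cross-org). The keys and descriptions are hypothetical labels chosen for illustration.

```python
from typing import Optional

# Ordered from foundational to most advanced; labels paraphrase the article.
LADDER = [
    ("multi_stage", "No multi-stage agent in production"),
    ("cross_team", "Multi-stage, but nothing crossing a team boundary"),
    ("cross_org", "Cross-team, but nothing crossing an organisational boundary"),
]


def biggest_gap(current_state: dict[str, bool]) -> Optional[str]:
    """Return the first unmet rung, or None if every rung is in production."""
    for key, description in LADDER:
        if not current_state.get(key, False):
            return description
    return None
```

For example, `biggest_gap({"multi_stage": True})` returns the cross-team rung. Only count a rung as met if the agent is in production, not in planning.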

Pick the gap. Build one initiative to close it in Q3. The survey isn't a strategy document, but it is a reliable input to one. The Australian enterprises that close this lag won't do it by running strategy retreats. They'll do it by shipping.