Three Claude agents. A coordinator, a researcher, a synthesiser. Six weeks of engineering to wire them together: task graph, context handoffs, conflict resolution. Then it hit production and underperformed a single well-prompted agent on most queries.

That's not an implementation failure. It's an architecture mistake. The team built a multi-agent Claude system before the task justified one.

Anthropic published an engineering post on the internal research system it built for complex knowledge synthesis. Multiple Claude agents collaborating, specialised roles, parallel workstreams. The architecture works. And the post is unusually candid about where it fails. For Australian teams building past single-agent simplicity, four lessons stand out.

Lesson 1: Decomposition determines whether the system works

A multi-agent Claude system is only as good as the task decomposition underneath it. This is the part most engineering teams underestimate, and the most common reason well-funded builds produce worse outputs than a single agent would.

The research system works because research tasks decompose cleanly. A sub-query to verify a claim in one source is genuinely independent of a sub-query retrieving context from a second. Agents run in parallel without interference. When the task structure doesn't map to independent sub-tasks, because agents share state or have hidden dependencies on each other, the system produces contradictions and gaps that a single agent working with full context wouldn't generate.

The practical test: before building, draw the dependency graph. If your tasks have clean edges and each sub-task's output is genuinely independent of the others, you can parallelise. If the graph has cycles or shared state, you're looking at a coordination problem that will cost more than it saves.
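The dependency-graph test can be run on paper, but it also fits in a few lines of code. The sketch below is one way to do it, assuming you can write each sub-task down with the tasks it depends on. The task names are hypothetical, and the function name `parallel_batches` is invented for illustration. It uses `graphlib` from the Python standard library (3.9+).

```python
from graphlib import CycleError, TopologicalSorter


def parallel_batches(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches whose members can run at the same time.

    `deps` maps each task to the set of tasks it depends on.
    Raises CycleError if the graph contains a cycle.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises CycleError on a cyclic graph
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything unblocked right now
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Hypothetical research task: two independent sub-queries feed one synthesis step.
research = {
    "verify_claim_source_a": set(),
    "fetch_context_source_b": set(),
    "synthesise": {"verify_claim_source_a", "fetch_context_source_b"},
}
print(parallel_batches(research))

# Hypothetical cyclic graph: each task waits on the other.
try:
    parallel_batches({"draft": {"review"}, "review": {"draft"}})
except CycleError:
    print("cycle found: this is a coordination problem, not a parallel one")
```

A batch with more than one task is a real parallelisation opportunity. If every batch comes back with a single task, the work is a sequential pipeline, and extra agents add handoffs without adding speed. Note that this only checks the dependencies you declare; hidden shared state still has to be found by reading the workflow.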

For a mid-market Melbourne professional services firm running a multi-agent system for proposal generation, this matters immediately. Proposal sections aren't independent. Pricing depends on scope; scope depends on requirements gathered in a different section. That's a sequential pipeline problem. Treating it as a parallelisation opportunity creates inconsistent outputs at scale.

Lesson 2: Coordination isn't free

Every message between agents costs something. Context handoffs lose information. Conflict resolution between agents that diverged on a sub-task takes engineering time you didn't budget. Anthropic found this in its own system. Coordination overhead is real, and for tasks below a certain complexity threshold, it exceeds the parallelism benefit entirely.

The rule that holds: if the task fits comfortably in a single Claude context window with room to reason and call tools, you don't need multiple agents. A single well-structured agent with a clear prompt and appropriate tools will outperform a fleet simply by avoiding the coordination overhead. This isn't a failure of imagination. It's a recognition that complexity has a cost.
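As a rough illustration of "fits comfortably", the check can be written as a headroom test. This is a minimal sketch, not a measured threshold: the function name, the 50% headroom default, and the token figures in the example are all assumptions chosen for illustration, and you would need your own token estimates for the task.

```python
def fits_single_agent(task_tokens: int, context_window: int, headroom: float = 0.5) -> bool:
    """Return True if the task leaves at least `headroom` of the window free.

    `headroom` is the fraction of the context window reserved for reasoning
    and tool calls. The 0.5 default is an illustrative assumption.
    """
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in the range [0, 1)")
    return task_tokens <= context_window * (1 - headroom)


# Illustrative numbers only: a 200,000-token window and two task sizes.
print(fits_single_agent(task_tokens=60_000, context_window=200_000))   # True
print(fits_single_agent(task_tokens=150_000, context_window=200_000))  # False
```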

Multi-agent starts making sense when the work genuinely exceeds what one agent can hold. Large-scale document review across hundreds of contracts. Parallel research across disparate data sources. Processing workflows where different stages require fundamentally different prompt strategies and tool sets.

In Australian financial services, where APRA's CPS 230 requires regulated entities to manage operational risk, including in AI-assisted processes, the coordination overhead question isn't just architectural. Every handoff between agents is a potential failure point in your risk model. Design accordingly.

When multi-agent is the wrong call

Most teams considering a multi-agent Claude system don't need one yet. That's not pessimism. It's arithmetic.

Process volume is too low. If your workflow runs on fewer than a few hundred documents at a time, a single-agent pipeline with good tooling handles it without the coordination tax.

Dependencies run throughout. If every stage depends on the previous, multi-agent adds coordination cost without adding parallelism. You need a sequential pipeline, not a fleet.

The task graph isn't drawn. If the team can't yet articulate which sub-tasks are genuinely independent, the system isn't ready to build. Clarity first, architecture second.

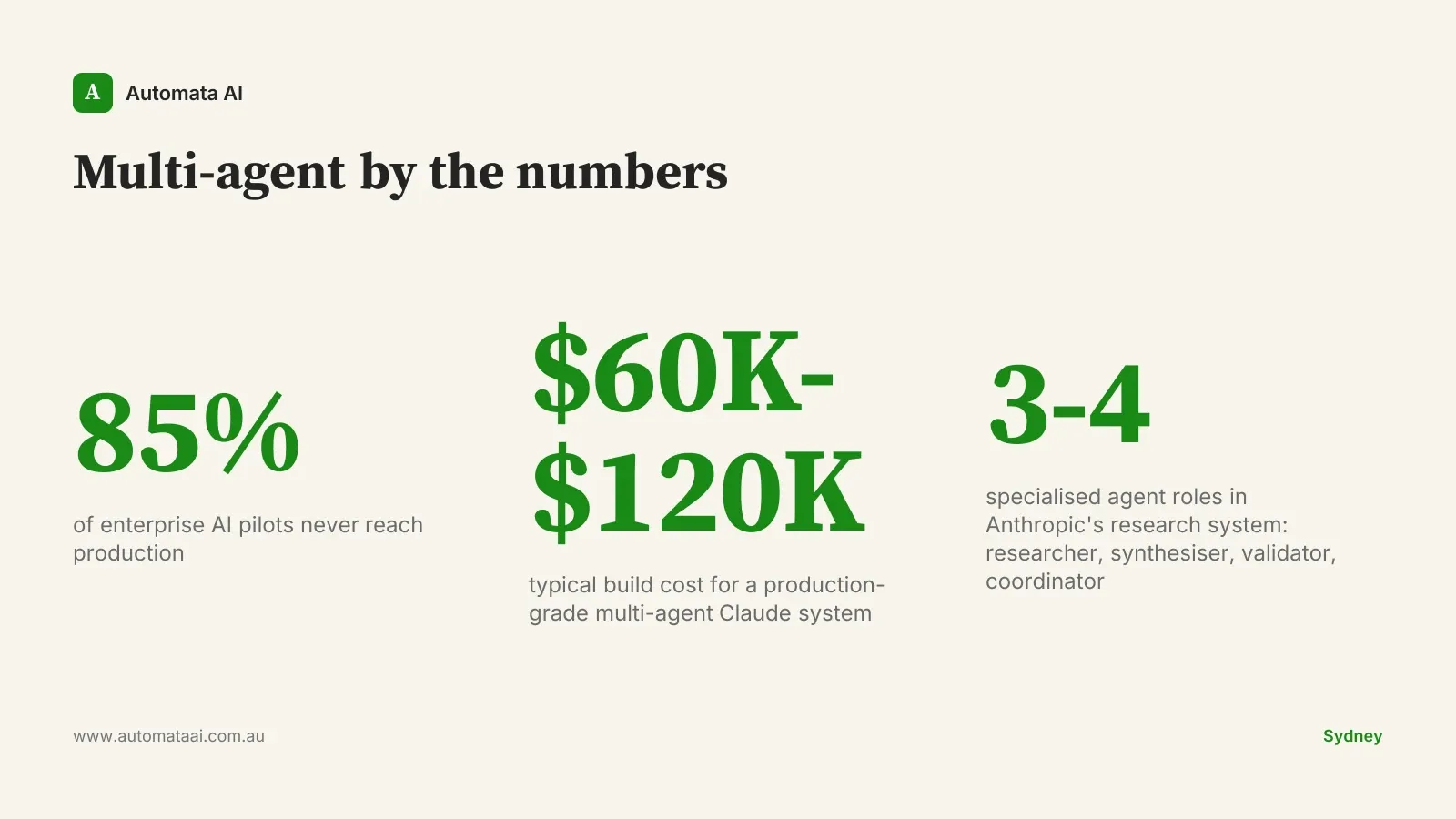

Multi-agent Claude systems cost more to build and more to maintain. A well-scoped build runs $60,000-$120,000. The failure modes in production are non-obvious and harder to debug than those of a single-agent system, because the error could originate at the coordination layer, not the model. The cases where that investment clearly pays off are large-scale parallel workloads, not workflows that could be simplified.

Lesson 3: Specialised agents beat generalists

Anthropic's research system doesn't run five identical agents on the same base prompt. It runs specialised agents with different prompts, different tool access, and different success criteria. Researcher, synthesiser, validator. Each agent does one thing well.

The counterintuitive finding: a fleet of generalist agents performs worse than a smaller set of tightly specialised agents, even when the generalist fleet has more total capacity. The tighter the prompt, the clearer the success criteria, the better the output quality. Generalist prompts introduce ambiguity that compounds across agent handoffs.

The design implication for Australian enterprise builders: resist the default. Five copies of the same agent isn't a multi-agent system. It's horizontal scaling of a single-agent prompt, with all the coordination overhead and none of the specialisation benefit. If your contract review function runs 200 hours of analyst time a month at $100/hr fully loaded, that's $240,000 a year on one process. Properly specialised agents change what's possible at that volume. Before building, name the roles. Each agent needs a job description: what it does, what it produces, what good output looks like.
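One way to make "name the roles" concrete is to write each job description down as data before any prompt exists. The sketch below is an assumption about how a team might do that, not a description of Anthropic's system: the `AgentRole` fields mirror the three questions above, and the role descriptions and tool names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    name: str
    does: str          # what it does
    produces: str      # what it produces
    good_output: str   # what good output looks like
    tools: tuple[str, ...] = ()


def find_duplicate_roles(roles: list[AgentRole]) -> list[tuple[str, str]]:
    """Return pairs of role names whose job descriptions are identical."""
    seen: dict[tuple, str] = {}
    duplicates = []
    for role in roles:
        key = (role.does, role.produces, role.good_output, role.tools)
        if key in seen:
            duplicates.append((seen[key], role.name))
        else:
            seen[key] = role.name
    return duplicates


# Hypothetical roles for a contract review workflow.
roles = [
    AgentRole("researcher", "extracts clauses from one contract",
              "a list of clauses with page references",
              "every obligation clause is found and cited", ("doc_reader",)),
    AgentRole("validator", "checks extracted clauses against the source text",
              "a pass or fail verdict per clause",
              "no misquoted clause passes", ("doc_reader",)),
    AgentRole("synthesiser", "combines validated clauses into a review",
              "a one-page risk summary",
              "a reviewer can act on it without opening the contract"),
]
print(find_duplicate_roles(roles))  # [] when every role is distinct
```

If the check returns pairs, those agents are copies of each other: horizontal scaling of one prompt, not specialisation.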

Lesson 4: Eval the system, not just the agents

Per-agent benchmarks are necessary but misleading in isolation. An agent performing at 95% accuracy on its sub-task can combine with others to produce system-level output that's substantially worse. Anthropic invested heavily in system-level evals: end-to-end measurement of research output quality, not component scores.
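A back-of-envelope calculation shows why. The sketch below makes a deliberately simple assumption: agents run in sequence, their errors are independent, and one agent's error spoils the final output. Real systems won't follow this exactly, since errors can correlate or be caught downstream, so treat the number as an illustration rather than a prediction.

```python
import math


def composed_accuracy(stage_accuracies: list[float]) -> float:
    """Chance that every stage is right, assuming independent errors."""
    return math.prod(stage_accuracies)


# Three agents in sequence, each at 95% on its own sub-task.
three_stage = composed_accuracy([0.95, 0.95, 0.95])
print(round(three_stage, 3))  # 0.857
```

Under those assumptions, three agents that each score 95% produce a correct end-to-end result about 86% of the time, which no component score would tell you.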

This is the discipline that separates production-grade multi-agent systems from demos. A demo is impressive when each agent works. A production system is successful when the composed output is reliable enough to trust at scale, consistently, across the full range of inputs it will see.

Build system-level evals from day one. The metric that matters: given the final output the system produces, how often is it good enough for the human decision it supports? A compliance analyst at a Sydney insurer reviewing policy documents doesn't care that the researcher agent hit 92% recall. They care whether the flags in the final review are accurate. Measure that. Per-agent evals are a development tool; system-level evals are the production gate.
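A system-level eval can start very small. The sketch below assumes a labelled set where an analyst has already decided whether each document should be flagged, and compares that against what the full pipeline produced. The class and function names, the sample cases, and the 0.9 thresholds are all hypothetical; the right gate depends on the decision the output supports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    case_id: str
    system_flagged: bool   # final output of the whole pipeline
    analyst_flagged: bool  # human reference decision


def system_level_report(cases: list[EvalCase]) -> dict[str, float]:
    """Score the composed output against analyst decisions."""
    tp = sum(1 for c in cases if c.system_flagged and c.analyst_flagged)
    fp = sum(1 for c in cases if c.system_flagged and not c.analyst_flagged)
    fn = sum(1 for c in cases if not c.system_flagged and c.analyst_flagged)
    agree = sum(1 for c in cases if c.system_flagged == c.analyst_flagged)
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "agreement": agree / len(cases) if cases else 0.0,
    }


def passes_gate(report: dict[str, float],
                min_precision: float = 0.9, min_recall: float = 0.9) -> bool:
    """Production gate on end-to-end flags. Thresholds are placeholders."""
    return report["precision"] >= min_precision and report["recall"] >= min_recall


# Hypothetical labelled cases.
cases = [
    EvalCase("doc-1", True, True),
    EvalCase("doc-2", True, False),
    EvalCase("doc-3", False, True),
    EvalCase("doc-4", False, False),
    EvalCase("doc-5", True, True),
]
report = system_level_report(cases)
print(report, passes_gate(report))
```

Nothing in this report mentions an individual agent. That is the point: it measures only what the analyst would see.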

The businesses that get multi-agent right in the next 18 months will share one thing: they resisted building it before the task justified it. Draw the dependency graph. Name the agent roles. Define the system-level eval before you write a line of code. Those three decisions, made before the build starts, determine whether the system reaches production or joins the 85% that don't.