The LangChain config was forty lines. The actual pipeline logic was five. The rest was framework tax: callback chains, agent executors, custom retriever classes that existed solely to satisfy the abstraction layer.

That's how a Sydney data engineering team described their abandoned agentic pipeline to us last year. Not because the use case was wrong. Because the orchestration had become the project.

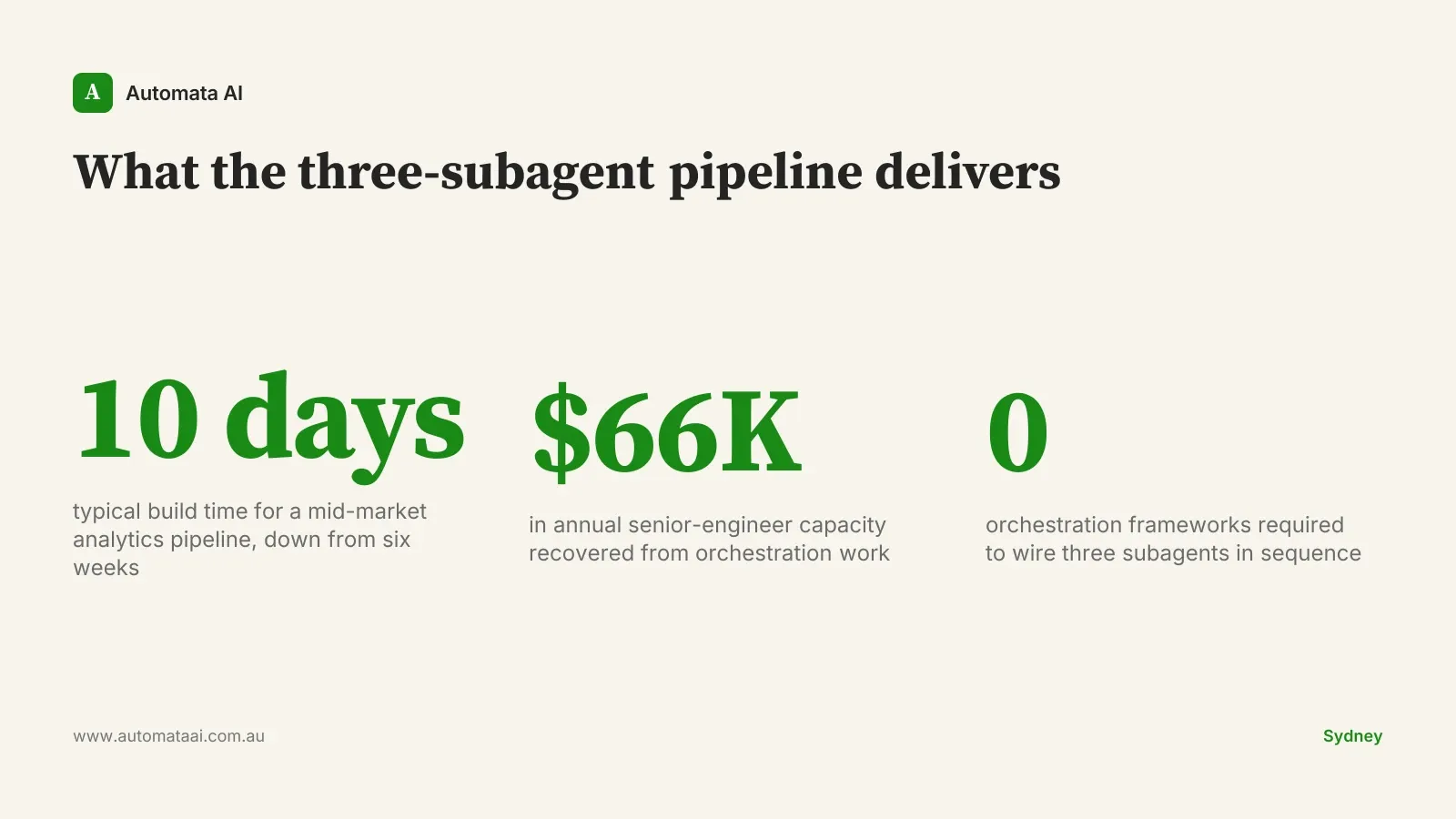

A senior data engineer in Australia costs $200,000 fully-loaded per year. If a third of that time goes to orchestration work rather than building data logic, that's $66,000 in annual capacity spent on wiring rather than thinking. You can model the recovery math for your team size in our ROI Calculator. Three minutes, AUD figures, no signup.

The framework becomes the project

LangChain was designed for generality. It can wrap any model, any vector store, any retrieval pattern. That flexibility is also what makes it expensive to operate. Every time a model API changes, every time your data schema shifts, every time a new engineer joins the team, someone has to re-learn the framework's mental model before they can touch the pipeline.

Claude Code subagents take the opposite approach. Each subagent is a scoped Claude session with a defined input, a defined output, and specific tool access. There is no persistent memory, no complex routing logic, no dependency graph to maintain. There is a shell script that passes outputs between sessions. The complexity ceiling is the engineer's understanding of the problem, not the framework's abstraction model.

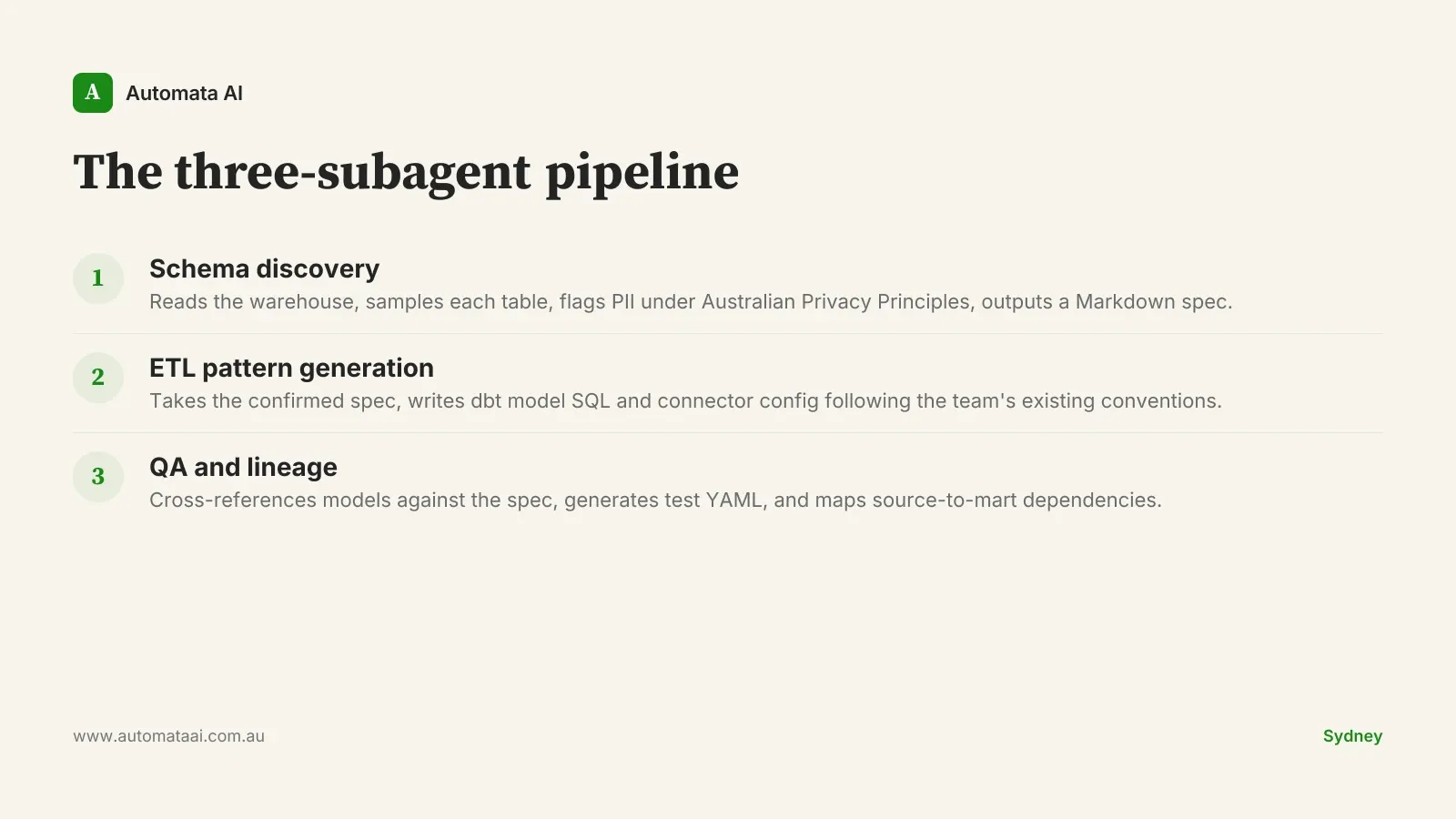

The three-subagent pipeline

The pattern that works reliably for Australian mid-market data teams is three subagents in sequence. These are typically teams running Redshift or BigQuery against a Salesforce or NetSuite source, with one or two senior engineers managing a backlog of pipeline requests. Each subagent does one thing and hands off to the next. If you need this running in production rather than just described, our AI Automation Services covers implementation end-to-end.

Subagent 1: Schema discovery

The first subagent connects to the source warehouse, samples every table, and produces a Markdown specification. Column types, nullability, primary keys, inferred relationships based on naming conventions. It also flags any column that looks like personal information under the Australian Privacy Principles: names, emails, dates of birth, tax file numbers. The engineer reviews the flags before anything else runs.

Markdown spec per table. Column descriptions, type inference, and inferred relationships from naming conventions.

Relationship diagram. Suggested joins based on key naming patterns across the warehouse.

PII flag list. Columns that require Privacy Act review before data leaves the warehouse.

The senior engineer reviews the spec and corrects wrong inferences. This takes fifteen minutes, not two days. The corrected spec becomes the single source of truth for the rest of the pipeline. Everything downstream inherits from it.

Subagent 2: ETL pattern generation

The ETL subagent reads the confirmed schema spec and generates dbt model SQL along with Airbyte connector configuration. It has read access to the team's existing dbt project, so it follows the same naming conventions and uses macros the team already wrote rather than reinventing them. What it cannot do is run migrations, connect to production, or make deployment decisions. It writes code. The engineer reviews and runs it. That boundary is deliberate.

Subagent 3: QA and lineage

The QA subagent reads the generated dbt models, cross-references them against the schema spec, and produces a test plan. It generates a dbt schema.yml file with null checks, uniqueness constraints, and referential integrity tests. For business logic invariants, the engineer specifies them in plain English and the subagent translates to test SQL.

schema.yml ready to drop in. Null checks, uniqueness, and referential integrity tests generated from the spec.

Lineage diagram. Source tables to final mart models, every dependency mapped.

Coverage gap list. Every model without a corresponding test, flagged for the engineer to address.

Composition is just a script

There is no orchestration layer. The composition is a shell script. Run the schema subagent, write its output to a file, pass that file to the ETL subagent, pass the ETL output to the QA subagent. Bash handles the sequencing. The dbt CLI handles execution. The engineer handles review. No LangGraph, no orchestrator config, no DAG definition file to maintain.

Australian data teams that have adopted this pattern typically cut new pipeline build time from six weeks to around ten days for a standard mid-market workload: a Salesforce-to-Redshift integration with three to five marts. The time absorbed by orchestration now goes to the parts that require judgment: business logic review, performance tuning, and stakeholder alignment on metric definitions.

When this pattern doesn't fit

Three subagents in sequence is not a universal solution. This pattern works when the pipeline is well-defined, the schema is stable, and the engineer can review outputs before each stage runs. It breaks in at least three situations.

The schema changes daily. If your source system releases schema updates on an unpredictable cadence, the spec the first subagent produces is stale before the ETL subagent reads it. You need a review gate before each run, which negates most of the speed benefit.

The business logic is contested. If finance and operations disagree on how revenue is defined, no subagent resolves that. The subagent picks one interpretation and generates confident SQL around it. That confidence becomes a liability.

The data volume is too small. Three tables updated weekly does not justify the setup cost. The pattern earns its keep at roughly five or more source systems with ongoing schema evolution.

If your situation fits one of these, the pattern can still help in parts. Use the schema-discovery subagent alone to document an unfamiliar source system. Run the QA subagent on models a junior engineer wrote. Extract value from the pieces that fit rather than treating it as all-or-nothing.

What changes for the senior engineer

The shift is not from engineer to reviewer. A senior engineer capable of setting up this pipeline and evaluating its outputs is exactly the engineer who understood the domain well enough to build it manually. The subagents don't replace that judgment. They redirect it.

That $66,000 in orchestration capacity reallocates to work that's harder to hand off: negotiating metric definitions with stakeholders, stress-testing assumptions under real query load, deciding which business logic belongs in the warehouse and which belongs in the BI layer. Those decisions determine whether the pipeline is trusted. No subagent makes them for you.

The mid-market analytics teams that get most value from this pattern are those with one or two senior engineers, stable source systems, and a backlog of pipeline work they cannot staff through. If that's your situation, start with a clear-eyed look at where your current build time actually goes. Our AI Readiness Assessment works through that diagnostic in a structured session.