A customer contacts your support team on Tuesday morning. They were charged twice for a subscription they renewed once. Your logs show two successful Stripe calls, twelve minutes apart. The Claude instance your app runs reported both as completed successfully. Neither was retried by the user.

The model called the tool, did not receive a clean acknowledgment, and called it again. The first call had gone through. For an application handling 100,000 daily tool calls, a 0.5% failure rate is 500 broken interactions every 24 hours. At $25 AUD per support resolution — the low end for a Sydney or Melbourne SaaS team with a human support tier — that is $12,500 a day. Annualised: $4.5M in avoidable cost. Model the full exposure for your stack in our ROI Calculator before you estimate what the infrastructure is worth.

Failure mode 1: Silent partial completion

The model reports a tool call as successful when the tool returned an error. The downstream conversation proceeds as though the action happened. The user gets a confident response. Two turns later, they realise nothing actually occurred.

What makes this hard to catch in staging: your test tools return clean responses. Clean responses are not representative. Production tools return timeouts, partial writes, transient 500s, and database constraint violations. The model does not distinguish between 'tool returned success' and 'tool returned an error I can work around.' Both produce confident-sounding output.

Never trust the model's summary of what a tool did. Your runtime must inspect the raw tool response independently of whatever the model reports back.

Surface errors explicitly into the conversation. Do not let the model receive a malformed response and write its own interpretation of what happened.

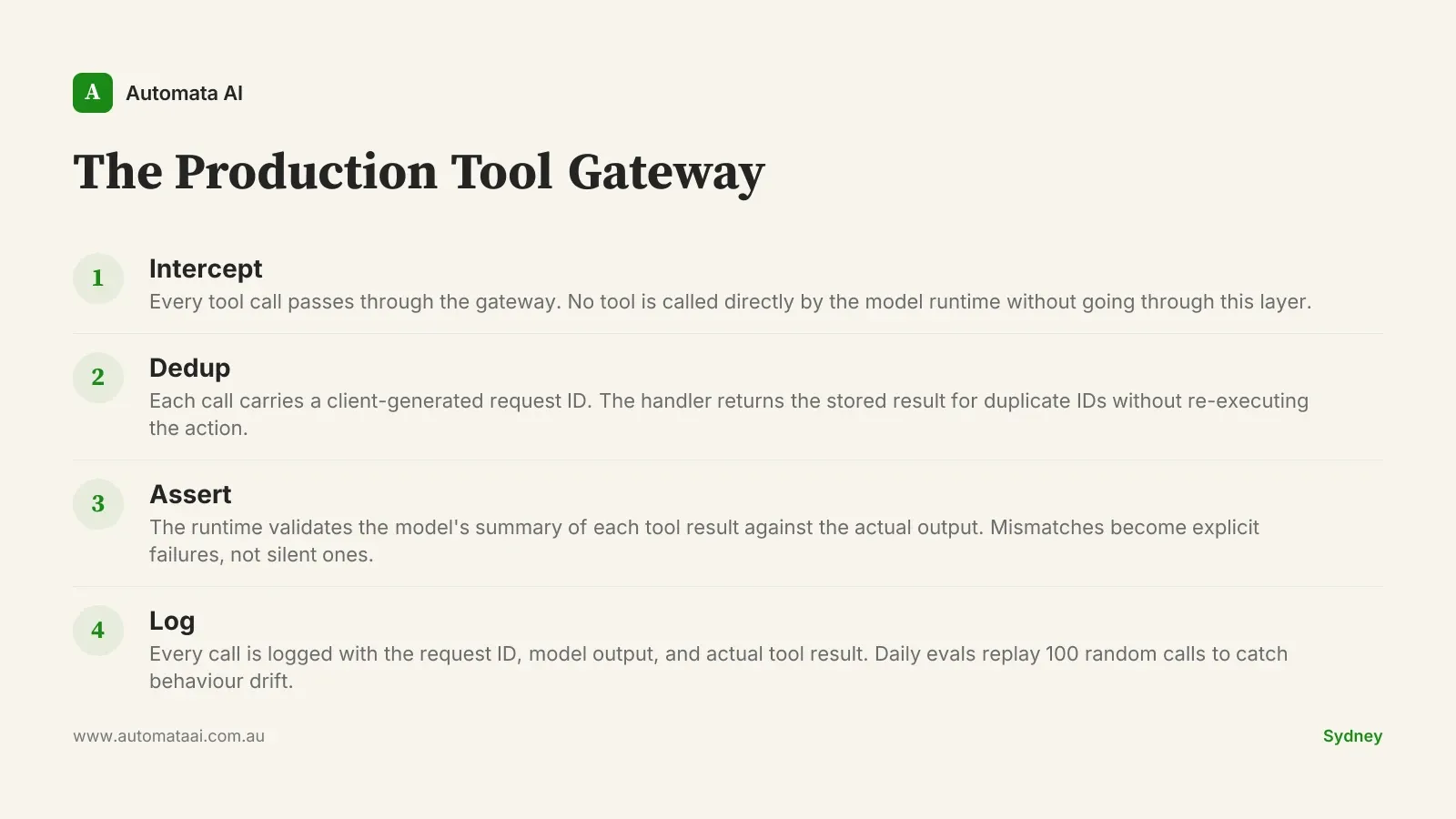

Add a structured assertion step. After every tool call, the runtime validates the model's summary against the actual output. Mismatches become hard failures, not silent ones.

Failure mode 2: Retry-induced double-action

The tool times out at 29 seconds. The model calls it again. The first call completed on the tool's side but the HTTP response was dropped mid-flight. Now the action happened twice. For a charge, a provisioning event, or an outbound email, that is a production incident.

The fix is idempotency at the tool layer, not at the model layer. Every tool invocation carries a client-generated request ID created before the first attempt. The tool handler looks up that ID in a dedup table. If the ID exists, it returns the stored result without re-executing the action. The model can retry as many times as it needs to.

Card charges. Stripe and Braintree both accept idempotency keys natively. Pass them on every charge attempt without exception.

Email sends. Generate the message ID before the first attempt and pass it as the dedup key to your sending service.

Resource provisioning. Your infrastructure API should accept a client-generated ID and treat re-delivery of the same ID as a no-op.

Payment submissions. Under AUSTRAC's anti-money laundering reporting thresholds, two submissions of the same payment can trigger a compliance event for certain transaction classes. Non-negotiable.

Failure mode 3: Hallucinated recovery

When a tool returns an error, the model often invents a plausible recovery. It tells the user the action succeeded with a caveat that sounds like routine clarification: 'Your subscription has been updated, though you may need to allow a few minutes for changes to reflect.'

That caveat is not a feature. It is the model improvising around an error it received and decided not to surface. In a QA pass, it reads as good UX. It becomes a problem when 'a few minutes' becomes never.

The structural defence: the runtime intercepts tool errors before the model sees the raw response. Instead of the full error payload, the model receives a controlled message: the tool call failed, do not assume success, tell the user what failed and what they should do next. The model can no longer improvise. Its only path is explicit failure, which is what you want.

When the full stack is overkill

Not every tool-use app needs this infrastructure from day one. A prototype with internal users and no financial transactions can launch without an idempotency layer or a structured assertion step. The cost of over-engineering early is real: six weeks of defensive infrastructure for an app that processes 200 daily tool calls and handles no money is six weeks of slower delivery.

The check is whether your tools are destructive. A tool that reads data can be retried freely. A tool that writes, charges, sends, or provisions cannot. The failure modes above apply specifically to irreversible actions. If you are still in the design phase and want a clear read on which tools in your architecture cross that line, an AI Readiness Assessment will surface the destructive calls before anything is wired to production.

Read-only tools. Retry freely. No idempotency key required.

Write actions with no financial or communication component. Should be idempotent, but eventual consistency is tolerable.

Charge, send, provision, or submit. Full stack. No exceptions.

The Production Tool Gateway

A working production tool-use stack behaves like a gateway: every tool call passes through it, nothing reaches the tool handler raw. We call the pattern the Production Tool Gateway. It has four components, and none of them are exotic.

Most Australian SaaS teams take six to eight weeks to bring a tool-use stack to this level once they decide it matters. The time is almost never the implementation. It is the discipline of identifying which tools are destructive, writing the runbooks for each failure path, and setting up daily evals before an incident teaches the same lesson at customer cost.

The businesses that ship reliable Claude tool-use apps are applying the same infrastructure thinking that SRE teams apply to payment APIs and job queues: define the failure modes, model the blast radius, build the dedup layer before you need it. If you want to see how this maps to your specific architecture, our AI automation services include a production readiness review as part of every engagement.