A 300-page insurance policy lands in your inbox. Someone needs it compared against last year's version, clause by clause, before Thursday.

Structured correctly for Claude, that's a one-hour task. Unstructured, it's noise. The 1 million token context window removes the length constraint. It does not remove the requirement to write a good prompt.

What 1M tokens means for document work

Around 750,000 words. A 50-page commercial lease, a 200-page product disclosure statement, a 600-page regulatory submission. All fit comfortably within a single context window. The bottleneck has shifted from capacity to structure. Australian financial services firms and insurance underwriters in particular face document volumes where this matters: a single APRA CPS 230 compliance review can span dozens of policies and supporting contracts. The question is no longer whether Claude can hold the document. It's whether you've set up the prompt so it knows what to do with it.

One number matters alongside the context size: prompt caching drops Claude's input token cost by roughly 90% on repeated reads of the same document. Load a 300-page policy once, query it five times across different extraction tasks, and you pay near-full price on the first load only. For workflows involving document monitoring (policy renewals, quarterly regulatory reporting, contract variation tracking), that compresses the per-task cost to cents on subsequent runs. It's the pricing mechanic that makes high-frequency document pipelines economically sensible.

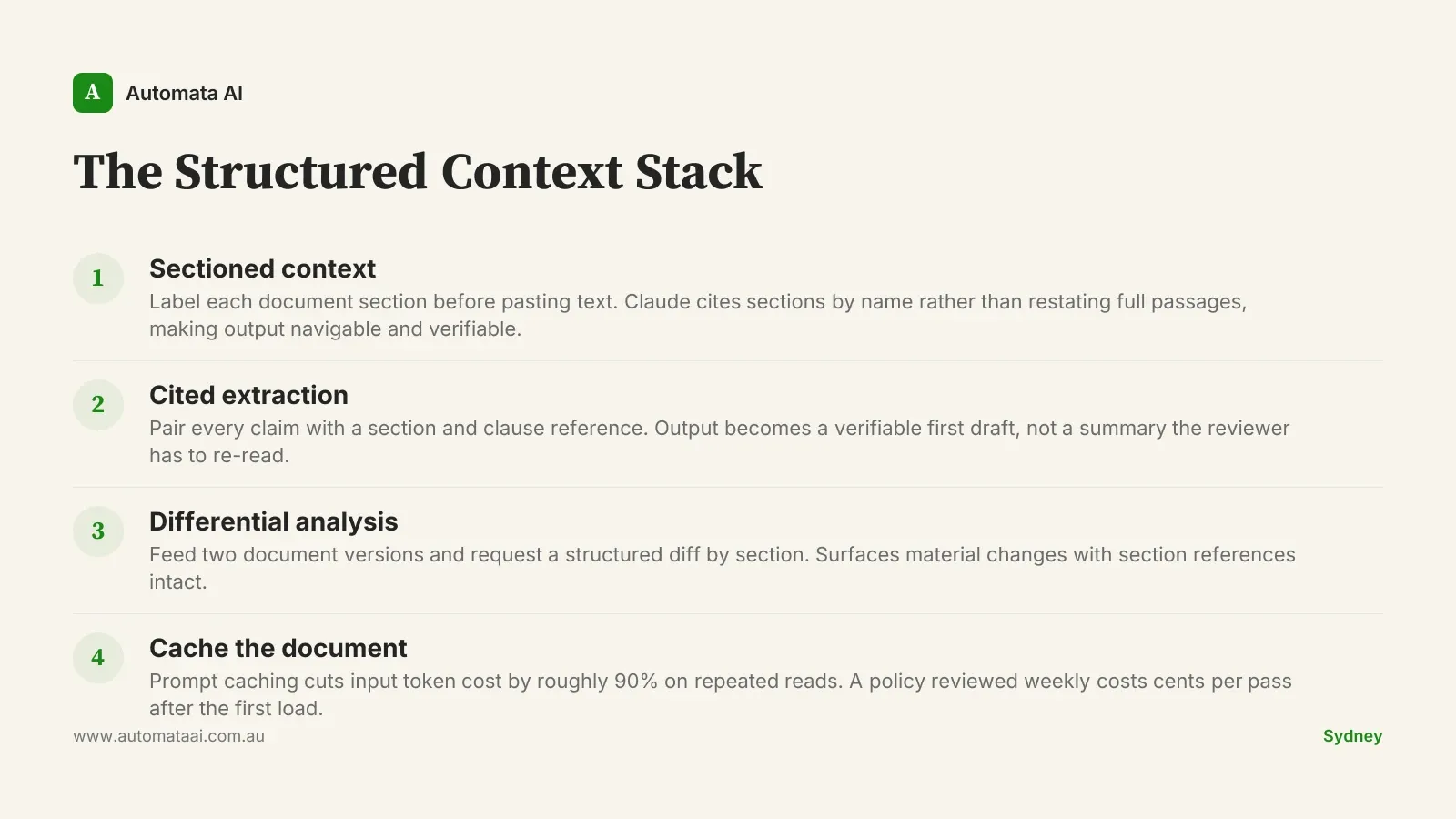

The Structured Context Stack: four patterns that work

We call these four patterns the Structured Context Stack. Together they define the difference between a Claude pipeline that earns its place and one that burns budget without a clear output.

Sectioned context. Label each document section before pasting the text. Claude then cites sections by name in responses rather than restating full passages, making the output navigable and verifiable by the reviewer.

Cited extraction. Pair every claim with a section and clause reference. This is the discipline that turns output from 'interesting summary' into 'verifiable first draft'. A reviewer spending 45 minutes checking an unanchored summary is barely better off than doing the original read themselves.

Differential analysis. Feed two document versions, such as last year's policy against this year's or a redlined contract against the original, and ask for a structured diff by section. This pattern surfaces material changes with section references intact and scales to document lengths that would take a junior reviewer a full week.

Cache the document. With prompt caching enabled, the same document costs roughly 90% less on second and subsequent reads. A policy reviewed weekly for monitoring purposes costs a fraction of what weekly plain-text prompting would run. Pair this with the differential pattern and per-review cost drops to cents.

The cost case

A Sydney law firm running differential analysis on a 300-page contract pays roughly $4 per pass without caching, around $0.45 per pass with caching enabled. The same task billed at a junior solicitor's time, around $100 per hour fully loaded, runs to roughly $1,200 for a standard two-day review. The cost differential is not incremental. It is a different category. That said, the category shift is only available if the prompt is structured correctly. An unstructured pass at $4 still produces $4 of noise.

That gap is the argument. Not the raw dollar figure but what it means in practice: one experienced solicitor reviewing four matters a day instead of one. A compliance team clearing a review backlog in two days instead of two weeks. A renewal team running line-by-line diffs on 20 policies in the time it previously took to do two. The tooling doesn't reduce headcount — it multiplies output per head. That's the argument that holds up in budget conversations.

The numbers shift based on document volume, solicitor rates, and review frequency. To model the payback for your specific workflow, the ROI Calculator gives you AUD figures in under three minutes.

When the context window isn't enough

A 1M-token prompt with no structure is a bad prompt. A 200-page product disclosure statement fed as a plain-text blob without section markers is technically readable by Claude, but the response quality is materially worse than the same document with clear part labels, numbered clauses, and a defined extraction task. The model doesn't compensate for disorganised input. It amplifies whatever structure is already present. If your documents come out of a system that produces clean, structured exports, you're ahead. If they're scanned PDFs or formatted-for-print Word documents, you need a pre-processing step first. Getting that step right is often where the real engineering work lives.

There's also a harder limit. Claude can extract, compare, and flag. It cannot give legal advice. Any pipeline that implicitly positions Claude output as legal advice creates liability under Australian Privacy Act (1988) obligations and, for regulated entities, under the professional responsibility rules that govern Australian legal practice. The human sign-off step isn't optional. It isn't a nicety. It's the design.

The document has no internal structure. Scanned PDFs, poorly OCR'd filings, and documents formatted for print rather than parsing all need a pre-processing step before any prompt makes sense.

The task is undefined. 'Summarise this policy' is not a task. 'List every exclusion clause, with section reference, that applies to business interruption claims' is.

The volume is too low. If your team reviews one contract a quarter, the infrastructure cost exceeds the time saved. The pattern works for high-frequency document workflows, not one-off reviews.

What a well-built pipeline looks like

The components are not exotic: a structured document loader, a caching layer, a task template that specifies extraction format and citation requirements, and a human review step before any output moves to a client file or regulatory filing. Building that correctly takes weeks, not months. Our AI Automation Services cover Discovery engagements that start exactly here — one document type, one defined extraction task, a working pipeline at the end. The Discovery scope is typically two weeks and includes the caching configuration and the task template, not just a proof of concept.

Pick one document type your team reviews repeatedly. Quantify the hours. Model the caching discount against your current review cost. If the payback is under three months, and for most high-frequency document workflows it is, the business case almost writes itself.