The data exists. It has existed since before most of your analysts were born. A 30-year-old AS/400 holds inventory records that are more accurate than anything in your modern ERP, because the people who entered the data cared about it. The problem is not the data. The problem is that querying it requires a green-screen terminal, a specific login, and usually a call to one of three people in the building who still know the command structure.

An MCP server changes that equation. Not by replacing the legacy system, which costs millions and takes years. By wrapping it: a thin layer that reads what Claude needs, translates it into clean structured data, and hands it back to the agent without exposing a single EBCDIC field name.

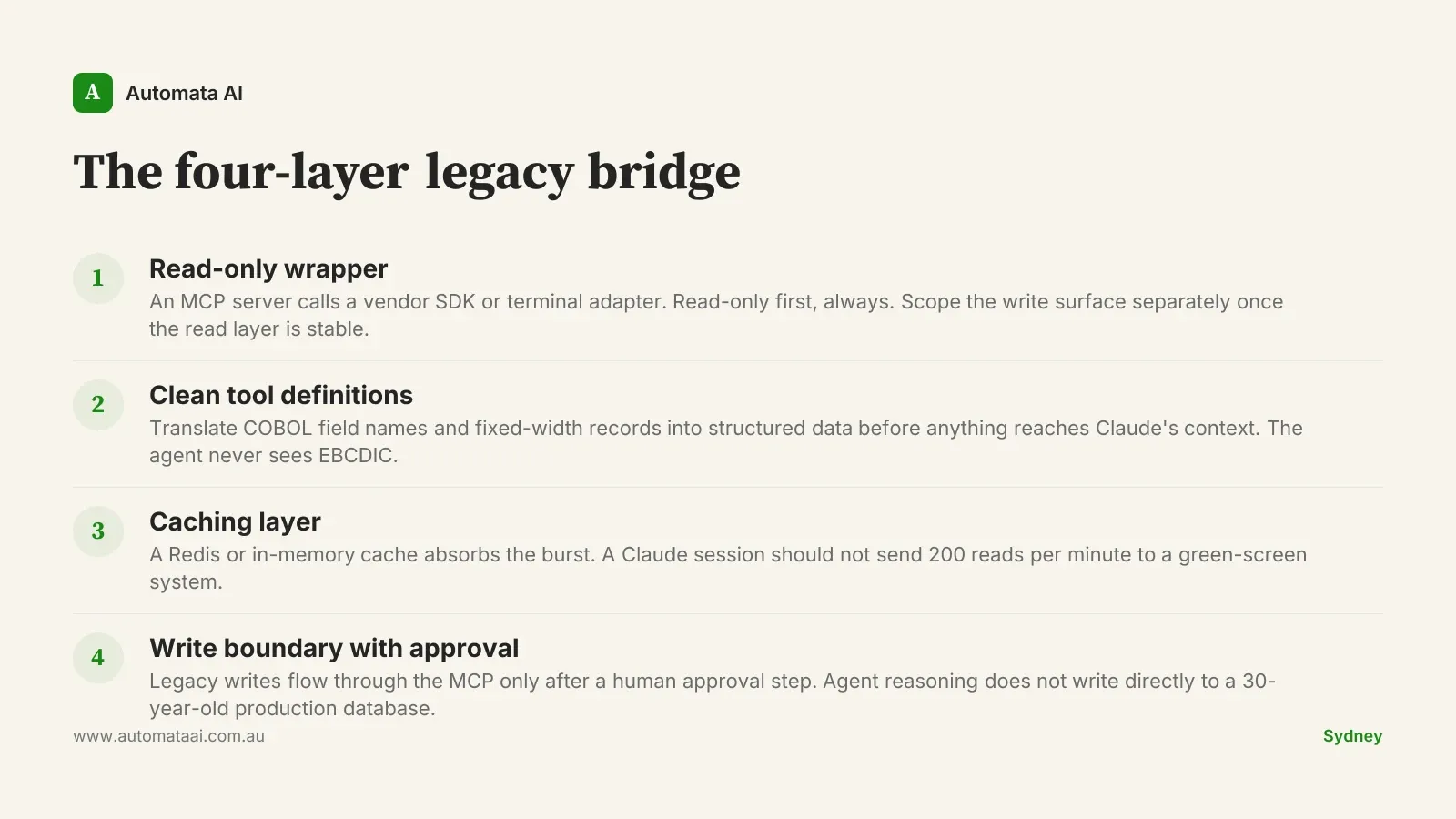

The four-layer legacy bridge

The architecture is not complicated. Four layers, in order:

Read-only wrapper. An MCP server that calls a vendor SDK or terminal adapter. Read-only first. The write surface comes later, once the read layer has proven stable.

Clean tool definitions. The agent never sees raw field names or fixed-width record formats. Tool definitions translate COBOL field names like INVQTY into inventory_quantity_on_hand before anything reaches Claude's context.

A caching layer. A Claude session can make 200 tool calls in a minute. That should not translate to 200 reads on a green-screen. A Redis or in-memory cache in front of the legacy system absorbs the burst.

A write boundary. When writes are eventually needed, they flow through the MCP only after a human approval step. Agent reasoning does not write directly to a 30-year-old production database.

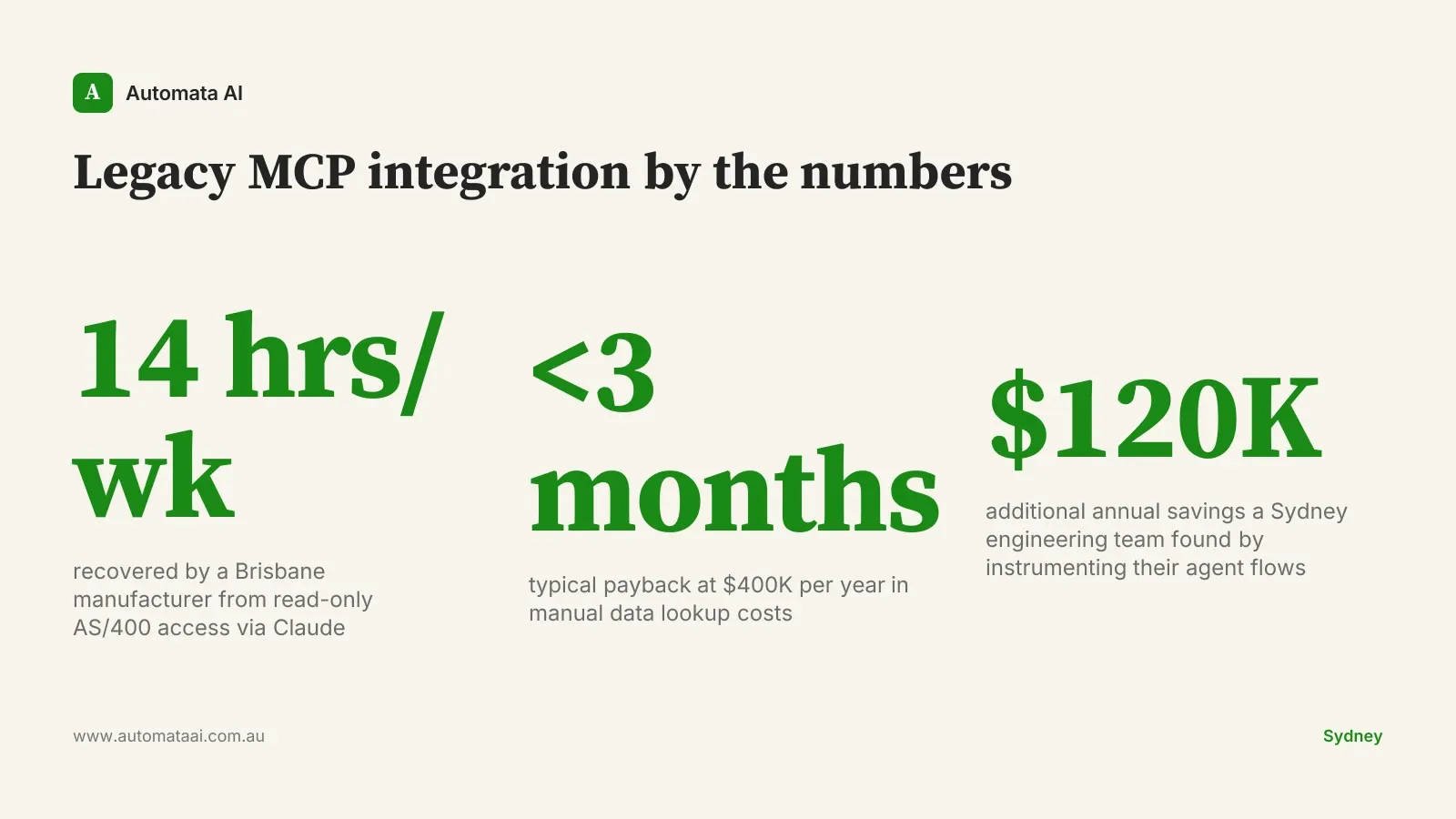

A Brisbane manufacturing firm did exactly this with their AS/400 inventory system. The MCP server itself is around 600 lines of code. The auth work, network path, and credential management came to nearly 1,800 lines. Three times the MCP itself. The firm recovered around 14 hours a week of manual reporting work: inventory queries that used to require a phone call to one of two people who knew the terminal commands, now answered by Claude in under 30 seconds.

The economics of an MCP integration

A typical Australian mid-market firm running on a 1990s ERP loses around $400,000 a year in operations cost from manual data lookups. That number is not hard to build: 10 people spending 90 minutes a day on queries they could not run themselves, at $85 per hour fully loaded. Most firms are conservative when they first model it.

The build lands in around six weeks for an experienced team, at a cost typically in the $60,000-$90,000 range for a mid-market engagement. At $400,000 in recovered time, payback is under three months. You can model your own numbers using our ROI Calculator — it runs on AUD figures and takes about three minutes.

When not to build an MCP

Not every legacy integration justifies an MCP build. Three situations where the economics do not work:

Query volume is too low. If the system is queried a dozen times a week, the engineering cost will not recover in any reasonable timeframe. A scheduled export job is usually cheaper.

No stable network path. Some legacy systems run on isolated networks with no route to a modern application server. Without an authenticated connection, the MCP has nothing to stand on.

A platform migration is within 12 months. If the business is replacing the legacy system soon, an MCP build adds cost with a short window to recover it. Scope accordingly or wait.

The case that does not get made often enough: sometimes the right answer is a nightly CSV export and a file-based tool definition in Claude. If the data only needs to be accurate to yesterday, and the query volume is modest, an MCP is overengineering. The build timeline has an opportunity cost. Prove the use case with the simpler approach first.

The real work is not the code

Every team that has done a legacy MCP integration reports the same thing. The MCP code is not hard. Anyone with solid Python or TypeScript skills and familiarity with the Model Context Protocol can get a read-only wrapper running in a week. The hard part is the network path, the credentials, and the people.

Legacy systems are run by small teams who have spent years keeping them stable. A new dependency that makes hundreds of read requests per session looks, from their side, like a risk they did not agree to carry. The practical play is to get one person from that team in a room for 30 minutes, walk through the read-only scope of the MCP, and show what happens on failure. Once they see that a failed MCP call returns an error to Claude rather than triggering a retry storm on their system, the conversation shifts. Start with read-only, prove value over a quarter, then negotiate the write surface. Our AI Readiness Assessment can help you map the credential and network requirements before committing to a full build.

Measuring the integration from week one

The pattern only holds if you measure it. Three numbers on a shared dashboard from the first week:

Time-to-first-result. How long from query to answer on the agent flow, versus the manual baseline. This is the headline number for finance.

Cost-per-task in tokens. This catches runaway agent loops early, before they become a budget problem.

Acceptance rate. How often the user accepts the agent's output without major edits. Below 70%, something is wrong with the tool definitions or the underlying data quality.

A Sydney engineering platform team running this discipline found that the dashboard itself drove around $120,000 a year of additional savings. Two agent flows were burning 40 percent of the token budget while delivering 10 percent of the output. Cutting them was a one-day decision once the data was visible.

Treat the agent surface like a product

The teams that get compounding value from legacy MCP integrations run a quarterly review: which agent flows earned their keep, which did not, which legacy surfaces are worth exposing next. That review treats the integration like a product, not a project. Patterns that compound stay. Patterns that cost more than they return get retired. Our AI Automation Services include that quarterly review as part of the ongoing engagement.

The technology is not the bottleneck. The credentials and the change management are. If you have a legacy system holding data your team queries manually every week, start by mapping the network path, identifying the credential holder, and beginning the conversation with the team that runs the system. The MCP code can wait until those three things are answered.