Six months ago a Sydney mid-market SaaS team shipped their fifth MCP connector from scratch. Same OAuth2 problem as connectors one through four. Same custom field drift. Same three-week debugging cycle when the agent found a write operation they had not meant to expose. Around $45,000 of engineering time, and they were back at the same whiteboard.

Australian teams building on Salesforce, HubSpot, Xero, and ServiceNow are rebuilding the same connector structure on every project. The connector they shipped eight months ago is not reused. It is rewritten from scratch, because the team remembered the outcome but not the structure, and because the reference pattern was never written down. The shape that ends the rebuild cycle has four parts.

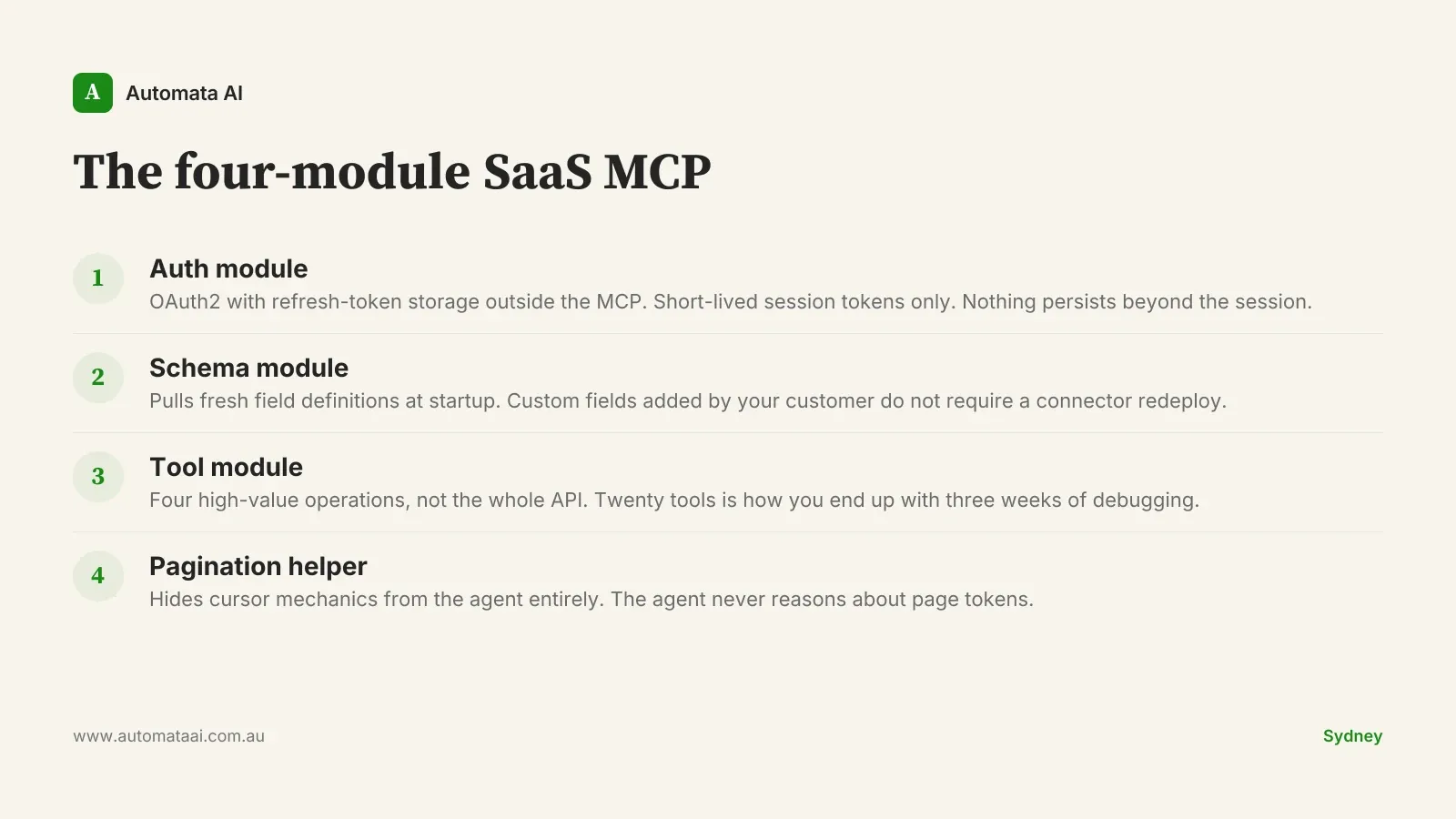

The four-module SaaS MCP

The pattern has four parts. Not three. Not twelve. Every element exists to solve a specific failure mode that shows up in production, not in the demo.

Auth module. Handles OAuth2 with refresh-token storage outside the MCP process. Customer credentials live in your secret store. The MCP fetches a short-lived token at the start of each session, uses it for the session's duration, and fetches a replacement if it expires mid-session.

Schema module. Pulls fresh field definitions from the SaaS at startup. A custom field added by your customer should not require a connector redeploy. This matters more than it sounds: Australian mid-market SaaS customers add custom fields constantly, and schema drift is a silent connector killer.

Tool module. Exposes a small number of high-value operations, not the entire SaaS API. Four tools is the typical ceiling. Teams that expose the whole API end up with twenty tools and three weeks of debugging.

Pagination helper. Hides cursor mechanics from the agent entirely. When the agent has to reason about page tokens, you get inconsistent traversal and phantom results. The helper handles it; the agent never sees it.
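A minimal sketch of that helper, assuming a cursor-style API. The page shape (`items`, `next_cursor`), the `fetch_page` callable, and the `max_items` cap are illustrative assumptions, not any vendor's actual contract.

```python
from typing import Any, Callable, Iterator, Optional

# Hypothetical page shape: {"items": [...], "next_cursor": "<token>" or None}
Page = dict[str, Any]
FetchPage = Callable[[Optional[str]], Page]


def iterate_all(fetch_page: FetchPage, max_items: int = 500) -> Iterator[Any]:
    """Yield records across every page; the caller never handles a cursor."""
    cursor: Optional[str] = None
    seen_cursors: set[str] = set()
    yielded = 0
    while True:
        page = fetch_page(cursor)
        for item in page.get("items", []):
            if yielded >= max_items:
                return  # hard cap so one tool call cannot walk an entire table
            yield item
            yielded += 1
        cursor = page.get("next_cursor")
        # Stop on the last page, or if the API repeats a cursor we already used.
        if cursor is None or cursor in seen_cursors:
            return
        seen_cursors.add(cursor)


def fake_fetch(cursor: Optional[str]) -> Page:
    """Stand-in for a real SaaS list endpoint, used only for this example."""
    pages = {
        None: {"items": [1, 2], "next_cursor": "a"},
        "a": {"items": [3, 4], "next_cursor": "b"},
        "b": {"items": [5], "next_cursor": None},
    }
    return pages[cursor]


all_items = list(iterate_all(fake_fetch))
first_three = list(iterate_all(fake_fetch, max_items=3))
```

The tool handler would call `iterate_all` and return plain records, so page tokens never reach the agent's context. The repeated-cursor check is a guard against looping traversal.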

Teams that build to this shape land at around 1,200 lines of code, four tools, and five days of work. Teams that try to expose the whole API land at around 4,000 lines, twenty tools, and three weeks of debugging, and then usually rebuild.
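The schema module described above can be sketched in a few lines. This is an illustration under assumptions: `describe` stands in for whatever metadata endpoint your SaaS offers, and the field dictionary keys (`name`, `type`, `custom`) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class FieldDef:
    name: str
    type: str
    custom: bool


class SchemaCache:
    """Holds field definitions pulled from the SaaS when the connector starts."""

    def __init__(self, describe: Callable[[str], list[dict]]):
        # `describe` is a hypothetical callable wrapping the vendor's metadata API.
        self._describe = describe
        self._fields: dict[str, dict[str, FieldDef]] = {}

    def load(self, object_name: str) -> None:
        """Fetch the current field list; call at startup, not at build time."""
        raw = self._describe(object_name)
        self._fields[object_name] = {
            f["name"]: FieldDef(f["name"], f["type"], bool(f.get("custom", False)))
            for f in raw
        }

    def unknown_fields(self, object_name: str, record: dict) -> list[str]:
        """Return keys in `record` that the live schema does not define."""
        known = self._fields.get(object_name, {})
        return [key for key in record if key not in known]


def fake_describe(object_name: str) -> list[dict]:
    """Stand-in metadata response, including one customer-added field."""
    return [
        {"name": "amount", "type": "currency"},
        {"name": "region_code", "type": "string", "custom": True},
    ]


cache = SchemaCache(fake_describe)
cache.load("Deal")
drift = cache.unknown_fields("Deal", {"amount": 10, "legacy_flag": True})
```

Because the field list is loaded at startup, a custom field such as `region_code` is picked up on the next session rather than the next deploy.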

What to expose, and what to hide

Read operations are almost always safe to expose. Internal-state writes (updating a deal stage, marking a ticket resolved) are usually fine with appropriate logging. Write operations that cost money or send customer-visible communications require deliberate handling.

The mistake is letting the agent find dangerous writes by accident. Surface them as a single explicit tool with a name that signals the consequence: send_invoice, not create_document. Pair it with a confirmation pattern the agent has to traverse before triggering. A tool that silently emails a customer when certain conditions are met is not a tool. It is a liability waiting to be discovered in production. Australian teams routinely discover this six weeks into a deployment, usually via a customer complaint.
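One way to build that confirmation pattern is a two-step tool: a prepare call that returns a single-use token and a plain-language warning, and a confirm call that refuses to act without the token. The sketch below assumes hypothetical tool names (`prepare_send_invoice`, `confirm_send_invoice`) and leaves the real send call as a comment.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class SendInvoiceTool:
    """Two-step tool: prepare returns a token, confirm requires that token."""

    pending: dict[str, str] = field(default_factory=dict)
    sent: list[str] = field(default_factory=list)

    def prepare_send_invoice(self, invoice_id: str) -> dict:
        token = secrets.token_hex(8)
        self.pending[token] = invoice_id
        return {
            "confirmation_token": token,
            "warning": f"Confirming will email invoice {invoice_id} to the customer.",
        }

    def confirm_send_invoice(self, confirmation_token: str) -> dict:
        # pop() makes each token single-use, so a replayed call cannot send twice.
        invoice_id = self.pending.pop(confirmation_token, None)
        if invoice_id is None:
            return {"status": "rejected", "reason": "unknown or already used token"}
        # The real call to the SaaS send endpoint would go here.
        self.sent.append(invoice_id)
        return {"status": "sent", "invoice_id": invoice_id}


tool = SendInvoiceTool()
prepared = tool.prepare_send_invoice("INV-1001")
result = tool.confirm_send_invoice(prepared["confirmation_token"])
```

The warning text is returned to the agent, so the consequence is stated in the conversation before anything reaches a customer.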

The auth pitfall

The most common failure mode in Australian SaaS connectors is credentials stored in the wrong place.

Customer credentials belong in your secret store. The connector fetches a short-lived token at session start and uses that token for the session's duration. When it expires, it fetches another. The MCP itself holds nothing that persists beyond the session.
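A minimal sketch of that session-scoped token handling. It assumes a hypothetical `mint_token` callable that sits on the secret-store side, performs the refresh-token exchange there, and hands back only a short-lived access token. The 30-second skew is also an assumption.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class AccessToken:
    value: str
    expires_at: float  # epoch seconds


class SessionAuth:
    """Keeps only a short-lived access token in memory, never the refresh token."""

    def __init__(
        self,
        mint_token: Callable[[], AccessToken],
        skew_seconds: float = 30.0,
        clock: Callable[[], float] = time.time,
    ):
        # `mint_token` is hypothetical: it wraps the call to your secret store
        # or token broker, which owns the refresh token.
        self._mint_token = mint_token
        self._skew = skew_seconds
        self._clock = clock
        self._current: Optional[AccessToken] = None

    def token(self) -> str:
        """Return a valid token, fetching a new one at start or near expiry."""
        now = self._clock()
        if self._current is None or now >= self._current.expires_at - self._skew:
            self._current = self._mint_token()
        return self._current.value
```

Each outbound SaaS call asks `SessionAuth.token()` for a value instead of caching one itself. When the session object is dropped, nothing durable remains in the MCP process.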

If your auth layer requires a redeploy to rotate keys, fix that before building the rest. Service accounts with long-lived tokens and no rotation policy are a meaningful attack surface, and the data breach notification obligations under the Privacy Act 1988 make that exposure expensive. One disgruntled contractor with a stale credential is all it takes.

A Sydney mid-market SaaS team running this pattern across six connectors cut their internal 'look up customer X in tool Y' cycle from around 11 minutes to under 90 seconds. That improvement compounds: the same support team now handles the same query volume with three fewer escalations per day. At $100/hr fully loaded, three fewer escalations per day is over $60,000 a year on one process. Model what that improvement looks like across your own volume in the ROI Calculator.
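The $60,000 figure depends on how much staff time each escalation consumes, which the numbers above do not state. A quick back-calculation shows what it implies, assuming roughly 250 working days a year (an assumption, not a figure from the team).

```python
HOURLY_RATE = 100.0        # from the text: $100/hr fully loaded
ESCALATIONS_AVOIDED = 3    # from the text: fewer escalations per day
ANNUAL_SAVING = 60_000.0   # from the text: "over $60,000 a year"
WORKING_DAYS = 250         # assumption: not stated in the text

hours_saved_per_year = ANNUAL_SAVING / HOURLY_RATE
escalations_avoided_per_year = ESCALATIONS_AVOIDED * WORKING_DAYS
implied_minutes_per_escalation = (
    hours_saved_per_year / escalations_avoided_per_year * 60
)

print(f"{hours_saved_per_year:.0f} hours saved per year")
print(f"about {implied_minutes_per_escalation:.0f} minutes per escalation")
```

Under that assumption each avoided escalation has to account for roughly 48 minutes of staff time. Swap in your own rate, volume, and handling time before quoting a figure to finance.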

When not to build the connector

This pattern is not the right answer in every case. There are four situations where you should skip the custom MCP connector entirely:

The SaaS vendor already ships an MCP. Salesforce, ServiceNow, and several others have published native implementations or reference connectors. Check before building.

The process runs fewer than twenty times a day. Below that threshold, the five-day build often takes two years to pay back. Faster wins exist elsewhere.

The SaaS instance is heavily customised by IT. If field definitions, workflows, and object relationships change monthly, the schema module helps but the tool layer will still need constant attention. The pattern assumes a baseline of stability in the underlying SaaS configuration.

Write operations cannot be safely isolated. Some SaaS systems make it genuinely difficult to expose read-only surfaces without also exposing writes. If isolation requires building a proxy API on top of the SaaS, that is a different project scope and a different cost conversation.

The honest version of the pitch: the reference pattern saves around $45,000 per build when the build is appropriate. When it is not, it just delays a more expensive realisation.

Measuring whether the pattern holds

The pattern works on day five. Whether it holds on day ninety depends on whether you measure it.

Three numbers belong on a shared dashboard from week one: time-to-first-result on the agent flow, cost-per-task in tokens, and acceptance rate (how often the engineer accepts the agent's suggested action without major edits). Without those three, the conversation with finance is a faith argument rather than a numbers argument.
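The three numbers can be computed from a simple per-task log. The sketch below assumes a hypothetical `TaskRecord` shape and uses the median for time-to-first-result. Your own logging and choice of statistic may differ.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TaskRecord:
    seconds_to_first_result: float
    tokens_used: int
    accepted: bool  # suggested action accepted without major edits


def summarise(records: list[TaskRecord]) -> dict[str, float]:
    """Reduce a batch of task records to the three dashboard numbers."""
    if not records:
        raise ValueError("no task records to summarise")
    count = len(records)
    return {
        "median_seconds_to_first_result": median(
            r.seconds_to_first_result for r in records
        ),
        "tokens_per_task": sum(r.tokens_used for r in records) / count,
        "acceptance_rate": sum(r.accepted for r in records) / count,
    }


week_one = [
    TaskRecord(10.0, 1000, True),
    TaskRecord(20.0, 3000, False),
    TaskRecord(30.0, 2000, True),
]
dashboard = summarise(week_one)
```

Multiplying `tokens_per_task` by your model's price per token gives the dollar figure finance will ask for.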

A Sydney engineering platform team running connectors across six SaaS tools reports that the dashboard itself drove around $120,000 a year in additional savings. Not from the connectors performing better, but from the dashboard making outliers visible: two flows were consuming roughly 40 percent of the token budget while delivering around 10 percent of the output value. Retiring them was a one-day decision once the data existed. Without measurement, those flows would have kept running indefinitely.
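Spotting flows like those two is a comparison of shares: each flow's share of tokens against its share of output value. The sketch below is one way to flag candidates. The flow names, the value measure, and the 2x threshold are all illustrative assumptions.

```python
def flag_outliers(
    flows: dict[str, tuple[float, float]], ratio: float = 2.0
) -> list[str]:
    """Flag flows whose token share is at least `ratio` times their value share.

    `flows` maps a flow name to (tokens_used, output_value). How output value
    is measured is up to the team, for example accepted tasks per period.
    """
    total_tokens = sum(tokens for tokens, _ in flows.values())
    total_value = sum(value for _, value in flows.values())
    if total_tokens <= 0 or total_value <= 0:
        raise ValueError("totals must be positive to compute shares")
    flagged = []
    for name, (tokens, value) in flows.items():
        token_share = tokens / total_tokens
        value_share = value / total_value
        if token_share >= ratio * value_share:
            flagged.append(name)
    return flagged


# Hypothetical numbers: two flows use 40% of tokens for 10% of the value.
example_flows = {
    "bulk_contact_sync": (200.0, 5.0),
    "ticket_summary_digest": (200.0, 5.0),
    "customer_lookup": (600.0, 90.0),
}
candidates = flag_outliers(example_flows)
```

A flagged flow is a prompt for a conversation at the quarterly review, not an automatic retirement.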

The second discipline is a quarterly review of the connector surface. Tools that earned their keep stay; tools that did not get retired. Most teams skip this step and treat the agent surface like a project: launched, done, moved on. The ones that compound treat it like a product, reviewed quarterly, pruned regularly, always earning its budget.

Our AI Automation Services cover this build pattern from Discovery through to production for Australian mid-market teams. If you are not sure whether your SaaS configuration fits the four-module shape, the AI Readiness Assessment walks through that question in one session.

Pick one connector. Map it to the four-module shape. Measure the three numbers. The teams that compound are the ones that do not skip those steps.