Your team has been using Claude for three months. The code review prompt got copy-pasted into Slack in week two. By month two there were four versions of it scattered across Notion docs and a GitHub gist nobody had updated since February. Last week a junior engineer spent 45 minutes drafting a prompt from scratch for a task their lead had already solved in January.

That's the Skill conversion moment. Most teams miss it.

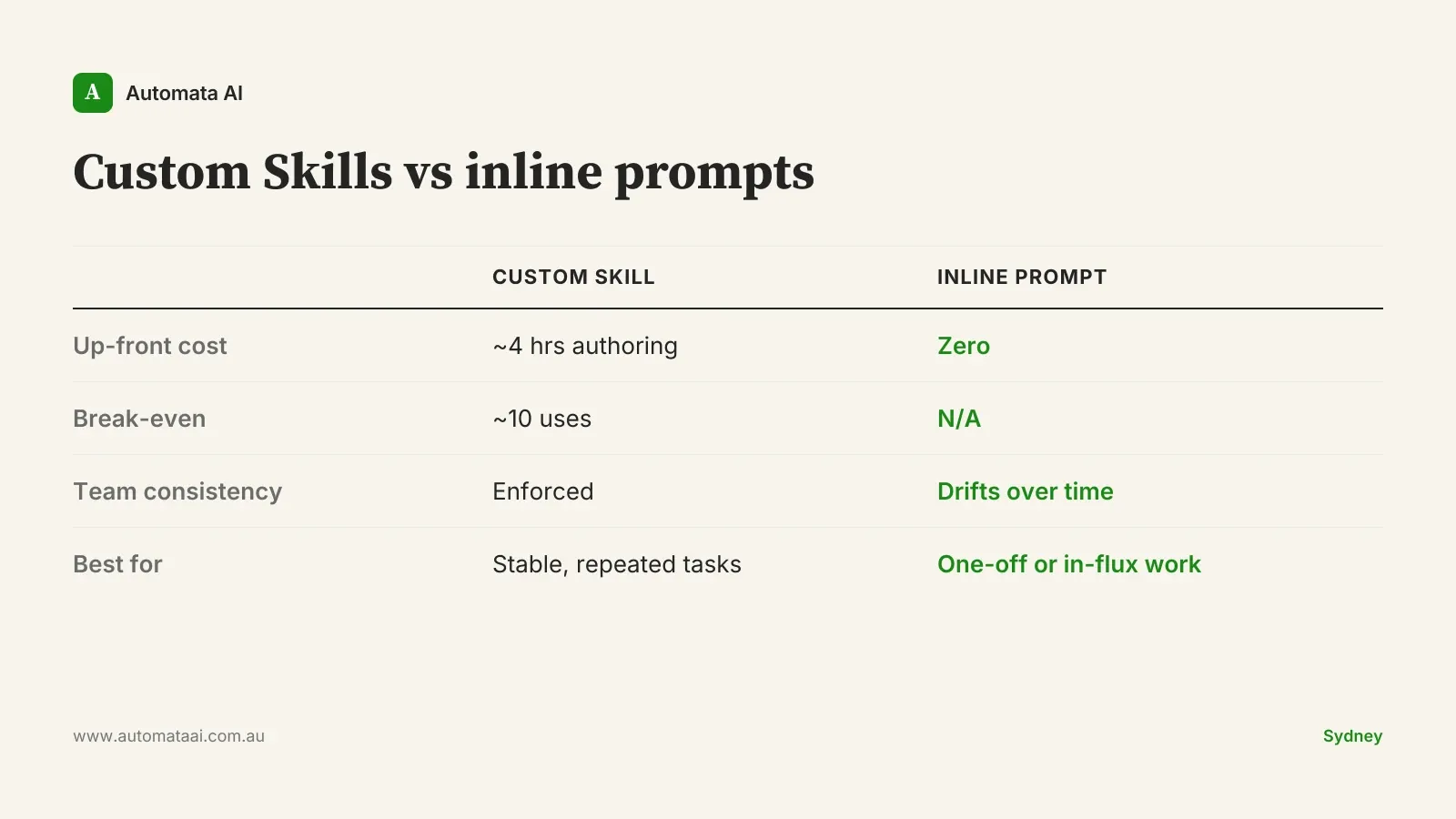

The choice between a custom Claude Skill and an inline prompt sounds architectural. It isn't. Three questions settle it: how often does this task run, how stable is the procedure, and how many people need identical behaviour.

When custom Skills earn their weight

The task runs more than five times a week. Below that frequency, the four-hour authoring cost takes months to recover. Above it, you'll have broken even within two weeks.

The procedure is stable enough that changing it is deliberate. If engineers are editing the prompt each time they use it, the process is still in discovery. Codify when the approach has settled, not before.

More than two engineers need exactly the same output. One person with a personal prompt library is fine. Three people maintaining separate versions means version drift by the end of the quarter.

Re-explaining the procedure costs more than documenting it. Summarising a paragraph doesn't need a Skill. Reviewing a pull request against your architectural conventions and flagging APRA CPS 230 compliance issues absolutely does.
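The four criteria above can be sketched as a simple checklist. This is a minimal illustration, not a definitive rule: the `Task` fields and function names are hypothetical, and requiring all four checks to pass is one reading of the list rather than something the criteria themselves mandate.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical description of a recurring prompt-driven task."""
    runs_per_week: int
    edited_on_each_use: bool                  # True while the process is still in discovery
    engineers_needing_same_output: int
    reexplaining_costs_more_than_documenting: bool


def skill_checks(task: Task) -> dict[str, bool]:
    """Evaluate each criterion separately so a failed check is visible."""
    return {
        "runs_often": task.runs_per_week > 5,
        "procedure_stable": not task.edited_on_each_use,
        "shared_output": task.engineers_needing_same_output > 2,
        "worth_documenting": task.reexplaining_costs_more_than_documenting,
    }


def is_skill_candidate(task: Task) -> bool:
    """Assumption: a task earns a Skill only when every check passes."""
    return all(skill_checks(task).values())


pr_review = Task(runs_per_week=12, edited_on_each_use=False,
                 engineers_needing_same_output=4,
                 reexplaining_costs_more_than_documenting=True)
print(is_skill_candidate(pr_review))
```

Returning the individual checks, rather than only a verdict, makes it easier to see which condition is holding a task back.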

When inline prompts win

Not everything belongs in a Skill library. A team that tries to design all its Skills upfront ships none. The instinct to codify is right. The timing is often wrong.

The task is genuinely one-off. If you're using it once and discarding it, the authoring overhead costs more than the task is worth.

The procedure is changing rapidly. Teams in discovery rewrite their prompts daily. Codifying that instability into a Skill means maintaining a Skill that's wrong.

Context is the whole point. Some tasks require specifics that change every time. Wrapping them in a Skill adds process without adding value.

The practical tell: if an engineer is editing the prompt each time they paste it into chat, it isn't a reusable procedure yet. It's still a draft. A Skill is a commitment. Don't make it until it's warranted.

The break-even math

A Skill authored carefully costs around four hours of senior engineering time. At $150 per hour fully loaded (a reasonable mid-market figure for a Sydney-based senior engineer), that's roughly $600 of up-front investment. An inline prompt costs nothing up front.

The break-even lands at around ten uses, which implies each run saves about $60, or roughly 24 minutes of engineer time at the same rate. Past fifty runs, the Skill pays for itself in consistency and overhead savings alone. You can run the payback numbers in our ROI Calculator for your specific task volume and team size.
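The arithmetic behind those figures can be sketched in a few lines. The four hours and $150 rate come from the text; the 24 minutes saved per use is not stated anywhere and is only the value implied by a $600 cost breaking even at ten uses, so treat it as an assumption to replace with your own measurement. The function names are hypothetical.

```python
import math


def authoring_cost(hours: float = 4.0, hourly_rate: float = 150.0) -> float:
    """Up-front cost of authoring one Skill."""
    return hours * hourly_rate


def break_even_uses(cost: float, minutes_saved_per_use: float,
                    hourly_rate: float) -> int:
    """Number of runs needed before cumulative savings cover the cost."""
    saving_per_use = minutes_saved_per_use * hourly_rate / 60
    return math.ceil(cost / saving_per_use)


def weeks_to_break_even(uses: int, runs_per_week: float) -> float:
    """How long the payback takes at a given weekly frequency."""
    return uses / runs_per_week


cost = authoring_cost()                                   # 600.0
uses = break_even_uses(cost, minutes_saved_per_use=24,    # assumed saving
                       hourly_rate=150.0)                 # 10
print(cost, uses)
print(weeks_to_break_even(uses, runs_per_week=5))         # 2.0 weeks
print(weeks_to_break_even(uses, runs_per_week=1))         # 10.0 weeks
```

At five runs a week the payback is two weeks; at one run a week it stretches past two months, which is why frequency is the first question to ask.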

For a Sydney engineering team running 30 recurring weekly agentic tasks, converting the top ten into well-authored Skills can recover around $130,000 a year. That figure accounts for rework, version drift, the time spent re-explaining the same procedure, and the cost of inconsistent outputs reaching production.

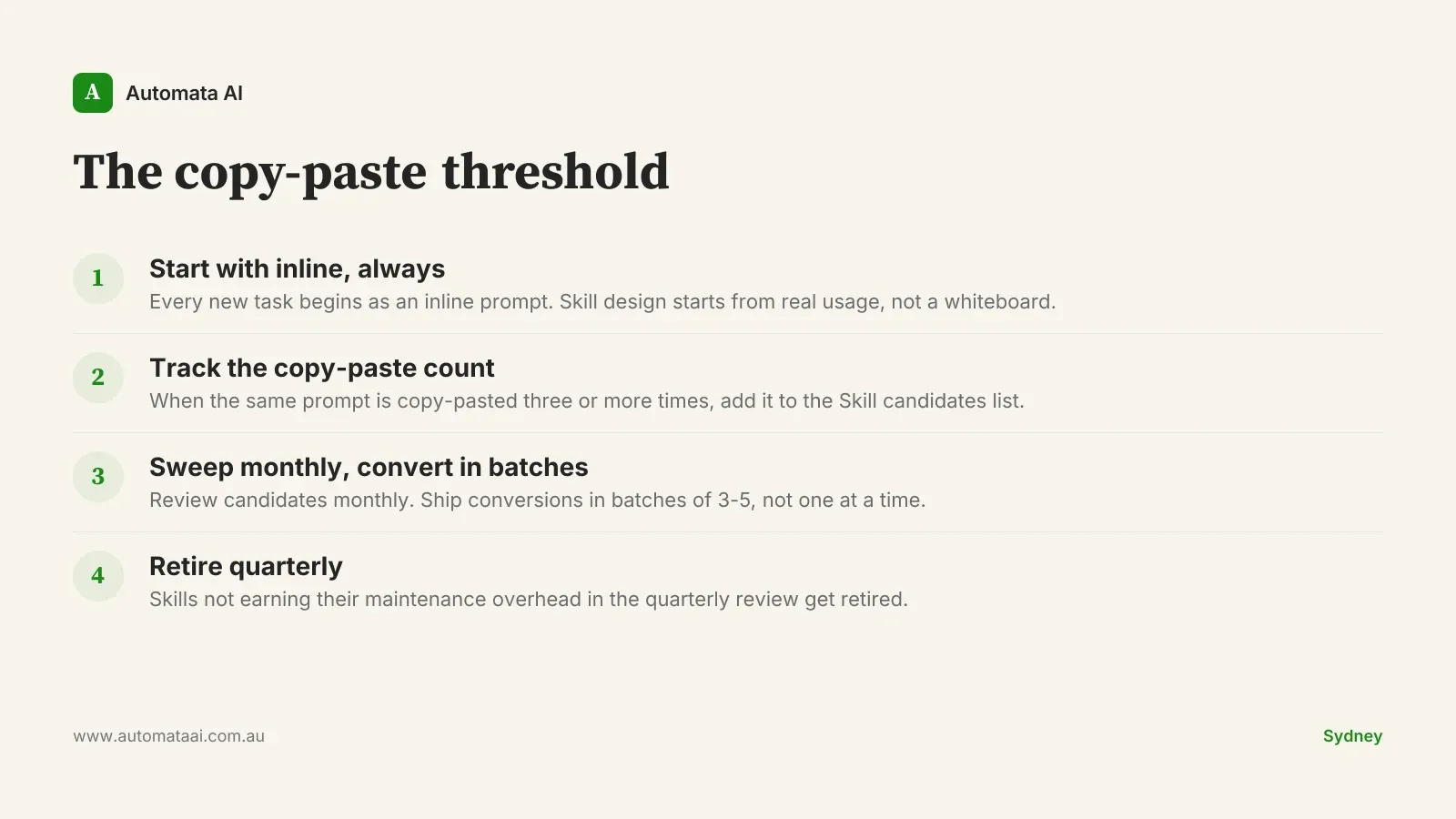

The copy-paste threshold

The migration pattern that works in practice: start every new task as an inline prompt. When the same prompt has been copy-pasted into chat three or more times, it belongs on a Skill candidates list in the team wiki. Sweep that list monthly. Convert in batches of three to five.

That's the copy-paste threshold. Not a feeling, not an architectural discussion, but a count. Three copy-pastes and the prompt is a Skill candidate. It might not be ready to author yet, but it's on the radar.

Start inline, always. Every new task begins as an inline prompt. The Skill design process starts from real usage, not a whiteboard.

Track candidates, not completions. Maintain a 'Skill candidates' list in the team wiki. The list is the discipline; the conversions follow.

Convert in batches, retire on a schedule. Ship Skills in batches of three to five. Retire any Skill not earning its maintenance overhead in the quarterly review.
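The candidates list can be as simple as a tally. The sketch below is one hypothetical way to keep it, assuming someone records each copy-paste by prompt name; the class, method names, and the choice to pick the most-pasted prompts first are illustrative, not part of the pattern itself.

```python
from collections import Counter

COPY_PASTE_THRESHOLD = 3   # three copy-pastes puts a prompt on the list
MAX_BATCH = 5              # upper end of the three-to-five batch size


class CandidateList:
    """Tally of how often each inline prompt has been pasted into chat."""

    def __init__(self) -> None:
        self.paste_counts: Counter[str] = Counter()

    def record_paste(self, prompt_name: str) -> None:
        self.paste_counts[prompt_name] += 1

    def candidates(self) -> list[str]:
        """Prompts at or over the threshold, most-pasted first."""
        return [name for name, count in self.paste_counts.most_common()
                if count >= COPY_PASTE_THRESHOLD]

    def next_batch(self) -> list[str]:
        """Assumption: the monthly sweep converts the most-pasted prompts first."""
        return self.candidates()[:MAX_BATCH]


wiki_list = CandidateList()
for name in ["pr-review", "pr-review", "changelog", "pr-review"]:
    wiki_list.record_paste(name)
print(wiki_list.candidates())   # only "pr-review" has reached three pastes
```

A spreadsheet or wiki table does the same job; what matters is that the count is recorded somewhere other than memory.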

The AI Readiness Assessment covers this migration as part of a broader review of which agentic workflows are ready to scale and which are still in discovery.

Measurement makes the argument

The pattern only holds if you track it. Add three numbers to a shared dashboard from week one: time-to-first-result on each agentic flow, cost-per-task in tokens, and acceptance rate (how often an engineer accepts the output without material edits).

Without those three, the conversation with finance is an opinion. With them, it's a data argument. One Melbourne engineering platform team running this discipline found the dashboard alone surfaced $120,000 of additional annual savings. Two flows were burning 40 per cent of the token budget while producing 10 per cent of the value. Retiring them took one day once the data existed.
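As a rough sketch of how those three numbers might be computed from a log of runs: the `Run` record and its field names are hypothetical, and the sample data is invented purely to exercise the code. The token share per flow is included because that is the figure that exposes a flow burning a disproportionate slice of the budget.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Run:
    """One execution of an agentic flow (hypothetical log record)."""
    flow: str
    seconds_to_first_result: float
    tokens: int
    accepted: bool   # accepted without material edits


def summarise(runs: list[Run]) -> dict[str, dict[str, float]]:
    """Per-flow averages plus each flow's share of total token spend."""
    by_flow: dict[str, list[Run]] = defaultdict(list)
    for run in runs:
        by_flow[run.flow].append(run)

    total_tokens = sum(run.tokens for run in runs)
    summary = {}
    for flow, flow_runs in by_flow.items():
        flow_tokens = sum(r.tokens for r in flow_runs)
        n = len(flow_runs)
        summary[flow] = {
            "mean_seconds_to_first_result":
                sum(r.seconds_to_first_result for r in flow_runs) / n,
            "mean_tokens_per_task": flow_tokens / n,
            "acceptance_rate": sum(r.accepted for r in flow_runs) / n,
            "token_share": flow_tokens / total_tokens if total_tokens else 0.0,
        }
    return summary


# Invented sample data, for illustration only.
sample = [
    Run("pr-review", 10.0, 1000, True),
    Run("pr-review", 20.0, 3000, False),
    Run("changelog", 5.0, 1000, True),
]
for flow, stats in summarise(sample).items():
    print(flow, stats)
```

Comparing `token_share` against `acceptance_rate` per flow is one way to spot candidates for retirement, though what counts as "value" will need a definition of your own.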

A Skills library doesn't need to be large to compound. Thirty well-authored Skills, reviewed quarterly, are institutional knowledge that belongs to your team, not to any individual engineer. If you want to see what a production-grade Skills build looks like in a real Australian mid-market deployment, our AI Automation Services covers the full scope.

Pick one process. Count how many times it ran last week. If it's above five and the procedure hasn't changed in a month, it's a Skill. The rest can wait until next month's sweep.