An MCP tool that throws a raw stack trace at Claude has stopped being a tool.

It's a wall. The agent hits it, reads the trace, and does one of two things: it retries the same failing call, or it invents a fallback that has nothing to do with what actually went wrong. Neither recovers the task. Both burn tokens. The invented fallback can also silently corrupt state.

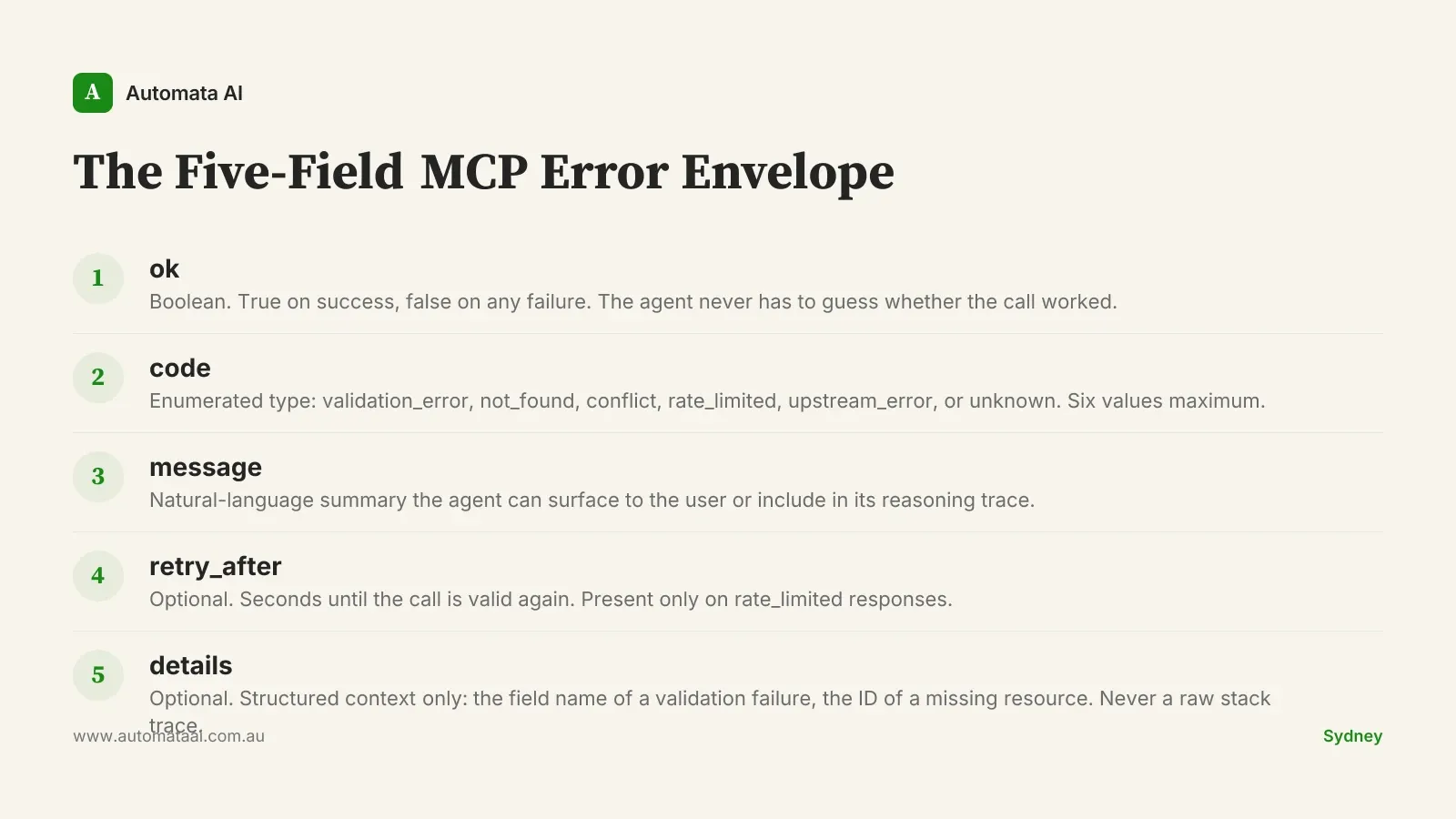

The five-field MCP error envelope

The fix is a consistent return shape. Call it what it is: the five-field MCP error envelope. Every tool in your server returns it, success or failure, and your agent always knows what it's reading.

ok. Boolean. True on success, false on any failure. The agent never infers success from an empty error field.

code. An enumerated set: validation_error, not_found, conflict, rate_limited, upstream_error, unknown. Six values. Not twenty.

message. A natural-language summary the agent can surface to the user or include in its reasoning trace.

retry_after. Optional. Number of seconds to wait before the call will be accepted again. Present only on rate_limited responses.

details. Optional. Structured context only: the field name of a validation failure, the ID of the missing resource. Never a raw stack trace.
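Written down as a type, the envelope is small. The sketch below is one way to pin it in Python, not a reference implementation. The names `ErrorCode` and `Envelope` are made up for illustration, and setting `code` to `None` on success is an assumption, since the field list above only defines the failure codes.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Optional


class ErrorCode(str, Enum):
    """The six codes from the field list. Nothing else is allowed."""
    VALIDATION_ERROR = "validation_error"
    NOT_FOUND = "not_found"
    CONFLICT = "conflict"
    RATE_LIMITED = "rate_limited"
    UPSTREAM_ERROR = "upstream_error"
    UNKNOWN = "unknown"


@dataclass(frozen=True)
class Envelope:
    ok: bool
    code: Optional[ErrorCode]  # assumption: None when ok is True
    message: str
    retry_after: Optional[float] = None        # seconds, rate_limited only
    details: Optional[dict[str, Any]] = None   # structured context, never a trace

    def __post_init__(self) -> None:
        # Reject shapes that would force the agent to guess.
        if self.ok and self.code is not None:
            raise ValueError("a successful envelope must not carry a code")
        if not self.ok and self.code is None:
            raise ValueError("a failed envelope must carry a code")
        if self.retry_after is not None and self.code is not ErrorCode.RATE_LIMITED:
            raise ValueError("retry_after is only valid on rate_limited")

    def to_dict(self) -> dict[str, Any]:
        """Serialise to plain JSON-friendly values, omitting unset optionals."""
        out: dict[str, Any] = {
            "ok": self.ok,
            "code": self.code.value if self.code is not None else None,
            "message": self.message,
        }
        if self.retry_after is not None:
            out["retry_after"] = self.retry_after
        if self.details is not None:
            out["details"] = self.details
        return out
```

The constructor checks do the real work here. A tool author can't ship a failure without a code or attach retry_after to a not_found, so the agent never sees an envelope it has to interpret.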

The envelope is also the difference between a demo server and a production server. If you're comparing vendors or evaluating an in-house build, our AI Automation Services cover the error envelope as a baseline requirement across every MCP integration we ship.

Why structured errors beat throwing

When Claude encounters a structured error, it picks from a defined set of recovery actions: retry with backoff on rate_limited, surface the message to the user on validation_error, route to a fallback tool on upstream_error, or stop cleanly on not_found. The code field is a decision tree the agent can actually follow.
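The mapping above is small enough to write out. In practice the model makes this choice itself from the code field; the sketch below shows the same decision as orchestration code, for cases where a harness handles recovery. The function names and action labels are hypothetical. The source names no recovery for conflict or unknown, so stopping on those is an assumption, as is the exponential backoff used when retry_after is absent.

```python
# Recovery actions named in the text, keyed by envelope code.
RECOVERY = {
    "rate_limited": "retry_with_backoff",
    "validation_error": "surface_to_user",
    "upstream_error": "route_to_fallback",
    "not_found": "stop",
}


def next_action(envelope: dict) -> str:
    """Pick a recovery action from the envelope's code field."""
    if envelope.get("ok") is True:
        return "continue"
    # Assumption: conflict, unknown, and anything unexpected stop cleanly.
    return RECOVERY.get(envelope.get("code"), "stop")


def backoff_seconds(envelope: dict, attempt: int,
                    base: float = 1.0, cap: float = 60.0) -> float:
    """Honour retry_after when the server sent it, else back off exponentially."""
    retry_after = envelope.get("retry_after")
    if retry_after is not None:
        return float(retry_after)
    return min(cap, base * (2 ** attempt))
```

Nothing in it parses the message. The message is for the user and the reasoning trace; the code alone drives the branch.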

A mid-market Australian SaaS team that retrofitted this envelope across eight MCP tools cut agent failure incidents from 22 per week to 4. The platform engineer who owned the conversion spent three days on it. Those incidents had been costing the team roughly $140,000 a year, mostly senior engineer hours at $120 to $180 per hour plus on-call disruption. Three days of work against $140,000 a year is not a hard number to justify.

If your MCP server currently returns a 200 with an error inside the JSON body, that is worse than throwing. Claude reads success and tries to use the error payload as if it were the result. Fix the status semantics first, before worrying about the envelope shape.
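For tool calls, the MCP specification gives the result an `isError` flag alongside its `content`, and that is where the status semantics live. A minimal sketch of keeping the two layers in agreement, assuming the server builds the result dictionary by hand rather than through an SDK helper; `to_tool_result` is a hypothetical name:

```python
import json


def to_tool_result(envelope: dict) -> dict:
    """Wrap an envelope in an MCP-style tool result.

    The protocol-level isError flag is derived from the envelope's ok field,
    so the two can never disagree.
    """
    return {
        "content": [{"type": "text", "text": json.dumps(envelope)}],
        "isError": not envelope["ok"],
    }
```

Deriving the flag from `ok` in one place removes the failure mode described above: a result that claims success while its body says otherwise.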

The auditable envelope

Structured error envelopes are not just a reliability pattern. They're a compliance asset. A regulated buyer reading an agent transcript can see exactly what failed, what code the server returned, and what recovery the agent took. The conversation with their CISO gets shorter when failure modes have names.

Teams selling into Australian financial services will recognise this pressure. Under APRA CPS 230, operational resilience includes demonstrating how third-party systems handle failures. An error envelope with named codes and a logged message is evidence. A stack trace in a transcript is not.
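What "logged" means in practice is one structured record per failure. The sketch below is illustrative only: the function name and record fields are assumptions, and CPS 230 does not prescribe a log format. It captures the three things a reviewer looks for: what failed, what code came back, and what recovery was taken.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(tool: str, envelope: dict, recovery: str,
                 now: Optional[datetime] = None) -> str:
    """Render one tool outcome as a single JSON log line."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = {
        "ts": timestamp,
        "tool": tool,                      # hypothetical tool name
        "ok": envelope["ok"],
        "code": envelope.get("code"),
        "message": envelope["message"],
        "recovery": recovery,              # action the agent or harness took
    }
    return json.dumps(record, sort_keys=True)
```

One line per failure, with a named code and a named recovery, can be searched and counted in a way a pasted stack trace cannot.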

A Sydney logistics builder shipping this envelope across their order management MCP tools cut on-call escalation time per incident from around 40 minutes to 12 minutes. The diagnostic story is simpler when errors have shape: the on-call engineer reads the code, reads the message, and knows where to look. If you want to assess where your MCP infrastructure sits against this baseline, the AI Readiness Assessment is a reasonable starting point.

When not to standardise this

Not every MCP tool justifies the retrofit work. Three situations where the pattern doesn't earn its keep:

The tool is a short-lived prototype. If the MCP server exists to test a model integration and will be replaced or discarded in four weeks, invest the three days elsewhere.

Error states are genuinely rare. A tool that calls a high-uptime internal service and handles only two possible inputs doesn't need six error codes. An ok field and a message are enough.

The agent isn't autonomous. If a human approves every tool call before execution, a structured envelope adds overhead with little recovery value. Human-in-the-loop flows can tolerate messier errors because the human reads them.

The pattern pays off when an agent is operating with real autonomy, calling tools in sequence without human approval, and running at a volume where incident costs compound. Below that threshold, it's a best practice without a business case.

Measuring whether it's working

The envelope only holds its value if you instrument it. Three numbers worth tracking from week one: time-to-first-result on the affected agent flow, cost-per-task in tokens, and incident count per week. If you're modelling the payback on the conversion effort, the ROI Calculator runs AUD figures against your actual hourly rates.

Time-to-first-result. End-to-end latency from task start to completion. If it falls after you standardise the envelope, the agent is taking the short recovery path instead of looping on a failure.

Cost-per-task in tokens. Blind retries on unstructured errors burn tokens fast. A structured envelope cuts unnecessary retry loops, and the saving shows up here within days.

Incident count per week. The number that matters to the platform engineer and to finance. Track it before and after the conversion.
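All three numbers fall out of one record per task run. A rough sketch, assuming you already capture start and finish times, token usage, and whether the run raised an incident; the `TaskRun` shape is hypothetical, and using the median for latency is a choice, not something the pattern requires:

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TaskRun:
    started_at: float    # epoch seconds
    finished_at: float   # epoch seconds
    tokens: int          # total tokens spent on the task
    incident: bool       # True if the run needed human intervention


def weekly_metrics(runs: list[TaskRun]) -> dict:
    """Summarise one week of runs into the three tracked numbers."""
    if not runs:
        raise ValueError("no runs to summarise")
    latencies = [r.finished_at - r.started_at for r in runs]
    return {
        "median_time_to_result_s": median(latencies),
        "tokens_per_task": sum(r.tokens for r in runs) / len(runs),
        "incidents": sum(1 for r in runs if r.incident),
    }
```

Run it on the week before the conversion and the week after, per agent flow, and the comparison is three subtractions.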

A Sydney engineering platform team running this measurement discipline found that the dashboard itself drove around $120,000 a year of additional savings. Not from the envelope pattern directly, but from the visibility it created. Two agent flows were consuming 40 percent of the token budget while delivering 10 percent of the task volume. Killing them was a one-day decision once the data was in front of the right people.
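The check that surfaces flows like those two is a comparison of shares. A minimal sketch with a hypothetical function name and an arbitrary threshold: flag any flow whose share of tokens is more than twice its share of tasks.

```python
def disproportionate_flows(flows: dict[str, tuple[int, int]],
                           ratio: float = 2.0) -> list[str]:
    """Return flows whose token share exceeds `ratio` times their task share.

    `flows` maps a flow name to (tokens_used, tasks_completed).
    """
    total_tokens = sum(tokens for tokens, _ in flows.values())
    total_tasks = sum(tasks for _, tasks in flows.values())
    if total_tokens == 0 or total_tasks == 0:
        return []
    flagged = []
    for name, (tokens, tasks) in flows.items():
        token_share = tokens / total_tokens
        task_share = tasks / total_tasks
        if token_share > ratio * task_share:
            flagged.append(name)
    return sorted(flagged)
```

With made-up figures where two flows each use 20 percent of the tokens for 5 percent of the tasks, both are flagged. The threshold is a starting point for a conversation, not a kill rule.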

Error shape is diagnostic shape. The teams that get this right aren't the ones with the most sophisticated agents. They're the ones who can read a failure and fix it in 12 minutes instead of 40. That discipline compounds.