A Melbourne mid-market SaaS team ran an agent build for eleven weeks before the project was cancelled. Budget exhausted. Observability nonexistent. Retry logic hand-rolled, brittle, and constantly failing in production edge cases.

Six months later, the same team shipped a customer-facing agent in eighteen days. The team didn't change. The underlying systems didn't change. They used the Claude Agent SDK the second time.

What the SDK hands you on day one

Tool-calling, retries, streaming, memory, and the permissions model are built in. Skills, hooks, and MCP servers compose the same way they do in Claude Code, which means an in-house Skill library built for one agent is reusable on the next. The SDK manages the loop. You write the tools.

That last distinction is worth sitting with. The harness (retry policy, error handling, state management for long-running flows) represents roughly a third of the engineering effort in a hand-rolled build. Most of that third is the boring kind: the code that nobody notices when it works, and everybody notices when it breaks. It's also the hardest code to get right when you're simultaneously building the tools, wiring the systems, and writing the agent logic. The SDK gives you that third back on day one.
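Here is one concrete piece of that boring third: retrying transient tool failures with capped exponential backoff and full jitter. This is a minimal sketch of code a hand-rolled harness has to own. It is plain Python and makes no claim about how the Claude Agent SDK implements its own retry policy. `TransientToolError` and `call_with_retry` are hypothetical names, and the delay values are arbitrary defaults.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientToolError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def call_with_retry(
    tool: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `tool`, retrying transient failures with capped exponential backoff.

    Any exception other than TransientToolError propagates immediately.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return tool()
        except TransientToolError:
            if attempt >= max_attempts:
                raise  # out of attempts: surface the last error to the caller
            # The backoff ceiling doubles each attempt (0.5s, 1s, 2s, ...) up to max_delay.
            # Full jitter sleeps a random time under that ceiling, so many agents
            # retrying at once don't hit the upstream system in lockstep.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))
            attempt += 1


# Usage: a hypothetical flaky tool that fails twice, then succeeds.
calls = {"n": 0}


def flaky_lookup() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientToolError("upstream timeout")
    return "ok"


result = call_with_retry(flaky_lookup, sleep=lambda _: None)  # no real sleeping in the demo
```

Even this small piece hides three decisions: which errors count as transient, how long to wait, and when to give up. A hand-rolled build has to get all three right for every tool.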

Tool-calling and retries. Write the tool, not the infrastructure around it. The SDK handles error recovery, backoff, and retry policy for every tool call.

Memory primitives. Long-running agents (customer support queues, compliance review pipelines, multi-session onboarding) don't require bespoke state management. Memory is first-class.

Permissions model. A pilot ships with the same security posture as Claude Code. In Australian financial services, where APRA CPS 230 operational resilience requirements apply to AI systems touching customer data, shipping with a known-good permissions layer saves weeks of compliance work.

MCP composition. Three to five MCP servers wrapping your underlying systems is the standard pattern. Each server is its own codebase: testable, deployable, and replaceable without touching the agent loop.
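The replaceability claim rests on one property: the agent loop depends on an interface, not on any one system. The plain-Python sketch below shows that shape. It does not speak the MCP protocol or use any MCP or Claude Agent SDK library. `ToolServer`, `ToolRouter`, and the two stub servers are hypothetical names for illustration only.

```python
from typing import Any, Protocol


class ToolServer(Protocol):
    """Hypothetical interface: all the agent loop knows about a server."""

    def tools(self) -> set[str]: ...
    def call(self, tool: str, args: dict[str, Any]) -> Any: ...


class CrmServer:
    def tools(self) -> set[str]:
        return {"lookup_customer"}

    def call(self, tool: str, args: dict[str, Any]) -> Any:
        # A real server would call the CRM's API; this stub returns fixed data.
        return {"id": args["customer_id"], "tier": "gold"}


class BillingServer:
    def tools(self) -> set[str]:
        return {"get_balance"}

    def call(self, tool: str, args: dict[str, Any]) -> Any:
        return {"customer_id": args["customer_id"], "balance_aud": 0.0}


class ToolRouter:
    """Routes each tool call to whichever server registered that tool name."""

    def __init__(self, servers: list[ToolServer]) -> None:
        self._by_tool: dict[str, ToolServer] = {}
        for server in servers:
            for tool in server.tools():
                if tool in self._by_tool:
                    raise ValueError(f"duplicate tool name: {tool}")
                self._by_tool[tool] = server

    def call(self, tool: str, args: dict[str, Any]) -> Any:
        if tool not in self._by_tool:
            raise KeyError(f"no server provides {tool!r}")
        return self._by_tool[tool].call(tool, args)


router = ToolRouter([CrmServer(), BillingServer()])
customer = router.call("lookup_customer", {"customer_id": "c-42"})
```

Suppose you swap `BillingServer` for a different implementation that exposes the same tool names. Nothing changes in `ToolRouter` or in the loop that calls it. Each stub can also be unit-tested on its own.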

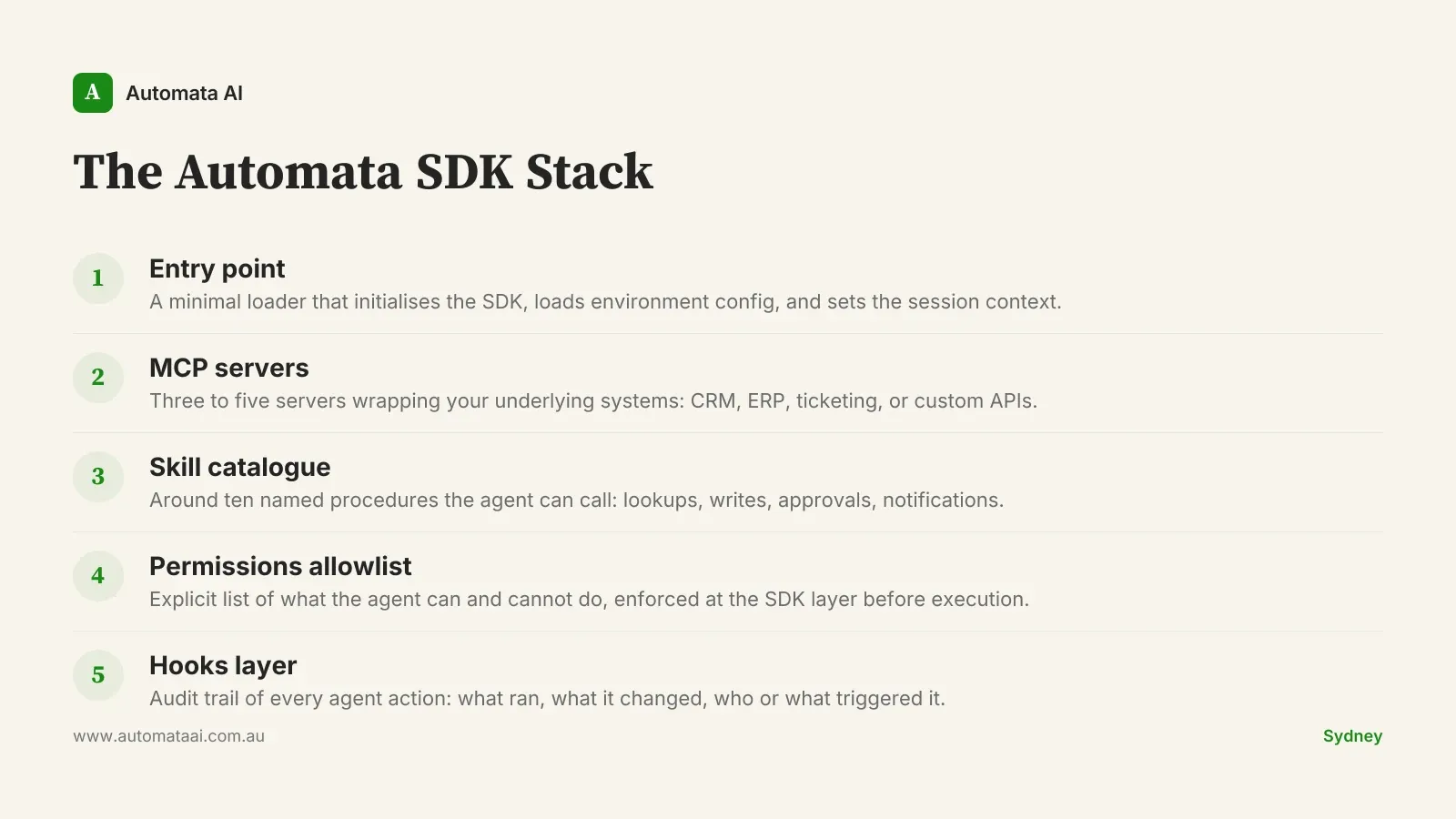

The Automata SDK Stack: a reference build shape

A production agent built on the SDK has five components. We call it the Automata SDK Stack. It's the pattern behind every build in our AI Automation Services portfolio, from document processing flows for professional services firms to customer support agents for SaaS businesses.

The entry point is minimal: it loads the SDK, sets the session context, and hands off to the agent. Three to five MCP servers wrap the underlying systems. A Skill catalogue of around ten named procedures gives the agent a defined vocabulary of what it can do. A permissions allowlist makes explicit what it cannot. A hooks layer records every action for audit.
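One rough way to picture how the catalogue, allowlist, and hooks fit together is a single gate. Every action passes through it. The gate checks the allowlist and writes an audit record whether or not the action runs. This is a plain-Python sketch, not the Claude Agent SDK's permissions or hooks API. `AgentShell`, the skill names, and the JSON log format are all hypothetical.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable

# Hypothetical: a skill takes a dict of arguments and returns a result.
Handler = Callable[[dict[str, Any]], Any]


class PermissionDenied(Exception):
    pass


@dataclass
class AgentShell:
    """Hypothetical stand-in for the stack: Skill catalogue, allowlist, audit hook."""

    skills: dict[str, Handler]  # the Skill catalogue: named procedures
    allowlist: set[str]  # the skills this deployment may actually run
    audit_log: list[str] = field(default_factory=list)

    def _record(self, skill: str, args: dict[str, Any], outcome: str) -> None:
        # Hooks layer: one JSON line per attempted action, allowed or not.
        self.audit_log.append(json.dumps({
            "ts": datetime.now(timezone.utc).isoformat(),
            "skill": skill,
            "args": args,
            "outcome": outcome,
        }))

    def run(self, skill: str, args: dict[str, Any]) -> Any:
        if skill not in self.allowlist:
            self._record(skill, args, "denied")
            raise PermissionDenied(skill)
        if skill not in self.skills:
            self._record(skill, args, "unknown_skill")
            raise KeyError(skill)
        try:
            result = self.skills[skill](args)
        except Exception:
            self._record(skill, args, "error")
            raise
        self._record(skill, args, "ok")
        return result


shell = AgentShell(
    skills={
        "summarise_ticket": lambda a: f"summary of {a['ticket_id']}",
        "issue_refund": lambda a: f"refunded {a['amount']}",
    },
    allowlist={"summarise_ticket"},  # refunds exist in the catalogue but are not allowed here
)
summary = shell.run("summarise_ticket", {"ticket_id": "T-1"})  # allowed; logged as "ok"
```

Calling `shell.run("issue_refund", ...)` raises `PermissionDenied` and still leaves a `"denied"` line in the log. That record is exactly what you want during an incident review.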

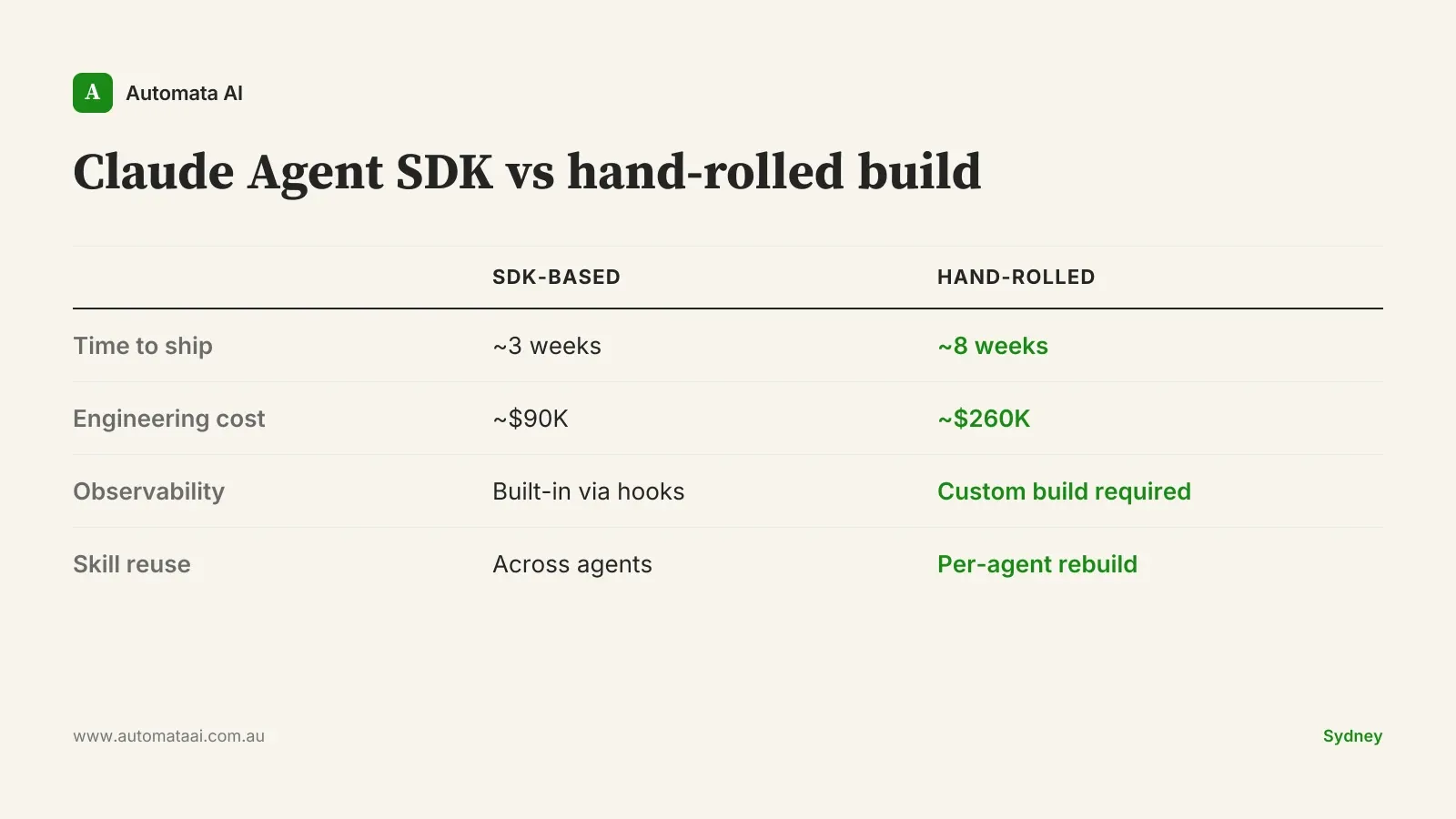

That whole shape takes around three weeks for an experienced team. The equivalent build without the SDK takes eight weeks. That estimate assumes the team figures out the retry policy on the first attempt, which rarely happens. The bigger gap is observability: a hand-rolled build ships without the hooks layer, which means your first production incident involves a lengthy conversation about what the agent actually did and when.

The cost math

At Australian senior engineer rates of $120–$180 per hour fully loaded, the time difference translates directly to money. An SDK-based build runs roughly $90,000. A hand-rolled equivalent runs closer to $260,000, before factoring in the ongoing maintenance cost of custom retry logic and the engineering time spent rebuilding what the SDK already provides.
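These figures line up with a simple calculation: weeks × hours × rate × headcount. The sketch below assumes a five-person team working 40-hour weeks. Neither number comes from the builds described. Five engineers is simply the headcount at which three weeks at the $150 midpoint rate lands on $90,000.

```python
HOURS_PER_WEEK = 40  # assumption: full-time weeks
ENGINEERS = 5  # assumption: headcount implied by the $90,000 figure at the midpoint rate


def build_cost(weeks: float, hourly_rate: float, engineers: int = ENGINEERS) -> float:
    """Fully loaded labour cost of a build, in AUD."""
    return weeks * HOURS_PER_WEEK * hourly_rate * engineers


for label, weeks in [("SDK", 3), ("hand-rolled", 8)]:
    low, mid, high = (build_cost(weeks, rate) for rate in (120, 150, 180))
    print(f"{label}: ${low:,.0f} to ${high:,.0f} (midpoint ${mid:,.0f})")
# SDK: $72,000 to $108,000 (midpoint $90,000)
# hand-rolled: $192,000 to $288,000 (midpoint $240,000)
```

Under the same assumptions, the $260,000 hand-rolled figure sits inside the eight-week range. Ongoing maintenance of custom retry logic comes on top.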

The Melbourne team's first attempt cost around $200,000 in sunk engineering before cancellation. Their second attempt, with the SDK, came in at $85,000. That $115,000 delta paid for two additional MCP server integrations and a six-month support retainer.

Model the payback for your own process in our ROI Calculator. It runs AUD figures against your team size and process volume and takes about three minutes.

When not to build on the SDK

The SDK isn't the right choice for every build. Three situations where it isn't:

Your systems have no API surface. MCP servers need something to connect to. If your core system has no API and no webhook capability, fix the integration gap first. The SDK can't compensate for missing connectivity.

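You need sub-50ms response latency. Agent loops have overhead: every turn waits on at least one model call, and a single model call typically takes longer than 50ms on its own. High-frequency trading desks, real-time pricing engines, and similar systems don't fit this architecture.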

Your compliance stack restricts third-party orchestration. Some Australian financial services organisations and healthcare providers operate under data residency or vendor approval requirements that affect what orchestration layer they can use. Verify with your legal and compliance team before committing to any orchestration framework.

None of those disqualifiers apply to most Australian mid-market builds. The typical SaaS operation, professional services firm, logistics business, or insurance team sits well inside the SDK's wheelhouse. If you're not sure, build a small proof-of-concept MCP server against your most accessible system first. If it connects cleanly, the rest of the stack usually follows.

Measurement from week one

The build is half the work. Three numbers belong on a shared dashboard from day one: time-to-first-result on the agent flow, cost-per-task in tokens, and acceptance rate (how often engineers accept the agent's output without major revision).
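Each of the three numbers is a small aggregation over per-task records. The sketch below assumes you log one record per completed task. The field names are hypothetical and cover latency, token counts, and a reviewer's accept flag. It reports tokens rather than dollars because per-token prices vary by model.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TaskRecord:
    """Hypothetical per-task log entry."""

    flow: str
    seconds_to_first_result: float
    input_tokens: int
    output_tokens: int
    accepted_without_major_revision: bool


def flow_metrics(records: list[TaskRecord]) -> dict[str, dict[str, float]]:
    """Per flow: median time-to-first-result, mean tokens per task, acceptance rate."""
    by_flow: dict[str, list[TaskRecord]] = {}
    for record in records:
        by_flow.setdefault(record.flow, []).append(record)
    metrics: dict[str, dict[str, float]] = {}
    for flow, rs in by_flow.items():
        metrics[flow] = {
            "median_seconds_to_first_result": median(r.seconds_to_first_result for r in rs),
            "tokens_per_task": sum(r.input_tokens + r.output_tokens for r in rs) / len(rs),
            # True counts as 1, so this is the fraction of tasks accepted.
            "acceptance_rate": sum(r.accepted_without_major_revision for r in rs) / len(rs),
        }
    return metrics
```

Latency uses the median so that a few slow outliers don't dominate the number. Token cost uses the mean because the budget is additive.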

A Sydney engineering platform team running this discipline found the dashboard itself drove around $120,000 a year in additional savings. Two flows were burning 40 percent of the token budget while delivering 10 percent of the value. Killing them was a one-day decision once the data existed. Without the dashboard, that conversation would have taken three months and an external review.
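Spotting flows like those two means comparing each flow's share of token spend against its share of value. This minimal sketch assumes the team has already agreed on a per-flow value figure, such as hours saved or tickets closed; the source doesn't specify one. The flow names, figures, and the 2× threshold are hypothetical. The example data reproduces the 40-percent-of-spend, 10-percent-of-value split described above.

```python
def flag_low_value_flows(
    token_spend: dict[str, float],
    value: dict[str, float],
    ratio_threshold: float = 2.0,
) -> list[str]:
    """Flows whose share of spend is at least ratio_threshold times their share of value."""
    total_spend = sum(token_spend.values())
    total_value = sum(value.values())
    if total_spend <= 0 or total_value <= 0:
        raise ValueError("need positive total spend and total value")
    flagged = []
    for flow, spend in token_spend.items():
        spend_share = spend / total_spend
        value_share = value.get(flow, 0.0) / total_value
        # A flow with no measured value is flagged outright.
        if value_share == 0 or spend_share / value_share >= ratio_threshold:
            flagged.append(flow)
    return sorted(flagged)


# Hypothetical quarter: two flows take 40% of spend but deliver 10% of value.
spend = {"weekly_digest": 20, "ticket_dedupe": 20, "support_triage": 30, "doc_extraction": 30}
value = {"weekly_digest": 5, "ticket_dedupe": 5, "support_triage": 45, "doc_extraction": 45}
candidates = flag_low_value_flows(spend, value)
```

The output is a shortlist for a human decision, not an automatic kill switch.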

The quarterly review is the discipline that separates a compounding investment from a depreciating one. Pull the token cost and acceptance rate for every flow each quarter. Patterns that have drifted get reviewed first. If the fix is a Skill update, make it. If the flow no longer earns its cost, retire it.
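The quarterly pull reduces to a small triage step. Compare each flow's cost per task and acceptance rate against the previous quarter, then put the drifted flows at the front of the review queue. The tolerances here (15 percent cost growth, a five-point acceptance drop) and the severity score are illustrative choices, not recommendations from the builds described. Whether to update a Skill or retire a flow stays a human decision.

```python
from dataclasses import dataclass


@dataclass
class QuarterStats:
    cost_per_task_tokens: float  # assumed positive
    acceptance_rate: float  # 0.0 to 1.0


def review_queue(
    previous: dict[str, QuarterStats],
    current: dict[str, QuarterStats],
    cost_drift_tolerance: float = 0.15,
    acceptance_drop_tolerance: float = 0.05,
) -> list[tuple[str, float]]:
    """Flows whose cost rose or acceptance fell beyond tolerance, worst drift first."""
    queue = []
    for flow, now in current.items():
        before = previous.get(flow)
        if before is None:
            continue  # new this quarter: no baseline to drift from
        cost_drift = now.cost_per_task_tokens / before.cost_per_task_tokens - 1
        acceptance_drop = before.acceptance_rate - now.acceptance_rate
        if cost_drift > cost_drift_tolerance or acceptance_drop > acceptance_drop_tolerance:
            # Crude severity score: how far each metric overshoots its tolerance, summed.
            score = max(0.0, cost_drift - cost_drift_tolerance) + max(
                0.0, acceptance_drop - acceptance_drop_tolerance
            )
            queue.append((flow, score))
    return sorted(queue, key=lambda item: item[1], reverse=True)
```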

Teams that treat Claude tooling like a product, running deliberate retirements and holding patterns accountable, compound on their initial investment. Teams that treat it like a project ship once and drift.

Our AI Readiness Assessment walks through both disciplines in detail: the build shape, and measurement, including the quarterly review process.

Pick your highest-volume internal process. Estimate the engineering time to wire it up. If the payback comes in under six months, the business case almost makes itself. Most of the time, the payback does come in under six months.
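The six-month test is a simple payback calculation. This minimal sketch assumes savings are measured as engineering hours freed per month. It also assumes token and hosting costs are netted off as a flat monthly figure. The example inputs are hypothetical, not from any build described here. The calculation ignores discounting, which is reasonable over a horizon this short.

```python
import math


def payback_months(
    build_cost_aud: float,
    hours_saved_per_month: float,
    loaded_hourly_rate_aud: float,
    monthly_running_cost_aud: float,
) -> float:
    """Months until cumulative net savings cover the one-off build cost (undiscounted)."""
    net_monthly = hours_saved_per_month * loaded_hourly_rate_aud - monthly_running_cost_aud
    if net_monthly <= 0:
        return math.inf  # running costs eat the savings: it never pays back
    return build_cost_aud / net_monthly


# Hypothetical: $90,000 build, 140 hours/month saved at $150, $3,000/month running cost.
months = payback_months(90_000, 140, 150, 3_000)  # 90,000 / 18,000 = 5.0
```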