Your Dependabot queue has 47 open pull requests. Six of your flaky tests have been failing intermittently since February. You know which ones. Your documentation went stale sometime in Q3 and nobody noticed until a new engineer filed a ticket that was not a bug. It was just the docs being wrong about an API parameter that changed in a minor release three months earlier.

None of that required a human decision to fix. It required a trigger.

Running Claude Code inside GitHub Actions is the shift from coding assistant to CI agent. The model does not wait for a developer to open a terminal and type a question. It fires on a schedule, on a pull-request event, on a Dependabot update. It reads the context, writes a response, and opens a pull request. A human reviews the output. The engineer's job changes from doing the triage to approving the triage. That is where the compounding starts.

Four workflow patterns that actually ship

These are the four patterns Australian engineering teams are running in production today, in rough order of maturity:

Scheduled test triage. A daily workflow runs Claude Code against the failing-tests dashboard, posts diagnoses as draft pull requests, and tags the relevant code owner from the CODEOWNERS file. The engineer starts the day with a proposed fix, not an alert.

PR review on watched paths. A pull-request workflow triggers Claude Code on files in a watched directory: migrations, API contracts, auth code. It leaves structured inline comments. Not a blocking gate. A second pair of eyes.

Dependabot migration agent. Instead of leaving a stale Dependabot branch open for three weeks, a workflow fires Claude Code on the updated dependency. The agent writes the migration code and pushes it to the branch. The engineer approves or modifies.

Documentation drift detection. A weekly workflow runs Claude Code over docs and code together. When the two diverge past a configured threshold, it opens a pull request with corrected text. No manual audit required.
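The watched-paths pattern comes down to a filter over the files a pull request changed. A minimal sketch of that filter follows. The directory names are hypothetical, and in a real workflow the pull-request trigger's own paths filter would usually do this job; the Python version just makes the logic visible.

```python
from fnmatch import fnmatchcase

# Hypothetical watched-path patterns. The directory names are assumptions,
# standing in for "migrations, API contracts, auth code".
WATCHED_PATTERNS = [
    "db/migrations/*",
    "api/contracts/*",
    "src/auth/*",
]


def watched_files(changed_files, patterns=WATCHED_PATTERNS):
    """Return the changed files that match at least one watched pattern.

    fnmatchcase does not treat "/" specially, so "src/auth/*" also
    matches files in nested directories under src/auth/.
    """
    return [
        path
        for path in changed_files
        if any(fnmatchcase(path, pattern) for pattern in patterns)
    ]


def should_review(changed_files, patterns=WATCHED_PATTERNS):
    """Trigger the agent review only when a watched file changed."""
    return bool(watched_files(changed_files, patterns))
```

Keeping the pattern list short is the point. The narrower the watch list, the fewer runs you pay for and the more attention each comment gets.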

The economics for an Australian mid-market engineering team

A typical mid-market Australian SaaS with 80 engineers spends roughly $180,000 a year in attention tax on flaky test triage and Dependabot management. That figure is not speculative. At $120/hr fully loaded, a senior engineer spending 20 minutes a day on this work costs $40 a day. Across a team of ten who regularly touch this category of work, that is $400 a day in direct time alone, before counting the context switch around each interruption, and the number compounds quickly. The work is not hard. It is relentless, and relentless is expensive.

At current Anthropic pricing, a Claude Code GitHub Actions run costs around $0.80 for a typical task. A setup that handles 70 percent of that triage volume, realistic once the patterns are tuned, pays for itself in under two months. Run the payback math against your own headcount in the ROI Calculator.

The setup cost is not trivial. Getting the workflows tuned, the prompts stable, and the CODEOWNERS routing correct takes real engineering attention. Budget two to four weeks of focused effort for the first two workflows. The mistake most teams make is treating it as a one-afternoon job, then wondering why the acceptance rate is low.
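The payback claim can be checked with a few lines of arithmetic. The sketch below uses the figures from this section ($180,000 a year, 70 percent handled, $0.80 per run, $120/hr) and adds two assumptions of its own: setup is one engineer at 40 hours a week for two to four weeks, and the workflows make about 500 runs a month. Swap in your own numbers.

```python
def payback_months(annual_toil_cost, automated_share, setup_hours,
                   hourly_rate, runs_per_month, cost_per_run):
    """Months until net monthly savings cover the one-off setup cost."""
    monthly_savings = annual_toil_cost * automated_share / 12
    monthly_run_cost = runs_per_month * cost_per_run
    net_monthly = monthly_savings - monthly_run_cost
    if net_monthly <= 0:
        return float("inf")  # the workflows never pay for themselves
    setup_cost = setup_hours * hourly_rate
    return setup_cost / net_monthly


# Figures from the article, plus two labeled assumptions.
ARTICLE = dict(
    annual_toil_cost=180_000,
    automated_share=0.70,
    hourly_rate=120,
    cost_per_run=0.80,
    runs_per_month=500,   # assumption, not from the article
)

# Assumption: one engineer, 40 hours a week, for two or four weeks.
two_week_setup = payback_months(setup_hours=80, **ARTICLE)
four_week_setup = payback_months(setup_hours=160, **ARTICLE)

print(f"payback: {two_week_setup:.2f} to {four_week_setup:.2f} months")
```

Under those assumptions both ends of the range land under two months. The result is most sensitive to the annual toil figure, so that is the input to challenge first.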

When this is the wrong setup

Not every team should run this. The pattern works when the toil is high-volume, repetitive, and structurally similar from instance to instance. When those conditions do not hold, you are paying $0.80 per run to automate chaos.

Low-volume processes. If your Dependabot queue generates two PRs a week, a human handles it faster than the overhead of maintaining a workflow.

Unstable codebases. When your architecture is in active flux, agent workflows tuned last month break this month. Wait until the core is stable before automating the periphery.

Processes requiring production access. The agent should produce a pull request a human approves, not push directly to a protected branch or read production credentials.

Small teams without review capacity. If no one has bandwidth to review and accept agent output, the backlog just moves from Dependabot PRs to agent-authored draft PRs. It piles up either way.

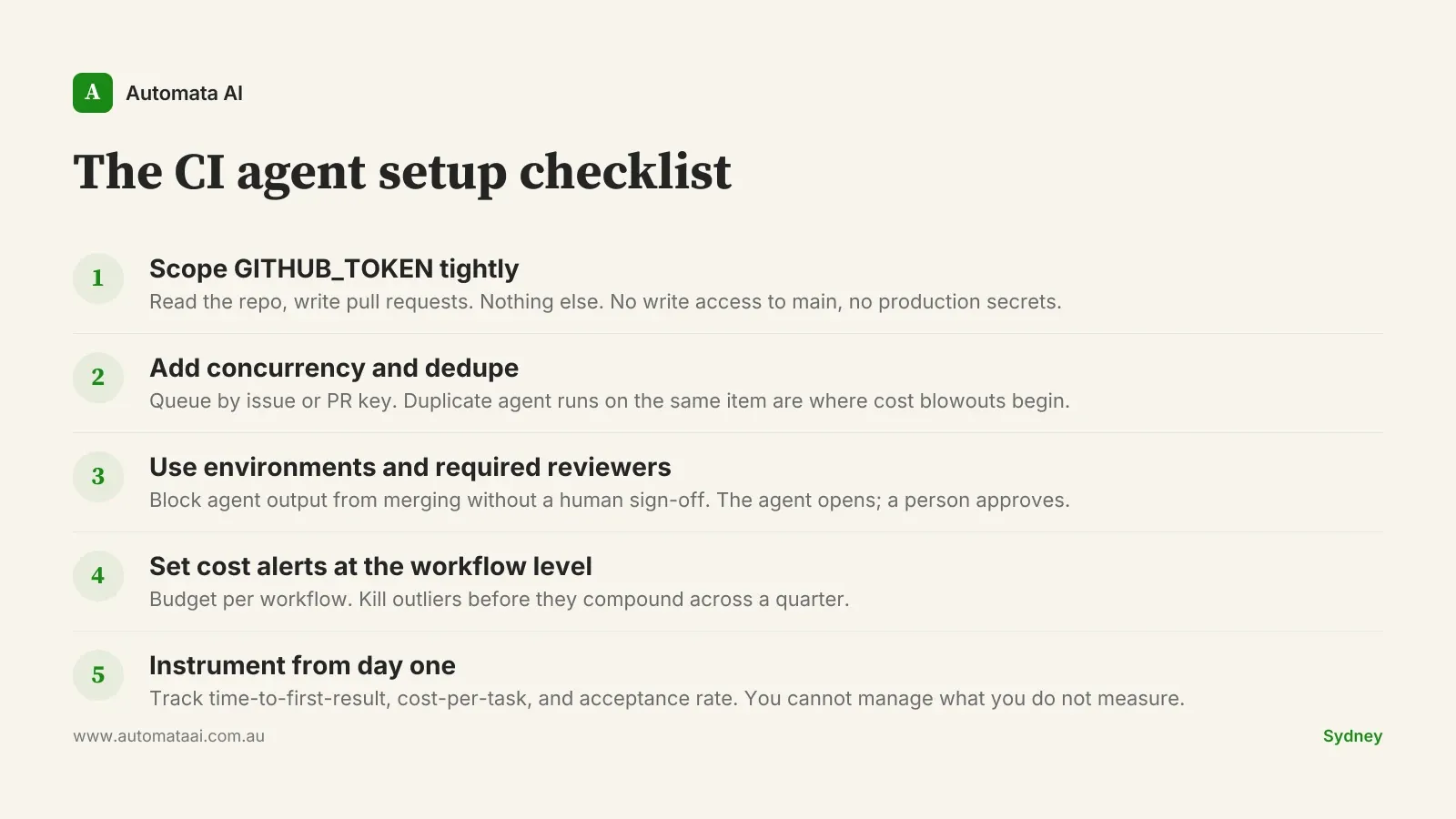

The permissions model you cannot skip

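Scope the GITHUB_TOKEN to the minimum the workflow needs. Read access to repository contents and write access to pull requests is enough for patterns that only review and comment. Workflows that push commits, such as the Dependabot migration agent, also need write access to contents. Token permissions are not scoped per branch, so it is branch protection on main that keeps those pushes away from it. Do not give the workflow access to production secrets. Use GitHub environments and required reviewers for any workflow that opens pull requests. The guard rail is not bureaucracy. It is what stops a mis-triggered workflow from pushing bad migration code to a release branch at 2am.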
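One way to keep that scope honest is a small lint that compares what a workflow requests against a ceiling. The sketch below assumes the workflow's permissions block has already been parsed into a dictionary; the function name and the default ceiling are illustrative, not part of any GitHub tooling.

```python
# Ordering of GITHUB_TOKEN access levels, lowest to highest.
LEVELS = {"none": 0, "read": 1, "write": 2}

# Illustrative ceiling: read the repo, write pull requests.
# Workflows that push commits would be checked against a wider ceiling.
DEFAULT_CEILING = {"contents": "read", "pull-requests": "write"}


def excess_permissions(requested, ceiling=DEFAULT_CEILING):
    """Return every requested scope whose level exceeds the ceiling.

    `requested` maps a permission scope to "none", "read", or "write".
    Scopes missing from the ceiling are treated as "none".
    """
    excess = {}
    for scope, level in requested.items():
        allowed = ceiling.get(scope, "none")
        if LEVELS[level] > LEVELS[allowed]:
            excess[scope] = level
    return excess


# Hypothetical workflow that asks for more than the ceiling allows.
requested = {"contents": "write", "pull-requests": "write", "issues": "read"}
print(excess_permissions(requested))
```

Run as a check on changes to the workflow files themselves, a lint like this turns permission creep into a review conversation instead of a discovery.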

Most teams trip on rate limits and concurrency rather than permissions. The fix is queueing, not scaling. Put each agent workflow in a concurrency group keyed by issue or pull-request number, so repeated triggers on the same item cannot run side by side, and the cost stays predictable. A Sydney engineering platform team running four of these workflows keeps monthly token spend under $400 by deduplicating aggressively, disabling weekend schedules when no one is around to review output, and setting a hard workflow timeout at 10 minutes. Skipping deduplication is how teams end up with twelve simultaneous agent runs on the same Dependabot PR and a $400 monthly budget that becomes $2,000 in a bad week.
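The deduplication and weekend rules are simple enough to sketch. The version below is an illustration of the logic, not how any particular team implements it. The event shape and the function names are assumptions, and in GitHub Actions the same effect is usually achieved with concurrency groups and a job timeout.

```python
from datetime import datetime

TIMEOUT_MINUTES = 10  # hard workflow timeout from the example above


def dedupe_key(workflow, item_number):
    """One key per workflow and issue or pull-request number."""
    return f"{workflow}:{item_number}"


def dedupe(events):
    """Keep only the most recent event for each key.

    `events` is assumed to be ordered oldest to newest, each a dict
    with hypothetical "workflow" and "item" fields.
    """
    latest = {}
    for event in events:
        latest[dedupe_key(event["workflow"], event["item"])] = event
    return list(latest.values())


def is_review_day(moment):
    """True Monday to Friday; weekday() is 0 for Monday, 6 for Sunday."""
    return moment.weekday() < 5


# Twelve triggers on the same Dependabot PR collapse into one run.
burst = [{"workflow": "dependabot-migrate", "item": 101, "seq": i} for i in range(12)]
print(len(dedupe(burst)))
```

Keeping the newest event rather than the oldest means the agent works from the latest state of the pull request.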

The three-metric CI agent dashboard

The pattern only holds if you measure it. From week one, track three numbers: time-to-first-result on each agent flow, cost-per-task in tokens, and acceptance rate (how often the engineer takes the agent's output without major edits). Without those three, the conversation with finance is a faith argument. With them, it is a numbers argument. Our AI Automation Services include instrumentation setup as part of the initial build.
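The three numbers fall out of a plain log of agent runs. A minimal sketch, assuming each run is recorded with the fields below; the record shape is hypothetical, and the median is one reasonable choice for summarising time-to-first-result, not the only one.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class AgentRun:
    workflow: str
    seconds_to_first_result: float
    tokens_used: int
    accepted: bool  # engineer took the output without major edits


def summarize(runs):
    """Compute the three dashboard metrics over a list of AgentRun."""
    if not runs:
        raise ValueError("no runs to summarize")
    return {
        "median_seconds_to_first_result": median(
            run.seconds_to_first_result for run in runs
        ),
        "tokens_per_task": sum(run.tokens_used for run in runs) / len(runs),
        "acceptance_rate": sum(run.accepted for run in runs) / len(runs),
    }


# Hypothetical sample data for illustration only.
runs = [
    AgentRun("test-triage", 120, 40_000, True),
    AgentRun("test-triage", 300, 60_000, False),
    AgentRun("pr-review", 180, 50_000, True),
    AgentRun("pr-review", 240, 50_000, True),
]
print(summarize(runs))
```

Filtering the run list by workflow before calling the function gives the per-flow view the dashboard needs.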

A Sydney engineering platform team running this discipline found the dashboard itself drove around $120,000 a year in additional savings. Two workflows were consuming 40 percent of the token budget while delivering 10 percent of the accepted pull requests. Killing them was a one-day decision once the data was visible.
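The comparison behind that decision is budget share against accepted-output share, per workflow. A sketch of one way to flag candidates follows. The workflow names, the numbers, and the 2x ratio are all hypothetical, chosen so that two workflows together take 40 percent of the tokens and deliver 10 percent of the accepted pull requests.

```python
def retirement_candidates(stats, max_ratio=2.0):
    """Flag workflows whose token share far exceeds their accepted-PR share.

    `stats` maps workflow name to (tokens_spent, accepted_prs).
    A workflow is flagged when its share of tokens is more than
    `max_ratio` times its share of accepted pull requests.
    """
    total_tokens = sum(tokens for tokens, _ in stats.values())
    total_accepted = sum(accepted for _, accepted in stats.values())
    if total_tokens == 0:
        return []
    candidates = []
    for name, (tokens, accepted) in stats.items():
        budget_share = tokens / total_tokens
        accepted_share = accepted / total_accepted if total_accepted else 0.0
        if budget_share > max_ratio * accepted_share:
            candidates.append(name)
    return candidates


# Hypothetical data: (tokens spent, accepted pull requests).
stats = {
    "workflow-a": (20, 5),
    "workflow-b": (20, 5),
    "workflow-c": (30, 45),
    "workflow-d": (30, 45),
}
print(retirement_candidates(stats))
```

A flag is a prompt for the quarterly review, not a verdict. A workflow can be expensive per accepted pull request and still be worth keeping if the pull requests it lands are the ones nobody else would write.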

Run a quarterly review of the agent surface. Patterns that earn their keep stay. Patterns that do not get retired. The teams that get compounding returns treat their Claude tooling like a product with a roadmap and a deprecation process. Not a project that shipped once and sits in the background accruing drift.

The setup is not magic. The model still makes mistakes, workflows still need maintenance, and someone still has to review the pull requests. But the category of work that used to interrupt a senior engineer at 9am is now handled overnight. That is a structural shift in how attention is spent. In Australian mid-market engineering teams at 80-person scale, attention is the scarcest thing on the resource plan. Start with the AI Readiness Assessment to find which of your current workflows are candidates, then instrument from day one.