The APRA auditor's question wasn't about the model. It was about the record: 'Your team used Claude Code on this codebase. Show me who ran it, what files it touched, and what tools it called.' If you can't reconstruct that in under 48 hours, you don't have an AI problem. You have a control gap.

Australian banks, insurers, healthcare networks, and government agencies are rolling out Claude Code at pace. The question that dominates every regulated-sector evaluation isn't capability. It's reviewability.

A CPS 234 finding that triggers remediation can delay a major program by six to twelve months. For a mid-sized Australian financial services firm running a $5M initiative, that delay typically represents $2M to $6M AUD in deferred project value, before any remediation cost is counted.

What APRA, AUSTRAC, and ASIC are actually asking

None of these regulators has banned AI-assisted development. APRA's Prudential Standard CPS 234 doesn't mention Claude Code. What it requires is that an institution can demonstrate that its information assets, including those modified by automated tools, were subject to appropriate controls. AUSTRAC's AML/CTF obligations add requirements when AI touches transaction processing or reporting code. The Privacy Act 1988 and the Australian Privacy Principles apply when the AI-assisted code handles personal information.

The audit question isn't 'did you use AI'. The audit question is 'can you show me a defensible record of how that AI was used and what it touched'. Those are different questions with very different answer requirements.

The layering of obligations varies by sector. Our Australian financial services AI guide covers the specific interaction between APRA, AUSTRAC, and AML/CTF requirements for teams building regulated financial systems.

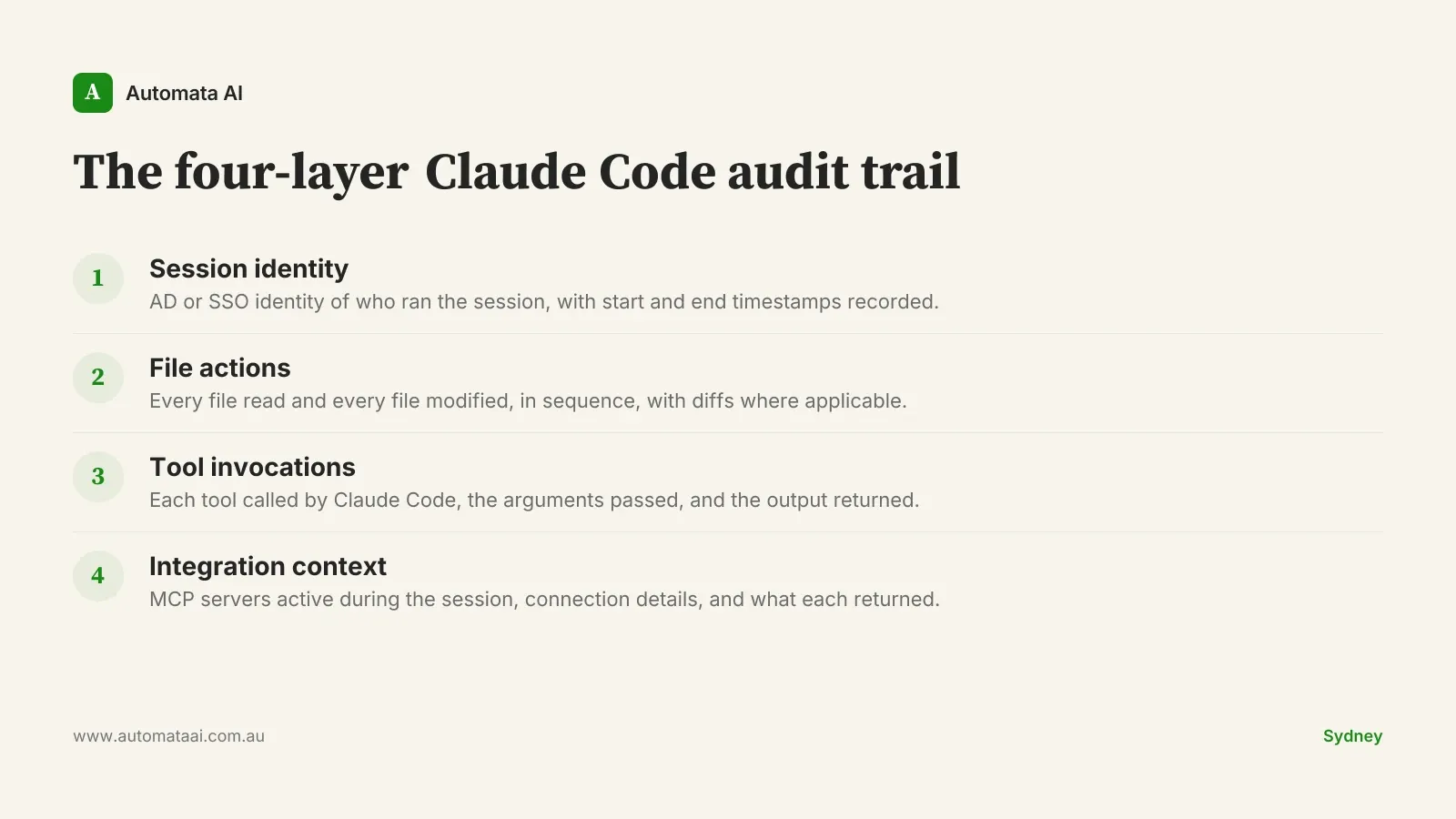

The four-layer Claude Code audit trail

The logging structure that satisfies these requirements captures four distinct categories of evidence from every session. We call it the four-layer Claude Code audit trail. Each layer answers a different regulator question.

Most teams logging Claude Code sessions capture the first two layers and miss the third and fourth: tool invocation logs and MCP server interaction records. Those two are what separate a defensible record from a useful but incomplete one. Without them, you can show which files changed, but you can't show what caused the change. That second question is the one that matters in a CPS 234 review.
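To make the difference between "what changed" and "what caused the change" concrete, here is a minimal sketch of a tool invocation record and a lookup that answers the second question for a given file. Every name in it (`ToolInvocation`, its fields, `causes_of_change`, the sample tool and server names) is an assumption made for illustration, not a schema that Claude Code or any regulator prescribes.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ToolInvocation:
    """One tool call captured during a session (hypothetical schema)."""
    session_id: str
    user: str
    timestamp: str                 # ISO 8601 in UTC, so string order is time order
    tool: str                      # name of the tool that was called
    mcp_server: str                # empty when the call did not go through an MCP server
    files_touched: Tuple[str, ...]  # paths this call wrote to


def causes_of_change(log: List[ToolInvocation], path: str) -> List[ToolInvocation]:
    """Return every invocation that wrote to `path`, oldest first."""
    hits = [inv for inv in log if path in inv.files_touched]
    return sorted(hits, key=lambda inv: inv.timestamp)


# Illustrative data only: three calls from one hypothetical session.
example_log = [
    ToolInvocation("sess-001", "dev.a", "2025-01-10T02:15:00Z", "Edit", "",
                   ("src/ledger.py",)),
    ToolInvocation("sess-001", "dev.a", "2025-01-10T02:14:00Z", "query_schema",
                   "internal-db", ()),
    ToolInvocation("sess-001", "dev.a", "2025-01-10T02:16:30Z", "Bash", "",
                   ("src/ledger.py", "tests/test_ledger.py")),
]

history = causes_of_change(example_log, "src/ledger.py")
```

With only a file-change record, a reviewer would see that `src/ledger.py` changed. With the invocation records, the same reviewer can see which calls wrote to it, in what order, and which MCP server was consulted earlier in the session.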

A logging architecture that survives APRA review

Logging to a developer's local machine is not a logging architecture. A developer who can delete, modify, or simply not run a log is a control gap. Not a minor oversight. A control gap.

Centralised log store. Outside the developer environment, write access restricted to the logging agent. Retention periods are typically seven years for Australian financial services.

Tamper-evident storage. Append-only storage or signed log lines with a timestamp authority. The point is to make post-hoc modification detectable.

Separate access controls. The developer who produced the session should not hold delete rights on that session's log.

Independent review cadence. Quarterly sampling by internal audit or risk function. Not the engineering team reviewing their own logs.
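One common way to make post-hoc modification detectable is a hash chain, where each log entry commits to the hash of the entry before it. The sketch below is a minimal in-memory version under stated assumptions: the entry layout and the function names (`append_entry`, `first_broken_index`) are hypothetical, and a production store would also need signing or an external timestamp authority, plus the access controls described above.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: Dict) -> str:
    """Hash the previous entry's hash together with a canonical form of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(chain: List[Dict], record: Dict) -> Dict:
    """Append a record, linking it to the hash of the current last entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"record": record, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, record)}
    chain.append(entry)
    return entry


def first_broken_index(chain: List[Dict]) -> int:
    """Return the index of the first entry that fails verification, or -1 if intact."""
    prev_hash = GENESIS
    for i, entry in enumerate(chain):
        if (entry["prev_hash"] != prev_hash
                or entry["hash"] != _digest(prev_hash, entry["record"])):
            return i
        prev_hash = entry["hash"]
    return -1


chain: List[Dict] = []
append_entry(chain, {"session_id": "sess-001", "tool": "Edit", "file": "src/ledger.py"})
append_entry(chain, {"session_id": "sess-001", "tool": "Bash", "file": "tests/test_ledger.py"})
intact = first_broken_index(chain)          # -1 while nothing has been altered

chain[0]["record"]["file"] = "src/other.py"  # simulate an after-the-fact edit
tampered_at = first_broken_index(chain)      # 0, the altered entry
```

A chain on its own does not stop someone from truncating the log or rebuilding it from scratch. Periodically publishing the latest hash to a system the developer cannot write to is one way to cover that case, which is where the timestamp authority comes in.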

This is heavier than what a startup building an internal tool needs. That's intentional. The architecture is scoped to regulated contexts: financial services, healthcare under the My Health Records Act, and government agencies under PSPF controls. Outside those contexts, the overhead isn't justified.

Closing the gap at code review

The PR review process is where the audit trail connects to the codebase. Most regulated Australian teams that have thought this through add one field to their PR template: the Claude Code session ID for any AI-assisted changes. That session ID links back to the centralised log. A reviewer can pull the full tool invocation history for that session and verify it against the diff.
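As a rough illustration of how that check could be automated, the sketch below pulls a session ID out of a PR description and compares the files in the diff with the files the session log says were written. The field label `Claude Code session ID:`, both function names, and the idea that the log can return a list of written paths per session are all assumptions. Fetching the PR body, the diff, and the log entries is left to whatever tooling a team already has.

```python
import re
from typing import Dict, Iterable, Optional, Set

# Hypothetical PR template field: "Claude Code session ID: <id>" on its own line.
SESSION_FIELD = re.compile(
    r"^Claude Code session ID:[ \t]*(\S+)[ \t]*$",
    re.IGNORECASE | re.MULTILINE,
)


def extract_session_id(pr_body: str) -> Optional[str]:
    """Return the session ID from the PR description, or None if the field is absent or empty."""
    match = SESSION_FIELD.search(pr_body)
    return match.group(1) if match else None


def compare_with_diff(diff_files: Iterable[str],
                      logged_files: Iterable[str]) -> Dict[str, Set[str]]:
    """Split paths into AI-assisted, diff-only, and log-only groups for the reviewer."""
    diff_set, logged_set = set(diff_files), set(logged_files)
    return {
        "ai_assisted": diff_set & logged_set,         # in the diff and written by the session
        "in_diff_not_logged": diff_set - logged_set,  # presumably edited by hand
        "logged_not_in_diff": logged_set - diff_set,  # written by the session, absent from the PR
    }


pr_body = "## Summary\nFix rounding in ledger totals\n\nClaude Code session ID: sess-001\n"
session_id = extract_session_id(pr_body)
report = compare_with_diff(
    diff_files=["src/ledger.py", "docs/CHANGELOG.md"],
    logged_files=["src/ledger.py", "tests/test_ledger.py"],
)
```

Neither mismatch group is a finding in itself. A file in the diff but not the log may simply be a manual edit, and a file in the log but not the diff may have been reverted. The point is that the reviewer sees the discrepancy and can ask about it.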

That one addition closes most of the gap between 'we use Claude Code' and 'we can defend our use of Claude Code under audit'. A compliance analyst who spends 20 hours manually reconstructing change history after a finding is spending 20 hours that better logging practice would have saved. If you're scoping your logging requirements before investing in infrastructure, the AI Readiness Assessment includes a regulated-industry checklist that covers each of the four layers.

When to skip the overhead

Not every Claude Code use case justifies a centralised audit architecture. An engineering team building internal tooling with no personal data, no financial transaction processing, and no regulatory reporting obligations doesn't need seven-year log retention or tamper-evident storage. The overhead is real and it has a cost.

The threshold is straightforward. If the code you're building or modifying touches production financial transactions, personal health information, regulatory reporting, or government-classified environments, apply the four-layer framework. If it doesn't, a standard PR review process with human sign-off is sufficient. Don't apply compliance infrastructure to engineering work that doesn't require it.
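The threshold can be written down as a small rule, which is useful if a team wants to tag repositories or gate a CI step on it. The scope labels and the function name below are hypothetical. They restate the four triggers from the paragraph above and add nothing beyond them.

```python
from typing import Iterable

# The four triggers named above, as hypothetical scope labels.
REGULATED_SCOPES = frozenset({
    "production_financial_transactions",
    "personal_health_information",
    "regulatory_reporting",
    "government_classified",
})


def required_controls(scopes: Iterable[str]) -> str:
    """Return which control set applies to code with the given scope labels."""
    if REGULATED_SCOPES & set(scopes):
        return "four_layer_audit_trail"
    return "standard_pr_review"


internal_tool = required_controls(["internal_tooling"])
payments_service = required_controls(["internal_tooling", "production_financial_transactions"])
```

A single regulated scope is enough to pull the whole change into the heavier control set. That mirrors the rule as stated: the question is what the code touches, not how much of it does.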

Where the stakes are highest

Production financial transaction code. Any system processing payments, settlement, or ledger entries under AUSTRAC or ASIC supervision.

Personal health information systems. Code touching patient records or My Health Record data under the My Health Records Act and the Privacy Act 1988.

Regulatory reporting pipelines. CPS 234 notifications, AUSTRAC suspicious matter reports, ASIC market data submissions: any code that shapes what the regulator sees.

Government agency systems. Environments subject to PSPF and ISM controls, where classification requirements extend to the development toolchain.

For these contexts, the design effort up front is insurance, not overhead. A regulated-industry AI deployment that can answer the auditor's question immediately keeps its program moving. One that can't stops and restarts at a cost that makes the original logging investment look trivial.

The specifics to get right are log store selection, access control design, PR template structure, and MCP server auditing. These are what our Regulated Industry AI services cover in a scoped engagement. The architecture isn't complex. It just needs to be done before the auditor asks.