Your IT team spent three months on the vendor comparison. The AI product lead flew in from Sydney for the demo. The proof-of-concept ran clean. Now you have a genuine choice between two enterprise AI packaging systems, and the wrong call could cost you $300K-$900K AUD over three years.

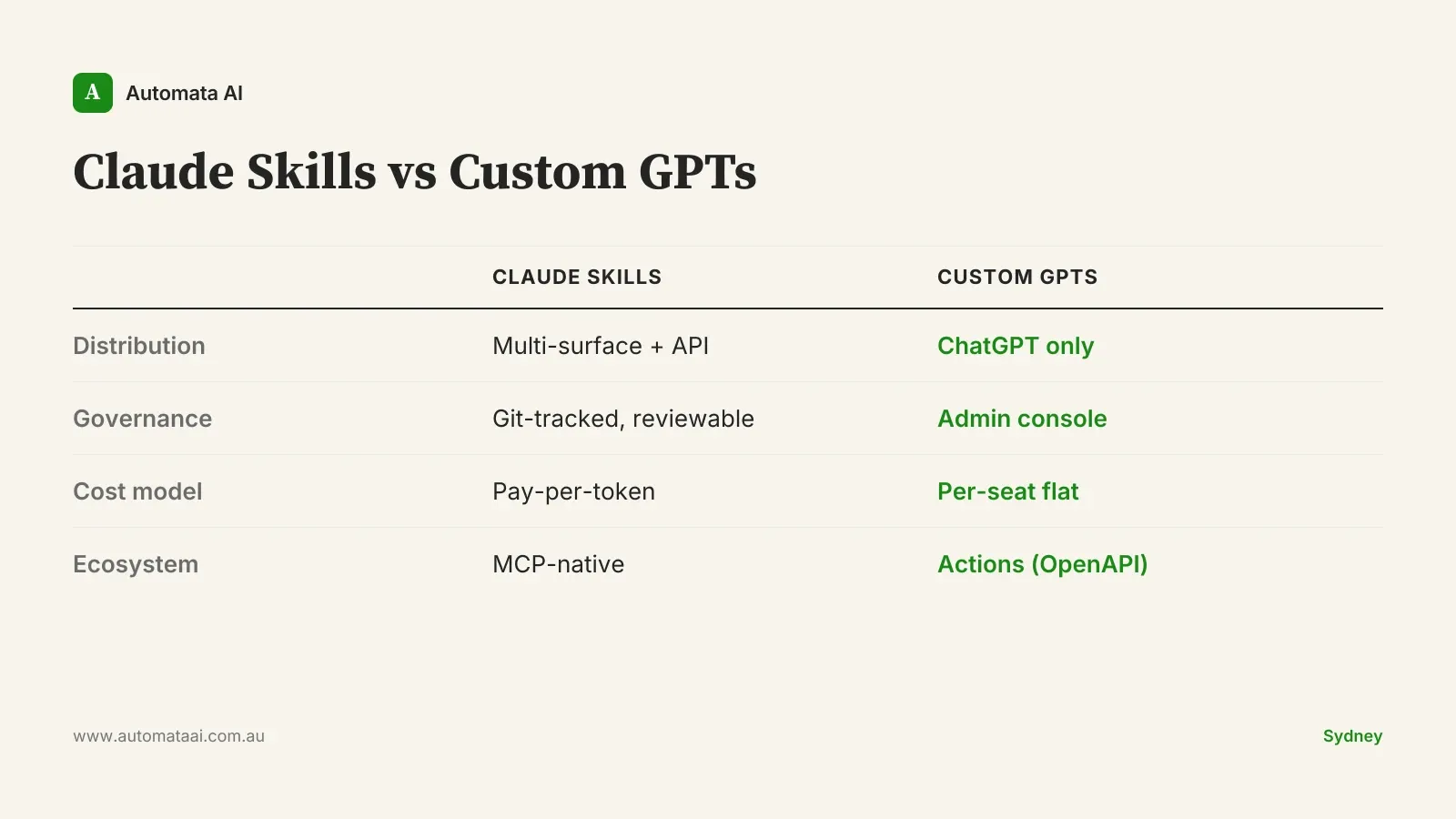

The distribution gap nobody talks about

Custom GPTs live inside ChatGPT. Getting them to your workforce means an enterprise ChatGPT seat per person. For teams that already hold those seats, that settles the question. The infrastructure is already there, the GPT store is familiar, and users know where to find things.

Claude Skills live wherever Claude runs: Claude.ai, Claude Code, the API, embedded inside custom-built apps, or invoked programmatically from internal systems. The distribution footprint is wider, but that flexibility has a cost. Someone has to design and maintain it. If your team does not have that capacity, you are not saving money. You are moving complexity somewhere it is harder to see.

For most Australian mid-market teams, this plays out the same way. Custom GPTs win on day-one ease. Claude Skills win on day-ninety coverage.

Governance: file-based audit vs admin console

For APRA CPS 230-regulated firms and Australian financial services teams more broadly, the governance model matters more than which model the tool runs on.

Custom GPTs are governed through the ChatGPT admin console. The GPT owner controls the system prompt, tool configurations, and access list. Audit comes through the dashboard. That is reasonable governance for general workforce tools.

A Claude Skill is a folder on disk, or a directory in your Git repository. That means the Skill can be tracked, versioned, reviewed in pull requests, and deployed through your existing CI pipeline. A security auditor reads it like any other code artefact. The system prompt is readable. The tool permissions are explicit. For APRA-regulated and government teams, that typically scores higher in a security review because the control surface is something the reviewer can inspect rather than a dashboard they have to trust.

Custom GPTs: enterprise admin console, creator history, usage logs.

Claude Skills: Git-tracked Skill folder, PR review, signed releases, CI evaluation suite.
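To make the file-based audit idea concrete, here is a minimal sketch of a check a CI pipeline could run against a Skill folder. It assumes the Skill follows the `SKILL.md` convention, with flat `name` and `description` fields in a front matter block at the top of the file. The function names and the list of required fields are illustrative choices, not part of any official tooling, and the parser only handles simple `key: value` lines.

```python
from pathlib import Path

# Assumption: a Skill folder contains a SKILL.md whose front matter
# (between two '---' lines) declares at least these flat fields.
REQUIRED_FIELDS = ("name", "description")


def read_front_matter(skill_md_text: str) -> dict[str, str]:
    """Parse flat 'key: value' lines between the opening and closing '---'."""
    lines = skill_md_text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return {}  # front matter was never closed, so treat it as absent


def audit_skill_folder(folder: Path) -> list[str]:
    """Return a list of human-readable problems; an empty list means pass."""
    skill_md = folder / "SKILL.md"
    if not skill_md.is_file():
        return [f"{folder.name}: missing SKILL.md"]
    fields = read_front_matter(skill_md.read_text(encoding="utf-8"))
    return [
        f"{folder.name}: front matter missing '{field}'"
        for field in REQUIRED_FIELDS
        if not fields.get(field)
    ]
```

A check like this fails the pull request when the list is non-empty, which is the kind of evidence a reviewer can read directly rather than take on trust from a dashboard.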

Neither is universally right. An 80-person professional services firm in Melbourne with no dedicated security team is probably fine with the admin console. A 300-person financial services firm in Sydney operating under APRA oversight needs the file-based audit trail.

The cost model depends on your usage shape

Custom GPTs are bundled into ChatGPT enterprise per-seat pricing. Roughly $40-$60 AUD per user per month at current Australian enterprise rates, flat regardless of how much those users actually use the tool.

Claude Skills cost API tokens. Heavy users cost more. Light users cost almost nothing. That asymmetry is the entire argument.

For a 500-person organisation, run the numbers. If 100 of those users are heavy (the automation processes documents or runs queries on their behalf daily) and 400 use it lightly, the API model almost always wins on total cost. If all 500 are heavy users, the per-seat model can win on simplicity and predictability.
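The arithmetic behind that comparison can be sketched in a few lines. The seat price below is the midpoint of the $40-$60 AUD range quoted above. The per-user monthly token spend figures are placeholder assumptions for illustration only, not measured data, and the sketch ignores the engineering cost of building and maintaining the API deployment. Swap in your own numbers.

```python
from dataclasses import dataclass

MONTHS = 36  # three-year horizon


@dataclass
class UsageProfile:
    heavy_users: int
    light_users: int


def per_seat_cost(total_users: int, seat_price_aud: float, months: int = MONTHS) -> float:
    """Flat per-seat licensing: every user costs the same regardless of usage."""
    return total_users * seat_price_aud * months


def token_cost(profile: UsageProfile, heavy_monthly_aud: float,
               light_monthly_aud: float, months: int = MONTHS) -> float:
    """Usage-based billing: cost scales with how much each group consumes."""
    monthly = (profile.heavy_users * heavy_monthly_aud
               + profile.light_users * light_monthly_aud)
    return monthly * months


SEAT_PRICE_AUD = 50.0     # midpoint of the $40-$60 range in the text
HEAVY_MONTHLY_AUD = 60.0  # ASSUMPTION: token spend per heavy user per month
LIGHT_MONTHLY_AUD = 3.0   # ASSUMPTION: token spend per light user per month

mixed = UsageProfile(heavy_users=100, light_users=400)
all_heavy = UsageProfile(heavy_users=500, light_users=0)

seat_total = per_seat_cost(500, SEAT_PRICE_AUD)
mixed_api = token_cost(mixed, HEAVY_MONTHLY_AUD, LIGHT_MONTHLY_AUD)
all_heavy_api = token_cost(all_heavy, HEAVY_MONTHLY_AUD, LIGHT_MONTHLY_AUD)

print(f"Per-seat, 500 users:       ${seat_total:,.0f} AUD")
print(f"API, 100 heavy/400 light:  ${mixed_api:,.0f} AUD")
print(f"API, 500 heavy:            ${all_heavy_api:,.0f} AUD")
```

Under these placeholder inputs the per-seat total is $900,000 AUD over three years, the mixed-usage API total is $259,200 AUD, and the all-heavy API total is $1,080,000 AUD. The ranking flips between the two usage profiles, which is the whole point: the answer depends on the split between heavy and light users, not on the headline price.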

The three-year difference between those two usage profiles can sit anywhere from $300K to $900K AUD, based on typical Australian enterprise token consumption and current ChatGPT enterprise pricing. That is enough to fund a full AI Automation Services engagement on top. Run your actual numbers through our ROI Calculator before signing either contract.

The MCP ecosystem tilt

Custom GPTs use OpenAI's Actions framework. It is OpenAPI-based, mature, and functional. If your internal systems expose REST APIs, which most do, Actions connects to them.
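For a sense of what "OpenAPI-based" means in practice, here is a minimal sketch that builds an OpenAPI 3.1 description for a single read-only endpoint and prints it as JSON. The customer lookup endpoint, the `getCustomer` operation name, and the server URL are all hypothetical. Your own systems will have different paths, and authentication is left out entirely.

```python
import json


def build_action_spec(server_url: str) -> dict:
    """Build a minimal OpenAPI 3.1 document describing one GET endpoint."""
    return {
        "openapi": "3.1.0",
        "info": {"title": "Customer lookup (hypothetical)", "version": "1.0.0"},
        "servers": [{"url": server_url}],
        "paths": {
            "/customers/{customerId}": {
                "get": {
                    # operationId is the name the model uses to refer to the call
                    "operationId": "getCustomer",
                    "summary": "Fetch one customer record by ID",
                    "parameters": [
                        {
                            "name": "customerId",
                            "in": "path",
                            "required": True,
                            "schema": {"type": "string"},
                        }
                    ],
                    "responses": {"200": {"description": "Customer record"}},
                }
            }
        },
    }


# Hypothetical internal host, for illustration only.
spec = build_action_spec("https://crm.example.com")
print(json.dumps(spec, indent=2))
```

If your internal REST APIs already publish an OpenAPI document, most of this exists and the work is mainly trimming it to the operations you want exposed.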

Claude Skills tap into MCP (Model Context Protocol) servers. In 2026, MCP is the faster-moving ecosystem. Salesforce, Atlassian, and a growing number of enterprise SaaS platforms are shipping native MCP connectors alongside their REST APIs. If your team already runs internal MCP servers for systems like your CRM, ERP, or document management platform, Claude Skills compose into that infrastructure with little extra integration work. That is a compounding advantage. Each new MCP connector your vendors ship can be made available to your Skills without building a new integration.

This matters most for engineering teams and regulated operations already building out MCP infrastructure. For most other teams, Actions and MCP are functionally equivalent at the integration layer.

When Custom GPTs are the right call

This is not a Claude pitch. For a meaningful portion of Australian mid-market teams, Custom GPTs are the right answer.

Your workforce is already on ChatGPT enterprise. The distribution problem is solved. Users know where to find GPTs, and you are not adding a new surface to manage.

Usage is broadly even across the organisation. If your whole workforce uses it daily without heavy document processing, per-seat cost is predictable and token billing is not worth the management overhead.

The use case fits inside ChatGPT's surface. General Q&A, document drafting, research support. Tools where staying inside the chat interface is exactly right.

The case falls apart when governance needs file-based audit, when distribution needs to span surfaces beyond the ChatGPT picker, or when your automation stack is MCP-native and Skills can slot in with little extra configuration.

Some Australian mid-market teams end up with both. Custom GPTs for general workforce use. Claude Skills for engineering, compliance, and regulated operations where the audit trail needs to be reviewable code. That is not a failure to decide. It is the right architecture for a certain scale of organisation.

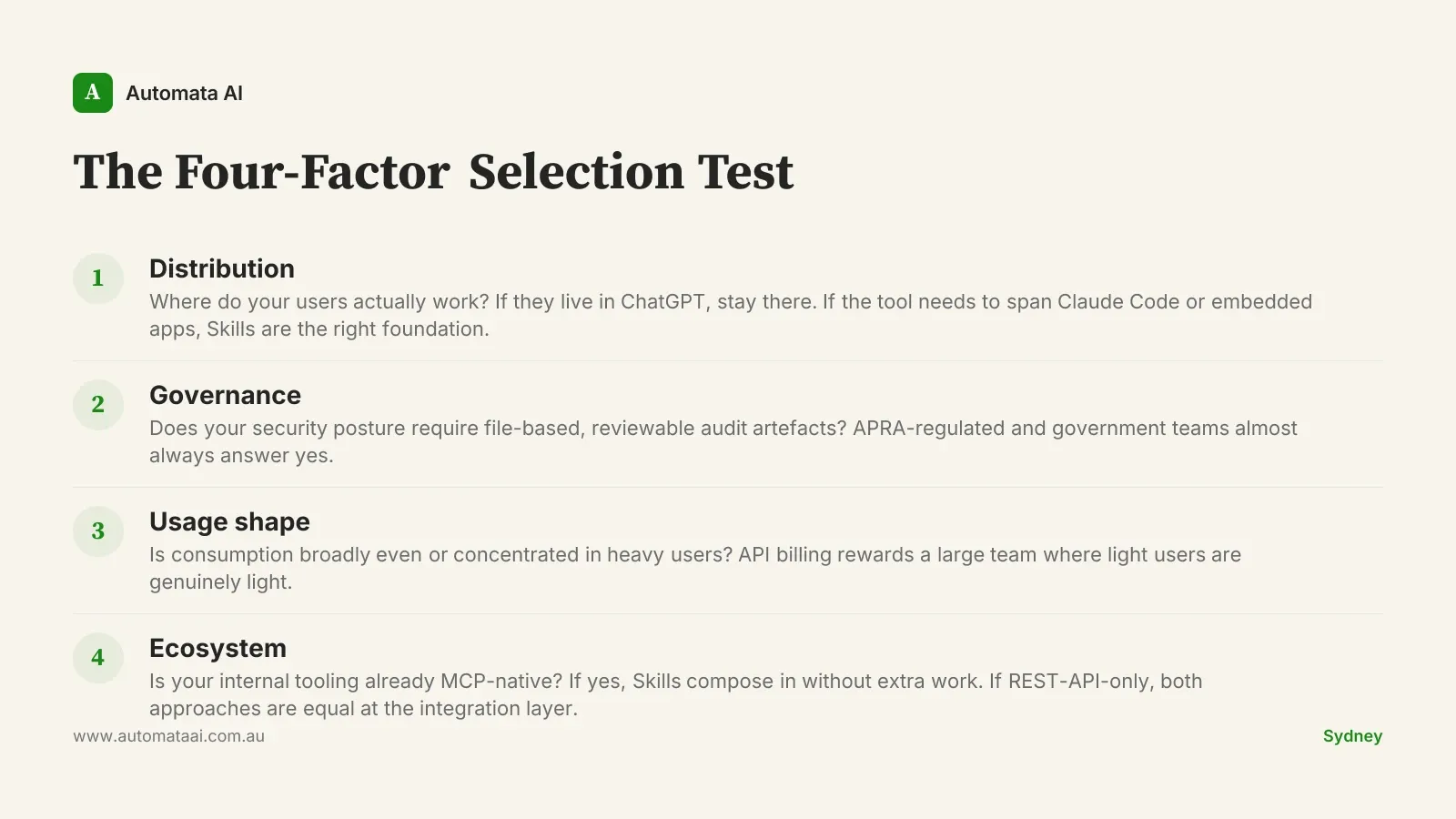

The four-factor selection test

Before committing to either platform, run the Four-Factor Selection Test. The four factors are distribution, governance, usage shape, and ecosystem. Each names a different constraint. Each has a different switching cost if you get it wrong.

Score each factor for your organisation as favouring either Custom GPTs or Claude Skills. If three or four land on one side, the decision is clear. If the score is split two-two, default to whichever side governance favours. That is the factor with the hardest switching cost six months down the track.
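The scoring rule is simple enough to write down. This is a minimal sketch of the test as described: a majority of three or four factors decides, and a two-two split falls back to whichever side governance favours. The function name and the `"custom_gpts"` / `"claude_skills"` labels are illustrative.

```python
FACTORS = ("distribution", "governance", "usage_shape", "ecosystem")
OPTIONS = ("custom_gpts", "claude_skills")


def select_platform(scores: dict[str, str]) -> str:
    """Apply the four-factor test; governance breaks a two-two tie."""
    for factor in FACTORS:
        if scores.get(factor) not in OPTIONS:
            raise ValueError(f"'{factor}' must be scored as one of {OPTIONS}")

    skills_votes = sum(1 for f in FACTORS if scores[f] == "claude_skills")
    gpt_votes = len(FACTORS) - skills_votes

    if skills_votes >= 3:
        return "claude_skills"
    if gpt_votes >= 3:
        return "custom_gpts"
    # Two-two split: governance has the hardest switching cost, so it decides.
    return scores["governance"]


example = {
    "distribution": "custom_gpts",
    "governance": "claude_skills",
    "usage_shape": "claude_skills",
    "ecosystem": "custom_gpts",
}
print(select_platform(example))  # prints "claude_skills" (tie broken by governance)
```

The value of writing it down is less the code than the discipline: each factor has to be scored explicitly before anyone argues about the overall answer.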

The businesses that get this wrong do not usually get it dramatically wrong. They get it expensively wrong. Six months into a per-seat commitment the team barely uses, or three months into an API deployment with no audit trail the security team will sign off on. Our AI Readiness Assessment will tell you which of the four factors matters most for your specific stack before you commit.