A cross-service change ships on Friday. The PR is clean. Claude wrote the billing service update, the user service update, and the API gateway config. Each piece passed its own tests. By Monday morning, the platform is returning 403s for authenticated users because the billing service assumed a JWT claim format that the user service had changed three months earlier. Nobody told Claude.

That is not a model problem. That is a context architecture problem.

Mid-market Australian engineering teams at Sydney and Melbourne scale-ups, typically 8 to 20 services and 20 to 60 engineers, are hitting this pattern repeatedly in 2026. A senior platform engineer at $220,000 fully loaded spending two days a week firefighting AI-generated misalignments costs the business roughly $90,000 a year in wasted capacity. That figure does not include the downstream PR rework, the Monday morning triage calls, or the senior engineers pulled off roadmap work to diagnose what went wrong.

The underlying cause is rarely the model. Claude Code does not intrinsically understand your service boundaries. It works from the context it is given. Most monorepo teams give it very little.

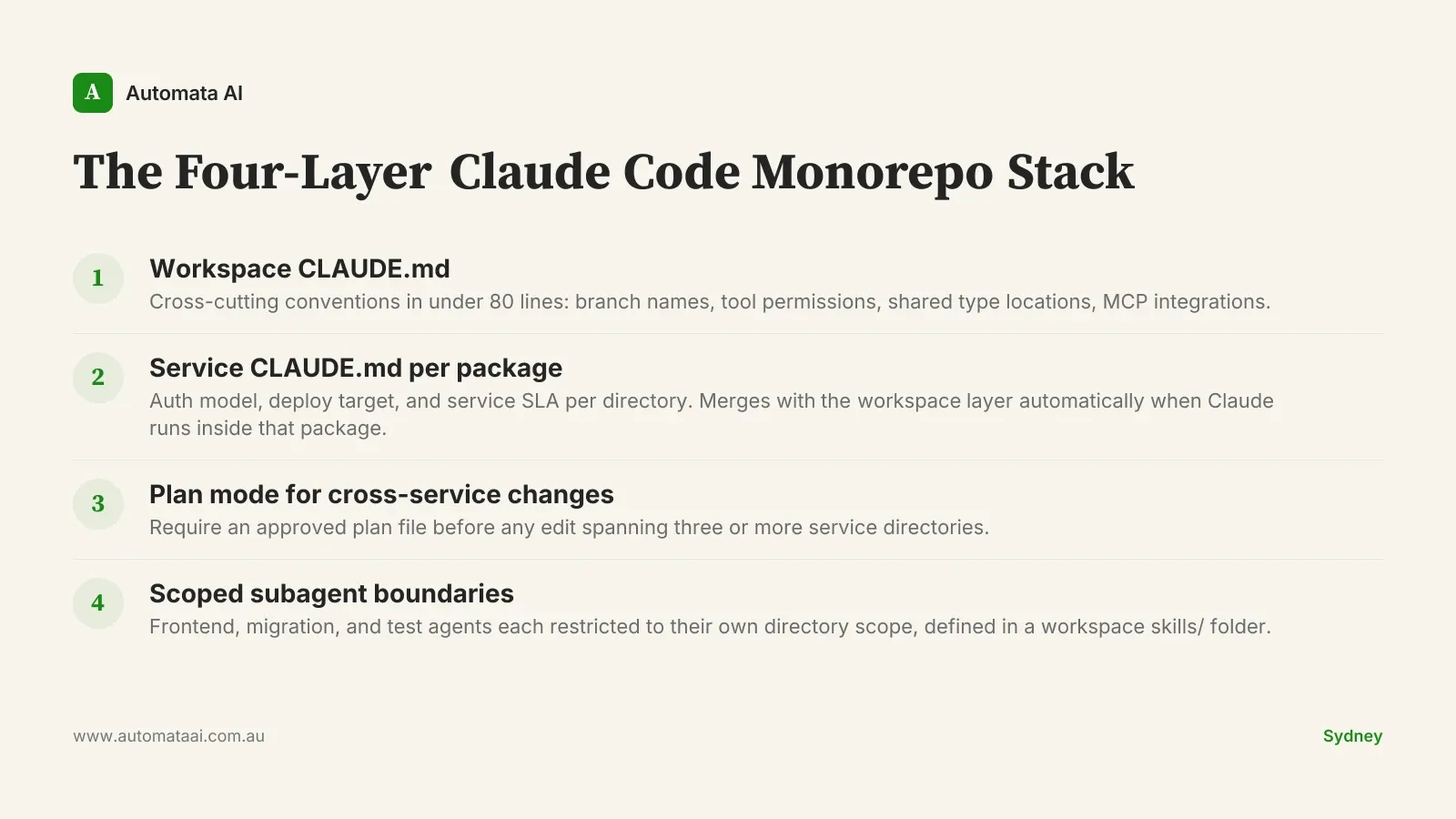

The fix is a four-layer context architecture called the Four-Layer Claude Code Monorepo Stack. Each layer is independently useful; together they make Claude Code a reliable development partner across services. If you want to map where your current setup sits before starting, our AI Readiness Assessment surfaces the gaps.

Layer 1: Workspace-level conventions

The repo root holds a short CLAUDE.md with cross-cutting conventions: branch naming, PR templates, code-review expectations, the test runner, and which tools Claude Code is permitted to call. Keep it under 80 lines. Anything beyond that belongs in linked documents Claude loads on demand.

Allowed and disallowed tools. If Claude should not run kubectl in a production context, or should not commit directly to main, say so here explicitly.

Branch and PR conventions. Jira-style prefixes, required PR description fields, review assignment rules that apply across every service in the repo.

Where shared types and API contracts live. If shared auth types are in packages/core/src/auth, put that path here. The billing engineer should not have to explain it every session.

MCPs configured at workspace scope. Linear for ticket context, Datadog for alert history. Register integrations here once, not per service.

The workspace CLAUDE.md is the first document a new engineer reads to understand how the team uses AI. It doubles as the onboarding document for the AI itself. Keep it honest, current, and short enough that people actually maintain it. Our AI Automation Services page covers how we help teams set this up as part of a structured engagement.

Layer 2: Service-level context per package

Each service gets its own CLAUDE.md scoped to that directory. A frontend package pulls the design system tokens, the component naming convention, and the accessibility baseline. A billing service pulls the payment gateway client pattern, idempotency requirements, and the integration test approach. A platform service pulls the SLA targets, alert thresholds, and the on-call escalation path.

When an engineer runs Claude Code inside a service directory, the workspace and service CLAUDE.md files merge automatically. The engineer working inside packages/billing gets both layers without switching context or loading extra files.

This composability is what prevents the Friday 403 scenario. The billing service CLAUDE.md includes the current JWT claim format and a pointer to the shared auth type definitions. Claude reads it before writing. The cross-service assumption that broke the Monday platform is surfaced before the PR is raised.

Layer 3: Plan mode gates cross-service changes

Not every change stays in one service. A feature touching the user service, the billing service, and the API gateway is precisely the situation where Claude should write the plan before touching code. Review it. Approve it. Then execute.

A repo-level hook that flags when edits span three or more service directories. Claude Code supports hooks natively. A lightweight shell check that prompts for an approved plan file is a one-afternoon implementation.

A required PR description section. When the diff spans services, the PR template asks for a link to the plan file. This creates an auditable record of intent.

A senior engineer review of any cross-service plan before execution. Not a veto. A fifteen-minute read that catches assumption mismatches before they reach production.

Plan mode is not a slowdown mechanism. It is the difference between Claude operating as an engineer who checks with their lead before touching shared infrastructure and one who does not.

Layer 4: Scoped subagent boundaries

Subagents work best in monorepos when scoped tightly. A frontend reviewer subagent gets access to packages/web and the design tokens. A schema migration subagent gets the migrations folder and the database client, nothing else. A test-runner subagent runs in the CI container with no write access to source directories.

The definitions live in a workspace skills/ directory so the boundary conventions travel with the repo. A new engineer onboarding six months from now gets the same agent scopes as the person who set them up. The defaults do not erode over time.

Tight scopes are not limitations imposed on the model. They are deliberate design decisions about what each agent is trusted to do. A migration agent that cannot touch service config cannot break service config.

When not to use this architecture

A monorepo with three services and five engineers does not need four layers of context architecture. The overhead of maintaining per-service CLAUDE.md files adds friction when the services are small and the team shares context naturally. At that scale, a single workspace CLAUDE.md with short service-specific sections is sufficient.

The four-layer stack earns its complexity at roughly the 8-service, 15-engineer threshold. Below that, the coordination overhead of the architecture exceeds the coordination problem it was designed to solve. This is not a universal best practice. It is a pattern that fits a specific scale.

One more check worth making: if your services have genuinely separate ownership, different teams with different deployment pipelines and different on-call rotations, a monorepo may be the wrong structural choice regardless of how you configure Claude Code. This stack assumes an intentional monorepo. Resolve the architecture question before investing in the tooling.

The teams that ship this well take three to four weeks and test each layer with a real cross-service change before moving to the next. The teams that struggle set up a root CLAUDE.md on day one and declare the job done. Six months later they are still correcting AI-generated code that does not understand the codebase.

Claude Code is a capable development partner in a monorepo. The context architecture is what makes it a reliable one. If you are sizing the investment for your engineering org, book a technical diagnostic with our team. We work with mid-market Australian engineering orgs to stand up this architecture in a structured engagement.