Three hours into a Claude Code session, the model contradicts a decision it agreed to before lunch. The engineer pastes the prior output back in. The model agrees with both versions.

Most teams conclude the tool does not work.

The tool works. Context engineering is the discipline of managing what Claude knows, in what order, across a working day. It is what keeps a session productive from standup to end of business. Miss it and the session drifts. Get it right and a long afternoon produces the same quality output as the first 30 minutes.

For a senior Australian engineer at $200,000 fully loaded, recovering two hours of drift-related rework per day, a quarter of an eight-hour day, is worth roughly $50,000 in annual capacity. Run that across a 20-person team and the gap reaches roughly $1M per year. You can model the numbers for your own headcount using our ROI Calculator.
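The arithmetic behind those figures is easy to check for your own numbers. The following is a minimal sketch, not the ROI Calculator itself: the function name is hypothetical, and it assumes an eight-hour working day and that recovered hours convert to capacity at the fully loaded rate.

```python
# Assumption (not stated in the article): an eight-hour working day.
HOURS_PER_DAY = 8


def recovered_capacity(fully_loaded_cost: float,
                       hours_recovered_per_day: float,
                       headcount: int = 1) -> float:
    """Annual dollar value of the hours recovered from drift-related rework."""
    share_of_day = hours_recovered_per_day / HOURS_PER_DAY
    return fully_loaded_cost * share_of_day * headcount


per_engineer = recovered_capacity(200_000, 2)
team = recovered_capacity(200_000, 2, headcount=20)
print(f"per engineer: ${per_engineer:,.0f}")    # per engineer: $50,000
print(f"20-person team: ${team:,.0f}")          # 20-person team: $1,000,000
```

Swap in your own cost, recovered hours, and headcount to see how sensitive the total is to each input.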

What context drift actually looks like

The context window is a working memory budget. Each session starts clean, and the budget fills over time with conversation history, file reads, tool output, and intermediate reasoning. When the window saturates, the model still produces output. Confidently. But with less of the earlier session available to draw on. Decisions made at 9 AM become inaccessible by 3 PM.

The result looks like an able model having a bad afternoon. That is not what is happening. The session accumulated dead weight: pasted logs from an abandoned debug run, repeated reads of the same file, intermediate reasoning that went nowhere. Nobody cleaned it out. This is a session-shape problem. It has a method.

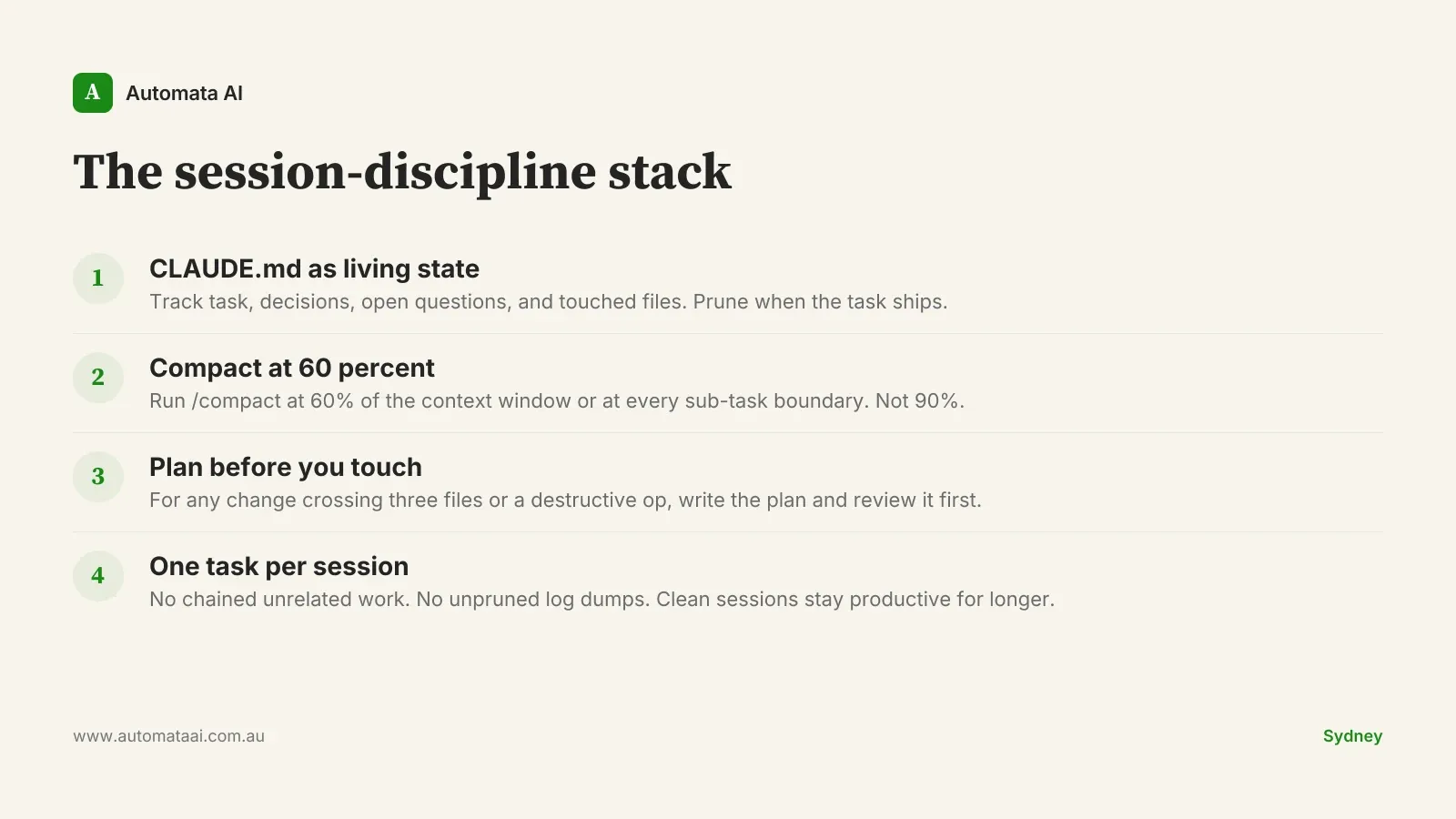

Pattern 1: CLAUDE.md as living state

The lowest-friction entry point is a CLAUDE.md file at the project root. Claude Code reads it at every session start. The file is a working scratchpad, not polished documentation. It gets pruned when the task ships. Four things belong in it.

Current task and acceptance criteria. Not a vague description. The specific output that marks this done.

Decisions made and the reasoning behind them. 'We chose SQLite because this runs locally' is more useful than 'We chose SQLite'. The reasoning prevents re-litigation two days later.

Open questions, and the answers when they land. Most teams skip this part. It is the most valuable part.

Files touched in this work stream. Claude can orient from the list at session start without re-reading each file in full.

Teams that adopt this pattern report 40 to 60 percent fewer cases where the model contradicts a prior decision. The reason is simple: the prior decision is in context before the first message of the day, not buried twenty turns back in a previous session the model cannot access.
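The four parts of the scratchpad can be scaffolded with a few lines of Python. This is a sketch under assumptions: the function name, the heading names, and the example file paths are all hypothetical, and CLAUDE.md has no required schema, so treat the layout as one reasonable option rather than a format the tool expects.

```python
def render_claude_md(task, done_when, decisions, open_questions, files_touched):
    """Render the four scratchpad parts as Markdown text.

    decisions:      list of (decision, reasoning) pairs
    open_questions: list of (question, answer) pairs; answer is None while open
    """
    lines = ["# Working state", "", "## Current task", task, "", "Done when:"]
    lines += [f"- {criterion}" for criterion in done_when]
    lines += ["", "## Decisions"]
    lines += [f"- {decision}, because {reason}" for decision, reason in decisions]
    lines += ["", "## Open questions"]
    for question, answer in open_questions:
        status = f" -> {answer}" if answer else " (open)"
        lines.append(f"- {question}{status}")
    lines += ["", "## Files touched"]
    lines += [f"- {path}" for path in files_touched]
    return "\n".join(lines) + "\n"


text = render_claude_md(
    task="Add local export of reports",
    done_when=["Export command writes a file the importer can read back"],
    decisions=[("We chose SQLite", "this runs locally")],
    open_questions=[("Do we need schema versioning?", None)],
    files_touched=["export/writer.py", "export/schema.sql"],  # hypothetical paths
)
print(text)  # save the result as CLAUDE.md at the project root
```

Recording the reasoning next to each decision is the design choice that matters here; the exact headings are interchangeable.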

Pattern 2: /compact before crossing the threshold

Long sessions accumulate dead context. Pasted stack traces that resolved. File reads from an approach that was abandoned. Reasoning chains that led to a dead end but were never cleared. All of it stays in the window, consuming budget that could serve the next subtask.

/compact compresses prior turns into a summary and frees that budget. The variable is not whether to use it. It is when.

At 60 percent of the context window, not 90. Waiting until the window is full means the compaction itself runs with degraded context.

At every sub-task boundary. Finishing a module, finishing a migration, finishing a debug cycle. Run /compact before the next task starts.

After a debug excursion that did not change the plan. The model explored it. Do not carry that exploration forward.

Before reading in a large file or activating a new MCP tool. Create the room before you need it.
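The four triggers above amount to a short checklist, which can be written down as code for a team that wants to make the habit explicit. This is a sketch only: nothing here calls Claude Code, the class and field names are hypothetical, and the token counts are values the engineer would estimate or read off by hand.

```python
from dataclasses import dataclass

# From the article: compact at 60 percent of the window, not 90.
COMPACT_THRESHOLD = 0.60


@dataclass
class SessionState:
    """Hypothetical snapshot of a session, filled in by the engineer."""
    tokens_used: int
    window_tokens: int
    at_subtask_boundary: bool = False
    debug_excursion_changed_nothing: bool = False
    about_to_load_large_input: bool = False  # large file or new MCP tool


def compact_reasons(state: SessionState) -> list[str]:
    """Return every trigger from the list above that currently applies."""
    reasons = []
    if state.tokens_used / state.window_tokens >= COMPACT_THRESHOLD:
        reasons.append("window at or above 60 percent")
    if state.at_subtask_boundary:
        reasons.append("sub-task boundary")
    if state.debug_excursion_changed_nothing:
        reasons.append("debug excursion did not change the plan")
    if state.about_to_load_large_input:
        reasons.append("large file or new MCP tool incoming")
    return reasons


# Illustrative numbers only; the window size is an assumption.
state = SessionState(tokens_used=130_000, window_tokens=200_000,
                     at_subtask_boundary=True)
for reason in compact_reasons(state):
    print("run /compact:", reason)
```

Any single reason is enough to compact; the function returns all of them so the engineer can see why.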

A Melbourne fintech engineering team ran an informal comparison across two sprints. Sessions with this discipline produced pull requests that needed 30 percent less rework in review. Sessions without it produced more confident-sounding output that turned out to be less correct. The overconfidence was the tell that context had run thin.

Pattern 3: Plan mode before any change worth reviewing

Plan mode forces Claude to write out the full approach before touching any files. You review the plan, push back on anything that looks wrong, and only then let it execute. This is not process overhead. It is the cheapest possible way to catch a misunderstanding before it propagates across three files and a migration script. Use it when any of the following is true.

The change touches more than three files. Cross-file changes are where a wrong assumption does the most compounding damage.

The change involves a migration or a destructive operation. Irreversibility raises the cost of a wrong direction.

The change affects shared infrastructure or authentication flows. The blast radius is wide enough to justify the extra step.

The engineer is new to this area of the codebase. Plan mode surfaces hidden assumptions before they become bugs discovered after a deploy.
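The four conditions above can be expressed as a small pre-flight check. This is a sketch under assumptions: the `Change` fields and the function name are hypothetical, and the inputs are judgments the engineer supplies, not values read from Claude Code.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """Hypothetical description of a planned change."""
    files_touched: int
    migration_or_destructive: bool = False
    shared_infra_or_auth: bool = False
    engineer_new_to_area: bool = False


def plan_mode_triggers(change: Change) -> list[str]:
    """Return the conditions from the list above that this change meets."""
    triggers = []
    if change.files_touched > 3:  # "more than three files"
        triggers.append("touches more than three files")
    if change.migration_or_destructive:
        triggers.append("migration or destructive operation")
    if change.shared_infra_or_auth:
        triggers.append("shared infrastructure or authentication")
    if change.engineer_new_to_area:
        triggers.append("engineer is new to this area")
    return triggers


change = Change(files_touched=5, migration_or_destructive=True)
triggers = plan_mode_triggers(change)
if triggers:
    print("use plan mode:", "; ".join(triggers))
else:
    print("plan mode optional")
```

An empty list means none of the conditions apply and the change can proceed without a reviewed plan.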

Our AI Readiness Assessment walks through how to embed plan mode and session discipline into an existing development workflow. Not as an optional layer. As the default.

When these patterns do not apply

Not every session needs all three. A 30-minute bug fix in a familiar part of the codebase, with a fresh context window, does not need a CLAUDE.md scratchpad or a /compact pass. That overhead on a simple task is waste. Mistaking discipline for dogma is its own kind of drift.

The signals that warrant the full stack: sessions expected to run more than two hours, changes crossing infrastructure or authentication boundaries, teams rotating through the same codebase who need shared context between engineers, or any task where a wrong direction costs a full day to unwind. Below that bar, stay light.

Most Australian engineering teams that report poor Claude Code sessions are running 45-minute sessions with no CLAUDE.md, no /compact pass, and three unrelated tasks chained end to end. Then they wonder why output quality drops after the first 20 minutes. That drop is not the tool's fault.

Start with one pattern in tomorrow's longest session. CLAUDE.md is the lowest barrier: fifteen minutes to create, no tooling required, and the payback is visible by the second session. If you're building this into a team-wide rollout, our AI Automation Services page describes how we structure that work with mid-market Australian engineering teams.