The proof of concept landed well. IT gave the green light. The team loved it. Now someone has to turn a 12-person pilot into a 500-user deployment across five departments, and nobody left a runbook behind.

That is the gap most enterprises fall into. The pilot proved the model works. What it did not prove was whether your access controls, governance policies, audit requirements, and team training could scale alongside it. For Australian mid-market organisations moving Claude into production, those gaps cost more than the licence.

The real cost of per-seat AI at enterprise scale

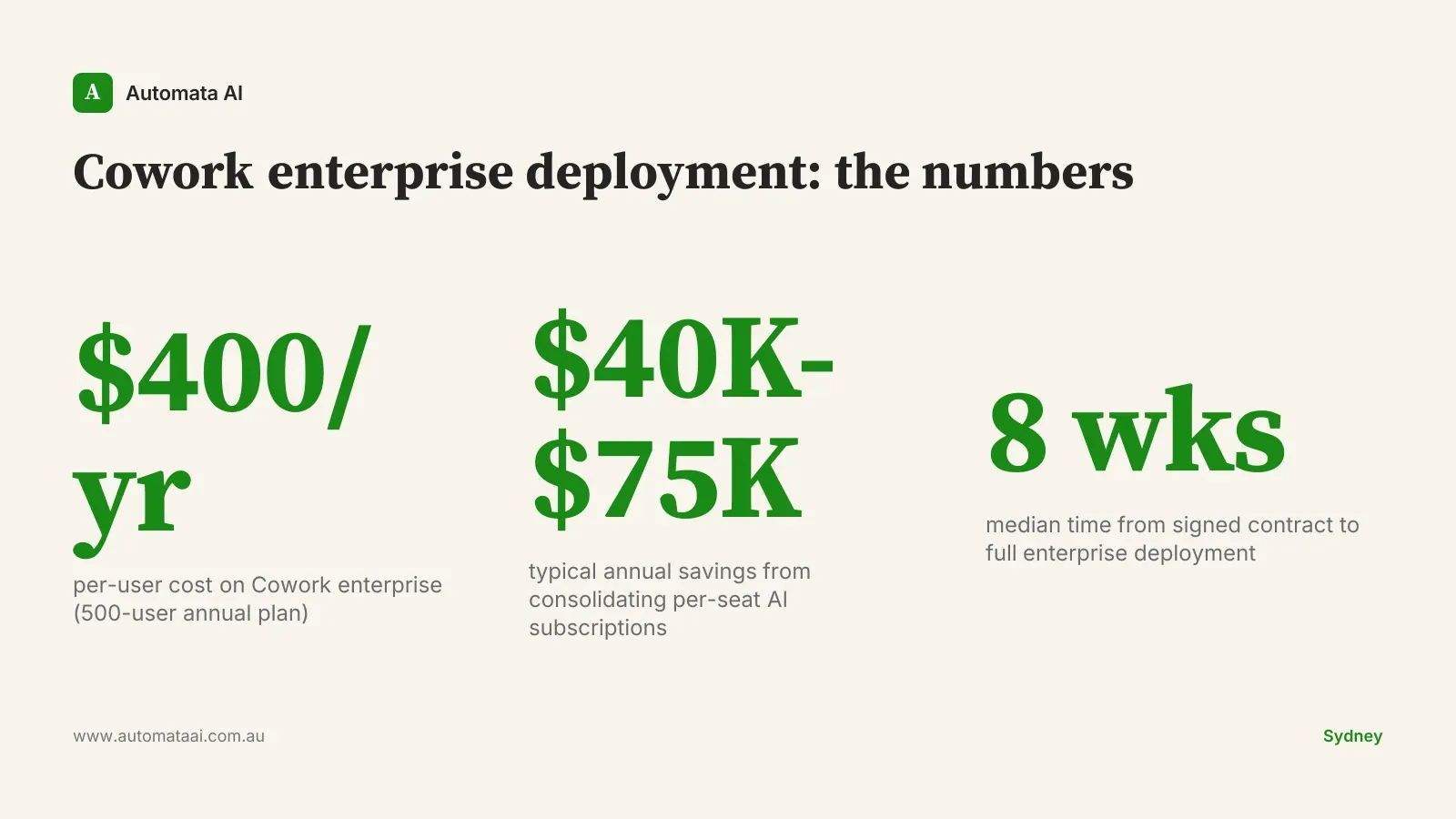

Per-seat AI tooling adds up differently than most finance teams expect. At a combined $50 to $150 per user per month, a 500-person organisation running three or four AI subscriptions in parallel is spending $300,000 to $900,000 per year. That is before IT integration overhead, security reviews, or the duplication that happens when departments buy their own tools independently.

Cowork enterprise licensing changes the maths. At AUD$200,000 per year for 500 users, the unit cost is AUD$400 per user per year. That is eight months of the low-end per-seat rate, and less than three months of the high-end rate. Based on typical mid-market tool sprawl, consolidation savings land between AUD$40,000 and AUD$75,000 annually, and that figure assumes only partial consolidation.

The AUD$200,000 covers the entire organisation, not a per-team budget. When each department stops running its own subscriptions, the payback arrives fast. Model your own scenario in the ROI Calculator with your headcount and current tool spend.
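The arithmetic behind these figures can be checked in a few lines. This is a minimal sketch, not the ROI Calculator itself: the function names are hypothetical, and it assumes the per-seat rates and the licence fee are in the same currency, which the figures above do not state explicitly.

```python
def annual_per_seat_spend(users: int, monthly_rate: float) -> float:
    """Annual spend when every user is billed monthly_rate per month."""
    return users * monthly_rate * 12


def licence_unit_cost(annual_licence: float, users: int) -> float:
    """Flat annual licence fee spread evenly across all users."""
    return annual_licence / users


USERS = 500
LOW_RATE, HIGH_RATE = 50, 150  # per user per month, from the range quoted above
LICENCE = 200_000              # per year, from the licence figure quoted above

low_spend = annual_per_seat_spend(USERS, LOW_RATE)
high_spend = annual_per_seat_spend(USERS, HIGH_RATE)
unit_cost = licence_unit_cost(LICENCE, USERS)

print(f"Per-seat spend: {low_spend:,.0f} to {high_spend:,.0f} per year")
print(f"Licence unit cost: {unit_cost:,.0f} per user per year")
# How many months of per-seat billing the annual unit cost is equivalent to
print(f"Months of billing at the low rate: {unit_cost / LOW_RATE:.1f}")
print(f"Months of billing at the high rate: {unit_cost / HIGH_RATE:.1f}")
```

Swap in your own headcount and rates to see where the flat licence starts to undercut per-seat billing.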

Governance before deployment: the structure audit teams actually accept

Under Australia's Privacy Act 1988 and APRA CPS 230 for regulated entities, any AI-assisted decision touching personal or financial data needs to be auditable. Saying "the AI recommended it" is not a defensible position in a regulatory review. Governance structure has to come before the rollout, not get added six months later when compliance starts asking questions.

The Cowork governance model operates at three levels. This is the structure that enterprise teams in Sydney and Melbourne have found passes both internal audit and external scrutiny:

Role-based agent access. Support teams access the support agent, compliance teams access the compliance agent, and engineering teams access the engineering agent. No agent operates outside its defined domain.

Audit logs for every AI-assisted decision. Who ran what, with what inputs, and what the output was. This is the evidence chain auditors and regulators look for under CPS 230.

Human fallback on defined high-risk tasks. Review of contracts worth more than $100,000, employment decisions, and regulatory filings route to a human before any action is taken.

Configured upfront, this structure takes two to three weeks to establish. Retrofitted under compliance pressure, it takes two to three months and still produces gaps. The difference is the sequence. All three levels come standard in our AI Automation Services enterprise tier.
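The three levels can be sketched together as a single request handler. This is an illustration under stated assumptions, not Cowork's actual configuration or API: the role names, agent names, task types, and the `Governance` class are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-agent mapping; real role and agent names will differ.
AGENT_ACCESS = {
    "support": {"support_agent"},
    "compliance": {"compliance_agent"},
    "engineering": {"engineering_agent"},
}

# Hypothetical high-risk rules mirroring the examples in the text.
ALWAYS_HUMAN = {"employment_decision", "regulatory_filing"}
CONTRACT_REVIEW_LIMIT = 100_000


@dataclass
class AuditEntry:
    user: str
    role: str
    agent: str
    task_type: str
    inputs: dict
    outcome: str  # a real log would also store the agent's output
    timestamp: str


@dataclass
class Governance:
    audit_log: list = field(default_factory=list)

    def needs_human(self, task_type: str, inputs: dict) -> bool:
        """Level 3: defined high-risk tasks go to a human first."""
        if task_type in ALWAYS_HUMAN:
            return True
        if task_type == "contract_review":
            return inputs.get("contract_value", 0) > CONTRACT_REVIEW_LIMIT
        return False

    def handle(self, user: str, role: str, agent: str,
               task_type: str, inputs: dict) -> str:
        # Level 1: a role may only reach the agents mapped to it.
        if agent not in AGENT_ACCESS.get(role, set()):
            outcome = "denied"
        elif self.needs_human(task_type, inputs):
            outcome = "escalated_to_human"
        else:
            outcome = "run_by_agent"
        # Level 2: every request is logged, including denials.
        self.audit_log.append(AuditEntry(
            user, role, agent, task_type, dict(inputs), outcome,
            datetime.now(timezone.utc).isoformat(),
        ))
        return outcome


gov = Governance()
print(gov.handle("alice", "support", "support_agent", "ticket_reply", {"ticket": 42}))
print(gov.handle("alice", "support", "compliance_agent", "ticket_reply", {"ticket": 42}))
print(gov.handle("bob", "compliance", "compliance_agent", "contract_review",
                 {"contract_value": 250_000}))
```

The three calls print `run_by_agent`, `denied`, and `escalated_to_human` in turn. Logging denials as well as successful runs is a design choice in this sketch: it keeps the evidence chain complete when an auditor asks who attempted what.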

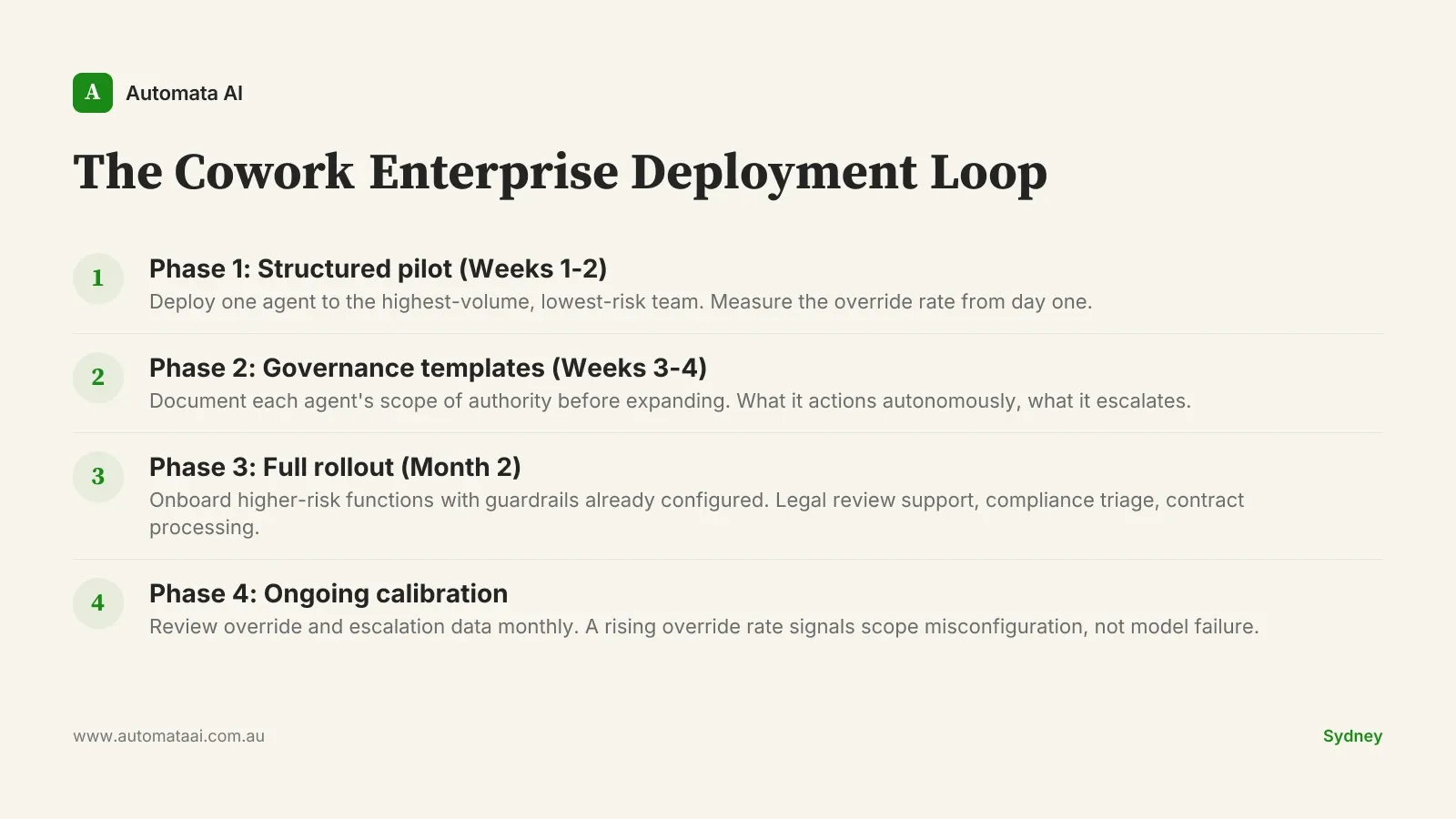

The Cowork Enterprise Deployment Loop

The four-phase structure below is the deployment model we use with Australian mid-market organisations. It does not end after month two. The override and escalation data from Phase 4 feeds directly back into Phase 1 scope definitions for the next agent you deploy. That feedback loop is what separates a one-time rollout from an AI programme that actually matures over time.

Phase 1 (Weeks 1-2). Deploy one agent to the highest-volume, lowest-risk team. Support and finance work well here because the processes are repetitive and the outputs are reviewable. Measure override rate from day one.

Phase 2 (Weeks 3-4). Build governance templates before expanding. Every agent profile gets a documented scope of authority: what it actions autonomously, what it escalates. No exceptions.

Phase 3 (Month 2). Onboard higher-risk functions: legal review support, contract processing, compliance triage. The guardrails from Phase 2 are already in place. This is when the Privacy Act obligations on AI-assisted decisions become load-bearing.

Phase 4 (Ongoing). Review override and escalation data monthly. The number worth watching is not AI accuracy. It is the rate of tasks humans override. A rising override rate in month three usually means the agent scope was defined too broadly.
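The Phase 4 review comes down to one number per month. Below is a minimal sketch of how it might be computed, assuming each task is recorded as a `(month, overridden)` pair. That record shape, the function names, and the three-month window are assumptions for illustration, not a Cowork export format.

```python
from collections import defaultdict


def monthly_override_rates(tasks):
    """Map each month to the share of tasks a human overrode.

    tasks: iterable of (month, overridden) pairs, e.g. ("2025-03", True).
    """
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for month, overridden in tasks:
        totals[month] += 1
        if overridden:
            overrides[month] += 1
    # Sorting "YYYY-MM" strings puts the months in chronological order.
    return {m: overrides[m] / totals[m] for m in sorted(totals)}


def is_rising(rates, window=3):
    """True if the rate increased at every step across the last `window` months."""
    values = list(rates.values())[-window:]
    if len(values) < window:
        return False
    return all(later > earlier for earlier, later in zip(values, values[1:]))


# Illustrative data only: 10 tasks per month with 1, 2, then 4 overrides.
sample = (
    [("2025-01", i < 1) for i in range(10)]
    + [("2025-02", i < 2) for i in range(10)]
    + [("2025-03", i < 4) for i in range(10)]
)
rates = monthly_override_rates(sample)
print(rates)
print("Scope review needed:", is_rising(rates))
```

With this sample data the rate climbs from 0.1 to 0.4 over three months, so the check flags the agent's scope for review.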

When enterprise deployment is premature

Not every organisation is ready for this. If your team is still working out what the core workflows actually are, enterprise licensing is premature and the governance overhead will feel like bureaucracy rather than structure.

The governance setup alone takes two to four weeks: role-based access configuration, audit trail infrastructure, manager training on override logs. For an organisation with fewer than 150 active AI users, that overhead pushes the payback period past 18 months. The AUD$200,000 structure does not pay for itself at 50-person scale. Four signs that it is too early:

Fewer than 150 active AI users. The per-user savings do not materialise at this scale.

Core workflows changing more than once per quarter. Governance templates built on shifting processes require constant revision.

No dedicated IT resource for access control management. Role-based access without ownership drifts and creates security gaps.

Fewer than two departments with stable, high-volume repetitive processes. Enterprise structure without enterprise volume is overhead, not investment.

If those describe your situation, start with an AI Readiness Assessment. Map the processes, model the ROI, document the governance requirements. Then come back to this playbook when the numbers justify it.
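The four warning signs can be turned into a quick self-check. This is a rough sketch only: the `OrgProfile` fields and the function name are hypothetical, and the thresholds are taken directly from the list above.

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    active_ai_users: int
    workflow_changes_per_quarter: int
    has_dedicated_it_owner: bool
    stable_high_volume_departments: int


def premature_signals(org: OrgProfile) -> list:
    """Return the warning signs from the list above that apply to this organisation."""
    signals = []
    if org.active_ai_users < 150:
        signals.append("fewer than 150 active AI users")
    if org.workflow_changes_per_quarter > 1:
        signals.append("core workflows change more than once per quarter")
    if not org.has_dedicated_it_owner:
        signals.append("no dedicated IT resource for access control")
    if org.stable_high_volume_departments < 2:
        signals.append("fewer than two departments with stable, high-volume processes")
    return signals


# Illustrative organisation, not real data.
org = OrgProfile(
    active_ai_users=90,
    workflow_changes_per_quarter=1,
    has_dedicated_it_owner=True,
    stable_high_volume_departments=3,
)
for signal in premature_signals(org):
    print("Not ready:", signal)
```

An empty result does not prove readiness. It only means none of these four signs applies.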

The deployment is the work, not the model

Enterprise AI rollouts are not slowed by the model. Claude is ready. What slows them is access control and governance structure, which most organisations try to build after the fact, under deadline pressure, once the rollout has already started creating compliance exposure.

Cowork gives you the structure before the pressure arrives. Pick a team with stable, high-volume processes. Define the agent scope before you deploy it. Instrument the override rate from day one. The organisations that move from POC to full production in under eight weeks are not the ones with the best technology. They are the ones that planned the governance before they planned the features.