The most common moment for Privacy Act compliance to become urgent is when someone asks the question out loud. Your AI system has been processing customer data for six months. Legal is asking. But you do not have a documented answer for how it handles personal information.

OpenAI's Privacy Filter is one tool designed to give you that answer.

What Privacy Filter actually is

Privacy Filter is a dedicated PII detection and masking model released under the Apache 2.0 licence. Open-weight means you can run it on your own cloud tenancy, on-premises servers, or a private VPC without sending data to a third-party model endpoint. It detects names, addresses, phone numbers, government identifiers, financial account numbers, and health information, substituting masked tokens before any downstream system sees the raw data.

That matters directly for Australian Privacy Principle 8, which governs cross-border disclosure of personal information. Running preprocessing inside your own infrastructure eliminates one of the more complex compliance obligations for organisations currently sending unfiltered data to US-hosted model endpoints.

The four Australian organisations with direct Privacy Act exposure

Four categories of Australian organisation have immediate reason to evaluate this seriously.

APRA-regulated financial services. Customer data flows through AI for credit analytics, fraud detection, and support operations. PII filtering before data reaches a model reduces compliance exposure and supports CPS 234 information security obligations, which require documented controls over information assets.

Healthcare providers. Patient data sits at the intersection of the Privacy Act and the My Health Records Act. A documented preprocessing layer adds an auditable control before any AI system touches clinical or administrative records.

Government agencies. Citizen data comes with handling requirements that make cloud-based processing difficult to justify politically and practically. An open-weight model running on-premises avoids the cloud exposure question entirely.

Any Australian enterprise with employee data in AI workflows. Performance reviews, HR support transcripts, payroll queries, internal communications — all can contain sensitive personal information. Processing them through AI without a filtering layer is defensible only if you have documented why the risk is acceptable.

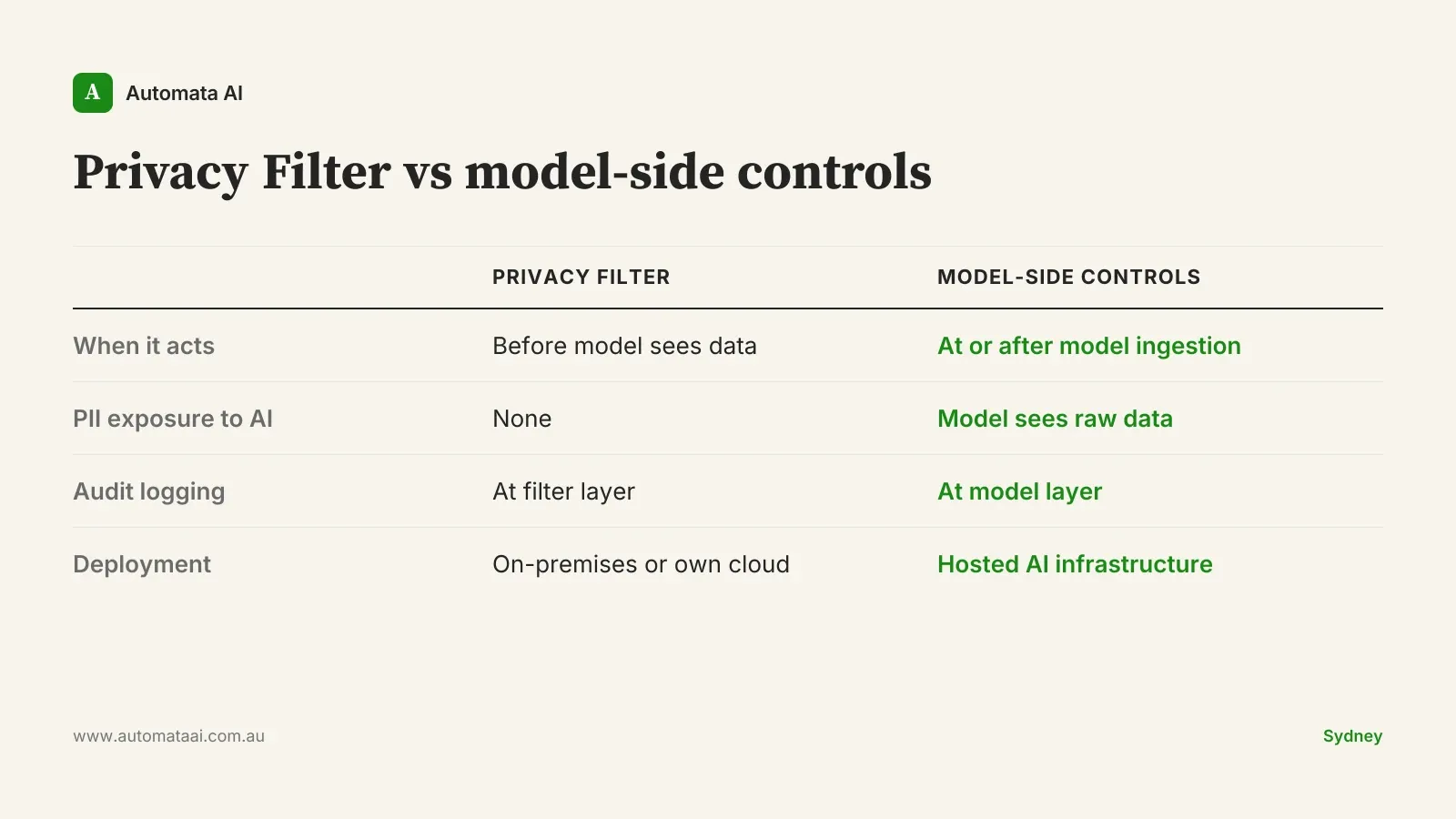

Pre-processing or model-side: a different compliance posture

The alternatives to Privacy Filter are real and often good. Many Australian teams already rely on custom system prompts that instruct the model to handle PII appropriately, MCP servers that sanitise inputs before they reach the model, or Bedrock and Vertex AI deployments that come with data-residency guarantees and formal data processing agreements. These are mature, documented choices with strong vendor support.

The difference is timing. Those approaches operate at or after the point where the AI model encounters your data. Privacy Filter operates before. The model sees [PERSON_NAME] and [AU_PHONE_NUMBER] instead of the actual values. That is a structurally different compliance posture.

Neither approach is correct in the abstract. It depends on your data sensitivity, your regulatory context, and how mature your AI governance documentation is. The pre-processing posture is stronger for highly regulated inputs; the model-side posture can be sufficient for lower-sensitivity workflows with appropriate controls. If your compliance team cannot articulate which posture your organisation has adopted, that gap is more urgent than which tool you choose.

When Privacy Filter is the wrong answer

There are scenarios where a separate preprocessing model adds complexity without meaningful compliance benefit.

Your data is already de-identified upstream. Coded, structured data from a warehouse that already strips identifiers does not need a second filter.

The sensitivity profile does not warrant it. Internal productivity tools processing general business correspondence have a different risk profile from systems handling customer financial data. Do not over-engineer low-risk workflows.

Volume does not justify the overhead. Privacy Filter is free to licence but not free to operate. If the data volume is low enough that manual review is feasible, infrastructure deployment is not the answer.

You are in early pilot phase. If you have not yet scoped which inputs carry PII, design the pilot to answer that question first before building a filtering layer on top of an undefined input set.

A preprocessing layer you cannot maintain is worse than no preprocessing layer. Build the operational capability before you build the architecture.

Three deployment patterns for Australian organisations

1. High-volume support transcript processing

Run Privacy Filter as a batch step before any transcript reaches the AI layer. Customer names, account numbers, contact details, and reference numbers are masked at ingestion. The analysis model works on redacted text and produces output that can be stored without triggering the same data handling obligations as the raw transcript. Log what was masked and when — that log is your audit trail if the OAIC ever asks.

2. Document analysis with mixed-sensitivity content

Legal, HR, and compliance document review often involves a mix of sensitive and non-sensitive material. Privacy Filter as a first pass identifies and masks personal information; the analysis model works on the output. The filtering step is fast and cheap. The analysis step is expensive. Keep them separate and only send to the model what genuinely needs analysis. This also limits your exposure on the model's data retention terms, which vary significantly between providers.

3. Full compliance posture for audit-ready workflows

Preprocessing with Privacy Filter, audit logging at the filter step, plus model-side controls as a second layer. This is the architecture you document when a regulator asks how your AI system handles personal information. Both layers need to be logged. The documentation is the point. For organisations under APRA supervision or with significant healthcare data exposure, this posture should be baseline.

What it costs to run this in Australia

Licensing is zero. Privacy Filter is Apache 2.0 with no usage fees. Operational cost for a Sydney or Melbourne deployment covers compute, monitoring, patching, and ongoing operations. The total typically runs $20,000 to $80,000 per year, depending on processing volume, whether you use managed GPU infrastructure, and whether your team is self-managing or engaging a provider for model hosting and maintenance. At higher volumes, the per-record cost drops significantly.

Compare that to a notifiable data breach under the Privacy Act. External audit, mandatory notification to affected individuals, OAIC reporting, and remediation typically lands between $200,000 and $1 million before you account for reputational damage and the cost of rebuilding customer trust in sectors where trust is the product. For organisations processing material volumes of personal information through AI, the case for a filtering layer is not complicated.

The Privacy Act's notifiable data breach scheme gives you 30 days to report a qualifying breach and notify affected individuals once you become aware of it. The right time to design your AI data handling architecture is before that 30-day clock starts.