Your sprint board says two weeks. Your agent finished the feature in six hours.

That gap isn't a curiosity. It's the structural problem your product organisation now has.

The standard sprint cadences Australian product teams run (two-week cycles, weekly standups, monthly releases) were designed around how long it takes a skilled developer to write, review, and ship code. Agentic AI has broken that assumption. Not gradually. All at once. The workflow your product team built over the last three years is now miscalibrated for the speed at which features can be produced.

The cost of running at human speed

For a mid-market Sydney or Melbourne product team with a fully loaded cost of $120–$150 per hour across engineers, QA, and product management, the arithmetic is direct. A two-week feature build, at roughly 80 hours of effort, costs $9,600–$12,000 in time. An agent-assisted build on the same spec takes six to eight hours, reviewed and shipped. Run twenty features a year and the efficiency gap is $160K–$240K, before counting the revenue you are not capturing because a competitor shipped first.
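A rough sketch of that arithmetic in Python, so you can swap in your own numbers. The 80-hour figure is an assumption inferred from the quoted costs ($9,600 / $120 and $12,000 / $150 both equal 80), and the function and constant names are illustrative only.

```python
# Assumption: a two-week build is 80 hours of effort (one person, two 40-hour weeks).
HOURS_PER_SPRINT_BUILD = 80
FEATURES_PER_YEAR = 20


def annual_gap(rate_aud: float, agent_hours: float) -> float:
    """Yearly cost difference between sprint-paced and agent-assisted builds."""
    sprint_cost = rate_aud * HOURS_PER_SPRINT_BUILD
    agent_cost = rate_aud * agent_hours
    return (sprint_cost - agent_cost) * FEATURES_PER_YEAR


# Conservative case: lowest hourly rate, slowest agent-assisted build.
low = annual_gap(rate_aud=120, agent_hours=8)
# Optimistic case: highest hourly rate, fastest agent-assisted build.
high = annual_gap(rate_aud=150, agent_hours=6)

print(f"Annual gap: ${low:,.0f} to ${high:,.0f} AUD")
```

Under those assumptions the gap comes out at about $172,800 to $222,000 a year, which sits inside the $160K–$240K range quoted above. A different role mix or feature count will move it.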

The businesses most likely to close that gap against you are not US hyperscalers. They are comparably sized Sydney and Melbourne competitors who adopted agentic workflows six months before you did. Per feature, that is a six-to-twelve-week head start. Compounded across a product roadmap, it is a category position. A Sydney fintech that ships a new lending feature in a week, while a competitor is still in sprint planning, has six weeks of user data, feedback, and iteration before that competitor ships anything. That is not a temporary advantage. It compounds.

The numbers vary by team size and role mix. Run this against your own headcount in our ROI Calculator. It handles AUD figures and mid-market headcount configurations.

The new product loop

The headline story is that AI writes code faster. The actual shift runs deeper.

When agents can produce a working feature from a well-written spec in hours, the bottleneck moves upstream to the specification itself. Product managers who write vague briefs get vague features. The garbage-in problem does not disappear. It now plays out at roughly ten times the speed.

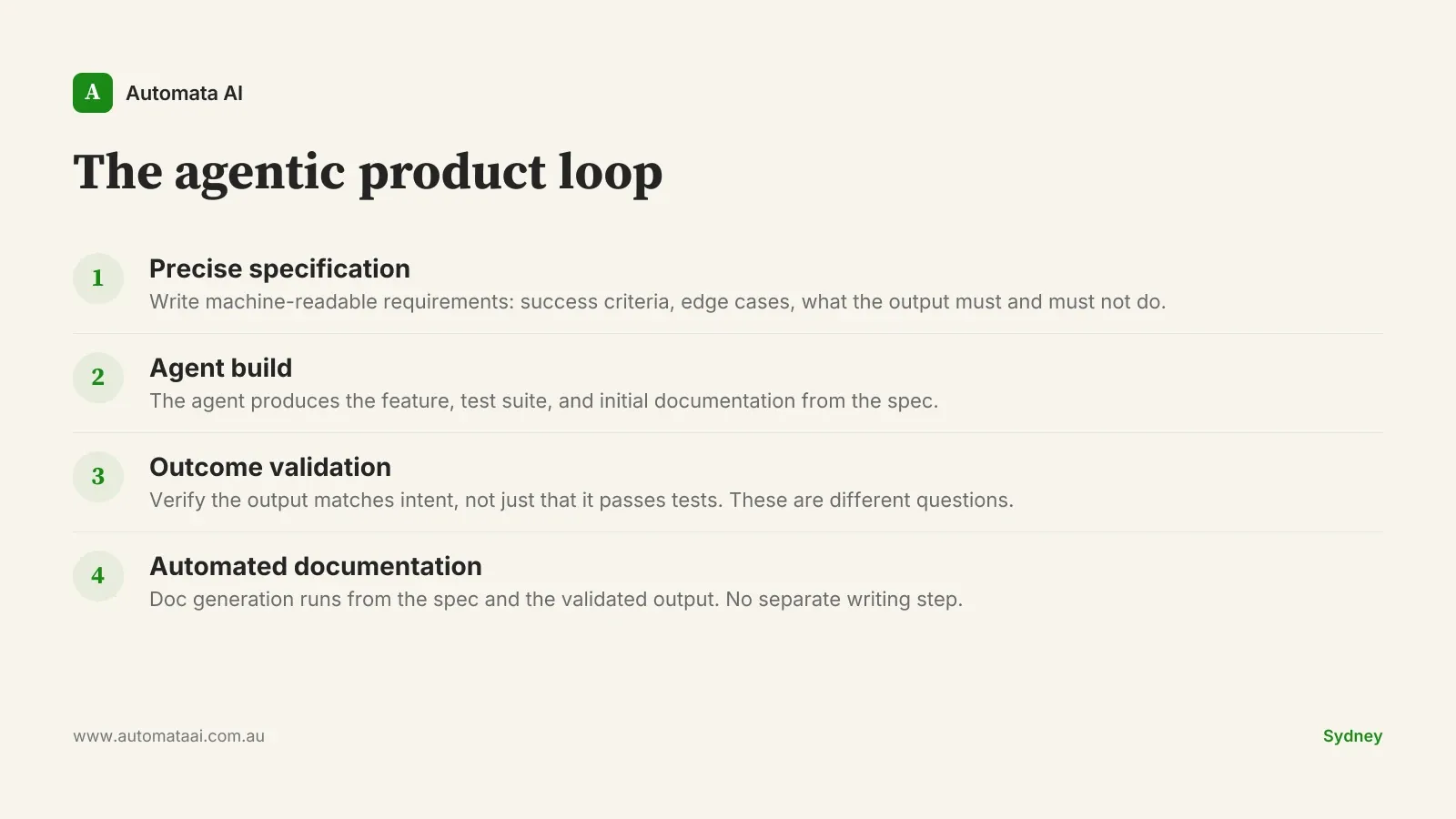

The agentic product loop runs as follows: precise specification, agent build, outcome validation, automated documentation. No human coding step in the middle. Product managers sit at the front, writing the spec. Engineers sit at the back, verifying that the output matches intent, not just that it passes tests.

This changes QA fundamentally. Traditional QA tests whether the implementation matches the requirement. Agentic QA tests whether the outcome matches the intent. A feature can pass every unit test and still fail the user's actual need. This is not a testing failure. It is a specification failure.

The spec is now the product

Version control used to mean code control. In an agentic workflow, the specification document is the source of truth. If the spec is ambiguous, the codebase will be too. If it drifts across three iterations, so will the feature behaviour. Engineers who have worked in agentic environments often describe the spec as the actual product. The code is the output, not the source.

Product teams in Melbourne and Sydney that have adopted agentic tooling are finding their weakest link is documentation discipline, not technical capability. An agent builds exactly what you specified. The problem is that what you specified was not what you meant. This gap existed in traditional development too. A skilled developer would ask clarifying questions and fill in the blanks. An agent does not. It ships exactly what you wrote.

Product managers are developing a new skill: writing machine-readable requirements. Not because agents cannot parse natural language, but because precise language produces predictable outcomes. The difference between 'make the checkout faster' and 'reduce API response time from 400ms to under 150ms on the checkout endpoint' is the same difference you will see in the output. Specification quality is the first thing we address in our AI Automation Services before any agent tooling is introduced.
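One way to picture a machine-readable requirement is a small record that refuses to count as complete until the measurable parts are filled in. This is a minimal sketch, not a standard format: the `Requirement` class, its field names, and the "lower is better" check are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Requirement:
    """Hypothetical structured requirement; field names are illustrative."""
    summary: str
    metric: Optional[str] = None      # what is measured
    baseline: Optional[float] = None  # where it is today
    target: Optional[float] = None    # where it must end up
    unit: Optional[str] = None
    scope: Optional[str] = None       # which part of the product

    def missing_fields(self) -> List[str]:
        """Names of the measurable fields that were left blank."""
        names = ["metric", "baseline", "target", "unit", "scope"]
        return [n for n in names if getattr(self, n) is None]

    def met_by(self, measured: float) -> bool:
        """Assumes lower is better, as in 'under 150ms'."""
        if self.target is None:
            raise ValueError("requirement has no target to check against")
        return measured < self.target


vague = Requirement(summary="make the checkout faster")
precise = Requirement(
    summary="reduce API response time on the checkout endpoint",
    metric="api_response_time",
    baseline=400,
    target=150,
    unit="ms",
    scope="checkout endpoint",
)

print(vague.missing_fields())    # every measurable field is blank
print(precise.missing_fields())  # nothing left to guess
print(precise.met_by(140))
```

The vague brief fails the completeness check on every field, while the precise one gives both the agent and the reviewer a number to verify against.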

When not to adopt agentic workflows

Not every part of your product should move to agent workflows. The temptation after seeing agentic speed in action is to apply it everywhere immediately. Three conditions where the traditional development model is still correct:

Regulated data flows. If your product operates under Australian Privacy Principles, APRA CPS 230 obligations, or AUSTRAC requirements, agent-generated code touching those data paths needs structured human review at the integration point. Automated tests catch errors. Humans catch misaligned intent. Regulators care about intent.

Unstable process definitions. If requirements change every sprint because the business has not decided what it wants, agents will build the wrong thing faster. Speed amplifies direction, good or bad.

Core transaction and identity systems. Agents are productive on net-new features and isolated components. Agents modifying live payment rails, identity management, or core transaction processing require the same careful review those systems have always demanded.

The $160K–$240K annual efficiency advantage disappears quickly if agent-generated code ships bugs into a payments system at three times the normal velocity.
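The three conditions above can be turned into a simple pre-flight check that runs before a change is handed to an agent. The sketch below is hypothetical: the `PlannedChange` fields and the `CORE_SYSTEMS` names are placeholders you would replace with your own system inventory and compliance tagging.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Assumption: placeholder names for systems treated as core.
CORE_SYSTEMS = {"payments", "identity", "transactions"}


@dataclass
class PlannedChange:
    """Hypothetical description of a change about to be built."""
    name: str
    touches_regulated_data: bool = False
    requirements_stable: bool = True
    systems: Set[str] = field(default_factory=set)


def hold_back_reasons(change: PlannedChange) -> List[str]:
    """Return the reasons, if any, to keep a change on the traditional path."""
    reasons = []
    if change.touches_regulated_data:
        reasons.append("regulated data flow: needs structured human review")
    if not change.requirements_stable:
        reasons.append("unstable requirements: settle the process definition first")
    core = sorted(change.systems & CORE_SYSTEMS)
    if core:
        reasons.append("core system touched: " + ", ".join(core))
    return reasons


wishlist = PlannedChange(name="wishlist sharing", systems={"catalogue"})
refunds = PlannedChange(
    name="refund flow",
    touches_regulated_data=True,
    systems={"payments"},
)

for change in (wishlist, refunds):
    reasons = hold_back_reasons(change)
    print(change.name, "->", reasons or "candidate for agent workflow")
```

An empty list only means none of the three conditions applies. It is not a guarantee that the change is safe to automate.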

Managing drift in practice

Agent code quality is good but not uniform. Output on well-defined, isolated tasks is reliable. Output on loosely specified, cross-cutting concerns is where drift occurs. It happens silently, across iterations, and is rarely visible in test results alone. By the time a team notices the codebase has drifted from the original intent, the spec and the code have diverged across dozens of builds.

Track spec-to-output fidelity. At each iteration, compare what was built against what was specified. Not whether it works. Whether it matches intent. These diverge early and quietly.

Require human review on critical paths. Automated tests and human reviewers are both necessary because they catch different things: tests catch errors, reviewers catch misaligned intent.

Treat the spec as a living document. When agent output reveals a gap in the spec, update the spec. Your codebase reflects the quality of your documentation.
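Spec-to-output fidelity can be tracked with very little machinery. The sketch below assumes a reviewer records, for each build, whether the output matched the intent of each spec criterion. The `Iteration` class, the criterion names, and the 0.9 threshold are assumptions for illustration, not recommended values.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Iteration:
    """One agent build and the reviewer's verdict on each spec criterion."""
    build: int
    verdicts: Dict[str, bool]  # criterion -> does the output match intent?


def fidelity(iteration: Iteration) -> float:
    """Share of spec criteria whose output matched intent (0.0 to 1.0)."""
    if not iteration.verdicts:
        return 0.0  # nothing was verified, so nothing is confirmed
    return sum(iteration.verdicts.values()) / len(iteration.verdicts)


def drifting_builds(history: List[Iteration], floor: float = 0.9) -> List[int]:
    """Build numbers whose fidelity fell below the chosen floor."""
    return [it.build for it in history if fidelity(it) < floor]


history = [
    Iteration(1, {"latency": True, "error_copy": True, "audit_log": True, "retry": True}),
    Iteration(2, {"latency": True, "error_copy": True, "audit_log": False, "retry": True}),
    Iteration(3, {"latency": True, "error_copy": False, "audit_log": False, "retry": True}),
]

for it in history:
    print(f"build {it.build}: fidelity {fidelity(it):.2f}")
print("needs review:", drifting_builds(history))
```

In this made-up history every build could still pass its tests, yet fidelity falls from 1.00 to 0.75 to 0.50. Logging the verdicts per build makes that slide visible at the second build rather than after dozens.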

If you are not sure whether your product workflows are spec-mature enough for agentic tooling, an AI Readiness Assessment covers spec quality and process stability directly.

The businesses building competitive positions in 2026 are not the ones with the most engineers. They are the ones with the most disciplined specification process and the infrastructure to verify output at agent speed. The product velocity advantage is real, but only if the spec quality is there to direct it.

Pick one feature that has been on your roadmap for six weeks. Write a precise spec: what success looks like, what the edge cases are, what the output must and must not do. Run it through an agent workflow. The gap between what you get and what you expected will tell you more about your product organisation's agentic readiness than any pilot program.