Forty-eight hours. Five teams. All of them shipped to real users before the weekend was over.

That's not a marketing claim. It's what happened at the Claude Code and Opus 4.6 hackathon. Not a demo day. Not a pitch competition. Five production agents, processing real customer data, before the event closed.

The results told us more about where production AI is heading than six months of analyst reports.

What the winning teams built

Three projects set the benchmark. Not because they were the most technically sophisticated. Because they solved the most expensive problems and shipped to real users before the event closed. For Australian businesses, two of these use cases map directly onto workflows consuming 40 to 60 hours per month across finance and customer operations. Financial reconciliation and compliance-adjacent support work that currently sits on the desks of your most experienced staff, not contractors you can easily scale.

Automated QA testing: 70% less cycle time

A testing agent cut QA cycle time by 70%. For a software team spending two full days per sprint on manual regression, that recovers roughly $85,000 per year from a single process. The agent ran inside Claude Code, integrated with the team's existing test suite, and was processing real tickets within 18 hours of the event opening.

Customer support triage: $80K annual saving

A support triage system reduced ticket-routing time to near zero and eliminated the need for an additional hire the business had already budgeted. Estimated annual saving: $80,000. The team used structured outputs from the start, which meant every triage decision was auditable from day one.

Financial reconciliation: four hours to 12 minutes

A reconciliation tool took a daily four-hour process down to 12 minutes. That's roughly 200 hours per month returned to a finance team. At $100 per hour fully loaded, that's $240,000 in annual capacity recovered. This project shipped to $50,000-plus in monthly active usage within 30 days of the hackathon.

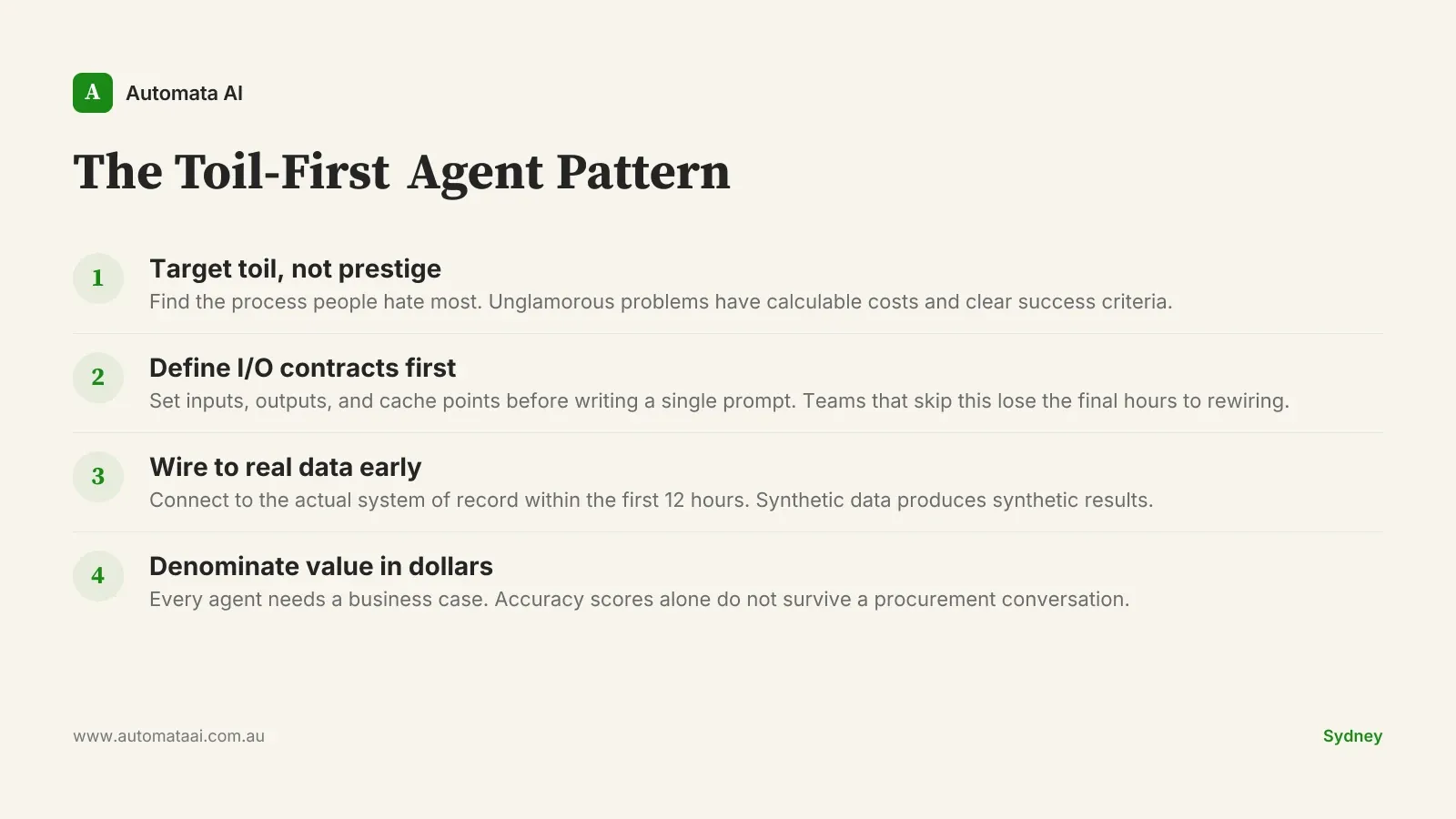

The three patterns that separated winners from participants

The winning teams weren't the ones with the cleverest prompts or the most sophisticated model configuration. They had three things in common, and those three things form what we call the Toil-First Agent Pattern. None of them are obvious until you've watched twenty teams try and fail in the same 48 hours.

Target toil, not prestige. The three winning agents automated processes people hated. Not impressive demos, not frontier capabilities. QA regression, ticket routing, bank reconciliation. Unglamorous problems with calculable costs.

Structure I/O contracts before writing a single prompt. Prize-winning teams defined their inputs and outputs early, cached system prompts, and spent their time wiring agents to real data. Teams still iterating on prompts in the final two hours rarely shipped anything usable.

Denominate value in dollars from hour one. "94% accurate" does not survive a procurement conversation. "$80,000 annual saving" does. Every finalist had a business case attached, not just a benchmark score.

For teams building under Australian Privacy Act obligations, the structured-output approach has a secondary benefit: every agent input and output is logged and inspectable. That's not just good engineering. It's what compliance teams need before they'll approve a production deployment.

When a hackathon win doesn't translate to production

Here's the honest part. Plenty of projects that performed well during the event won't survive contact with production. The reasons are predictable, and they have nothing to do with the model. The agent isn't the variable. Problem selection is.

The data is messier than the demo. Hackathon agents run on curated samples. Production systems have missing fields, encoding errors, and edge cases nobody documented. An agent running at 94% accuracy on clean data often falls to 60% on the real thing.

The process changed after they built it. Rules, approval workflows, and exceptions shift. An agent built to a frozen spec starts degrading the moment the business moves, and most businesses move within the first quarter.

The agent was never wired to the actual system of record. Winning a hackathon with a standalone script is not the same as integrating with a live CRM, ERP, or reconciliation platform. The last 20% of the work takes 80% of the time.

Australian mid-market teams planning for the Q3 2026 hackathon should build with this in mind from hour one. The prize pool is meaningful. The 30 days that follow are where the real test happens. An agent that can't handle your messiest live data, your most awkward edge case, or your compliance team's documentation requirements won't survive an internal review regardless of how well it ran during the event.

The timing is also relevant. Australia's mid-market sits at a genuine inflection point. APRA CPS 230 obligations have made operational resilience a board-level priority in financial services. Privacy Act reforms have raised the cost of unstructured data handling. These aren't obstacles to agent deployment. They're the reason well-structured, auditable agents are exactly what compliance teams will approve.

What Australian teams should prepare before Q3 2026

The Q3 2026 hackathon is open to Australian teams. The prize pool exceeds $50,000, and winners receive production-grade agent consulting. At our Transform engagement pricing of $150,000 to $300,000 for a full production deployment, that consulting is a substantive commitment. It's the difference between leaving with a prototype and leaving with a deployment roadmap a CFO can approve.

The more important number is this: the average prize-winner in the most recent cohort reached $50,000-plus in monthly active usage within 30 days of the event. That's not a demo milestone. That's a business. It happened because those teams arrived with a real problem, a real data source, and a payback calculation that made the deployment decision straightforward.

Claude Code runs inside VS Code, JetBrains, and Warp. Opus 4.6 handles the reasoning layer. The infrastructure gap between a proof of concept and a production agent has narrowed considerably over the past 12 months. The bottleneck now is problem selection, not tooling.

If you want to identify which processes in your business sit above the $50,000 annual threshold before the event, our AI Readiness Assessment is the place to start. Most mid-market businesses have two or three processes that qualify. Finding them takes less time than most teams expect.

Our AI Automation Services include pre-hackathon scoping for teams that want to arrive with a validated problem statement, a defensible business case, and a data sample that reflects production reality rather than a curated subset.

The businesses that did well at this hackathon didn't win because they're better engineers. They won because they spent less time deciding what to build. Pick the toil. Quantify it using our ROI Calculator — if the annual saving clears $50,000 and the process runs daily, 48 hours is enough time to build something real.