Last year, a mid-sized Sydney professional services firm ran a routine penetration test. The testers used an AI-assisted fuzzing tool and found three exploitable API endpoints in four hours. The firm's previous test, with the same scope but human testers only, took two weeks and found one.

The attacker's clock has changed. Yours probably hasn't.

The attack has changed pace, not just shape

Commercial-grade AI-assisted penetration testing tools cost between $50,000 and $100,000 today. That is expensive, but within reach of any mid-tier threat actor or organised criminal operation. Within six to twelve months, we expect capable open-source equivalents to cost nothing. The cost barrier that previously kept sophisticated attack tooling out of reach is disappearing. A security posture that was appropriate in 2023, when these tools were largely confined to well-resourced state actors, is already inadequate in 2026.

Fuzzing is the automated testing of inputs to find unexpected system behaviours. With an AI model generating the test cases, it runs 10 to 100 times faster than a human tester. A Claude-class model can produce thousands of edge-case inputs per minute, covering input combinations a human would never think to try. Exploitation chains that previously took a security team weeks to develop now execute in hours.
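To make the idea concrete, here is a minimal sketch of a mutation fuzzer run against your own code. Everything in it is illustrative: `parse_quantity` is a hypothetical input handler with a planted bug, and a seeded random mutator stands in for the AI model that would generate test cases in a real tool.

```python
import random

ALPHABET = "0123456789-+ .eE\x00"  # characters the mutator may inject


def parse_quantity(raw: str) -> int:
    """Hypothetical handler under test: expects a string such as '12'."""
    value = int(raw)            # raises ValueError on malformed input
    if value < 0:
        raise ValueError("negative quantity")
    return 100 // value         # planted bug: crashes when value == 0


def mutate(seed: str, rng: random.Random) -> str:
    """Apply one random edit: insert, delete, or replace a character."""
    chars = list(seed)
    op = rng.choice(("insert", "delete", "replace")) if chars else "insert"
    if op == "insert":
        chars.insert(rng.randrange(len(chars) + 1), rng.choice(ALPHABET))
    elif op == "delete":
        del chars[rng.randrange(len(chars))]
    else:
        chars[rng.randrange(len(chars))] = rng.choice(ALPHABET)
    return "".join(chars)


def fuzz(target, seeds, rounds=2000, rng_seed=0):
    """Return {input: exception name} for failures other than ValueError."""
    rng = random.Random(rng_seed)
    unexpected = {}
    for _ in range(rounds):
        candidate = mutate(rng.choice(seeds), rng)
        try:
            target(candidate)
        except ValueError:
            continue            # expected rejection of malformed input
        except Exception as exc:
            unexpected[candidate] = type(exc).__name__
    return unexpected


findings = fuzz(parse_quantity, ["12", "7", "100"])
```

Each entry in `findings` is an input that made the handler fail in a way it was not designed to. The speed advantage described above comes from replacing the random mutator with a generator that learns which inputs are worth trying.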

User enumeration, credential stuffing, and API logic flaw exploitation are all automatable at machine speed. This is not speculative. We are already seeing it in client red-team exercises. The question is not whether your systems will face AI-assisted probing. It is when, and whether your detection infrastructure will notice before the attacker finds a gap worth using.

The five-layer AI defence stack

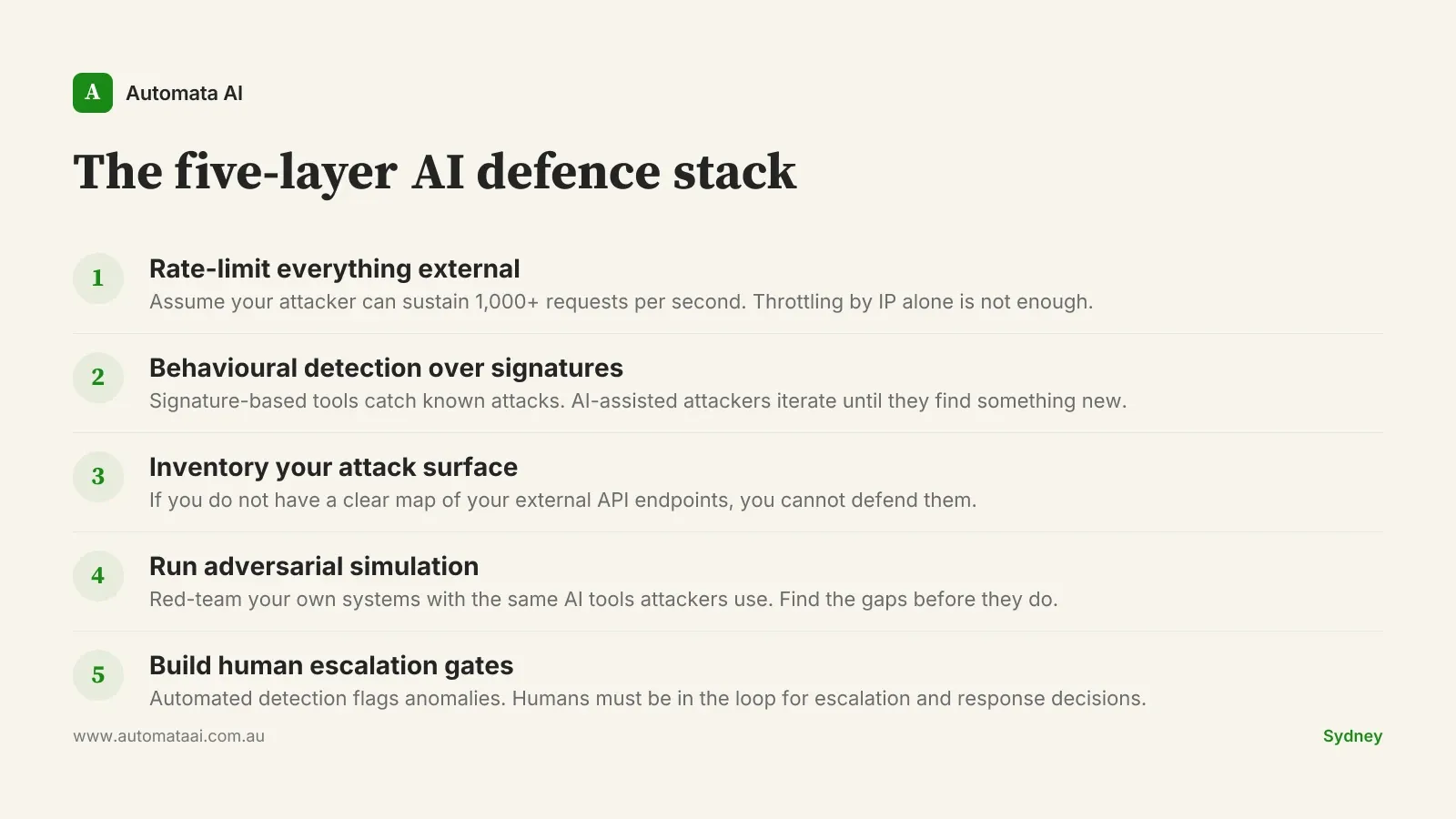

The standard security playbook was designed for human attackers operating at human speed. It needs updating. We call the updated approach the five-layer AI defence stack: five areas where AI-assisted offence has created new exposure that conventional controls were not designed to cover.

The hardest layer to implement is behavioural detection. Signature-based tools catch known attack patterns, but AI-assisted attackers iterate rapidly, refining their inputs until nothing matches a known signature. What you need instead is anomaly detection: systems that flag when a series of requests looks statistically unusual, even if each individual request passes a signature check. That is a different tooling category, and most mid-market security stacks do not have it yet. It needs four to six weeks of baselining against normal traffic before it becomes useful.
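As a rough illustration of what baselining means, the sketch below flags a client whose request volume in one window sits far above its own history. The per-minute counts, the function names, and the z-score threshold of 3 are all assumptions for the example; commercial detection tooling uses richer features than raw volume.

```python
from statistics import mean, stdev


def build_baseline(counts_per_window):
    """Summarise normal traffic as (mean, standard deviation)."""
    return mean(counts_per_window), stdev(counts_per_window)


def is_anomalous(observed, baseline, z_threshold=3.0):
    """Flag a window whose count is far above the baseline mean."""
    mu, sigma = baseline
    if sigma == 0:
        return observed > mu    # flat baseline: any increase stands out
    return (observed - mu) / sigma > z_threshold


# Hypothetical requests per minute from one client during baselining.
normal_traffic = [20, 22, 19, 21, 20, 23, 18, 21]
baseline = build_baseline(normal_traffic)

quiet_minute = is_anomalous(21, baseline)   # within normal variation
burst_minute = is_anomalous(90, baseline)   # far outside the baseline
```

No single request in the burst needs to look malicious. The series is flagged because its volume is statistically unusual for that client, which is the property signature checks cannot give you.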

The adversarial simulation layer is where most businesses underinvest. Running your own AI-assisted red-team exercise, using the same tools an attacker would use, surfaces gaps a conventional penetration test will miss. Our AI Automation Services include security-adjacent assessments that apply this approach. The goal is to find the exploitable path before the attacker does.

What Australian regulators now expect

Under the Privacy Act's Notifiable Data Breaches scheme, a suspected eligible data breach must be assessed within 30 days and notified to the OAIC and affected individuals as soon as practicable. The Privacy and Other Legislation Amendment Act 2024 added new civil penalty tiers and enforcement powers on top of that. AUSTRAC expects digital transaction monitoring systems to flag anomalies that previously required human pattern-matching. For APRA-regulated entities, CPS 234 requires information security capability commensurate with the threats to your information assets, and notification to APRA within 72 hours of becoming aware of a material information security incident. That threat environment changed materially in 2025.

Privacy Act compliance. Eligible data breaches must be assessed within 30 days and notified as soon as practicable, and penalties have been strengthened, so slow detection now carries direct legal exposure.

AUSTRAC digital monitoring. AML/CTF rules require transaction monitoring capable of matching the speed of AI-assisted fraud attempts.

APRA CPS 234 review. Financial services boards should expect AI-aware security controls to feature in 2026 prudential audits.

APRA has signalled publicly that AI-specific risk assessments will feature in regular prudential audits for regulated entities by the end of 2026. Businesses that wait for the formal prudential standard before acting will be starting from behind. The Privacy Act amendments are already in force. AUSTRAC expects controls now, not when the next rule update is published. For boards with APRA obligations, the safer posture is to treat 2026 as the compliance deadline, not 2027.

When not to build the full stack immediately

Not every business needs to spend $200,000 to $300,000 on AI security hardening in 2026. If your entire operation is internal, with no customer-facing APIs, no regulated personal data, and no APRA or AUSTRAC obligations, the threat profile is lower and the spend would be disproportionate. Three kinds of exposure change that calculation:

External APIs. Any internet-facing endpoint is now in scope for AI-assisted fuzzing. This includes webhook receivers, authentication endpoints, and third-party integrations.

Regulated personal data. Financial, health, or identity data under the Australian Privacy Principles creates direct breach notification exposure under the updated Privacy Act.

Customer authentication flows. Login, payment, and identity verification paths are the highest-value targets for AI-assisted credential and logic attacks.

If none of those apply, a basic rate-limiting and monitoring uplift runs $15,000 to $25,000 and is a more proportionate place to start.
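For readers unfamiliar with what basic rate limiting involves, here is a minimal token-bucket sketch. The class name, the refill rate, and the capacity are assumptions for illustration. In practice the limit is usually enforced at an API gateway or reverse proxy rather than in application code.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, refilling at `rate_per_sec`."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock                  # injectable for testing
        self.tokens = float(capacity)       # start with a full bucket
        self.updated = clock()

    def allow(self):
        """Return True and spend a token if the request may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical limit: one request per second, bursts of up to three.
fake_now = [0.0]
bucket = TokenBucket(rate_per_sec=1, capacity=3, clock=lambda: fake_now[0])
burst = [bucket.allow() for _ in range(4)]      # fourth request is refused
fake_now[0] = 2.0                               # two seconds later
after_wait = [bucket.allow() for _ in range(3)] # two tokens have refilled
```

A limit like this does not stop a determined attacker, but it removes the machine-speed advantage on any single endpoint and gives your monitoring something to alert on.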

Where to start in 2026

For businesses that do have external API exposure, the right first step is not a $300,000 programme. It is an honest inventory: which endpoints are internet-facing, what data they handle, and what rate limits are currently in place. That inventory takes a week with the right template. Once you have it, the prioritisation almost does itself. Endpoints handling authentication or financial data go to the top. Endpoints returning publicly available information can wait. Our AI Readiness Assessment includes a security surface review as part of the engagement.
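The inventory and its prioritisation can be sketched in a few lines. The field names, the example endpoints, and the middle "personal" tier are assumptions for illustration; the ordering of authentication and financial data first and public data last follows the rule described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Endpoint:
    path: str
    internet_facing: bool
    data_handled: str                    # e.g. "authentication", "public"
    rate_limit_per_min: Optional[int]    # None means no limit in place


# Lower number = review sooner. The "personal" tier is an assumption.
PRIORITY = {"authentication": 0, "financial": 0, "personal": 1, "public": 2}


def prioritise(inventory):
    """Order internet-facing endpoints by data sensitivity.

    Within a tier, endpoints with no rate limit come first.
    """
    exposed = [e for e in inventory if e.internet_facing]
    return sorted(
        exposed,
        key=lambda e: (
            PRIORITY.get(e.data_handled, 1),
            e.rate_limit_per_min is not None,
            e.path,
        ),
    )


inventory = [
    Endpoint("/status", True, "public", 600),
    Endpoint("/login", True, "authentication", None),
    Endpoint("/payments", True, "financial", 60),
    Endpoint("/admin/reports", False, "financial", None),
]
review_order = [e.path for e in prioritise(inventory)]
```

Here `/login` comes first because it handles authentication and has no rate limit, `/payments` follows, `/status` can wait, and the internal-only report endpoint drops out of the list entirely.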

For mid-market businesses at roughly $15 million to $200 million in revenue with customer-facing digital systems, a focused hardening engagement covering rate limiting, behavioural monitoring setup, and a one-day AI red-team exercise runs $40,000 to $80,000. That is the realistic minimum for 2026 if AI-assisted probing is already part of your threat model. For enterprise, the $200,000 to $300,000 figure covers a more complete programme: detection tooling procurement, an adversarial simulation engagement, and a security architect to integrate it across your existing stack.

The businesses that will be caught flat-footed are the ones that modelled their 2026 security posture against 2023 attacker behaviour. That gap is real and widening. The defensive tools exist, the playbook is clear, and the regulatory expectations are set. The cost of acting early is a fraction of the cost of a breach notification to the OAIC and 72 hours of incident response at $200 to $400 per hour.