Two requests, same prompt, different behaviour. That's what some Claude production teams observed on April 23, when a deployment-ordering issue at Anthropic created a brief window where some inference traffic hit a partially-configured state.

The incident lasted roughly 90 minutes. Recovery was clean. Anthropic's status communication was fast and specific. But for Australian enterprises running Claude in any customer-facing capacity, the postmortem itself is the valuable document.

What happened on April 23

A deployment-ordering error meant that during the rollout window, some requests hit a fully-updated configuration while others hit the previous state. The result: elevated error rates and inconsistent model behaviour across requests in the same session. Not every affected request failed outright. Some returned inconsistent outputs with no visible error signal. That's the failure mode that's hardest to catch: silent inconsistency rather than a clean error response.

Anthropic published a detailed postmortem, a genuine example of how AI vendors should handle availability incidents. The less visible lesson is what the incident reveals about the production risks every Claude consumer inherits downstream.

Three lessons for Australian production teams

1. Partial rollouts create cross-region version drift

During a partial rollout, your Australian Bedrock workload and a parallel workload on the direct Anthropic API can briefly see different model behaviour. Same organisation, different surface, different version window. For 90 minutes on April 23, that window existed. Production agents that make downstream decisions based on model output need tolerance for this. A risk assessment pipeline that classifies a document as medium-risk on one request and low-risk on the next, with no configuration change on your side, is the kind of silent inconsistency that becomes a compliance audit finding. For workflows with financial or compliance implications (which describes most mid-market deployments across financial services, legal, and professional services firms), treat inconsistent model behaviour as an operational risk event. If you are APRA-regulated, that puts it within the scope of CPS 230, and your incident classification framework should reflect it.
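One way to build that tolerance is to make the inconsistency visible. The sketch below assumes you already log each classification with a hash of the input, the model identity, and the returned label. The `Decision` record and its field names are hypothetical. It groups the log by input and flags any document that received more than one label, along with the model identities that produced each label.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    # Hypothetical shape of one logged classification; field names are illustrative.
    input_hash: str      # hash of the document text sent to the model
    model_identity: str  # model name plus whatever deployment marker you capture
    label: str           # the classification the model returned


def find_inconsistent(decisions):
    """Return {input_hash: {label: sorted model identities}} for inputs given more than one label."""
    by_input = defaultdict(lambda: defaultdict(set))
    for d in decisions:
        by_input[d.input_hash][d.label].add(d.model_identity)
    return {
        h: {label: sorted(ids) for label, ids in labels.items()}
        for h, labels in by_input.items()
        if len(labels) > 1  # consistent inputs are not reported
    }
```

Suppose a day's log holds `doc-a` as `medium` under one identity and `low` under another. The function reports `doc-a` with both identities attached, which matches the medium-risk/low-risk pattern described above. A document labelled the same way every time does not appear in the result.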

One practical response: record model identity (the model name plus the deployment timestamp your monitoring captures) against the request ID in every log record, so each output can be traced to the model state that produced it. It's a small change to your logging schema that makes vendor-side incidents dramatically easier to diagnose retrospectively. If you're hardening an existing Claude integration, our AI Automation Services covers production-grade instrumentation as part of every engagement: request tracing, model version logging, and alerting thresholds for output distribution shifts.
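Here is a minimal sketch of that schema change. It assumes your integration can read the model name for each response and has some deployment marker to pair it with. The function name, field names, and the `example-model` / `deploy-001` values are hypothetical placeholders, not part of any Anthropic API.

```python
import json
from datetime import datetime, timezone


def build_log_record(request_id: str, prompt_template_id: str, response_model: str,
                     deployment_marker: str, output_text: str) -> str:
    """Return one JSON log line that ties an output to the model identity that produced it."""
    record = {
        "request_id": request_id,
        "prompt_template_id": prompt_template_id,
        # Model identity: the model name reported for this response plus the
        # deployment marker your monitoring captures (a timestamp or build tag).
        "model_identity": f"{response_model}@{deployment_marker}",
        "logged_at": datetime.now(timezone.utc).isoformat(),
        # Keep the output itself so later stability comparisons have something to compare.
        "output": output_text,
    }
    return json.dumps(record, sort_keys=True)
```

For example, `build_log_record("req-123", "risk-classifier-v4", "example-model", "deploy-001", "Risk level: medium")` yields a line whose `model_identity` is `example-model@deploy-001`. After an incident, you can filter the log by that field.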

2. Your incident-comms template needs to exist before the event

Anthropic's own status posts during the April 23 window were prompt and specific. The gap for most AU enterprises isn't the vendor's comms. It's their own. An APRA-regulated firm running Claude as part of a customer-facing workflow may have obligations to notify APRA, and affected customers, within defined timeframes. If your incident-comms template was written for core banking outages and then applied wholesale to AI service degradation, it probably doesn't cover the right failure modes: partial model behavioural drift, inconsistent outputs on critical workflows, or a model update that changed behaviour without changing the model name.

Building an incident-comms template purpose-built for AI features costs roughly $30,000–$80,000 the first time, most of which is senior hours: the compliance lead, legal review, and a half-day scenarios workshop. That's not the cost of building the AI feature. That's the cost of running it in production at regulated scale. It pays back on the first event where you have something specific to send, rather than a vague platform-wide notice that leaves customers wondering whether the problem is their data or the platform.

3. Add behaviour-stability tests to your eval suite

Most eval suites test capability: does the model produce the right output for a given input? That dimension is necessary and most teams have at least some of it. What capability evals don't tell you is whether output is consistent across deployments, regions, and time. Stability evals test that second dimension. Run the same ten prompts today and again after your vendor's next maintenance window. If the output distribution has shifted beyond your threshold, that's either a vendor-side change or a regression in your prompt templates. A capability eval catches the regression you introduced. A stability eval is what surfaces a vendor-side configuration change that your monitoring otherwise can't distinguish from your own code bug.
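Some outputs are categorical: risk labels, routing decisions, yes/no answers. For those, "the output distribution has shifted beyond your threshold" can be made concrete with total variation distance between the label frequencies from the baseline run and the current run. Below is a minimal sketch. The 0.2 default threshold is an assumption to tune against your own tolerance, not a recommended value.

```python
from collections import Counter


def label_distribution(outputs):
    """Turn a non-empty list of categorical outputs (e.g. 'low', 'medium') into relative frequencies."""
    if not outputs:
        raise ValueError("need at least one output")
    counts = Counter(outputs)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def total_variation(p, q):
    """Total variation distance: half the sum of absolute probability differences (0 = same, 1 = disjoint)."""
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(label, 0.0) - q.get(label, 0.0)) for label in labels)


def has_shifted(baseline_outputs, current_outputs, threshold=0.2):
    """True when the current run's label distribution has moved further than `threshold` from baseline."""
    distance = total_variation(label_distribution(baseline_outputs), label_distribution(current_outputs))
    return distance > threshold
```

Take a baseline of 8 `medium` / 2 `low` and a post-maintenance run of 4 `medium` / 6 `low`. The distance is 0.4, so `has_shifted` returns `True`. Free-text outputs need a different comparison, for example mapping each response to a label or score first.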

Adding three stability tests is a two-hour sprint item. Pick three prompts your production system depends on. Run them on a schedule against a fixed baseline and log the outputs. Alert when output distribution shifts outside a defined threshold. When it fires, check Anthropic's status page before you check your own deployment logs. If your team doesn't have an eval harness set up yet, the AI Readiness Assessment covers what production infrastructure to build before you go live.
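The scheduled check could look something like the sketch below. It assumes:

- three core prompts, each with a single expected answer;
- a hypothetical `call_model` function that wraps your production call;
- exact-match agreement against the baseline answer as the stability measure, a simpler stand-in for a full distribution comparison.

The 5-sample and 80% defaults are placeholders.

```python
import json
from datetime import datetime, timezone
from typing import Callable


def agreement_rate(baseline_answer: str, samples: list[str]) -> float:
    """Fraction of samples that match the baseline answer after trimming whitespace."""
    if not samples:
        return 0.0
    target = baseline_answer.strip()
    return sum(s.strip() == target for s in samples) / len(samples)


def run_stability_check(prompts: dict[str, str], baseline: dict[str, str],
                        call_model: Callable[[str], str],
                        samples_per_prompt: int = 5, min_agreement: float = 0.8):
    """Sample each core prompt and compare it with its fixed baseline answer.

    Returns (records, alerts): JSON-ready log entries and human-readable alert strings.
    """
    run_at = datetime.now(timezone.utc).isoformat()
    records, alerts = [], []
    for prompt_id, prompt in prompts.items():
        samples = [call_model(prompt) for _ in range(samples_per_prompt)]
        rate = agreement_rate(baseline[prompt_id], samples)
        records.append({"run_at": run_at, "prompt_id": prompt_id,
                        "samples": samples, "agreement": rate})
        if rate < min_agreement:
            alerts.append(f"{prompt_id}: agreement {rate:.0%} is below {min_agreement:.0%}; "
                          "check the vendor status page before your own deployment logs")
    return records, alerts


def append_jsonl(path: str, records: list[dict]) -> None:
    """Append one JSON object per line so every scheduled run is kept for later comparison."""
    with open(path, "a", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")
```

Run `run_stability_check(prompts, baseline, call_model)` from your scheduler after each vendor maintenance window, then `append_jsonl("stability_log.jsonl", records)`. That gives you both the alert and the logged outputs. Each alert message carries the status-page-first reminder, so whoever is on call checks the vendor before the deployment logs.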

When these lessons don't apply yet

If you're pre-production (finishing a proof-of-concept, evaluating whether Claude fits the use case, running a sandbox pilot), the April 23 postmortem isn't your immediate priority. Cross-region drift and incident-comms templates are problems for teams that have solved the earlier gates: a repeatable process, clean enough data to run against a model, and a business case that holds up after integration costs are factored in.

In our 2025 client engagements, roughly 85% of the AI pilots we saw never reached production. Almost none failed at incident response. They failed because the process wasn't stable, the data wasn't ready, or the ROI case collapsed when integration costs were actually counted. Get those right first. Production resilience belongs in your roadmap, not your pilot retro.

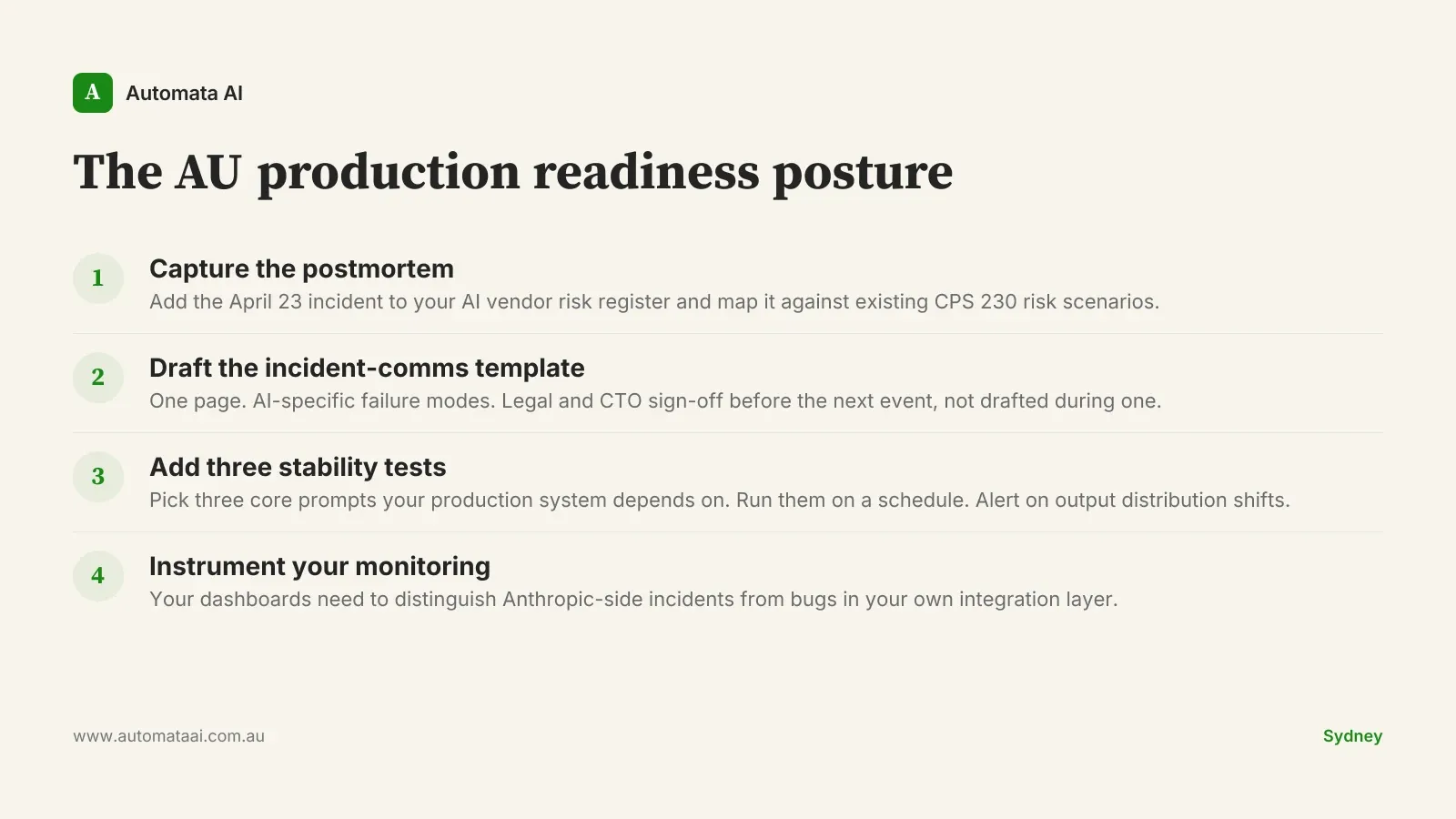

The AU production readiness posture

Capture the postmortem. Add the April 23 incident to your AI vendor risk register and map it against your existing CPS 230 risk scenarios this week.

Draft the incident-comms template. One page. AI-specific failure modes. Legal and CTO sign-off before the next event, not during it.

Add three stability tests. Three core prompts, run on a schedule, with an alert on output distribution shifts beyond a defined threshold.

Instrument your monitoring. Your dashboards should distinguish Anthropic-side incidents from bugs in your own integration layer before the next event hits.
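Here is a minimal sketch of that split. It assumes your integration layer records two things per error: the HTTP status returned by the model API (or `None` when no response came back), and the exception raised in your own code. The bucket names and the `ErrorEvent` shape are hypothetical. It only sees failures that surface as errors. Silent inconsistency of the kind seen on April 23 still needs the stability tests.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ErrorEvent:
    # Hypothetical shape of an error record from your integration layer.
    upstream_status: Optional[int]  # HTTP status from the model API; None if no response arrived
    exception_type: str             # exception class raised in your own code path


def attribute(event: ErrorEvent) -> str:
    """Bucket one error for dashboarding: 'vendor', 'request', or 'integration'."""
    status = event.upstream_status
    if status is not None and status >= 500:
        return "vendor"   # 5xx: server-side failure or overload at the provider
    if status is not None and 400 <= status < 500:
        return "request"  # 4xx: usually about what you sent (auth, rate limits, payload)
    # No upstream error status: parsing bugs, your own timeouts, network failures.
    # Timeouts and connection errors are ambiguous and may deserve their own bucket.
    return "integration"


def share_by_source(events: list[ErrorEvent]) -> dict[str, float]:
    """Fraction of errors in each bucket; a rising 'vendor' share points at the provider first."""
    counts = Counter(attribute(e) for e in events)
    total = sum(counts.values())
    return {source: n / total for source, n in counts.items()} if total else {}
```

Plotting `share_by_source` per time window is one way to get a dashboard panel that separates a vendor-side spike from a bug in your own integration layer.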

The businesses that handle vendor incidents well are the ones that built the posture before the event. Not as a compliance exercise. As the baseline for running AI in production at any meaningful scale. The April 23 window was 90 minutes. The next one may be longer. If your current Claude setup wouldn't have looked competent through it, that's a gap worth closing. Contact Automata AI to talk through what production readiness looks like for your team.