Your Claude agent nailed the demo. Nine tools, three workflows, every output formatted exactly as the downstream system expected. Three weeks into production, it fails on one in five requests, and your team is spending Monday mornings cleaning up the rest.

The answer is almost always the same. The tools were designed for a controlled environment, not for the chaos of real production data and edge cases.

Tool descriptions are the contract, not documentation

Claude decides which tool to call based on the description. Not the function name. Not the parameter types. The description. A vague description produces wrong tool selection; a precise description produces correct selection. This is not a model limitation. It's a design responsibility.

Every tool description should answer three questions: what does this tool do, what input format does it expect, and when should it be used instead of a close alternative. A description that says only Searches the customer database. Returns matching records loses to one that says Searches by name, email, or account ID. For partial name lookups without a known identifier, use search_customers_fuzzy instead. The second description tells Claude what to do when the first approach fails. That's the entire point.

Treat every tool description as an API contract. Because it is one.

Error messages belong in the reliability spec

A tool that returns HTTP 500 gives Claude three options: retry the same call, abandon the task, or find an alternative path that was never intended. The retry burns tokens. The abandonment fails the user. The hallucinated alternative creates downstream problems that are harder to trace than the original failure.

The correct design is error messages that give Claude a recovery path. A message like User not found. The account ID may be incorrect. Call search_users with a partial name to locate the correct record turns a dead end into a branch. Every tool in your MCP server or agent harness should have error messages written to this standard.

The harder Claude works to recover from a badly designed tool, the more tokens you burn proving the tool was designed badly. For Australian enterprise teams, that waste compounds quickly. A senior analyst spending three hours a week cleaning up agent errors that informative error messages would have prevented is costing $28,000 a year or more at $120 to $180 per hour fully loaded. That number assumes one analyst and one agent.

Multi-step tools cut the chained-failure surface

Three-tool chains have three failure points. One multi-step tool has one. When a workflow predictably requires the same three actions in the same order, collapsing them into a single tool is not premature optimisation. It's the obvious design from the start.

Fewer round trips also means fewer opportunities for the agent to drift between calls. Each tool invocation is a moment where the agent's intermediate state can be misread. The agent carries context from the previous call into the next, and if the previous call returned slightly unexpected output, that drift compounds. Compound that across a chain of five or six tools and you have a workflow where the final output can vary significantly from the intended action, and where debugging the divergence requires replaying every step.

The token cost difference compounds at scale. A moderate-volume Australian production agent running 10,000 operations per month at three-call chains rather than single multi-step tools burns $15,000 to $40,000 in additional annual API costs. That figure is before accounting for the elevated error rate that comes with longer chains.

Read-before-write for every state-changing tool

Any tool that changes production state should be preceded by a tool that confirms the current state. The agent calls get_customer_details before update_customer_record. It calls check_account_balance before initiate_transfer. Each read costs one extra call. Each wrong write can cost considerably more.

For clients in Australian financial services, this pattern is non-negotiable. APRA CPS 230 treats operational resilience as a board-level obligation, and agents that modify production data without confirming the current state first represent exactly the kind of uncontrolled operational failure the standard is designed to prevent.

Read-before-write also creates a natural audit trail. Every write call is preceded by a read log entry capturing what state existed before the change. For any organisation subject to regulatory scrutiny, that provenance record is worth more than the cost of the extra API call.

When not to apply these patterns

These patterns have real implementation costs. Multi-step tools require more upfront design work. Read-before-write doubles the call count for high-stakes workflows. If your Claude integration handles genuinely low-stakes tasks (drafting internal summaries, answering employee questions, generating first-draft documents that go to a human editor before they touch anything real), not every pattern applies with equal weight.

The threshold is consequence. If a wrong agent action requires a human to manually correct production state, the tools driving it deserve all four patterns. If a wrong agent action means a draft email gets deleted and rewritten in thirty seconds, one or two patterns will do. Start with the high-consequence tools. You can always add structure to low-stakes tools later, and you rarely need to.

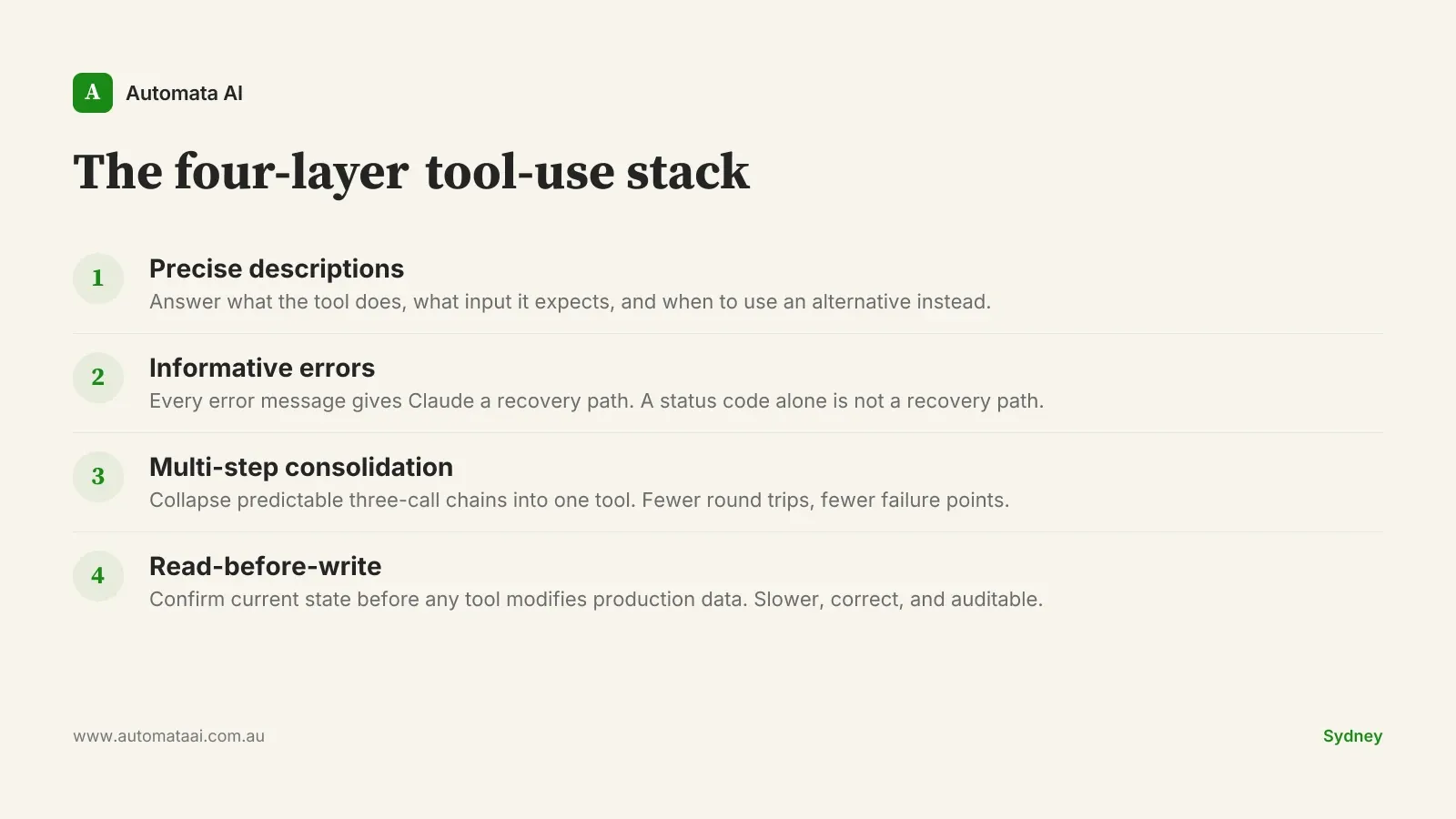

The four-layer tool-use stack: a production audit

The four patterns together form what we call the four-layer tool-use stack: precise descriptions, informative errors, multi-step consolidation, and read-before-write confirmation. Each layer addresses a distinct failure mode. Teams that apply all four consistently see lower incident rates within their first production quarter. If you want to map where your current tools sit against each layer, our AI Readiness Assessment covers the gap analysis before it becomes an incident log.

Audit every tool description. Does it answer what the tool does, what input format it expects, and when to use an alternative instead? If not, rewrite it before the next production deployment.

Rewrite every unhelpful error message. If the error string does not give Claude a recovery path, it is not yet part of the reliability spec.

Identify your three most common tool chains. If the agent calls the same sequence consistently, consolidate those calls into a single multi-step tool.

Add read-before-write guards to every state-changing tool. One extra read call is a cheap trade for a correct write and an audit trail.

Production reliability is not a Claude problem. It is a tool design problem. The four-layer stack gives you a concrete place to start. If you want that foundation in place from day one rather than retrofitted after the first incident, our AI automation services covers what a production-ready engagement looks like.