The chunk is there. You indexed the corpus, set up the embeddings, and wired Claude to your internal documents. The user types their question. Claude says it found nothing relevant. The right answer exists in your knowledge base.

Retrieval missed it.

What contextual retrieval actually does

Standard RAG embeds raw text chunks stripped of surrounding context. A compliance team at a Sydney financial services firm might index three hundred APRA prudential standards. A chunk from CPS 230 reads: the board must review this annually. Without context, that sentence could belong to dozens of different documents. The embedding captures the words, not the meaning. The retrieval system returns the wrong chunk, or nothing at all. For Australian regulated enterprises, that is not a minor inconvenience. The compliance analyst sees a gap. The support team gives the wrong answer to a client query.

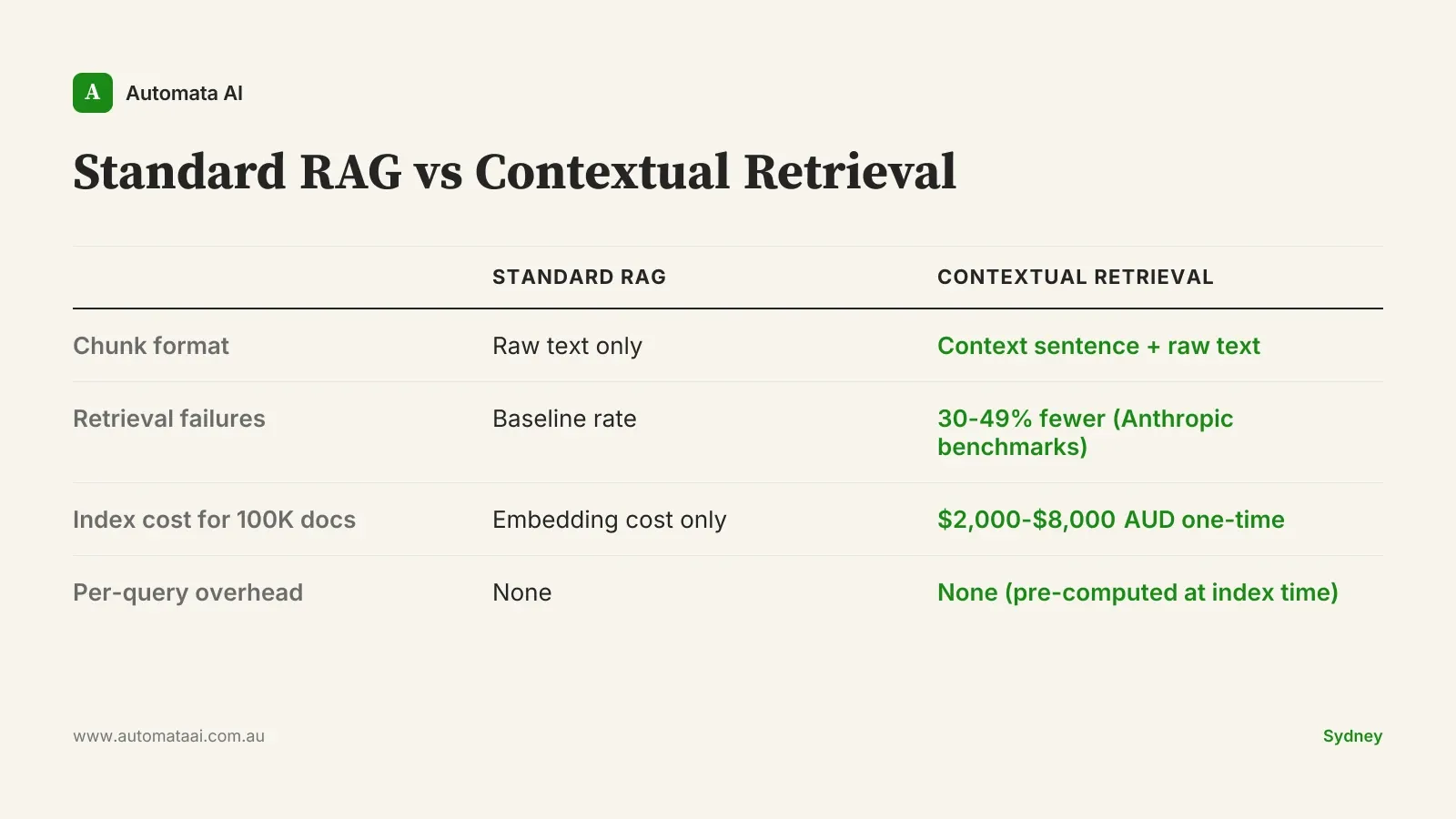

Contextual retrieval prepends each chunk with a short context paragraph before embedding. Claude itself generates the context: one or two sentences explaining what the chunk is about and where it fits in the document. That CPS 230 chunk becomes: From APRA CPS 230, Operational Risk Management, Section 4.2, covering board governance obligations. The retrieval signal sharpens. Anthropic's own testing showed a 30-49% reduction in failed retrievals on standard benchmarks.

Three patterns for Australian knowledge bases

Not every corpus needs the same implementation. Three patterns translate this technique to the knowledge bases Australian enterprises actually run in production.

1. Generate-once, embed-once at indexing time

When you first index your knowledge base, generate context for every chunk in a single pass before embedding. This is the right approach for stable corpora: legislation, clinical protocols, product manuals, filed regulatory submissions. Best fit for financial services policy libraries, healthcare clinical guidelines, and government legislation updated annually at most. The content changes rarely, so the upfront cost pays forward for months or years.

The cost is front-loaded. For a moderately sized Australian enterprise corpus of 100,000 documents in a mid-market financial services policy library, expect $2,000-$8,000 AUD in Anthropic compute for the context-generation pass. Against a compliance analyst at $120-$150/hr fully loaded, that is a rounding error. Prompt caching reduces the indexing compute cost by 60-80% on large runs. This pattern is the default starting point in our AI Automation Services for new knowledge-base builds.

2. Generate-on-update for changing corpora

For knowledge bases that evolve continuously, generating context for the full corpus on every update is wasteful. Integrate context generation into your document ingestion pipeline as a CI step. When a document is added or modified, generate context only for the new or changed chunks. If you are already rebuilding embeddings on document updates, this is one extra API call per chunk in the same pipeline. The index stays current without reprocessing documents that have not changed.

This pattern works especially well for AUSTRAC guidance and AML/CTF compliance teams tracking frequent regulatory amendments, and for government departments whose policy library evolves with each budget cycle. Australian financial services teams can find more on how this maps to their regulatory context on our financial services industry page.

3. Hybrid retrieval with Claude as ranker

Run standard semantic retrieval and contextual retrieval in parallel. Return both result sets to Claude and let Claude rank the combined candidates before generating the final answer. This is the most expensive pattern, typically 2-3x the per-query cost of a standard pipeline, but it produces the biggest quality jump on corpora where retrieval failures carry real cost. The ranking step is where Claude earns its keep: it can weigh recency, source authority, and topical fit in ways that vector similarity alone cannot.

For Australian professional services firms running Claude over client files, or healthcare providers querying clinical protocols, the maths usually justify it. If a retrieval failure causes a compliance oversight costing $200,000, spending an extra $0.04 per query to halve the failure rate is obvious. Before defaulting to this pattern, check your baseline recall with an AI Readiness Assessment. It is over-engineered for corpora where baseline recall is already above 90%.

When contextual retrieval is the wrong answer

Don't implement this because it sounds right. Most teams treat contextual retrieval as a configuration change. It isn't. It is an architectural decision with real cost implications that compound at scale. If your retrieval failure rate is already below 5%, the improvement won't justify the added complexity. If your corpus is under 5,000 documents, better chunking or a higher-quality embedding model will likely produce equivalent results for a fraction of the cost.

Contextual retrieval also cannot fix a structurally poor knowledge base. If your documents have no consistent heading structure, no document metadata, and no clear section boundaries, Claude will generate vague context sentences that don't sharpen retrieval meaningfully. The technique amplifies a well-structured corpus. It cannot compensate for one that was never structured to be retrieved. Fix the corpus first.

The failure mode we see most often: teams implement contextual retrieval before measuring their baseline. They spend $4,000-$6,000 on compute, observe improved outputs, and have no way to confirm the improvement justified the cost. Sample 100-200 historical user queries. Grade retrieval success against known ground truth before changing anything. That number is the only honest baseline.

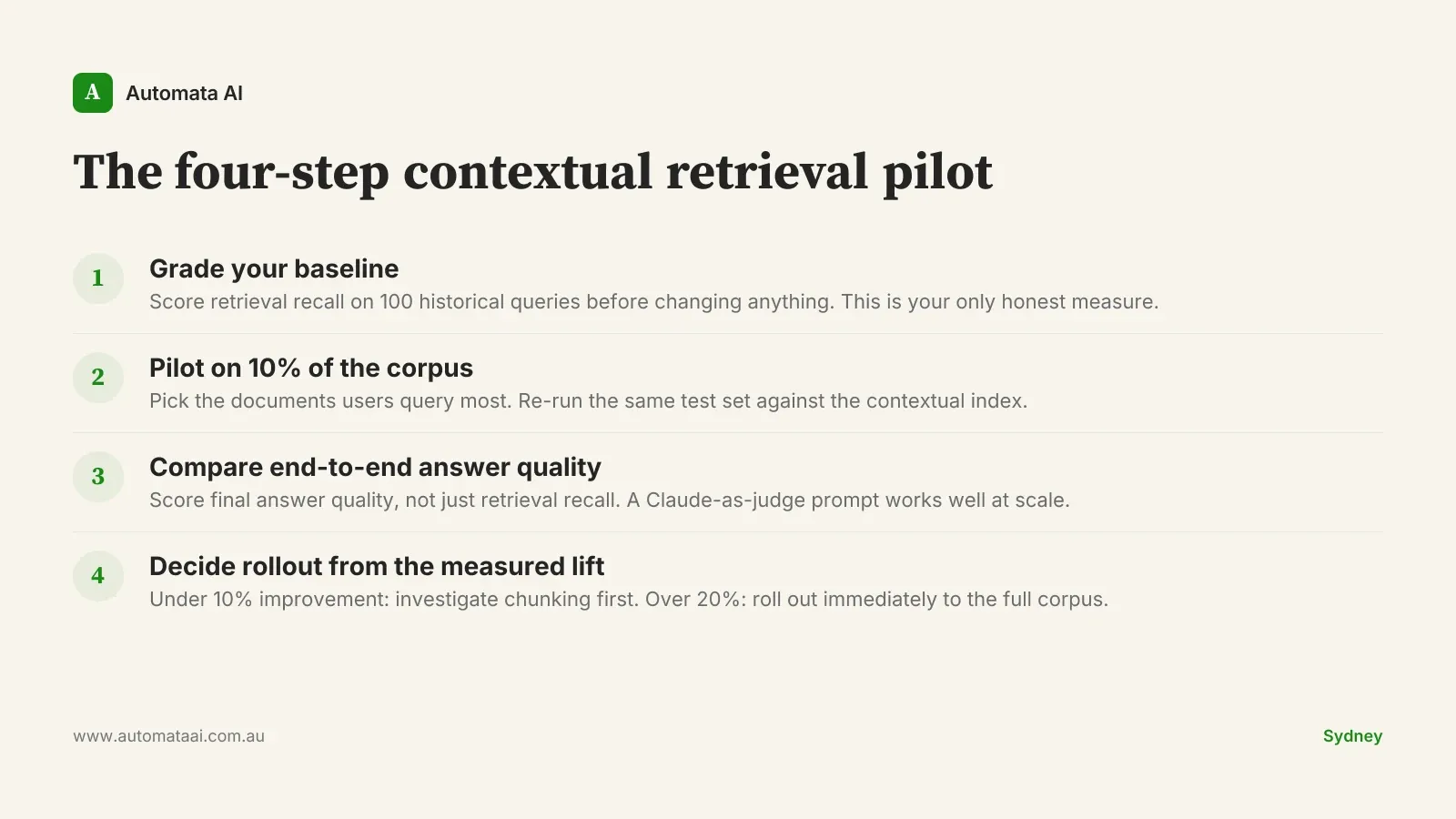

The four-step contextual retrieval pilot

If you decide to run a pilot, this is the sequence we have used across our 2025 Australian client engagements. We call it the four-step contextual retrieval pilot.

Grade your baseline. Sample 100 historical user queries. Score whether the right chunk appeared in the top-5 retrieved results. That number is your retrieval recall baseline before any changes.

Pilot on 10% of the corpus. Pick the documents your users query most. Generate context for that slice, re-embed, and run your test set again.

Compare end-to-end answer quality. Retrieval recall matters, but so does final answer quality. Use a human reviewer or a Claude-as-judge prompt to score both pipelines on the same query set.

Decide rollout pace from the measured lift. Under 10% improvement in answer quality: investigate chunking strategy first. Over 20%: roll out immediately and extend to the full corpus.

The businesses that get the most from contextual retrieval treat it as an engineering problem, not a configuration toggle. Measure the baseline. Run the pilot. Quantify the lift. The only missing piece, for most Australian knowledge-base teams, is the discipline to measure before deploying.