Your production agent is billing more than you planned. Not on infrastructure. On extended thinking calls that nobody adjusted after the pilot went live.

The Claude think tool gives Claude a private reasoning scratchpad before it generates a response. That scratchpad doesn't reach the user, but it does cost input and output tokens. At 50,000 API calls per month with extended thinking enabled across the board, those costs add up to a budget line that most teams never explicitly approved.

For Australian teams running agents at volume, the guidance here is clear: extended thinking improves response quality substantially on some workload types and adds latency and cost with no quality lift on others. The decision is per-workload, not a global setting.

What the think tool actually does

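When you enable the think tool, Claude reasons through the problem in a private scratchpad before writing its response. Anthropic describes two related mechanisms: extended thinking, which you switch on per request with a token budget, and a separate "think" tool that Claude can call partway through a tool-use task. Both spend billable tokens on reasoning the end user doesn't see, so this article treats them together. It's not a separate model or a different mode. It's the same Claude, given space to work through multi-step reasoning before committing to an answer. The scratchpad tokens cost money and the thinking time adds latency. On complex tasks, that investment produces measurably better output. On simple tasks, Claude would have arrived at the same answer without it.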

There's no configuration toggle that's right for every workload in your stack. The think tool needs to be set per call type, and the correct setting varies by task complexity, stakes, volume, and latency tolerance.
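One way to express per-call-type settings is a small lookup table that builds the request parameters for each workload. The sketch below assumes the Anthropic Messages API's `thinking` request parameter (`{"type": "enabled", "budget_tokens": ...}`). The workload names, token budgets, and model identifier are placeholders for illustration, not recommendations.

```python
from dataclasses import dataclass
from typing import Any, Optional

# Placeholder: substitute the model ID your stack actually uses.
MODEL = "your-claude-model-id"


@dataclass(frozen=True)
class WorkloadConfig:
    max_tokens: int
    thinking_budget: Optional[int]  # None means extended thinking is off


# Hypothetical call types; the budgets are illustrative only.
WORKLOADS = {
    "invoice_extraction": WorkloadConfig(max_tokens=1024, thinking_budget=None),
    "support_chat": WorkloadConfig(max_tokens=1024, thinking_budget=None),
    "contract_review": WorkloadConfig(max_tokens=16000, thinking_budget=8000),
}


def build_request(workload: str, prompt: str) -> dict[str, Any]:
    """Return keyword arguments for a Messages API call for one workload."""
    cfg = WORKLOADS[workload]
    request: dict[str, Any] = {
        "model": MODEL,
        "max_tokens": cfg.max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if cfg.thinking_budget is not None:
        # The thinking budget has to fit inside max_tokens.
        if cfg.thinking_budget >= cfg.max_tokens:
            raise ValueError("thinking_budget must be smaller than max_tokens")
        request["thinking"] = {
            "type": "enabled",
            "budget_tokens": cfg.thinking_budget,
        }
    return request
```

With the official Python SDK, the result would typically be passed along as `client.messages.create(**build_request("contract_review", text))`. Keeping the table in one place means a change to a workload's setting is a one-line edit that shows up in code review.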

Where extended thinking earns its cost

Three workload categories produce measurable quality gains that justify the additional token cost:

Multi-step reasoning under constraints. Tasks requiring Claude to hold multiple conditions at once: contract clauses under APRA CPS 230 requirements, compliance analysis with overlapping policy exclusions, project planning against six or seven simultaneous constraints. These see 15 to 35 percent quality improvement with extended thinking enabled, per Anthropic's engineering documentation. The scratchpad lets Claude track constraint satisfaction across reasoning steps without losing earlier conditions.

Code review and bug analysis. Extended thinking improves Claude's ability to trace subtle bugs that require holding multiple context items simultaneously: a variable defined in one function, mutated in a second, misused in a third. On straightforward syntax errors, the lift is minimal. On logic bugs across complex codebases, it's material.

High-stakes, low-frequency decisions. If a task runs once per day and a wrong answer triggers a real-world action, the additional $0.05 to $0.15 per call is the cheapest quality insurance you'll find. Document summaries that inform legal position, classification decisions that route work to regulators, extractions that feed reporting to ASIC: these are worth the cost.

Where extended thinking is wasted spend

Routine extraction and classification. Pull the invoice date, extract the vendor name, classify this support ticket into one of eight categories. Claude handles these reliably without a reasoning scratchpad. Extended thinking takes you from 97 percent accuracy to 97.5 percent and doubles the per-call cost. Don't enable it.

Conversational agents. Users feel the latency. A three-to-four-second delay in a customer-facing chat interface drives abandonment, particularly in the financial services tools many Sydney and Melbourne teams have deployed in the last 18 months. The quality lift on conversational tasks is minimal; the UX cost is real and measurable.

Tasks your evals already pass. If your evaluation suite shows 95 percent or better quality without extended thinking, enabling it typically reaches 96 percent and doubles token cost on that workload. Unless your quality bar is set by a regulator, which changes the calculation entirely, the marginal lift doesn't justify it.

Teams that enable extended thinking globally because it seems safer are usually still running a pilot mindset in a production environment. In production, safer is the wrong frame. Cost-per-correct-call is the right frame.
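As a rough illustration of that frame, cost-per-correct-call can be taken as per-call cost divided by accuracy. The dollar figures below are invented for the example. The accuracy values are the 97 and 97.5 percent from the extraction case above.

```python
def cost_per_correct_call(cost_per_call: float, accuracy: float) -> float:
    """Average spend needed to obtain one correct answer."""
    if not 0 < accuracy <= 1:
        raise ValueError("accuracy must be in (0, 1]")
    return cost_per_call / accuracy


# Hypothetical per-call costs: thinking doubles the cost of each call.
baseline = cost_per_correct_call(0.02, 0.97)
with_thinking = cost_per_correct_call(0.04, 0.975)

print(f"baseline:      ${baseline:.4f} per correct call")
print(f"with thinking: ${with_thinking:.4f} per correct call")
print(f"ratio:         {with_thinking / baseline:.2f}x")
```

On these numbers, a correct answer costs nearly twice as much with thinking enabled. This simple ratio ignores the downstream cost of a wrong answer, which is what changes the picture for high-stakes workloads.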

The cost math for Australian production teams

A production agent running 50,000 API calls per month with extended thinking enabled across the board can add $6,000 to $14,000 AUD per month to your Anthropic bill compared to targeted, workload-by-workload enabling. At 500,000 monthly calls, the same per-call overhead comes to $60,000 to $140,000 AUD per month in unnecessary spend. That's the delta between a team that audited its workloads and one that didn't.
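The arithmetic behind figures like these is linear in call volume. Here is a minimal sketch, assuming the overhead scales per call and using the 50,000-call range above to back out an implied per-call overhead. The function names and the 200,000-call comparison volume are illustrative.

```python
def implied_overhead_per_call(monthly_overhead_aud: float, monthly_calls: int) -> float:
    """Back out the extra cost per call from a monthly overhead figure."""
    return monthly_overhead_aud / monthly_calls


def monthly_overhead(monthly_calls: int, overhead_per_call_aud: float) -> float:
    """Extra monthly spend, assuming overhead scales linearly with volume."""
    return monthly_calls * overhead_per_call_aud


low = implied_overhead_per_call(6_000, 50_000)    # 0.12 AUD per call
high = implied_overhead_per_call(14_000, 50_000)  # 0.28 AUD per call

for calls in (50_000, 200_000):
    low_month = monthly_overhead(calls, low)
    high_month = monthly_overhead(calls, high)
    print(
        f"{calls:>7,} calls/month: AUD {low_month:,.0f} to {high_month:,.0f} per month, "
        f"{low_month * 12:,.0f} to {high_month * 12:,.0f} per year"
    )
```

Substituting your own call volume and a per-call overhead measured from your invoices gives a first estimate before any deeper modelling.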

The reverse matters equally. Disabling extended thinking on a high-stakes workload to save $200 per month, then experiencing a 10 percent quality drop on decisions that matter, is a false economy. A financial services firm that cut extended thinking from its contract-review agent and started missing compliance clauses is a preventable story. Before tuning either direction, run your actual call volumes through the ROI Calculator to model the cost-quality trade-off for your specific stack.

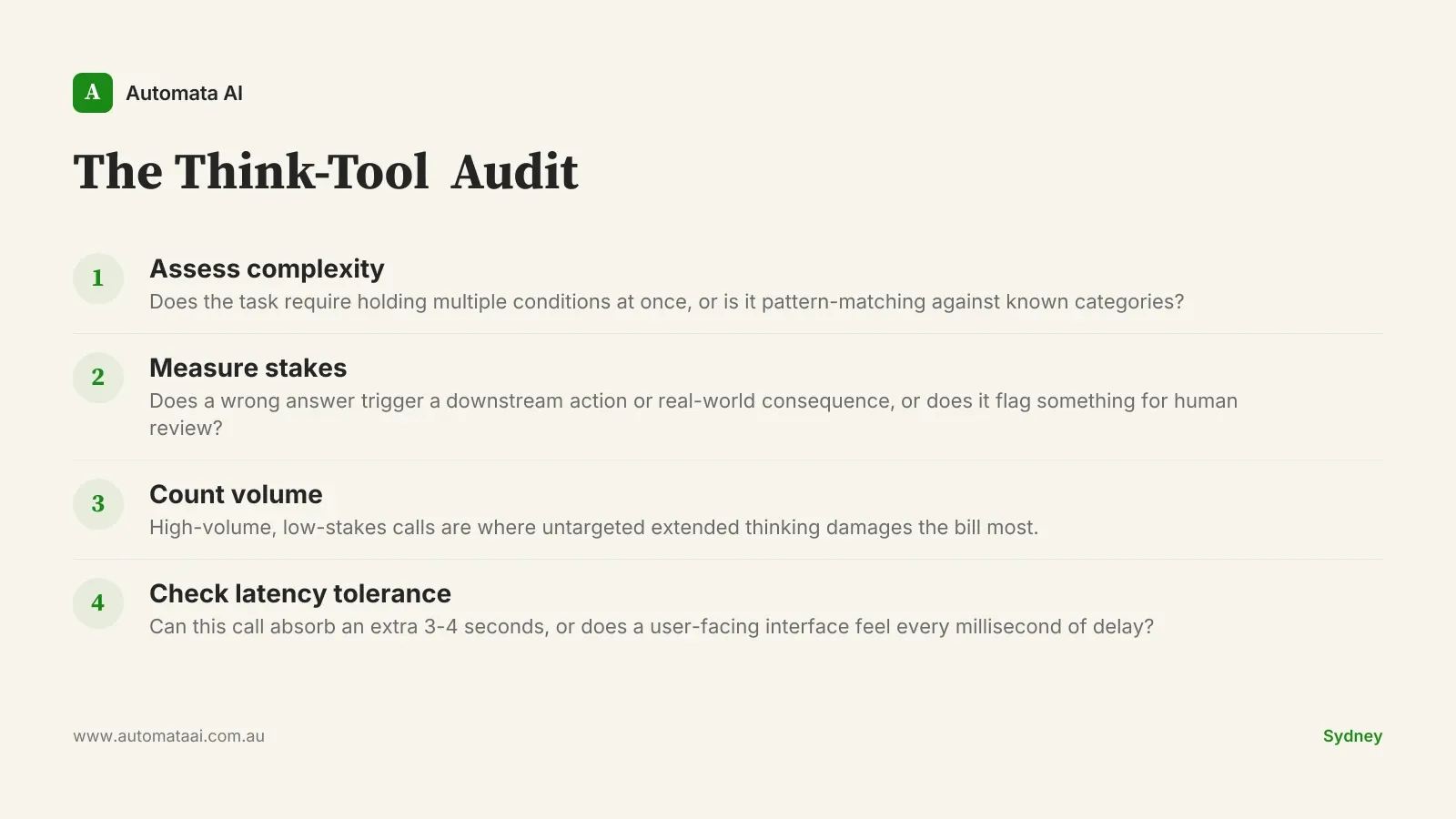

The think-tool audit

Before touching your think-tool configuration, inventory every Claude call in your production stack. For each call type, assess four dimensions:

Complexity. Does this task require Claude to hold multiple conditions simultaneously, or is it pattern-matching against known categories?

Stakes. What is the cost of a wrong answer? Does it trigger a downstream action, or does it flag something for human review?

Volume. How many calls per month? High-volume, low-stakes workloads are where untargeted extended thinking damages the bill most.

Latency tolerance. Is this a user-facing conversational flow or an async batch process running overnight?

Enable extended thinking on high-complexity, high-stakes, latency-tolerant, lower-volume workloads first. Measure the quality lift against your eval baseline before enabling on anything else. Disable on any workload where the measured lift is under three percent relative to the baseline.
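The audit can be captured as a small decision helper. The sketch below is one possible encoding, not a prescribed method. The field names, the 10,000-call volume cut-off, and the "pilot" label are assumptions. Only the three percent relative-lift threshold comes from the text above.

```python
from dataclasses import dataclass
from typing import Optional

MIN_RELATIVE_LIFT = 0.03    # from the audit rule: disable under three percent
HIGH_VOLUME_CALLS = 10_000  # assumed cut-off for "lower-volume"


@dataclass
class CallType:
    name: str
    high_complexity: bool
    high_stakes: bool
    monthly_calls: int
    latency_tolerant: bool
    baseline_score: Optional[float] = None  # eval score without thinking
    thinking_score: Optional[float] = None  # eval score with thinking


def recommend(ct: CallType) -> str:
    """Return 'enable', 'disable', or 'pilot' for one call type."""
    if ct.baseline_score is not None and ct.thinking_score is not None:
        if ct.baseline_score <= 0:
            raise ValueError("baseline_score must be positive")
        lift = (ct.thinking_score - ct.baseline_score) / ct.baseline_score
        return "enable" if lift >= MIN_RELATIVE_LIFT else "disable"
    # No measurement yet: pilot only where all four dimensions line up.
    first_wave = (
        ct.high_complexity
        and ct.high_stakes
        and ct.latency_tolerant
        and ct.monthly_calls < HIGH_VOLUME_CALLS
    )
    return "pilot" if first_wave else "disable"


print(recommend(CallType("contract_review", True, True, 900, True)))  # pilot
print(recommend(CallType("ticket_triage", False, False, 40_000, True, 0.97, 0.975)))  # disable
```

Measured eval scores take precedence over the four dimensions here, so a workload that looks like a strong candidate on paper is still switched off if the lift does not show up in the numbers.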

Australian teams running agents across multiple workload types, particularly in financial services and professional services, where the same agent might handle routine extraction and high-stakes analysis in the same day, almost always benefit from per-workload configuration. It's one of the first things we address in our AI automation services for teams that have moved from pilot to production.

The Anthropic bill is a lagging indicator. By the time the cost is visible, the configuration decision is weeks old. If you're not sure which workloads in your stack warrant extended thinking, an AI Readiness Assessment maps your agent architecture against your actual workload types and identifies which ones are burning budget without returning quality.