The bank's Claude pilot is working. The mortgage operations team loves it. Legal has seen the demo. Risk and compliance are next in the queue.

That's where most pilots stall. Not from technical failure. From a review process that has never formally evaluated an AI agent and has no playbook for doing it.

Getting the compliance frame right is the difference between a six-month review and a six-month deployment. Banks that understand what CPS 230 actually requires move faster. Banks that treat it as a box-ticking exercise get sent back to start.

What CPS 230 actually demands of a Claude deployment

APRA's operational resilience standard came into force on 1 July 2025. It was not written for AI. It was written for operational risk, and it reaches every material service dependency an authorised deposit-taking institution holds. A Claude agent embedded in core banking workflows can be a material service under that definition. If it fails and that failure disrupts banking operations or customer-facing processes, CPS 230's incident reporting obligations apply.

Four obligations apply directly to any Claude deployment classified as material.

Operational risk management. Identify every material service, map single points of failure, and document the controls. An AI model accessed via API is material if its failure disrupts operations or customer-facing processes.

Business continuity planning. The bank must demonstrate it can survive the loss of any one critical service for at least four hours. A DR document is not sufficient. Tested failover is.

Incident management. Set clear notification timelines to APRA and the board for when a material service fails or is compromised. AI downtime counts.

Third-party risk assessment. The assessment extends to downstream providers and the underlying compute. The API call to Anthropic is the visible dependency. The compute stack beneath it is the invisible one.

APRA's assessors have started asking about the full dependency chain in AI-related examinations. An institution that maps Anthropic as a vendor but cannot name Anthropic's underlying compute provider has an incomplete third-party risk register. The standard does not have an exemption for 'we didn't think about the layer below'.
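
A minimal sketch of how a risk team might check its own register for that gap. The `Provider` structure and the placeholder names are hypothetical, not a register schema from APRA or any vendor. The one design point is that "not yet mapped" (`None`) is kept distinct from "mapped, nothing further" (an empty list).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Provider:
    """One entry in a hypothetical third-party risk register."""
    name: str
    # None means the downstream layer has not been mapped yet.
    # An empty list means it was mapped and nothing sits beneath it.
    depends_on: Optional[list["Provider"]] = None


def unmapped_providers(root: Provider) -> list[str]:
    """Walk the dependency chain and name every provider with an unmapped layer below it."""
    gaps = []
    stack = [root]
    while stack:
        node = stack.pop()
        if node.depends_on is None:
            gaps.append(node.name)
        else:
            stack.extend(node.depends_on)
    return gaps


# Placeholder names only; a real register would carry the actual legal entities.
register = Provider("model API vendor", depends_on=[
    Provider("cloud platform", depends_on=[]),
    Provider("underlying compute provider"),  # layer below not yet mapped
])
print(unmapped_providers(register))  # ['underlying compute provider']
```

Any name this returns is a line in the register that an examiner can still ask about.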

How Claude maps into the CPS 230 framework

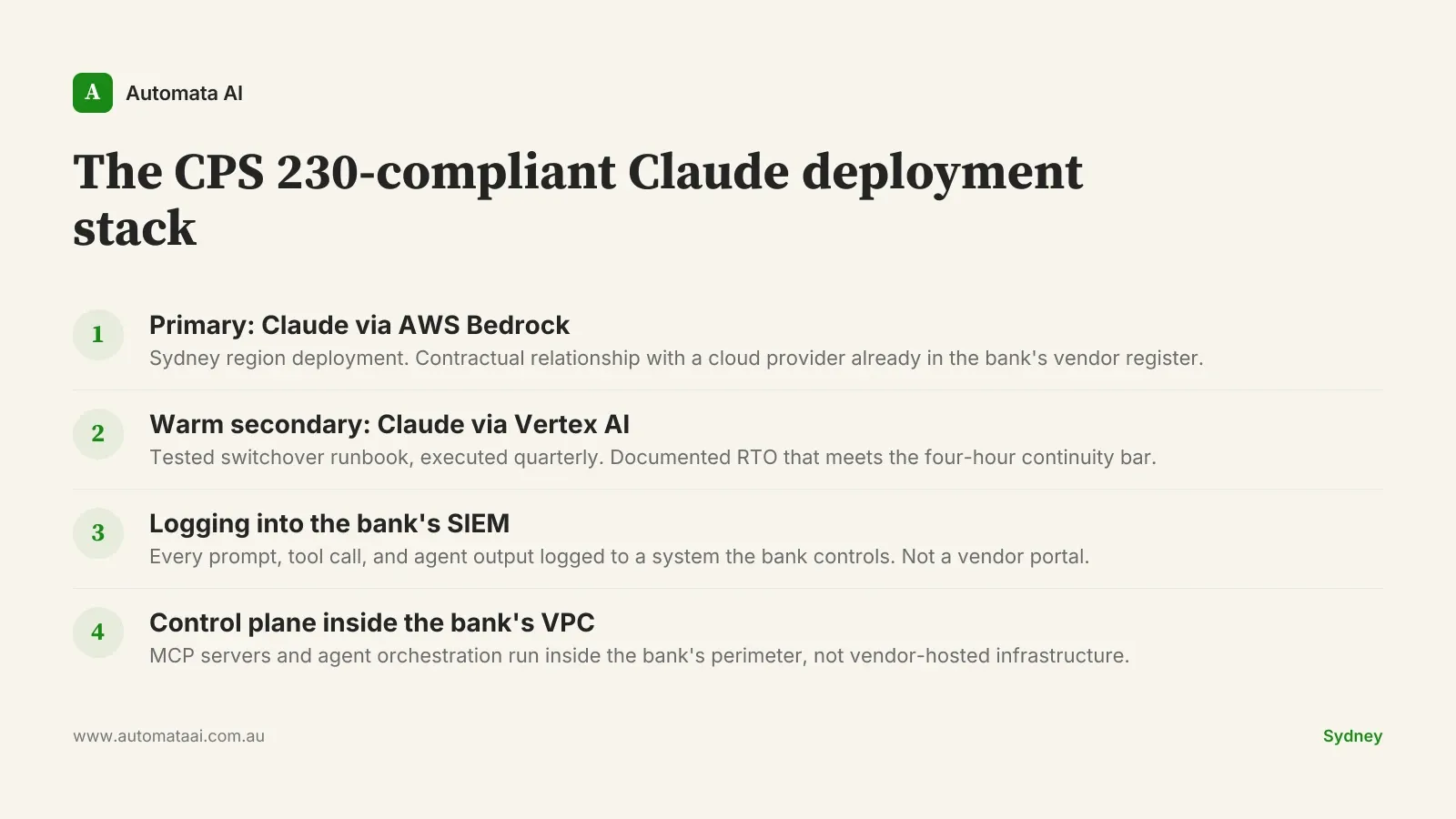

Anthropic is a service provider. The bank's third-party risk team needs a formal assessment covering materiality, criticality, concentration risk, and exit capability. Concentration risk is the most common gap. Two deployment paths address it without replacing the model.

Claude via AWS Bedrock (primary). A contractual relationship with AWS, already in most banks' vendor registers. Sydney region available. Satisfies data residency requirements for standard domestic use cases.

Claude via Google Cloud Vertex AI (warm secondary). A tested switchover that demonstrates the bank can reach the four-hour continuity bar even if the primary path fails entirely.
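
The primary and secondary pattern can be sketched in a few lines. This is an illustration under stated assumptions, not a production client: each `invoke` callable is a hypothetical wrapper the bank would write around its own Bedrock or Vertex AI client, and no real SDK call is shown. The `record` hook is also an assumption. It stands in for whatever captures switchover evidence.

```python
import time
from typing import Callable, Sequence


class AllPathsFailed(RuntimeError):
    """Raised when every configured model path has failed."""


def call_with_failover(
    prompt: str,
    paths: Sequence[tuple[str, Callable[[str], str]]],
    record: Callable[[dict], None] = lambda event: None,
) -> tuple[str, str]:
    """Try each (name, invoke) path in order and return (path_name, response)."""
    errors = []
    for name, invoke in paths:
        started = time.monotonic()
        try:
            response = invoke(prompt)
        except Exception as exc:  # a real wrapper would catch narrower error types
            errors.append(f"{name}: {exc}")
            record({"path": name, "ok": False, "error": str(exc)})
            continue
        record({"path": name, "ok": True, "seconds": time.monotonic() - started})
        return name, response
    raise AllPathsFailed("; ".join(errors))
```

A quarterly test could pass a deliberately failing primary and keep the recorded events as evidence that the secondary path answered.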

The control plane matters as much as the model path. MCP servers, agent orchestration, and any Claude Code flows should run inside the bank's VPC. Prompts and tool calls should feed directly into the bank's existing SIEM, not a vendor-side store the bank cannot query on demand.

Most vendor-supplied AI architectures are built around the model and forget the bank's logging requirements. A bank examiner asking for an audit trail of every agent action in Q3 needs to pull that from a system the bank controls, not from a vendor portal with a 90-day retention window.
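
One way to picture the bank-side audit trail is a single structured line per agent action, written by the control plane before anything leaves the VPC. The field names below are illustrative assumptions, not a SIEM schema, and the forwarding step into the SIEM is left out because it depends on the product in use.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(agent_id: str, action: str, payload: dict, actor: str) -> str:
    """Build one JSON line describing a single agent action."""
    body = json.dumps(payload, sort_keys=True)
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,    # e.g. "prompt", "tool_call", "response"
        "actor": actor,      # the human or service that triggered the agent
        "payload": payload,
        # A digest gives reviewers a compact value to compare across systems.
        "payload_sha256": hashlib.sha256(body.encode("utf-8")).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    agent_id="mortgage-ops-agent",   # hypothetical identifier
    action="tool_call",
    payload={"tool": "lookup_application", "application_id": "EXAMPLE-1"},
    actor="analyst@example.com",
)
print(line)
```

Because the record is built and stored on the bank's side, retention is set by the bank's own policy rather than by a vendor portal.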

The CPS 230-compliant deployment stack for Claude agents

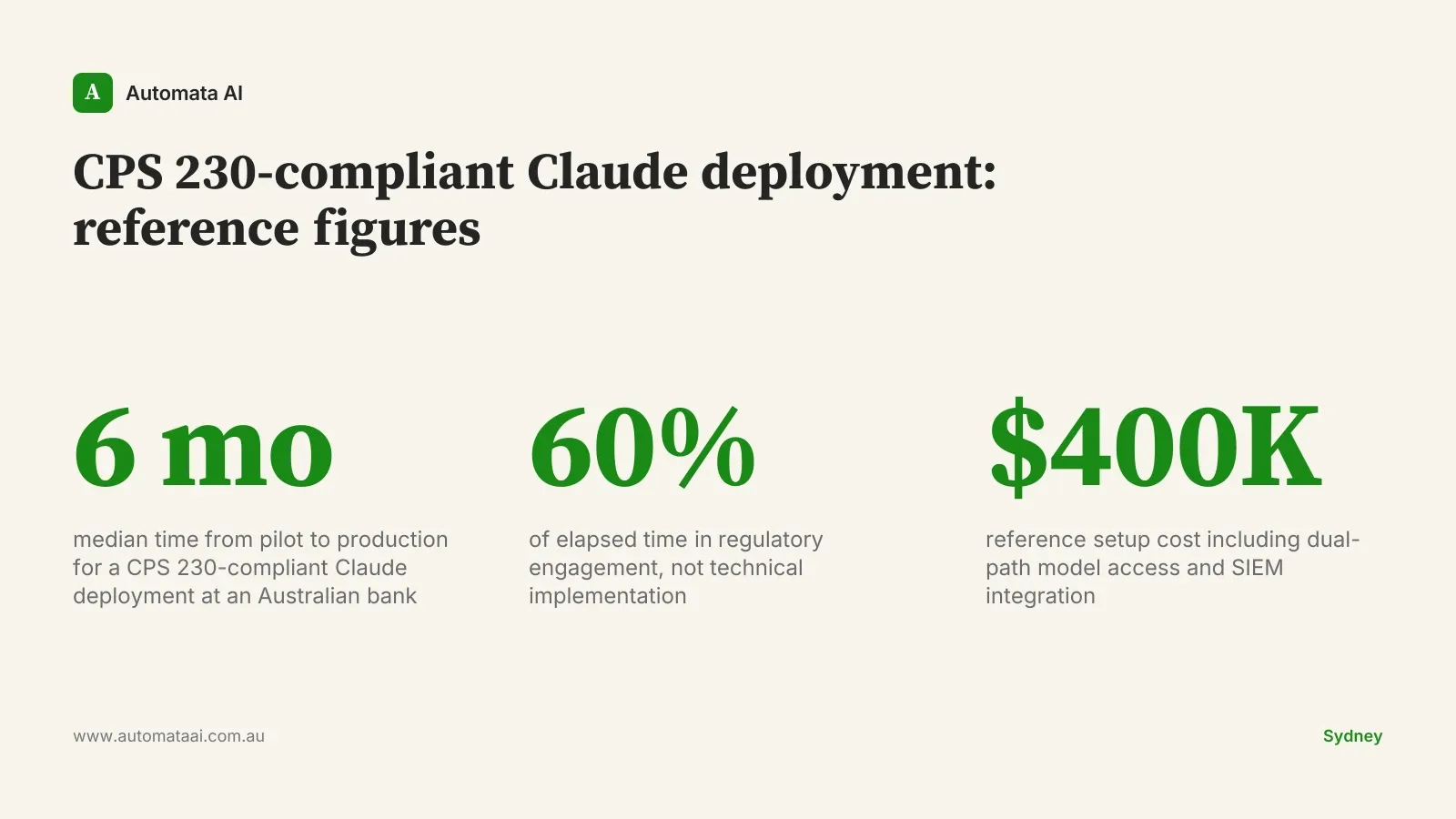

The framework above names the four components of a reference APRA-aligned Claude deployment. Building and validating this stack, including the Bedrock and Vertex integrations, SIEM wiring, and VPC control plane, sits within the scope of our AI Automation Services for Australian banks. The setup cost for a reference deployment runs around $400,000, with roughly $180,000 a year to operate.

The setup cost is dominated by logging and SIEM integration, not model access. AWS Bedrock and Google Cloud Vertex AI both charge around $15 per million tokens for Claude, with the exact rate depending on the model and on whether the tokens are input or output. The compute is cheap. The compliance engineering is not.
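
The arithmetic behind that claim is short. The three constants come from the figures above. The monthly token volume is a hypothetical number chosen only to show scale, not a measurement from any deployment.

```python
SETUP_COST = 400_000              # reference deployment setup, from the text
ANNUAL_OPERATING_COST = 180_000   # yearly operating cost, from the text
PRICE_PER_MILLION_TOKENS = 15     # approximate Claude price, from the text


def total_cost(years: int) -> int:
    """Setup plus operating cost over the given number of years."""
    return SETUP_COST + ANNUAL_OPERATING_COST * years


def annual_token_spend(tokens_per_month: int) -> float:
    """Yearly model spend at a flat per-token price."""
    return tokens_per_month / 1_000_000 * PRICE_PER_MILLION_TOKENS * 12


print(total_cost(3))                    # 940000
print(annual_token_spend(50_000_000))   # 9000.0 (hypothetical 50M tokens a month)
```

At that assumed volume, a year of model access costs a small fraction of a single year's operating budget.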

The three questions APRA will ask

Every APRA examination of a bank running a live Claude deployment will return to three questions. If the answers are weak, the deployment does not reach production. If they are strong, even a small pilot clears review quickly.

Who owns the operational risk register entry for the AI service? Not who owns AI strategy broadly. Who has signed the risk acceptance for this specific system, with their name against the entry in the register.

How did you test the failover? A documented runbook is necessary but not sufficient. APRA wants evidence of a tested switchover, run quarterly, with results on file.

How do you log and review agent actions? The answer must name the specific SIEM, the retention period, and the cadence of human review for flagged outputs.
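
The three questions translate naturally into a pre-review checklist. The sketch below is one hypothetical way to encode it. The field names are assumptions, and "quarterly" is approximated as a test within the last 92 days.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class AiServiceEvidence:
    risk_owner: Optional[str]            # named person who signed the risk acceptance
    last_failover_test: Optional[date]   # date of the most recent tested switchover
    siem_name: Optional[str]
    log_retention_days: Optional[int]
    review_cadence_days: Optional[int]   # how often flagged outputs get human review


def evidence_gaps(e: AiServiceEvidence, today: date) -> list[str]:
    """Return the questions that cannot yet be answered with evidence on file."""
    gaps = []
    if not e.risk_owner:
        gaps.append("no named owner on the risk register entry")
    # Assumption: a test older than 92 days no longer counts as this quarter's.
    if e.last_failover_test is None or today - e.last_failover_test > timedelta(days=92):
        gaps.append("no failover test on file for the last quarter")
    if not e.siem_name or not e.log_retention_days or not e.review_cadence_days:
        gaps.append("logging answer is missing SIEM, retention, or review cadence")
    return gaps
```

An empty list does not mean the review will pass. It means each of the three questions at least has a concrete answer behind it.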

A tier-two Australian bank running this compliance shape moved a Claude-backed mortgage operations workflow from pilot to production in six months. Regulatory engagement was the long pole, accounting for roughly 60 percent of the work. The technical implementation, concentrated on the logging integration and failover testing, accounted for the other 40 percent or so. The switchover runbook was tested twice before APRA accepted the evidence.

When the full compliance shape isn't necessary

Not every Claude deployment inside an Australian bank needs the full CPS 230 treatment. The threshold is materiality. A Claude deployment used by two analysts to draft internal summaries, with no integration into customer-facing systems and no access to material client data, is unlikely to trigger a material service classification.

A Claude agent with write access to loan origination workflows, capable of generating customer-facing documents without a human review gate, almost certainly does. The line is not always obvious, and APRA's guidance does not draw it for you.

If the annual cost of the AI service falls below $200,000 and the bank can demonstrate it could be switched off this afternoon, and left off for at least four hours, without disrupting operations, the materiality case is weak. That is the right place to start. Running an AI Readiness Assessment against a specific workflow will produce a materiality determination in two weeks rather than six months of internal debate.
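
The rules of thumb in this section can be written down as a first-pass screen. This is a sketch of the heuristics above, not a materiality determination, and the field names are hypothetical. The thresholds are the ones stated in the text.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    annual_cost: float
    tolerable_outage_hours: float         # how long it can stay off without disrupting operations
    writes_to_core_workflows: bool        # e.g. write access to loan origination
    customer_output_without_review: bool  # customer-facing documents with no human gate


def screen(d: Deployment) -> str:
    """Classify a deployment using the heuristics described in the text."""
    if d.writes_to_core_workflows and d.customer_output_without_review:
        return "likely material"
    if d.annual_cost < 200_000 and d.tolerable_outage_hours >= 4:
        return "weak materiality case"
    return "needs assessment"


# Two analysts drafting internal summaries, no customer-facing integration.
print(screen(Deployment(40_000, 24, False, False)))   # weak materiality case
# Agent writing to loan origination with no review gate.
print(screen(Deployment(150_000, 1, True, True)))     # likely material
```

Anything that lands in "needs assessment" is where the line is not obvious and a documented judgment call is required.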

A big-bang, bank-wide Claude deployment without a compliance shape costs more to unwind than it would have cost to build correctly the first time. Start contained, measure what works, and bring the compliance shape with you when you scale.

What moves to Claude, what stays with the team

Drafting, summarising, cross-referencing, and explaining. These move to Claude. A compliance analyst who spends 45 minutes producing a briefing note by hand can produce the same document in under 10 minutes with Claude handling the research synthesis.

Decisions, attestations, customer communications, and regulator-facing voice. These stay with the qualified human. Every Claude output should carry a citation back to source data so the reviewer can verify it in under two minutes per document.

This is not a technology constraint. It is a regulatory one. ASIC's guidance on AI in financial advice and APRA's operational risk framework both point to human accountability for consequential decisions. A Claude-generated document presented to a customer as the bank's position requires a licensed professional to have reviewed and accepted it. Claude drafts. The bank's qualified person signs.
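
That split can be enforced as a release gate rather than left as a convention. The sketch below assumes a hypothetical `Draft` structure in which each section lists its source references and the reviewer's name is recorded on acceptance. None of these names come from a real system.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DraftSection:
    text: str
    source_refs: list[str] = field(default_factory=list)  # citations back to source data


@dataclass
class Draft:
    sections: list[DraftSection]
    reviewed_by: Optional[str] = None  # licensed reviewer who accepted the draft


def can_release(draft: Draft) -> bool:
    """A draft is releasable only if every section is cited and a reviewer has signed."""
    every_section_cited = all(s.source_refs for s in draft.sections)
    return bool(draft.sections) and every_section_cited and draft.reviewed_by is not None


draft = Draft([DraftSection("Rate change summary.", ["policy-doc-12#s3"])])
print(can_release(draft))   # False: no reviewer has signed yet
draft.reviewed_by = "Licensed Reviewer"
print(can_release(draft))   # True
```

The gate does not judge whether the citations are correct. That check stays with the reviewer.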

Staff retention is a sleeper benefit in teams that get this split right. The documentation overhead in financial services compliance is not trivial: when a compliance analyst leaves because 60 percent of the job is repetitive drafting, the replacement cost runs around $90,000 fully loaded. That benefit is hard to model upfront and material once the rollout settles.

The banks that reach production fastest in 2026 are not the ones that move fastest on technology. They are the ones that answered the compliance questions before the technical team started building. Pick one workflow, determine its materiality, and design the compliance shape before writing a line of integration code.