Somewhere on a Pilbara iron ore operation right now, a haul truck is three work orders into a failure mode that happened twice before. The third event is logged. The first two are in the CMMS. Nobody connected them.

That's not a staffing problem. It's a pattern recognition problem at scale. A reliability team managing hundreds of pieces of equipment across a large operation cannot manually read across thousands of work orders looking for signal. Claude can.

What the workflow does with your CMMS data

Most CMMS platforms (Maximo, SAP PM, Infor) are excellent at storing maintenance records. They are not built to reason across them. A haul truck that fails three times in eight months for different stated causes might have the same root cause. The CMMS stores three events.

The workflow ingests from three sources:

CMMS work orders. Every job card, close-out note, and parts request, cited by work order number.

OEM telemetry. Fault codes, hours in service, and temperature and pressure readings from onboard diagnostics.

Field engineer notes. The unstructured observations that rarely make it into a formal root-cause analysis but often contain the actual diagnosis.

Claude reads across all three. It identifies recurring failure patterns by fleet and equipment class, drafts root-cause analyses ready for the monthly reliability review, and ranks preventive maintenance opportunities by expected downtime cost. Not by scheduled interval. By what failure is likely to cost the most if it goes unaddressed.

Every output cites the specific work orders and telemetry events that support it. The reliability engineer can verify any claim in the draft RCA in under two minutes.

The cost case for reliability

Unplanned equipment downtime at a typical Australian iron ore mine runs around $90,000 per hour. That varies by site, commodity, and where the equipment sits in the production chain, but it is a reasonable baseline for Pilbara and Bowen Basin operations.

A Claude-assisted reliability workflow that prevents two hours of unplanned downtime per month delivers around $2.16 million a year. The build is around $180,000 of platform engineering, plus around $60,000 a year to run. Payback is under two months. Run the numbers for your site in the ROI Calculator before building the business case internally.

The integration pattern

The Claude layer connects to the CMMS and OEM telemetry systems in read-only mode. It does not write back. Maintenance schedules, parts orders, and work order creation remain controlled by the reliability team in their existing systems.

This is a deliberate design choice, not a technical limitation. Reliability teams in Australian mining operations carry regulatory responsibility for equipment safety. Mine safety legislation in Queensland and Western Australia places that responsibility on named individuals. Any system that writes to the CMMS without direct human oversight is a governance problem before it is a technology question.

The Claude layer surfaces. The reliability team decides.

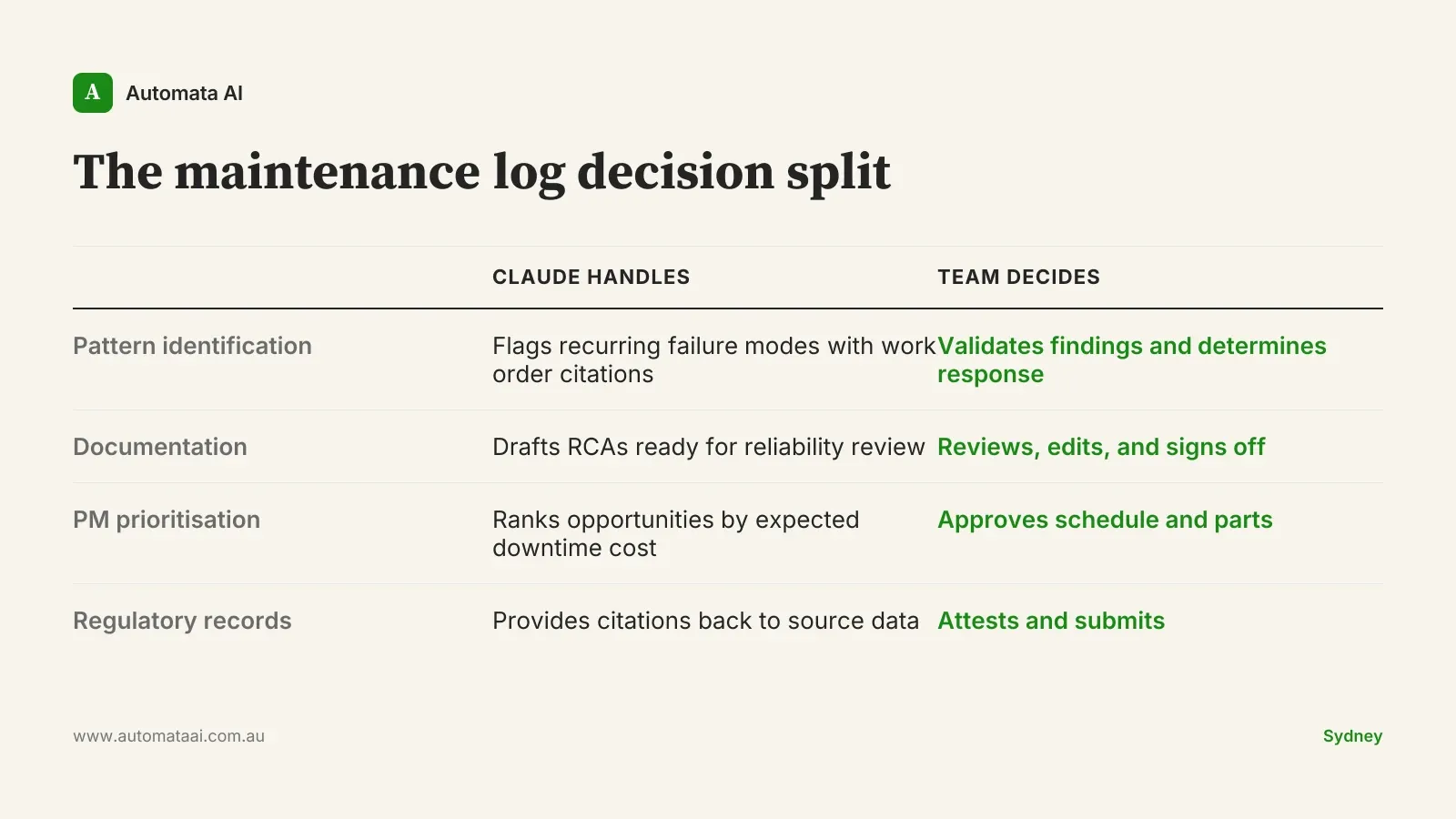

The maintenance log decision split

The split that produces durable adoption in regulated Australian sectors is consistent across sites and commodities. Our AI Automation Services cover the full implementation pattern, but the shape is as follows.

What moves to Claude

Cross-referencing work orders. Claude reads across CMMS records, OEM telemetry, and field notes to find patterns a single engineer reviewing one work order at a time would miss.

Pattern identification by fleet. Recurring failure modes flagged by equipment class, with citations to the specific work orders that support each finding.

RCA drafting. Root-cause analyses ready for the monthly reliability review, with every claim traceable to source data.

PM opportunity ranking. Preventive maintenance candidates ranked by expected downtime cost, not by scheduled interval.

What stays human

Approving and scheduling maintenance actions. The reliability engineer reviews every Claude-surfaced recommendation before it becomes a work order.

Regulatory attestation. Any document submitted to a regulator or insurer carries a qualified engineer's signature, not an AI system's output.

Parts and contractor decisions. Procurement and contractor engagement stay with the reliability team.

OEM communications. Warranty discussions, technical service bulletins, and site visit coordination.

Every Claude output carries a citation back to source data. The human reviewer can verify any artefact in under two minutes before signing off.

The autonomy trap

Mining ops teams that scope this project as autonomous maintenance scheduling hit a wall fast. The language that kills it is phrases like "automate the scheduling" or "remove the engineer from the approval loop." Those phrases accurately describe what the scoping document says, and they are exactly what stalls the project in change control, usually within four weeks of the first governance review.

Drop the autonomy ambition. Build a decision-support layer instead. The reliability team will adopt it faster, trust it more, and extend it to other workflows once they have seen it work on one.

A Bowen Basin coal operation running this pattern reported a 14 percent reduction in unplanned downtime in the first year. The reliability team owned the workflow from day one. IT implemented it. That distinction mattered for adoption and for staying out of regulatory trouble.

The tell is simple: if your AI vendor is promising to automate away the reliability engineer's sign-off, find a different vendor.

Rollout discipline

Start with one site and one reliability engineer over six to eight weeks. Measure three things: how often the team acts on Claude's surfaced recommendations, how much editing the drafted RCAs need before they are usable, and whether the engineer wants to expand scope at the end of the pilot.

Australian mining operators that rolled this out across all sites simultaneously reported adoption below 25 percent six months in. Operators that started with one site reported above 70 percent fleet-wide adoption within a year. The AI Readiness Assessment is the fastest way to identify which site and which reliability team should go first.

There is a benefit that doesn't appear in the business case before the rollout settles. Teams using Claude well report that the documentation overhead stops being the dominant part of the reliability engineer's day. Pattern recognition is handled. The engineer is doing engineering. Retention improves, which matters in an industry where experienced reliability staff are hard to replace and FIFO conditions make every quality-of-work-life improvement count.

Pick one site. One engineer. Six weeks. Measure what moves.