Your engineering team has fifteen Skills configured in Claude Code. Last week, seven of them were invoked. The week before, the same seven.

The other eight Skills are sitting idle. Not because engineers don't want to use them. Because they forgot the Skills exist.

Skills are inventory. Discovery is distribution.

Every internal product lives or dies by whether the people it's meant to help can find it when they need it. Most engineering teams treat Skill deployment as a one-time event: configure the YAML, post in the #ai-tools channel, move on.

That's not a distribution strategy. That's a launch event with no follow-through.

Based on our 2025 engagements with Australian engineering teams, average Skill utilisation sits below 10% for teams that ship Skills without a discovery plan. Teams that treat discovery as a parallel workstream alongside Skill development consistently hit above 70%. Same Skills. Different distribution.

Why engineers don't find Skills on their own

The failure modes cluster into four patterns, and none of them are laziness:

Inline prompts have momentum. An engineer writing inline prompts for six months has a working reflex. A new Skill has to interrupt that reflex at exactly the right moment.

Skill names are technical, not situational. A Skill called 'generate-migration' is invisible to an engineer thinking 'I need to update the schema.' The mental mapping doesn't happen automatically.

The catalogue lives somewhere nobody goes. A wiki page, a Confluence doc, a pinned Slack message from three weeks ago. None of these are visible when an engineer is in flow.

Announcements are a single event. The effective half-life of a Slack announcement is about four hours. A Skill posted on a Tuesday is forgotten by Friday.

None of these are hard to fix. But they all require deliberate, repeated action rather than a one-time configuration.

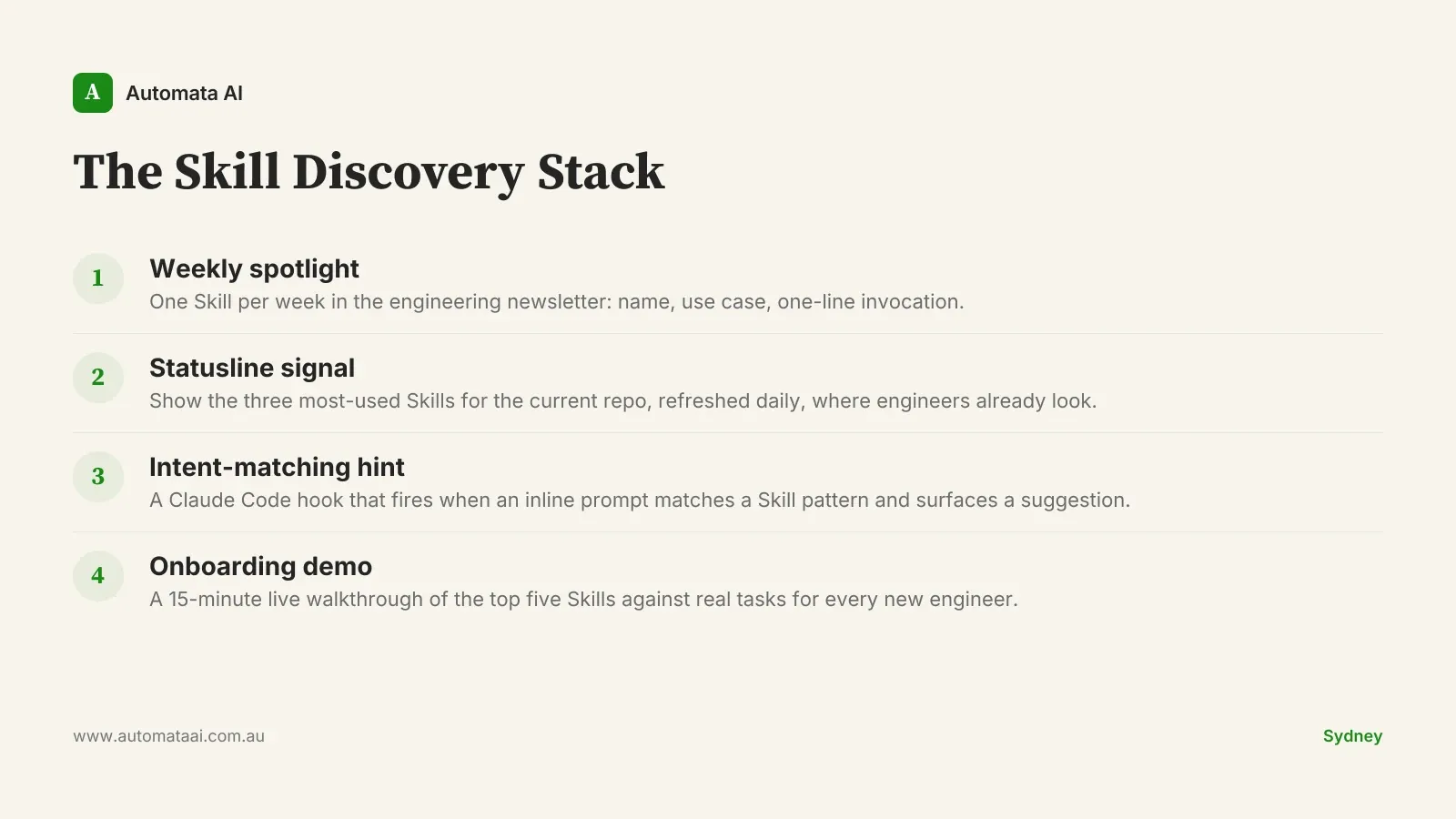

The Skill Discovery Stack

Four mechanisms, applied together, reliably move utilisation from below 10% to above 70%:

Weekly spotlight. One Skill per week in the engineering newsletter: name, use case, one-line invocation. Engineers don't need to memorise a full catalogue. They need one new thing per week until the pattern sticks.

Statusline signal. A statusline component showing the three most-used Skills for the current repository, refreshed daily. Engineers who can see what their teammates are invoking are far more likely to try it themselves.

Intent-matching hint. A Claude Code hook that detects inline prompts matching common Skill patterns and surfaces a 'did you mean /skill-name?' suggestion. This intercepts the habit at the moment of execution, which is the only moment that matters.

Onboarding demo. A 15-minute live walkthrough of the top five Skills against real tasks for every new engineer. Not a wiki page. An actual session in the IDE before their first pull request.
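As a rough illustration of the statusline signal, the sketch below picks the three most-invoked Skills for one repository and formats them as a single line. The `Invocation` record and the idea of an invocation log are assumptions made for the example; where that log comes from, and how the text is handed to the statusline, depends on your own setup.

```python
from collections import Counter
from typing import Iterable, NamedTuple


class Invocation(NamedTuple):
    """One logged Skill invocation (hypothetical record shape)."""
    repo: str
    skill: str


def top_skills(log: Iterable[Invocation], repo: str, n: int = 3) -> list[str]:
    """Return the n most-invoked Skill names for one repository."""
    counts = Counter(entry.skill for entry in log if entry.repo == repo)
    # Sort by count (descending), then by name, so ties render in a stable order.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [name for name, _ in ranked[:n]]


def statusline_text(log: Iterable[Invocation], repo: str, n: int = 3) -> str:
    """Format the top Skills as one short line suitable for a statusline."""
    names = top_skills(log, repo, n)
    if not names:
        return "Skills: none used yet"
    return "Top Skills: " + " ".join(f"/{name}" for name in names)
```

Running this once a day against the previous week's log would match the daily refresh described above.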

None of these require new infrastructure. They require engineering team leadership to treat Skill discovery as a product problem, not a documentation problem. Automata AI ships these as configurable patterns through our AI Automation Services for Australian engineering teams.
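The intent-matching hint can be sketched as a small matcher that maps situational phrases to Skill names. Everything here is illustrative: `generate-migration` is the example name used earlier, `review-pr` is hypothetical, and the phrase patterns are assumptions a team would replace with its own. Wiring the function into a Claude Code hook is deliberately left out, because the hook's input and output format should come from the Claude Code hooks documentation rather than from this sketch.

```python
import re
from typing import Optional

# Hypothetical mapping from Skill name to the phrases engineers actually type.
SKILL_PATTERNS: dict[str, list[str]] = {
    "generate-migration": [
        r"\bupdate the schema\b",
        r"\bmigration\b",
        r"\b(add|drop) (a )?column\b",
    ],
    "review-pr": [
        r"\breview (this|my) (pr|pull request)\b",
    ],
}


def suggest_skill(prompt: str) -> Optional[str]:
    """Return a 'Did you mean /skill-name?' hint, or None if nothing matches."""
    text = prompt.strip()
    # The engineer is already invoking a slash command, so stay quiet.
    if text.startswith("/"):
        return None
    for skill, patterns in SKILL_PATTERNS.items():
        if any(re.search(p, text, flags=re.IGNORECASE) for p in patterns):
            return f"Did you mean /{skill}?"
    return None
```

Keeping the patterns situational ("update the schema") rather than technical ("generate-migration") is the point: the hint bridges the gap between how engineers think about the task and what the Skill is called.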

What the economics look like

A Sydney engineering team of 60 with 20 Skills and weak discovery sees roughly 1,100 weekly Skill invocations across the team. The same team, same Skills, with all four Discovery Stack mechanisms running: roughly 4,200 weekly invocations.

At a conservative 8-minute saving per invocation and a fully loaded engineer rate of $120/hr, the 3,100 additional weekly invocations are worth about 413 engineer-hours, or roughly $49,600, a week. Over a 52-week year that is around $2.6 million. The Discovery Stack itself (spotlights, a statusline update, a hook, and an onboarding script) costs perhaps $15,000 in implementation time.

The input assumptions are the variables: your team size, your invocation rate, your fully loaded rate. Model it in our ROI Calculator if you want AUD figures for your own context.
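For a quick back-of-envelope version of that model, a sketch like the following is enough. It is not the ROI Calculator itself: the function names are made up for the example, and the 52-week year is an assumption you may want to lower to account for leave and holidays.

```python
def annual_value(
    baseline_weekly: int,
    improved_weekly: int,
    minutes_saved: float,
    hourly_rate: float,
    weeks_per_year: int = 52,  # assumption: no allowance for leave or holidays
) -> float:
    """Yearly value of the additional Skill invocations, in the rate's currency."""
    extra_invocations = improved_weekly - baseline_weekly
    # Convert minutes saved into hours, then price them at the loaded rate.
    return extra_invocations * minutes_saved * hourly_rate * weeks_per_year / 60


def net_first_year(value: float, implementation_cost: float) -> float:
    """Value left after paying for the discovery mechanisms once."""
    return value - implementation_cost
```

Calling `annual_value(1100, 4200, 8, 120)` plugs in the team figures quoted above; swap in your own team size, invocation counts, and rate to see how sensitive the result is to each input.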

When not to build a discovery layer

If your team has fewer than eight Skills, you don't need a Discovery Stack. Running four coordinated mechanisms for half a dozen Skills costs more than it returns. Keep it simple.

The threshold where discovery investment starts to pay is roughly:

Eight or more Skills in active use.

A team of 20 or more engineers sharing a Skills catalogue.

At least two Skill categories (code generation and review, for instance), because cross-category discovery is where the largest gains sit.

Below those numbers, a shared /skills command with a short description for each Skill is probably enough. Our AI Readiness Assessment can help identify which processes are worth building Skills for before you invest in the discovery layer.

One thing to avoid: shipping the discovery mechanisms and then ignoring the data they generate. The teams that sustain 70%+ utilisation iterate. Spotlights with low click-through get replaced. Skills that generate hints but no invocations get reviewed or retired. That discipline is ongoing, not a one-time configuration.

Three numbers to track from day one

The three are: weekly Skill invocations per engineer; hint acceptance rate, meaning how often engineers follow a 'did you mean?' suggestion through to an invocation; and weekly spotlight click-through. These are leading indicators. They tell you whether each mechanism is working before the outcome shows up in time-saved metrics.
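One way to compute these from weekly counts is sketched below. The `WeeklyCounts` fields are hypothetical names for figures you would pull from your own logs and newsletter tooling, and click-through is assumed here to mean clicks divided by recipients.

```python
from dataclasses import dataclass


@dataclass
class WeeklyCounts:
    """Raw weekly counts (hypothetical field names)."""
    engineers: int
    invocations: int
    hints_shown: int
    hints_accepted: int
    spotlight_recipients: int
    spotlight_clicks: int


def _ratio(numerator: int, denominator: int) -> float:
    # Guard against empty weeks so the report never divides by zero.
    return numerator / denominator if denominator else 0.0


def leading_indicators(counts: WeeklyCounts) -> dict[str, float]:
    """Return the three leading indicators for one week."""
    return {
        "invocations_per_engineer": _ratio(counts.invocations, counts.engineers),
        "hint_acceptance_rate": _ratio(counts.hints_accepted, counts.hints_shown),
        "spotlight_click_through": _ratio(
            counts.spotlight_clicks, counts.spotlight_recipients
        ),
    }
```

Tracking the same three values week over week matters more than any single reading, since the trend shows whether a mechanism is gaining or losing traction.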

The teams that sustain high utilisation treat their Skill catalogue like a product backlog. Skills that earn their keep stay. Skills that don't get retired or reworked. A quarterly review is the operating rhythm that makes the compound return real rather than theoretical.

Pick one process your engineers prompt manually more than five times a day. Build a Skill for it. Then build the discovery layer. The first step creates the inventory. The second step makes it count.