Your marketing team is asking for ChatGPT. Your knowledge workers are already on Claude licenses you bought six months ago. Your CFO wants to know whether you're actually paying for both. This conversation is happening in Sydney and Melbourne mid-market offices this quarter, and the answer is simpler than the vendor pitches make it sound, but only if you're willing to be honest about what your teams actually produce day to day.

What ChatGPT Images 2.0 actually delivers

OpenAI integrated image generation directly into the ChatGPT chat surface. For end users, three things changed concretely.

First, in-conversation image generation. Describe what you need, get an image, ask for revisions, get a better one. No switching to a separate image tool, no export step, no context lost between sessions.

Second, visual reasoning on uploaded images. Upload a product photo, a competitor's ad, or a screenshot of a chart. Ask questions, get analysis that combines what's in the image with broader business context.

Third, output quality suited to real business use. Resolution and fidelity are high enough for social posts, presentation assets, and internal materials. This isn't a toy.

For a marketing or creative team producing visual content daily, that is a genuine workflow improvement over switching between a chat tool and a separate image generation platform. The integrated surface reduces friction and speeds iteration. That matters when your team is running three active campaigns and needs to test multiple creative directions before Thursday.

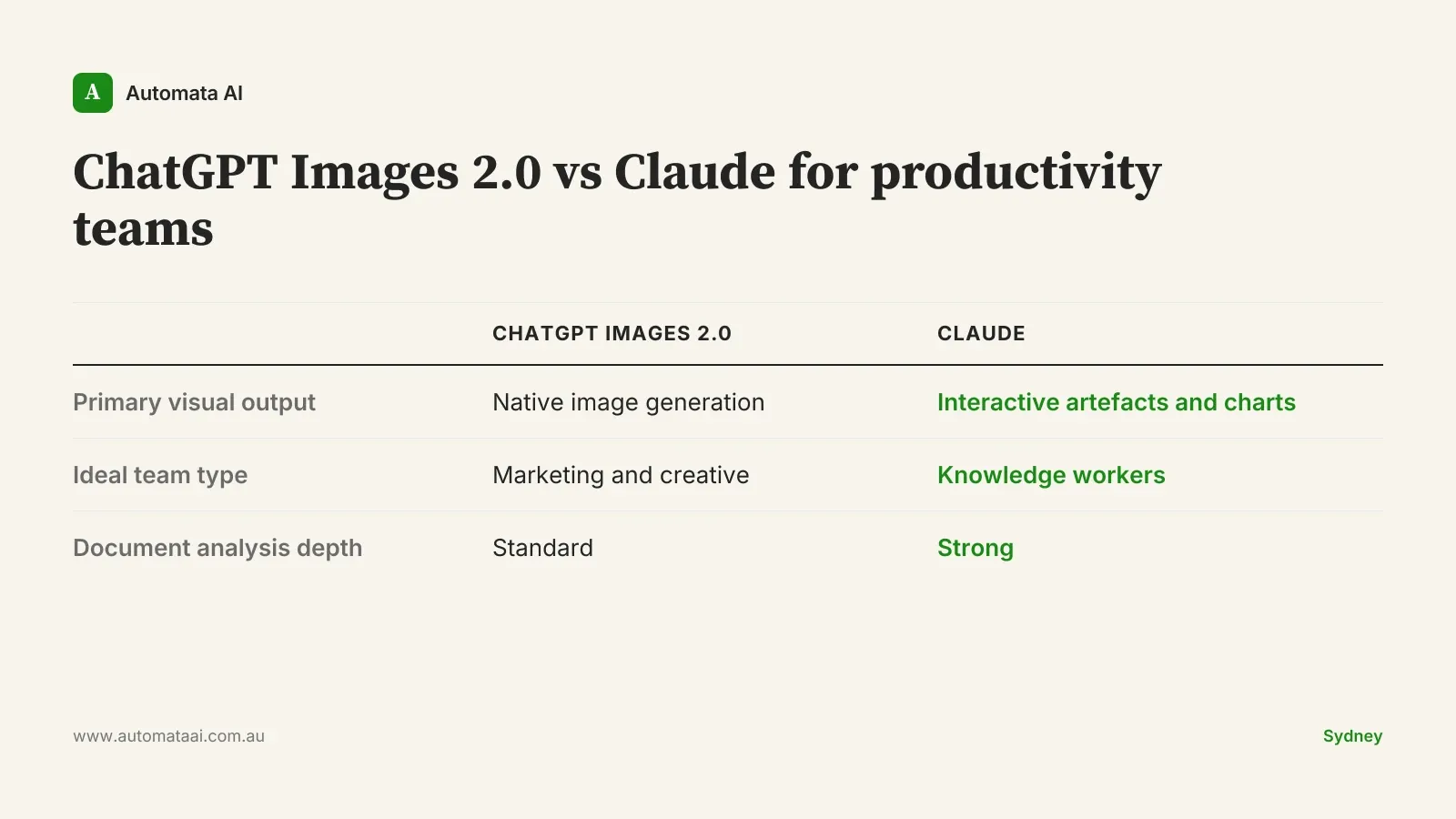

Where Claude's productivity surface fits better

Claude's advantages for end-user productivity aren't in raw image generation. They sit in a different part of the work stack entirely.

Interactive artefacts. Claude builds working charts, editable tables, and live diagrams in chat. Not a static image. Something you can modify by asking follow-up questions. For a finance team that needs a model they can stress-test, that's the capability that matters.

Document depth. Upload a 60-page contract, an APRA regulatory disclosure, or a board pack. Claude reads it, extracts what matters, and answers specific questions about it. The document handling is materially better than anything optimised for image work.

Writing and analysis workflows. For teams producing proposals, reports, or client deliverables, Claude's text-heavy surface is built for that output.

For Australian businesses in regulated sectors, there's a subtler point. Teams handling sensitive client data under the Australian Privacy Principles or APRA CPS 230 requirements tend to find that Claude's document-workflow positioning sits better with legal and compliance teams during vendor sign-off. Not because ChatGPT is less secure by design, but because the enterprise packaging and the expected use cases differ in ways that matter when your general counsel is approving the deployment.

Here's the honest read: a compliance analyst spending 20 hours a week reviewing supplier documents doesn't need AI-generated images. They need something that can read a regulatory disclosure and flag the five things worth escalating. Image generation in chat solves none of that problem.

Most knowledge workers don't need image generation as a primary work tool. The question isn't which AI handles images better. It's whether your staff produce images as a core output at all.

Three workload patterns and where each tool fits

The licensing decision is a routing problem, not a brand loyalty question. Here's how it maps by workload.

Marketing and creative teams

ChatGPT Images 2.0 is the right fit. The integrated chat-to-image workflow matches how creative teams actually work: try a direction, iterate fast, adapt for different channels. A 10-person marketing team in Sydney on ChatGPT Team pays roughly $75 to $80 AUD per user per month. That's a straightforward cost-per-output calculation, and one that justifies itself quickly if the team is producing visual content daily.
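To make that cost-per-output calculation concrete, here is a minimal sketch in Python. The seat count and the $75 to $80 AUD range come from the paragraph above; the figure of 200 assets a month is purely a hypothetical placeholder, so swap in whatever your own team actually ships.

```python
# Rough cost-per-output sketch for a 10-seat marketing team.
# Seat price range is from the article; the asset volume is an ASSUMPTION.

def annual_seat_cost(seats: int, monthly_per_seat_aud: int) -> int:
    """Annual licence cost in AUD for a team on a per-seat monthly price."""
    return seats * monthly_per_seat_aud * 12

def cost_per_asset(annual_cost_aud: int, assets_per_month: int) -> float:
    """Licence cost in AUD attributed to each visual asset produced."""
    return annual_cost_aud / (assets_per_month * 12)

SEATS = 10
ASSUMED_ASSETS_PER_MONTH = 200  # hypothetical: replace with measured output

low = annual_seat_cost(SEATS, 75)
high = annual_seat_cost(SEATS, 80)

print(low, high)  # 9000 9600
print(cost_per_asset(low, ASSUMED_ASSETS_PER_MONTH),
      cost_per_asset(high, ASSUMED_ASSETS_PER_MONTH))  # 3.75 4.0
```

At that assumed volume the licence works out to a few dollars per asset. A team producing a tenth of that output pays ten times as much per asset, which is why the daily-use condition matters.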

Knowledge-worker teams

Analysts, lawyers, accountants, operations leads. Their visual output needs are charts and explainers, not generated photos or ad creative. Claude's interactive artefacts and document handling match the actual work. Static image generation isn't the job for these teams, and optimising for it pulls budget in the wrong direction.

Mixed teams

A 100-person mid-market business with both creative and knowledge-worker cohorts might legitimately need both platforms. Fully loaded annual licensing for ChatGPT and Claude across those 100 seats runs $130,000 to $300,000 depending on tier. A single tool across the same seats runs $90,000 to $200,000. That's a real budget decision, and you need measured usage data from actual pilots before committing to the higher number.
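The gap between those two ranges is easier to judge as a per-seat figure. The sketch below uses only the annual numbers quoted above. It assumes the amounts are in AUD, and it pairs the low end with the low end and the high end with the high end, which is a simplification because real tier mixes will not line up that neatly.

```python
# Single-tool vs dual-platform licensing for 100 seats.
# Annual ranges are from the article; AUD and like-for-like tier
# pairing are ASSUMPTIONS made for illustration.

SEATS = 100
SINGLE_TOOL = (90_000, 200_000)    # (low, high) annual cost
DUAL_PLATFORM = (130_000, 300_000)  # (low, high) annual cost

def monthly_per_seat(annual_cost: int, seats: int) -> float:
    """Convert an annual licence bill into a per-seat monthly figure."""
    return annual_cost / seats / 12

# Extra annual spend from adding the second platform, low and high ends.
premium = (DUAL_PLATFORM[0] - SINGLE_TOOL[0],
           DUAL_PLATFORM[1] - SINGLE_TOOL[1])

print(premium)  # (40000, 100000)
for label, (low, high) in (("single", SINGLE_TOOL), ("dual", DUAL_PLATFORM)):
    print(label,
          round(monthly_per_seat(low, SEATS), 2),
          round(monthly_per_seat(high, SEATS), 2))
# single 75.0 166.67
# dual 108.33 250.0
```

Under these assumptions the second platform adds $40,000 to $100,000 a year. That premium is the number the pilot data has to justify.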

When a dual-platform approach is the wrong call

Mid-market IT leads tend to over-index on capability and under-index on adoption. Before committing to both tools, check these signals.

Your creative team is under 10 people. At that headcount, individual subscriptions to a dedicated image tool cost less than a second enterprise AI platform.

Image-generation needs are occasional. A few product photos a month, a quarterly social campaign. That doesn't justify a platform-level tool.

Adoption on the first tool hasn't landed yet. If 60% of staff still don't have a regular AI workflow, adding a second platform splits attention and stalls both. Fix adoption on one tool before expanding.

The trap is letting two good vendor demos drive a dual-platform commitment. That's how a business ends up paying $300,000 a year for tools running at 30% utilisation.
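A quick way to see what that utilisation figure costs is the rough sketch below. It assumes spend can be split in proportion to utilisation, which is a simplification: licences are billed per seat regardless of use, so treat the "idle" figure as an indicator rather than a recoverable saving.

```python
# Split an annual licence bill into used and idle spend.
# ASSUMPTION: spend is attributed in direct proportion to utilisation.

def split_spend(annual_spend: int, utilisation_pct: int) -> tuple[int, int]:
    """Return (used, idle) spend for a whole-number utilisation percentage."""
    used = annual_spend * utilisation_pct // 100
    return used, annual_spend - used

used, idle = split_spend(300_000, 30)
print(used, idle)  # 90000 210000
```

On the article's figures, roughly $210,000 of a $300,000 commitment would be paying for seats nobody is using.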

A practical approach for the next two weeks

Survey actual visual output needs by role. Not what staff think they might use. What they produce today. A short internal form takes an afternoon and gives you the routing data you need.

Pilot ChatGPT Images 2.0 with your marketing or creative cohort. Two weeks, real deliverables. Measure whether it saves time on actual campaign assets, not just whether people found the demo impressive.

Pilot Claude artefacts with your knowledge-worker cohort. Same window, same metrics. Focus on document turnaround time and report-writing output.

Build the licensing call on measured usage, not pilot enthusiasm. Enthusiasm in a two-week pilot doesn't predict monthly active use six months in.

The businesses that get this right won't be the ones who followed the best pitch. They'll be the ones who tied tool choice to actual work output and matched their licensing to what their people use every day.