Your governance team approved cloud AI. Your legal team approved cloud AI. Then someone in the healthcare operations group asked whether patient records could go to a US-based inference endpoint, and the answer got complicated.

Google's answer to this structural problem (internally referred to as Nano Banana) runs Gemini-class reasoning directly on the device. No inference request to a remote server. No data transfer. For Australian businesses where cloud AI is architecturally blocked, not just politically awkward, this is worth understanding clearly.

What on-device AI actually means

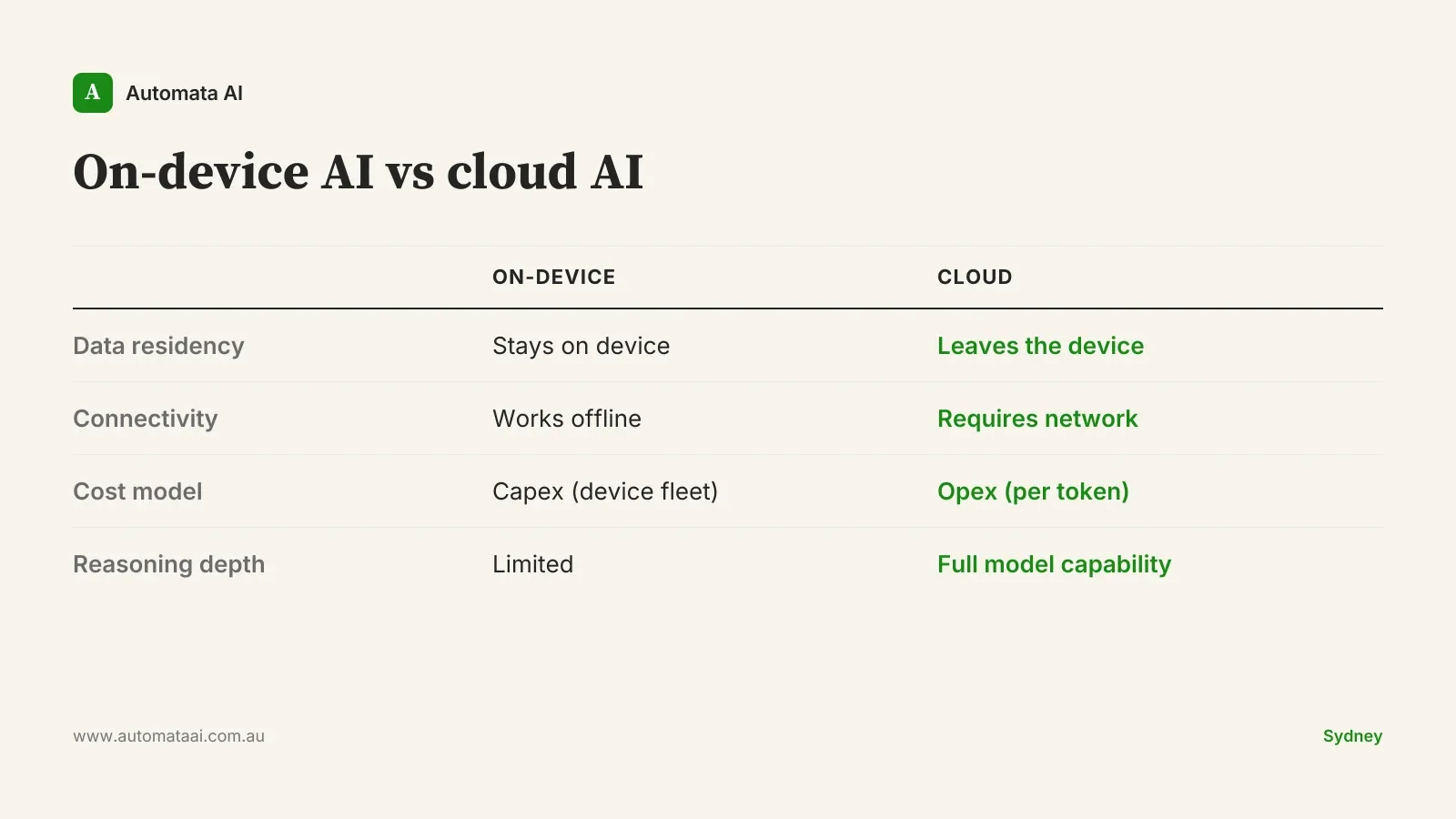

Cloud AI works by sending a prompt to a remote inference endpoint, receiving a response, and billing you per token. Every request is a data transfer. For most enterprise workflows, the Privacy Act (1988) permits this under appropriate processing agreements, but getting there takes legal sign-off, data handling terms, cross-border transfer assessments where the inference endpoint is offshore, and ongoing governance overhead that some organisations can't or won't absorb.

On-device AI runs the model weights locally. The inference happens on the processor. Nothing leaves the hardware. Three concrete advantages follow from this architecture.

Data stays local. No processing agreement to negotiate, no data transfer risk, no DPA to review. The model runs on the device and the information stays on the device.

No network dependency. Mining sites in the Pilbara, agricultural operations in regional Queensland, remote services in the Northern Territory. Cloud AI fails when connectivity fails. On-device doesn't.

No per-request cost. For high-volume, low-complexity tasks such as document classification, form filling, and field note summarisation, the compute cost is the device, not the inference bill.

Where Australian buyers should pay attention

On-device AI isn't valuable for every workload. It's valuable for specific scenarios where cloud AI has a structural disadvantage in the Australian context.

Healthcare professionals reviewing patient records. Privacy Act obligations make cloud inference legally complicated, even under a processing agreement. On-device removes the question entirely. A GP reviewing patient history while dictating clinical notes doesn't want a data governance conversation. They want the tool to work.

Government departments with sovereignty requirements. Material above certain classification thresholds can't leave the premises. Cloud AI is architecturally off the table. On-device is the only viable path.

Field workers in low-connectivity environments. Mining, agriculture, remote services. Cloud AI is unreliable when 4G is intermittent or absent. On-device works regardless of connectivity status.

Consumer apps handling personal data. Australian businesses building apps that process personal information under the Australian Privacy Principles benefit from architectures where inference never touches a server. It doesn't eliminate compliance obligations, but it simplifies the posture considerably.

The honest gap: where Claude currently sits

Claude doesn't have a comparable first-party on-device offering today. Claude runs in the cloud via claude.ai, the API, Amazon Bedrock, and Google Vertex. For the workloads listed above, this is a real architectural gap, not a positioning gap. If your use case genuinely requires on-device inference and you need it now, Gemini's current implementation is ahead.

That said, most Australian enterprises don't have a cloud privacy problem. They think they do. The Privacy Act has clear provisions for contracted processing, the same legal framework that permits payroll processors and external accountants to handle personal data. A well-structured cloud AI agreement with appropriate data handling terms, including an assessment of where inference happens and what is retained, covers most workloads. The governance friction is real, but it's solvable through contracting, not through architecture. On-device AI is the right answer for a narrower set of cases than it's often marketed as.

When on-device is the wrong answer

For typical knowledge-worker tasks, including research synthesis, contract drafting, complex reasoning, and multi-step agent workflows, on-device AI is currently weaker than cloud. The model running on a phone or laptop carries materially less parameter count and context capacity than the models behind cloud API endpoints. Gemini's on-device variant is not Gemini Ultra. The performance difference is significant for tasks where reasoning depth matters.

Complex reasoning tasks. Cloud models are stronger. If the work requires holding a 50-page contract in context and synthesising a legal opinion, on-device won't match cloud performance.

Agent workflows. Tasks that chain tools, call APIs, and make sequential decisions work better on full cloud models with large context windows.

Low-volume, high-stakes processes. If a sensitive workflow runs twice a week, a properly structured processing agreement under the Privacy Act is a better path than restructuring your AI architecture for two uses.

The economics are different, not necessarily better

Initial on-device AI integration for an Australian enterprise typically runs $60,000 to $150,000, before accounting for device fleet upgrades if the hardware doesn't meet inference requirements. That figure doesn't capture the ongoing overhead: model versioning across a device fleet, device management, and hardware refresh cycles as models outgrow the compute. Cloud deployments carry none of that.

For comparison, cloud Claude for equivalent workload volume typically runs $40,000 to $200,000 per year in inference and tooling costs. On-device is capex-shaped: higher upfront, lower ongoing marginal cost. Cloud is opex-shaped: lower upfront, scales with usage. Which structure works better depends on your organisation's tax position, the workload volume, and how long you plan to run the system. Model the actual numbers before choosing an architecture on principle.

What to do now

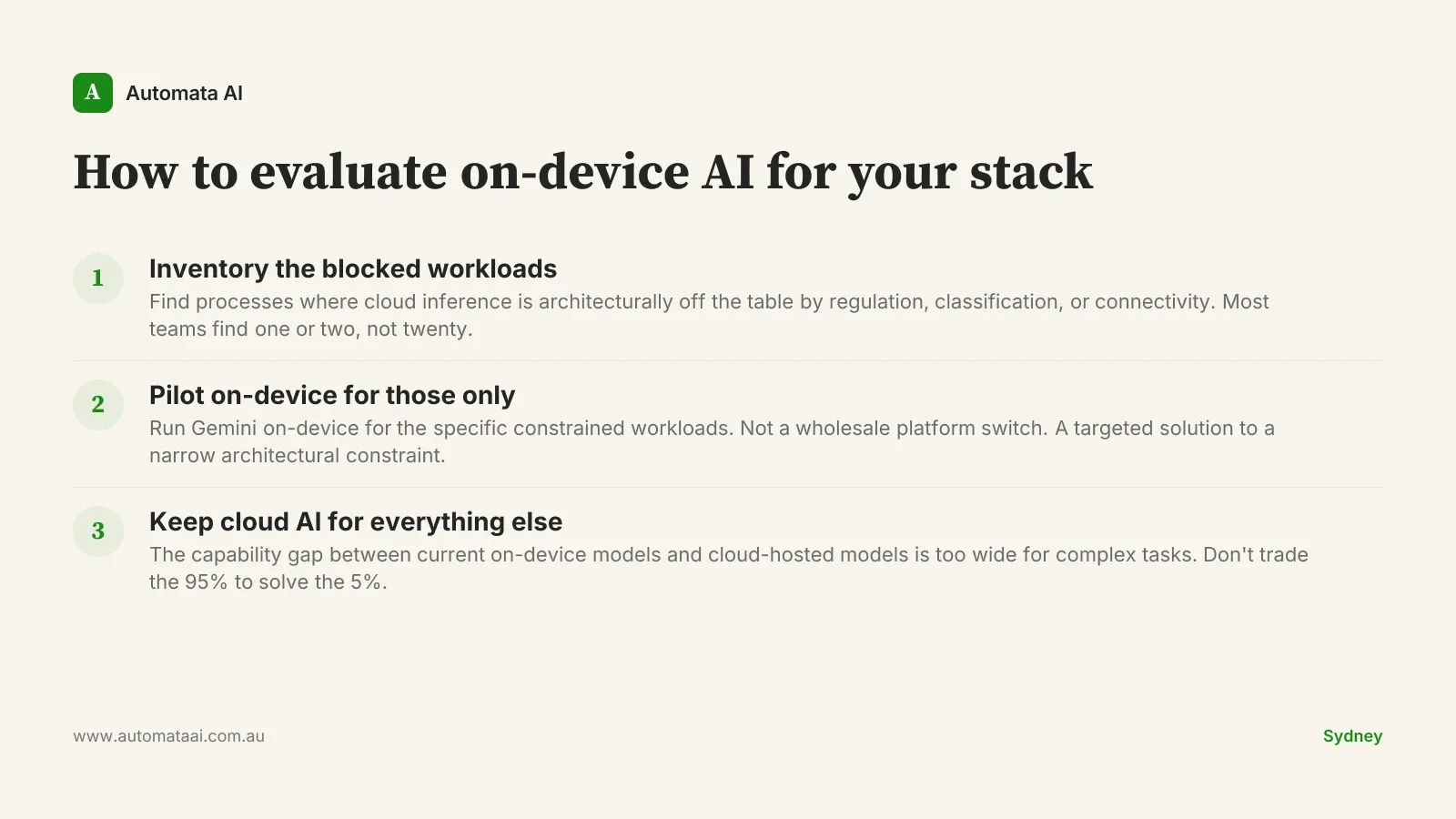

For most Australian enterprise AI stacks, the practical answer is hybrid: cloud AI for the broad majority of knowledge-worker tasks, on-device for the narrow set of workloads where data genuinely can't move.

Inventory the workloads where cloud inference is genuinely blocked. Not politically difficult. Architecturally blocked — by regulation, classification requirements, or connectivity. Most teams find one or two, not twenty.

Pilot Gemini on-device for those specific workloads. Not as a wholesale platform switch. As a targeted solution to a narrow architectural constraint.

Keep cloud Claude for the rest. The capability gap between current on-device models and cloud-hosted models is too wide to ignore for complex tasks. Don't optimise the 5% of your workload at the cost of the 95%.

Anthropic's on-device roadmap isn't public. The competitive pressure from Nano Banana is real, and the on-device AI space is moving quickly. A six-month review of this specific question is worth putting in the diary.

If you have a workload in your Australian operations that's genuinely blocked from cloud AI today, don't wait for the perfect solution. Pilot what's available. The architecture that solves your compliance constraint in 2026 is more valuable than the theoretically superior option still being roadmapped.