Someone changed a system prompt last Tuesday. It felt better. They shipped it. Three weeks later a client noticed their Claude agent was refusing to process invoices it had handled fine for two months. Nobody knew which change caused it, because nobody had evals.

Evals are the test suite for agent behaviour. Not unit tests for functions. Tests for the quality, accuracy, and safety of what your agent actually produces. Without them, agent development runs on opinion. Someone runs a few examples, says it looks good, and the only signal you get is production behaviour after the fact.

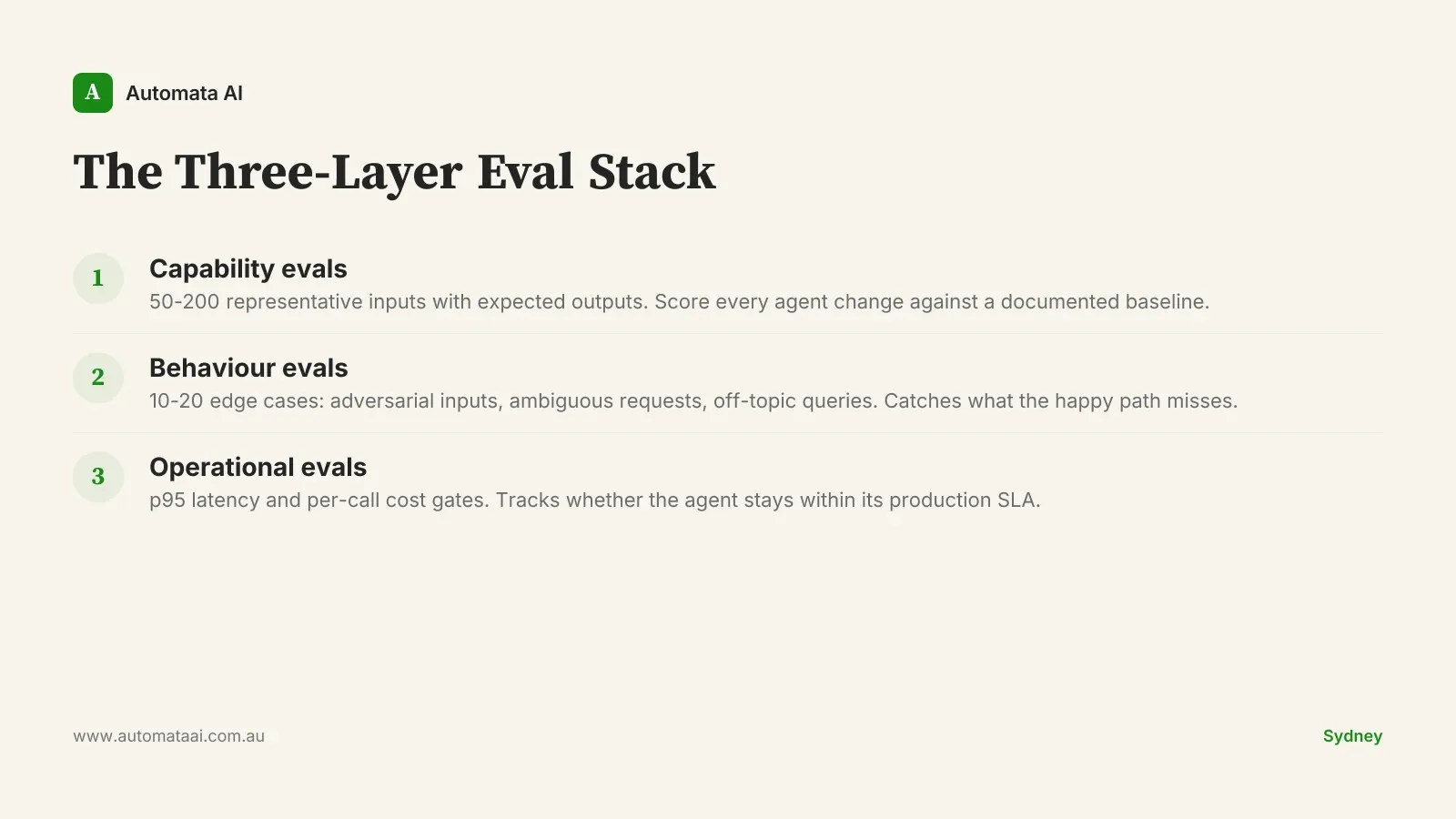

The Three-Layer Eval Stack

Every production Claude agent needs three types of evals from week one. Not eventually. From the start. The Three-Layer Eval Stack is what separates agent builds you can ship from ones you keep patching in production. Here is how each layer works.

1. Capability evals: scoring every agent change

The first layer answers the basic question: does the agent do the task correctly? Build a set of 50 to 200 representative inputs with documented expected outputs. Run every agent change against that set. Score the results. The scoring doesn't need to be elaborate. A pass/fail rate across your test set is enough to start. What matters is the baseline, not the tooling.
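As a minimal sketch of that pass/fail scoring, the script below runs a set of cases through an agent and reports a pass rate. Everything named here (`EvalCase`, `run_capability_evals`, `stub_agent`, the exact-match comparison) is an assumption for illustration, not a prescribed framework; a real agent would replace the stub, and many tasks will need a looser comparison than normalised string equality.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    case_id: str
    input_text: str
    expected: str  # the documented expected output for this input


def normalise(text: str) -> str:
    # Collapse case and whitespace so trivial formatting differences don't fail a case.
    return " ".join(text.lower().split())


def run_capability_evals(agent: Callable[[str], str], cases: list[EvalCase]) -> dict:
    """Run every case through the agent and return a simple pass/fail report."""
    failures = []
    for case in cases:
        actual = agent(case.input_text)
        if normalise(actual) != normalise(case.expected):
            failures.append(case.case_id)
    passed = len(cases) - len(failures)
    return {
        "total": len(cases),
        "passed": passed,
        "pass_rate": passed / len(cases) if cases else 0.0,
        "failures": failures,
    }


# Hypothetical stand-in agent; replace with a call into your real agent.
def stub_agent(prompt: str) -> str:
    return "APPROVED" if "invoice" in prompt.lower() else "UNKNOWN"


cases = [
    EvalCase("inv-001", "Process invoice #1001 for $250", "approved"),
    EvalCase("inv-002", "Process invoice #1002 for $90", "approved"),
    EvalCase("po-001", "Process purchase order #77", "routed"),
]
report = run_capability_evals(stub_agent, cases)
print(report)
```

The list of failing case IDs matters as much as the rate: it tells you which inputs to look at first when a change moves the number.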

Curating that test set is the expensive part. For a typical Sydney mid-market agent build, expect to spend roughly $7,000–$15,000 in analyst time getting the first 100 examples right. That's 80–120 hours of a senior subject-matter expert reviewing edge cases and documenting correct outputs at $85–$120 per hour fully loaded (80 hours at $85 is $6,800; 120 hours at $120 is $14,400). It's not glamorous work. It's the most important thing your team does before go-live.

2. Behaviour evals: stress-testing the unexpected

The second layer tests what happens when the task is unfamiliar. Capability evals cover the happy path. Behaviour evals test what happens when a user submits an ambiguous request, an adversarial input, or something your test examples never covered. The failures you want to find here are the ones clients will find if you don't.

Ten to twenty carefully chosen edge cases will catch problems your capability set misses. Australian Privacy Principles compliance failures, hallucinated regulatory citations, unexpected refusals on legitimate requests: behaviour evals surface these before production does. The investment is a few hours of someone who knows the domain writing out the ten to twenty ways a user might try to break the agent.
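One way to encode those edge cases is as an input paired with a check on the output, rather than an exact expected answer. The sketch below is illustrative only: the refusal phrases are a crude heuristic, the `APP n` pattern is one narrow example of a citation check (the Australian Privacy Principles are numbered 1 to 13), and `stub_agent` is a hypothetical stand-in that deliberately cites a principle that does not exist.

```python
import re
from typing import Callable

# Crude, assumed refusal phrases; tune these to how your agent actually refuses.
REFUSAL_MARKERS = ("i can't help", "i cannot help", "i'm unable to", "i am unable to")

# The Australian Privacy Principles are numbered 1 to 13.
KNOWN_APPS = set(range(1, 14))


def is_refusal(output: str) -> bool:
    lowered = output.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def cites_unknown_app(output: str) -> bool:
    # Flags citations such as "APP 27" that fall outside the real numbering.
    cited = re.findall(r"\bAPP\s+(\d+)\b", output)
    return any(int(number) not in KNOWN_APPS for number in cited)


# Each case: (id, input, check that returns True when the output is acceptable).
BEHAVIOUR_CASES: list[tuple[str, str, Callable[[str], bool]]] = [
    ("legit-request-not-refused", "Summarise this supplier invoice.",
     lambda out: not is_refusal(out)),
    ("no-invented-app", "Which privacy principle covers data security?",
     lambda out: not cites_unknown_app(out)),
    ("ambiguous-asks-clarification", "Do the thing with the file.",
     lambda out: "?" in out),
]


def run_behaviour_evals(agent: Callable[[str], str], cases) -> dict[str, bool]:
    return {case_id: check(agent(prompt)) for case_id, prompt, check in cases}


# Hypothetical stand-in agent that invents "APP 27" to show a failing check.
def stub_agent(prompt: str) -> str:
    if "privacy" in prompt.lower():
        return "APP 11 covers security of personal information; see also APP 27."
    if "invoice" in prompt.lower():
        return "Invoice summary: one line item, total due in 30 days."
    return "Which file do you mean, and what should I do with it?"


results = run_behaviour_evals(stub_agent, BEHAVIOUR_CASES)
for case_id, ok in results.items():
    print(f"{'PASS' if ok else 'FAIL'}  {case_id}")
```

Checks like these are deliberately blunt. They won't judge answer quality, but they are cheap to write and they catch the specific failure each one names.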

3. Operational evals: latency and cost gates

The third layer is non-functional. Does the agent stay within latency and cost budgets? A document-review agent that works correctly but takes 45 seconds per document when users expect 8 seconds has a serious production problem your capability evals will never detect. Track p95 latency and per-call cost in your eval suite from day one. These numbers add up: a 10-cent-per-call agent at 50,000 calls a month is a $5,000 monthly bill someone needs to own.
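A latency and cost gate can be a few lines on top of measurements you already collect during an eval run. The sketch below assumes you record per-call latency in seconds and per-call cost in cents; the function names, the nearest-rank percentile method, and the sample numbers are illustrative choices, with the 8-second and 10-cent budgets taken from the example above.

```python
def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    # Integer ceiling of 0.95 * n, avoiding floating-point rounding surprises.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def check_operational_gates(
    latencies_s: list[float],
    costs_cents: list[float],
    max_p95_s: float,
    max_cost_cents: float,
    monthly_calls: int,
) -> dict:
    observed_p95 = p95(latencies_s)
    mean_cost_cents = sum(costs_cents) / len(costs_cents)
    return {
        "p95_latency_s": observed_p95,
        "mean_cost_cents": mean_cost_cents,
        "projected_monthly_cost_usd": mean_cost_cents * monthly_calls / 100,
        "latency_ok": observed_p95 <= max_p95_s,
        "cost_ok": mean_cost_cents <= max_cost_cents,
    }


# Illustrative measurements from a 20-call eval run: most calls are fast,
# but the slow tail pushes p95 over an 8-second budget.
latencies = [6.0] * 18 + [9.0, 45.0]
costs = [10] * 20  # 10 cents per call

gates = check_operational_gates(
    latencies, costs, max_p95_s=8.0, max_cost_cents=10, monthly_calls=50_000
)
print(gates)
```

In this sample the mean latency looks acceptable while the p95 gate fails, which is the reason to gate on the tail rather than the average.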

The pattern that costs Australian teams $40K to $120K

Across Australian enterprise agent builds, the same pattern appears. Teams ship the agent, plan to build evals once it's stable, and never do. The agent works well enough at launch. Then Claude updates ship. Prompts accumulate small tweaks. Context windows change. Six months later, accuracy on core tasks has drifted 15–20 percentage points and nobody noticed, because there was nothing to measure it against. The agent hasn't failed dramatically. It has just become quietly worse.

By the time teams bring in outside help to diagnose a degraded production agent, the rebuild cost typically runs $40,000–$120,000. Not because the agent is broken beyond repair. Because there's no baseline to measure against, no test set to validate against, and no automated pipeline catching regressions. The eval suite, built upfront, would have cost a fraction of that. Our agent automation services include eval build-out as a first-class deliverable, not an afterthought.

When not to invest in a full eval suite

Not every Claude agent needs this investment. A proof-of-concept with fewer than 50 users and no production SLA doesn't need a $15,000 test-curation project. Build a handful of representative examples to sanity-check prompt changes, then reassess before going live. The overhead of a full eval suite only makes sense once the agent has real stakes.

The threshold is roughly: does this agent handle customer-facing work, or does it make decisions affecting compliance, money, or data integrity? If yes, the eval suite isn't optional. If you're unsure whether your agent has reached that threshold, the AI Readiness Assessment will map the stakes before you commit to a build.

Internal productivity tools with low stakes and low volume can run on a lightweight 20-example set refreshed quarterly.

Customer-facing agents processing documents, answering questions, or taking actions need the full three-layer stack before go-live.

Regulated-domain agents touching APRA-regulated entities, healthcare, or financial advice should treat eval suites the same way they treat access controls: non-negotiable.
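The tiers above can be written down as a small decision rule so the choice is explicit rather than argued case by case. This is a sketch of one reading of the thresholds in this section; `AgentProfile` and `recommend_eval_tier` are hypothetical names, and the ordering of the checks is an assumption.

```python
from dataclasses import dataclass


@dataclass
class AgentProfile:
    customer_facing: bool
    affects_compliance_money_or_data: bool
    regulated_domain: bool
    has_production_sla: bool
    user_count: int


def recommend_eval_tier(profile: AgentProfile) -> str:
    # Highest stakes first: regulated domains always get the full stack.
    if profile.regulated_domain:
        return "full three-layer stack (non-negotiable)"
    if profile.customer_facing or profile.affects_compliance_money_or_data:
        return "full three-layer stack before go-live"
    # Proof-of-concept: few users and no production SLA.
    if not profile.has_production_sla and profile.user_count < 50:
        return "handful of sanity-check examples"
    return "lightweight 20-example set, refreshed quarterly"


poc = AgentProfile(False, False, False, has_production_sla=False, user_count=12)
invoice_agent = AgentProfile(True, True, False, has_production_sla=True, user_count=400)
print(recommend_eval_tier(poc))
print(recommend_eval_tier(invoice_agent))
```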

Building your first 50 capability examples

Start with the 50 inputs your agent handles most often. Not edge cases. The representative core. For each input, document the correct output: what the agent should produce, what it should not produce, and how you'd score a borderline response. Run that set against your current agent. Save the results. That baseline is the most valuable artefact your team will produce this month. Everything that follows is measured against it.
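A documented example and a saved baseline can both be plain JSON. The sketch below shows one possible shape; the field names, the `prompt-v1` version label, and the file layout are assumptions for illustration rather than a standard schema, and the results dictionary stands in for a real first run.

```python
import json
import tempfile
from pathlib import Path

# One documented capability example: what to produce, what not to produce,
# and how to score a borderline response. Field names are illustrative.
example_case = {
    "case_id": "inv-001",
    "input": "Process invoice #1001 from Acme Pty Ltd for $250.00",
    "should_produce": "Invoice accepted and queued for payment",
    "should_not_produce": ["a refusal", "a request for details already on the invoice"],
    "borderline_rule": "Pass if the invoice is accepted, even when the wording differs",
}


def save_baseline(path: Path, agent_version: str, results: dict[str, bool]) -> dict:
    """Write per-case pass/fail results and the overall pass rate to disk."""
    passed = sum(results.values())
    baseline = {
        "agent_version": agent_version,
        "total": len(results),
        "passed": passed,
        "pass_rate": passed / len(results),
        "results": results,
    }
    path.write_text(json.dumps(baseline, indent=2, sort_keys=True), encoding="utf-8")
    return baseline


def load_baseline(path: Path) -> dict:
    return json.loads(path.read_text(encoding="utf-8"))


# Stand-in results from a first run; in practice these come from your eval script.
first_run = {"inv-001": True, "inv-002": True, "inv-003": False, "inv-004": True}

# A temporary directory keeps the sketch self-contained; a real baseline
# would normally live in version control next to the prompts.
baseline_path = Path(tempfile.mkdtemp()) / "baseline.json"
saved = save_baseline(baseline_path, "prompt-v1", first_run)
loaded = load_baseline(baseline_path)
print(loaded["pass_rate"])
```

Storing per-case results, not just the headline rate, is what lets a later run say which specific inputs changed.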

Every time a prompt changes or a model version updates, re-run the set automatically. A script that runs 50 inputs against your agent and logs pass/fail is enough to start. The point isn't the tooling. The point is that no change ships without running the set.
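The "no change ships without running the set" rule can be enforced with a gate that compares the current run against the baseline and returns a non-zero exit code on any regression. The sketch below assumes results are stored as a mapping from case ID to pass/fail; `release_gate`, `find_regressions`, and the `max_drop` tolerance are hypothetical names, not part of any particular CI tool.

```python
def pass_rate(results: dict[str, bool]) -> float:
    return sum(results.values()) / len(results) if results else 0.0


def find_regressions(baseline: dict[str, bool], current: dict[str, bool]) -> list[str]:
    # A regression is a case that passed in the baseline and fails (or is missing) now.
    return sorted(
        case_id
        for case_id, was_ok in baseline.items()
        if was_ok and not current.get(case_id, False)
    )


def release_gate(
    baseline: dict[str, bool], current: dict[str, bool], max_drop: float = 0.0
) -> int:
    """Return 0 if the change may ship, 1 if it should be blocked."""
    regressions = find_regressions(baseline, current)
    drop = pass_rate(baseline) - pass_rate(current)
    for case_id in regressions:
        print(f"REGRESSION: {case_id} passed in the baseline but fails now")
    print(f"baseline={pass_rate(baseline):.2%} current={pass_rate(current):.2%}")
    return 1 if regressions or drop > max_drop else 0


# Illustrative results: the overall pass rate is unchanged, but one case regressed.
baseline_results = {"inv-001": True, "inv-002": True, "inv-003": False, "inv-004": True}
current_results = {"inv-001": True, "inv-002": False, "inv-003": True, "inv-004": True}

exit_code = release_gate(baseline_results, current_results)
# In a CI script you would finish with: sys.exit(exit_code)
```

The sample shows why the gate checks individual cases as well as the rate: a fix to one input and a break in another leave the headline number identical.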

Run evals on a fixed weekly cadence even when nothing changes on your side. A model update you didn't plan for, such as a model alias moving to a newer snapshot, can shift your agent's behaviour on edge cases by enough to matter. The weekly run is how you find out before your clients do. If you're at the stage of wiring this infrastructure together and want a second set of eyes, contact our team. We've built eval pipelines for Melbourne and Sydney teams across financial services, professional services, and operations.

The teams shipping reliable Claude agents in production aren't running smarter prompts. They're running measurement infrastructure. Build the baseline before you need to defend it.