The worst thing that can happen to a Claude business case in Australian banking is to open with a vendor productivity figure. A board that has reviewed ten years of software migration proposals has learned one thing well: discount any external claim by ninety percent and see if the residual case still works. It usually doesn't.

The case that survives that discount starts somewhere else. It starts with the backlogs your operations manager already knows about: the ones with measurable cycle times, measurable queue depths, and a real staffing cost per resolution.

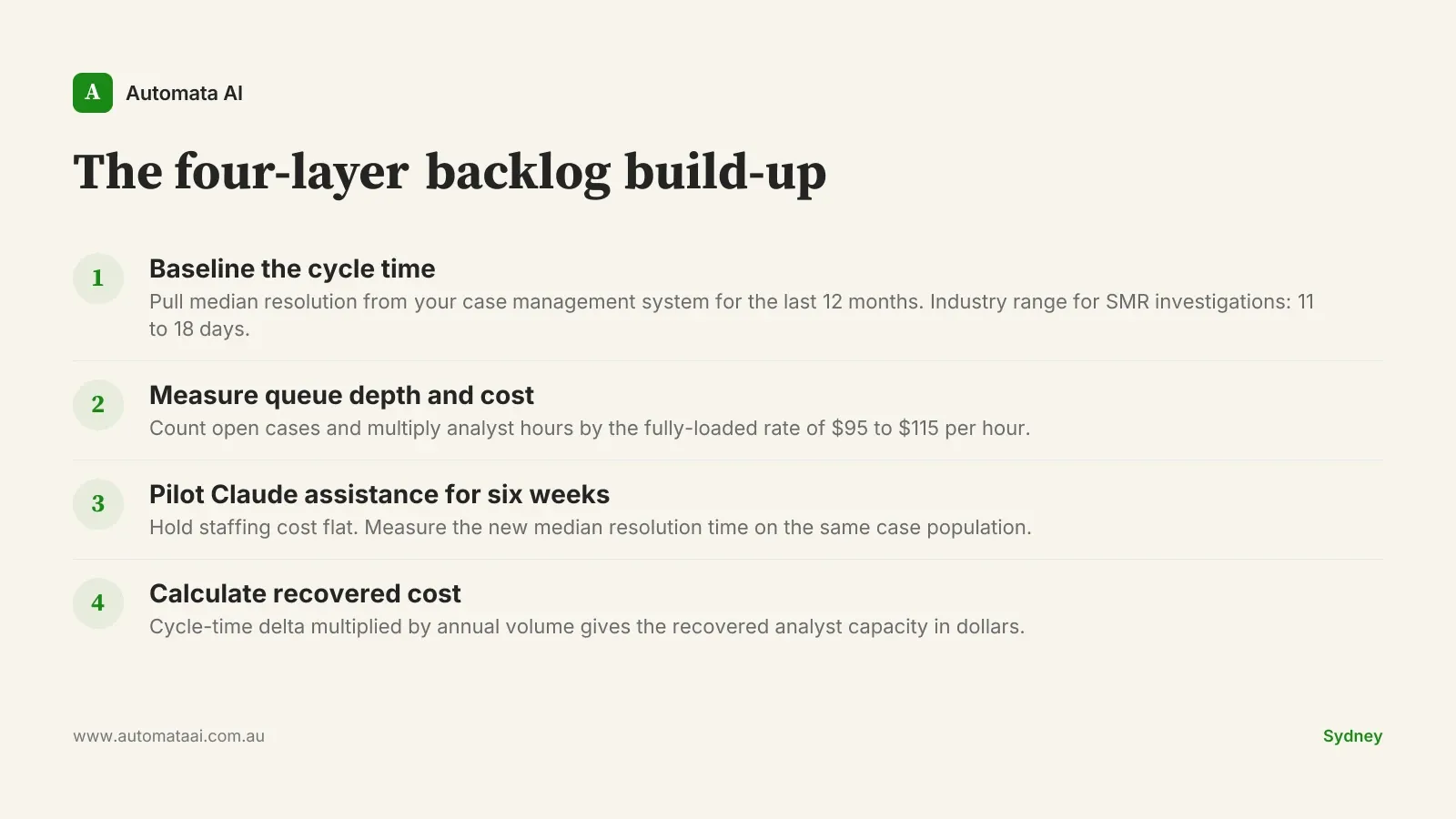

The four-layer backlog build-up

Three operational backlogs recur across tier-two and tier-three Australian banks with enough volume and cycle-time data to support a rigorous bottom-up model: Suspicious Matter Report investigations under AUSTRAC reporting obligations, loan documentation review, and formal complaints handling under the Australian Financial Complaints Authority process. All three share the same properties. The queue is deep enough for a six-week pilot to produce a statistically meaningful before/after comparison. The cycle time is long enough that a 30 to 50 percent reduction produces a measurable dollar recovery. And the outcome per case is defined precisely enough that a human reviewer can validate the Claude-assisted output.

The build-up has four layers. Each one rests on measured inputs, not assumptions:

Baseline the cycle time. Pull median resolution time from your case management system for the last 12 months. For SMR investigations, the industry range runs 11 to 18 days median. For loan documentation review, 4 to 9 days. Complaints handling, 7 to 14 days.

Measure queue depth and staffing cost. Count the open cases, the annual case volume, and the analyst hours per case. Annual volume multiplied by hours per case, at a fully-loaded operations analyst rate of $95 to $115 per hour, gives you the annual cost floor per backlog.

Pilot Claude assistance for six weeks. Hold staffing cost flat during the pilot. Measure the new median resolution time on the same population. No headcount changes until the before/after comparison is clean.

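Calculate recovered cost. Convert the cycle-time delta into analyst hours saved per case, then multiply by annual volume and the loaded hourly rate. Elapsed days are not analyst hours, so the conversion has to come from your own time-tracking data. If SMR resolution drops from 14 days to 7.3 days and you run 9,200 cases annually, the recovered analyst capacity is roughly $1.8 million on that backlog alone. At a $105 loaded rate, that corresponds to a little under two analyst hours saved per case.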
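As a rough illustration of layers two and four, the arithmetic can be sketched as below. The `Backlog` class and its field names are hypothetical, and the hours-per-case figures (4.0 before, 2.1 after) are illustrative assumptions chosen to sit in roughly the same proportion as the 14-day to 7.3-day example; only the volume and the hourly rate range come from the text. Replace every value with your own measurements.

```python
from dataclasses import dataclass


@dataclass
class Backlog:
    """One operational backlog, described by measured inputs only."""
    name: str
    annual_cases: int
    hours_per_case_before: float  # analyst hours per case at baseline (measured)
    hours_per_case_after: float   # analyst hours per case during the pilot (measured)
    loaded_hourly_rate: float     # fully-loaded analyst rate, AUD per hour

    def annual_cost_floor(self) -> float:
        # Layer two: what the backlog costs per year at baseline.
        return self.annual_cases * self.hours_per_case_before * self.loaded_hourly_rate

    def recovered_capacity(self) -> float:
        # Layer four: hours saved per case x annual volume x hourly rate.
        hours_saved = self.hours_per_case_before - self.hours_per_case_after
        return self.annual_cases * hours_saved * self.loaded_hourly_rate


# Hypothetical SMR example: 9,200 cases per year at the midpoint rate of $105/hour.
smr = Backlog("SMR investigations", 9_200, 4.0, 2.1, 105.0)
print(f"{smr.name}: cost floor ${smr.annual_cost_floor():,.0f}, "
      f"recovered ${smr.recovered_capacity():,.0f}")
```

Keeping hours per case as an explicit input, rather than inferring it from elapsed days, makes the staffing assumption visible to anyone who challenges the number.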

Call it the four-layer backlog build-up. The discipline is treating each layer as a fact sourced from your own systems, not a benchmark from a vendor deck. A board that challenges the number will find a file, not a slide.
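One way to check that the pilot's before/after comparison is clean is a simple permutation test on the median resolution time. The sketch below is a minimal version using only the Python standard library. The function name, the sample data, and the choice of a one-sided permutation test are all assumptions for illustration, not a method prescribed here, and the test treats the two samples as independent, which a same-population pilot may not fully satisfy.

```python
import random
from statistics import median


def median_shift_p_value(baseline_days, pilot_days, n_permutations=5000, seed=0):
    """One-sided permutation test: is the pilot median lower than the baseline median?

    Returns (observed reduction in median days, approximate p-value).
    """
    rng = random.Random(seed)
    observed = median(baseline_days) - median(pilot_days)
    pooled = list(baseline_days) + list(pilot_days)
    n_base = len(baseline_days)
    hits = 0
    for _ in range(n_permutations):
        # Shuffle the labels and recompute the median difference.
        rng.shuffle(pooled)
        shuffled = median(pooled[:n_base]) - median(pooled[n_base:])
        if shuffled >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return observed, (hits + 1) / (n_permutations + 1)


# Hypothetical resolution times in days, not real bank data.
baseline = [14, 12, 16, 15, 13, 18, 11, 14, 17, 15]
pilot = [7, 8, 6, 9, 7, 10, 8, 7, 6, 9]
reduction, p_value = median_shift_p_value(baseline, pilot)
print(f"median reduction: {reduction} days, p ~ {p_value:.4f}")
```

A real pilot would have hundreds of cases per arm rather than ten, and the result file, together with the query that produced the inputs, is the kind of artefact a board can inspect.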

A reference number that holds up

A tier-two Australian bank running this build-up across all three backlogs produced a defensible $4.2 million annual saving. The build cost was approximately $1.6 million in year one and $700,000 per year in steady state. Break-even at 14 months. Four-year NPV at typical Australian bank discount rates: around $9 million.

That number was arrived at from measured baselines, not from published case studies. The board could stress-test every figure because every figure came from internal data. Model your own three backlogs using the ROI Calculator and you will see the same structure: the savings are in the cycle-time delta, not the headcount reduction.
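The shape of that calculation can be sketched as follows. The discount rates and the `year_one_ramp` parameter are assumptions: the reference case does not state its discount rate or how quickly savings ramp up in year one, so this sketch will not necessarily reproduce the $9 million figure or the 14-month break-even. The function names are hypothetical.

```python
def npv(cash_flows, annual_rate):
    """Discount end-of-year net cash flows (year 1 first) back to today."""
    return sum(cf / (1 + annual_rate) ** year
               for year, cf in enumerate(cash_flows, start=1))


def net_cash_flows(annual_saving, year_one_cost, steady_state_cost,
                   years=4, year_one_ramp=1.0):
    """Net flow per year: saving minus cost.

    year_one_ramp is the assumed fraction of the full saving realised in year one.
    """
    flows = [annual_saving * year_one_ramp - year_one_cost]
    flows += [annual_saving - steady_state_cost] * (years - 1)
    return flows


# Figures from the reference case; discount rates below are illustrative only.
flows = net_cash_flows(4_200_000, 1_600_000, 700_000)
for rate in (0.08, 0.10, 0.12):
    print(f"discount rate {rate:.0%}: four-year NPV ${npv(flows, rate):,.0f}")
```

Lowering `year_one_ramp` below 1.0 models a year in which savings only start after the build and pilot, which pushes both the NPV and the break-even point in the conservative direction.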

Four questions every board will ask

Boards don't reject the numbers. They test the logic behind them. Prepare for four challenges and have a specific artefact ready for each:

'How does a time saving become a real cost reduction?' Answer with a staffing plan. Name which roles are redirected to higher-value work, which are not backfilled at next attrition, and which require a new function. Vague answers here collapse the case.

'What is the regulatory risk under APRA CPS 230?' Answer with the audit trail design, the human-in-the-loop boundary for each workflow, and the attestation process for model outputs. The answer needs to be in writing before the board meeting.

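'What if Anthropic raises prices?' Answer with the multi-provider architecture. Claude is also available through Amazon Bedrock and Google Cloud Vertex AI, which gives availability protection and an alternative commercial channel. A thin provider abstraction in the application layer means switching routes does not require a rewrite.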

'What if the pilot numbers don't generalise?' Answer with the rollout structure: pilot on one queue, shadow-mode on a second, assisted production on a third, full production only after all three pass. This is not reassurance. It is a gate.

The pattern is the same across all four: a specific artefact sourced from your own operations data. Our AI Automation Services page walks through how the pilot and build sequence fits together.

When this build-up does not work

Not every banking operations backlog supports a defensible build-up. Three conditions kill the case before it starts.

The volume is too low. If the backlog runs fewer than 2,000 cases annually, the recovered capacity at even a 50 percent cycle-time reduction typically sits below $400,000. That does not justify a build on the scale of the reference case above, where steady-state costs alone run $700,000 per year.

The process is unstable. If the rules governing SMR investigations or complaints handling change materially every quarter, the Claude-assisted workflow will require constant re-prompting. The maintenance cost eats the saving.

The human-in-the-loop requirement is deep. Some APRA-regulated workflows require a human review on every case output, not a sample. If that review step is the bottleneck, Claude accelerates input preparation, not resolution.

The human-in-the-loop question is particularly relevant for Australian financial services firms navigating APRA CPS 230. The standard requires a documented risk assessment for material service providers. Claude, whether deployed via Anthropic's API or through Amazon Bedrock, is likely to qualify as a material service provider wherever it supports a critical operation, which covers most implementations of the kind described here. The Australian financial services AI guide explains what that risk assessment needs to contain.

Three disciplines that close sceptical boards

The first is anchoring every number to internal data. A claim of 'we recovered 12,000 analyst hours' sourced from your own time-tracking and case management systems survives scrutiny that kills a vendor benchmark. The internal source is not more precise. It is more credible, because the challenger who wants to dispute it has to dispute your own records.

The second is sensitivity analysis. Run the case at 50 percent of the assumed savings and 150 percent of the assumed build cost. If the four-year NPV is still positive at that haircut, present that version to the board, not the base case. A board that approves the haircut version has a defensible position if things go sideways. A board that only sees the optimistic case does not.

The third discipline, underused in Australian banking boardrooms, is a structured exit clause. The engagement contract should allow a clean withdrawal at month 12 if recovered value falls below 60 percent of the model. That clause is rarely invoked. It is frequently what tips a cautious board from deferral to approval. Australian boards respond to bounded downside in writing, and a board that knows the commitment is time-limited and performance-gated moves faster than one negotiating an open-ended obligation.
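The haircut test and the exit-clause check can be sketched in a few lines. This is an illustration under stated assumptions: the 10 percent discount rate is hypothetical, the 150 percent cost factor is applied to steady-state costs as well as the year-one build (the text does not say which), and the function names are invented for this example.

```python
def npv(cash_flows, annual_rate):
    """Discount end-of-year net cash flows (year 1 first) back to today."""
    return sum(cf / (1 + annual_rate) ** year
               for year, cf in enumerate(cash_flows, start=1))


def haircut_npv(annual_saving, year_one_cost, steady_state_cost, annual_rate,
                saving_factor=0.5, cost_factor=1.5, years=4):
    """NPV with savings cut to saving_factor and all costs raised to cost_factor."""
    saving = annual_saving * saving_factor
    flows = [saving - year_one_cost * cost_factor]
    flows += [saving - steady_state_cost * cost_factor] * (years - 1)
    return npv(flows, annual_rate)


def exit_clause_triggered(recovered_value, modelled_value, threshold=0.60):
    """True if recovered value at the review point falls below the agreed share."""
    return recovered_value < threshold * modelled_value


# Reference-case figures with an assumed 10% discount rate.
stressed = haircut_npv(4_200_000, 1_600_000, 700_000, annual_rate=0.10)
print(f"haircut NPV: ${stressed:,.0f}")
print("exit at month 12?", exit_clause_triggered(2_400_000, 4_200_000))
```

The result is sensitive to the discount rate and to whether the cost haircut covers running costs, so it is worth showing the board the same calculation under more than one set of assumptions.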

Pick the backlog with the highest queue depth and the most reliable cycle-time data. Baseline it. Model the pilot. If the four-year NPV doesn't clear $3 million at the 50-percent haircut, the case isn't ready yet. If it does, you have a number that can survive a room full of sceptics.