Your compliance team flagged it on a Tuesday morning. Someone had connected ChatGPT Workspace Agents to the firm's Microsoft 365 environment three months earlier.

Since then, client correspondence, contract drafts, and internal financial briefings had been flowing through OpenAI's servers. Nobody meant to create a Privacy Act exposure. They were trying to save time on meeting summaries.

What OpenAI Workspace Agents do with your data

Starting May 2025, ChatGPT Business moved Workspace Agents to a credit-based pricing model. The integrations cover Google Workspace, Microsoft 365, and Slack. The agents automate email drafting, document summarisation, scheduling, and report generation. They work.

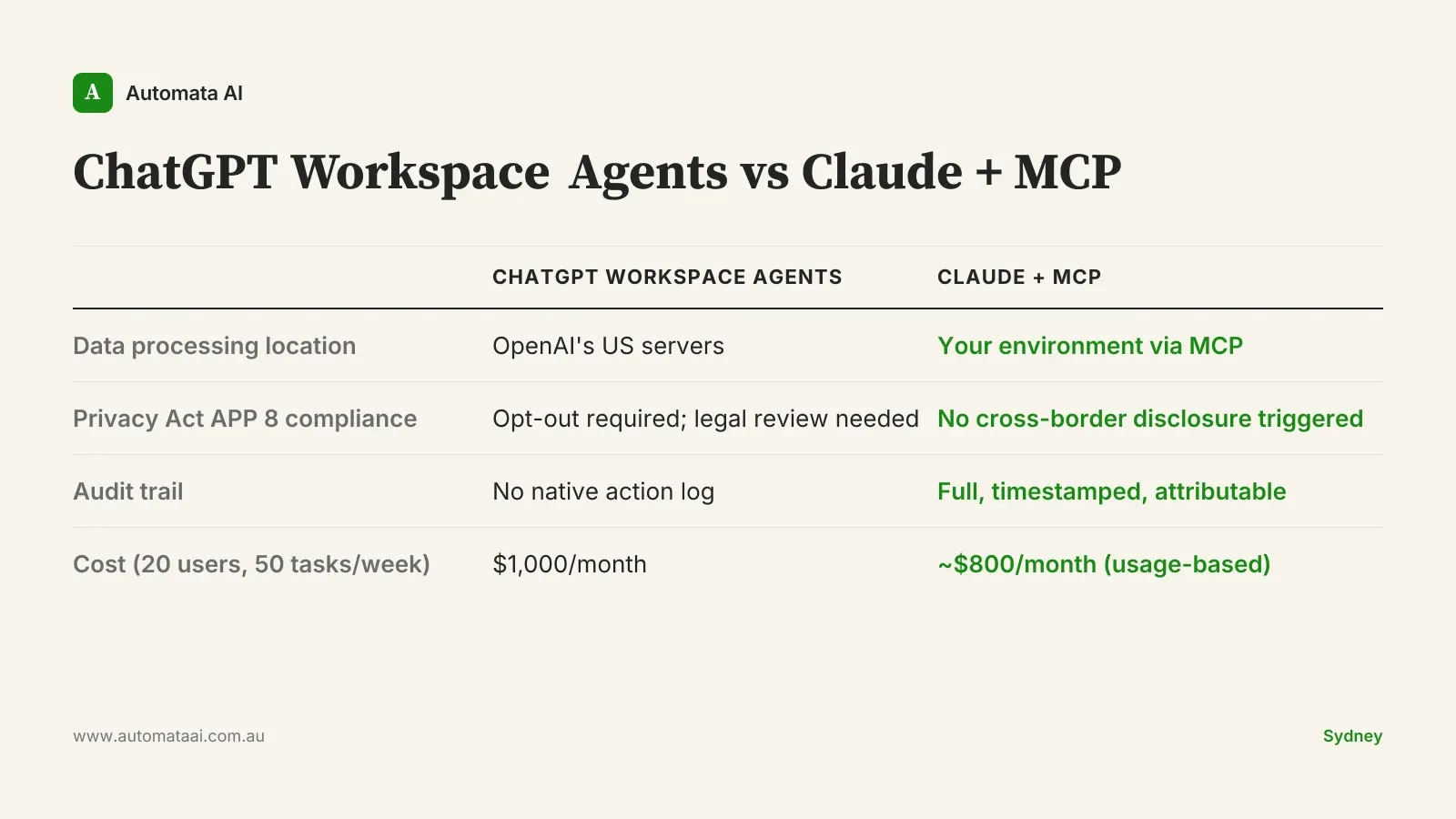

That's the issue. When an OpenAI Workspace Agent reads a Gmail thread to draft a response, that content is processed on OpenAI's infrastructure. Enterprise customers can opt out of model training, but opting out requires active management: someone at your firm confirming the setting is applied correctly to every integration, and re-confirming it each time a new one is added.

Training data by default. ChatGPT Business allows opt-out from model training, but opt-out is not opt-in. Enterprises must actively manage this setting for every connected integration.

Offshore data processing. Content sent to OpenAI's servers crosses jurisdictional boundaries and, where it includes personal information, triggers obligations under the Australian Privacy Principles.

No native audit trail. There is no built-in log of which documents an agent read, when, or what it extracted. ASIC-regulated firms cannot accept that gap.

The Australian compliance gap

Australian Privacy Principle 8 governs cross-border disclosure of personal information. When a Workspace Agent reads a client email containing PII, financial advice, or health information and processes it on OpenAI's US servers, that is a cross-border disclosure with legal consequences. For ASIC-regulated teams (advisers, fund managers, insurance underwriters), there are additional data handling obligations tied directly to AFSL conditions.

The compliance analyst who spent three hours generating a weekly regulatory report was producing a documented, attributable output. The AI agent that replaced them produces the same output with no audit trail, no attribution, and no evidence chain. When ASIC or APRA comes asking, that matters.

Claude + MCP: local execution, full audit trail

Claude Code with MCP (Model Context Protocol) is architecturally different from cloud-first agent products. MCP lets Claude interact with your internal systems (file servers, databases, internal APIs) through connectors that run inside your environment. The model itself runs on Anthropic's infrastructure, so the content it reads during a task is sent there for processing, but your documents, emails, and records do not need to be bulk-uploaded or synced to an external platform, and you decide which of them each connector can reach.
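To make that boundary concrete, here is a minimal sketch of the kind of read-only file tool an MCP server could expose. It is plain Python, not the MCP SDK, and `read_document` and its parameters are hypothetical names; the point is that the connector runs inside your environment and decides which files the model can request and how much text it gets back.

```python
from pathlib import Path


def read_document(allowed_root: str, relative_path: str, max_chars: int = 20_000) -> str:
    """Hypothetical read-only tool: return text from a file under allowed_root.

    Runs inside your environment; only the returned text is passed to the model.
    Requires Python 3.9+ for Path.is_relative_to.
    """
    root = Path(allowed_root).resolve()
    target = (root / relative_path).resolve()
    # Refuse any path that escapes the allowed directory (e.g. "../../etc/passwd").
    if not target.is_relative_to(root):
        raise ValueError(f"path outside allowed root: {relative_path}")
    if not target.is_file():
        raise FileNotFoundError(relative_path)
    # Cap how much text is handed to the model in a single call.
    return target.read_text(encoding="utf-8")[:max_chars]
```

Whatever the function returns becomes part of the prompt sent to the model, so the allowed root and the size cap are the controls over what leaves your environment.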

Because every MCP tool call passes through a server you run, each Claude action can be logged: what the agent was asked to do, what files it read, what output it produced, and when. For a 20-person team running 50 automated tasks per week at roughly $0.40 per task, the API cost is about $20 per week, or under $90 per month. That compares with $1,000 per month for ChatGPT Business at the same headcount. Run your own numbers in our ROI Calculator to model the payback for your specific process volume.
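A minimal sketch of that per-task versus per-seat comparison, assuming the 50 tasks are for the whole team, an average of 52/12 weeks per month, and this article's estimates of $0.40 per task and $1,000 for 20 seats (that is, $50 per user). Neither figure is a published price list.

```python
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def monthly_api_cost(tasks_per_week: float, cost_per_task: float) -> float:
    """Usage-based billing: pay only for the tasks actually run."""
    return tasks_per_week * cost_per_task * WEEKS_PER_MONTH


def monthly_seat_cost(users: int, price_per_user: float) -> float:
    """Seat-based billing: pay per user regardless of usage."""
    return users * price_per_user


api = monthly_api_cost(tasks_per_week=50, cost_per_task=0.40)
seats = monthly_seat_cost(users=20, price_per_user=50)
print(f"API: ${api:,.2f}/month, seats: ${seats:,.2f}/month")
```

If the 50 tasks are per person rather than per team, set `tasks_per_week` to 1,000: at that volume the usage-based bill (about $1,733 per month) exceeds the seat price, so check which reading matches your workload.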

Local connectors by default. MCP servers run in your environment, so your source systems are not synced to an outside platform unless you explicitly configure it.

Full attribution logging. Every MCP action can be logged with a timestamp and the invoking user or process, because the server handling it runs under your control.

No training data use. Anthropic's API does not use your API data to train models.

Privacy Act-compatible by design. No cross-border disclosure obligation is triggered when processing stays within your environment.
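In practice, this logging lives in the MCP server code you run. Below is a minimal, standard-library-only sketch of one approach; the `audited` decorator, the JSON-lines format, and the field names are hypothetical choices for illustration, not part of any MCP SDK. It wraps a tool function and appends one record per call with a UTC timestamp, the invoking user, the tool name, its arguments, a success or error status, and a SHA-256 hash of the output.

```python
import functools
import hashlib
import json
from datetime import datetime, timezone


def audited(log_path: str, user: str):
    """Hypothetical decorator: append one JSON-lines audit record per tool call."""
    def decorator(tool):
        @functools.wraps(tool)
        def wrapper(*args, **kwargs):
            status, output = "error", ""
            try:
                result = tool(*args, **kwargs)
                status, output = "ok", str(result)
                return result
            except Exception as exc:
                output = repr(exc)
                raise
            finally:
                # Runs on success and on failure, so every call leaves a record.
                record = {
                    "timestamp": datetime.now(timezone.utc).isoformat(),
                    "user": user,
                    "tool": tool.__name__,
                    "args": [repr(a) for a in args],
                    "kwargs": {k: repr(v) for k, v in kwargs.items()},
                    "status": status,
                    # Store a hash, not the output itself, to keep client data out of the log.
                    "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
                }
                with open(log_path, "a", encoding="utf-8") as log:
                    log.write(json.dumps(record) + "\n")
        return wrapper
    return decorator
```

Decorating a tool such as a hypothetical `read_portfolio_summary(client_id)` with `@audited("audit.jsonl", user="analyst-7")` gives one log line per call. Hashing the output instead of storing it keeps client data out of the log while still letting you verify later that a given output is the one the tool returned.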

A wealth management firm's real numbers

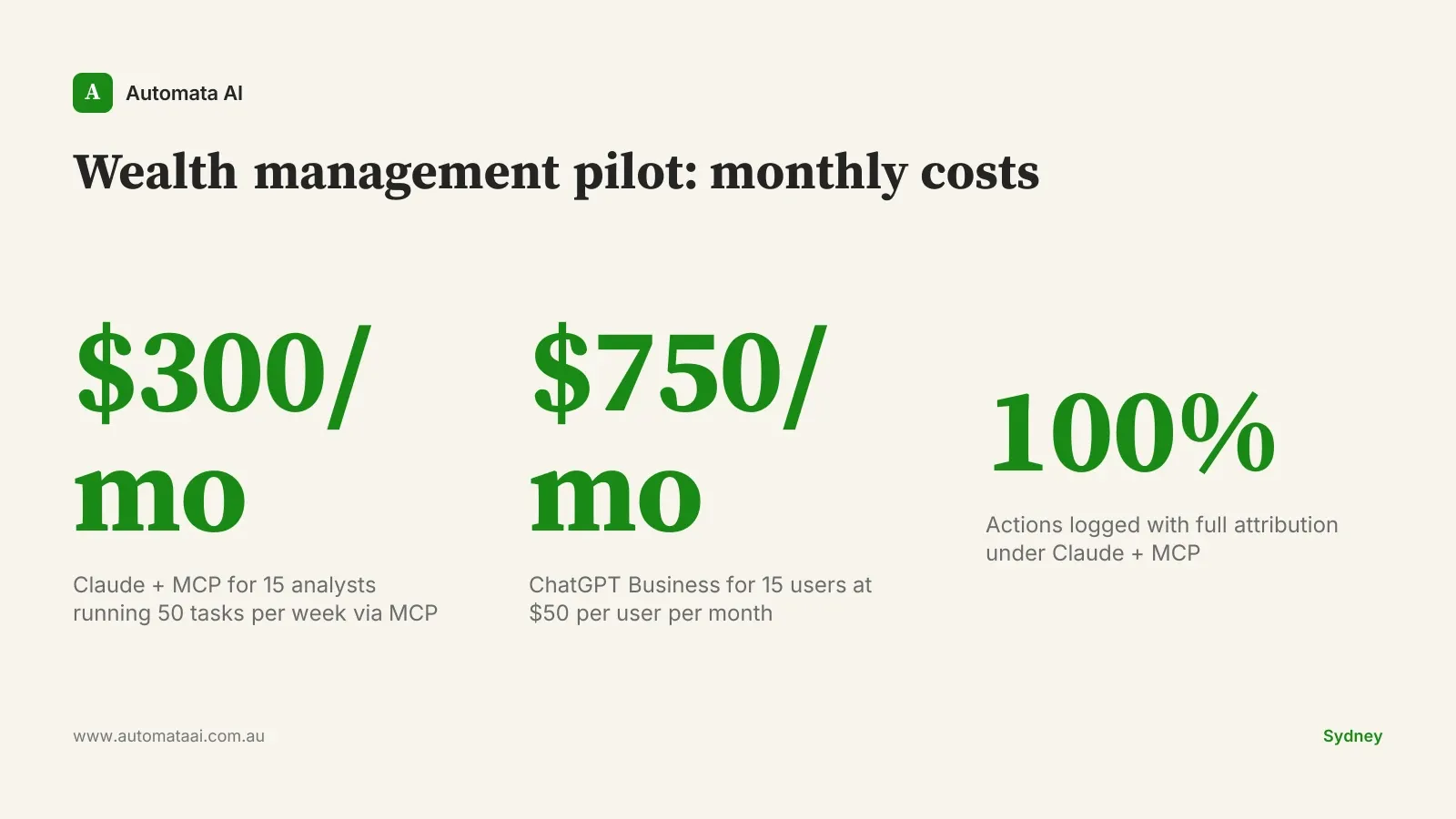

Consider 15 analysts at an Australian wealth management firm running weekly portfolio review reports. Each analyst spends 90 minutes per week pulling data and formatting summaries. That is 22.5 hours of team time weekly. At $100 per hour fully loaded, that is $117,000 per year on one reporting process.
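The $117,000 figure follows from three inputs and a 52-week year. A minimal sketch that reproduces it, so you can substitute your own headcount, time, and rate (the function name is illustrative):

```python
def annual_reporting_cost(analysts: int, minutes_per_week: float,
                          hourly_rate: float, weeks_per_year: int = 52) -> tuple[float, float]:
    """Return (team hours per week, annual fully loaded cost) for one process."""
    hours_per_week = analysts * minutes_per_week / 60
    return hours_per_week, hours_per_week * hourly_rate * weeks_per_year


hours, cost = annual_reporting_cost(analysts=15, minutes_per_week=90, hourly_rate=100)
print(f"{hours} hours/week, ${cost:,.0f}/year")
```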

ChatGPT Workspace Agents approach: The agent reads email summaries and Sheets data to generate reports. Monthly cost: $750 for 15 users at $50 per user. Privacy exposure: client portfolio data, adviser correspondence, and AUM figures processed by OpenAI's servers. Compliance status: requires active opt-out management and legal sign-off on each connected integration.

Claude + MCP approach: Claude reads from the firm's internal file store via MCP, and reports are assembled in-house. API cost: approximately $300 per year (15 tasks per week at $0.40 per task over 50 weeks), or about $25 per month. Privacy exposure: limited to the content passed to the model for each task. Full audit log from day one.

The saving of roughly $725 per month per team is secondary to the compliance position. Australian wealth management firms operating under AFSL obligations need documented evidence that client data was handled correctly. A vendor's privacy policy is not sufficient. Our AI automation for financial services page covers the data handling frameworks relevant to AFSL-licensed businesses.
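A sketch of the annual per-seat versus per-task comparison for this example. It follows the stated basis (15 tasks per week at $0.40 per task over a 50-week year) and assumes the $50 seat is billed for all 12 months; the function name is illustrative and neither price is a published rate.

```python
def annual_costs(users: int, seat_price_per_month: float,
                 tasks_per_week: float, cost_per_task: float,
                 working_weeks: int = 50) -> dict[str, float]:
    """Compare a per-seat subscription with per-task API billing over one year."""
    seats = users * seat_price_per_month * 12             # billed every month
    api = tasks_per_week * cost_per_task * working_weeks  # billed only for work done
    return {"seats": seats, "api": api, "saving": seats - api}


costs = annual_costs(users=15, seat_price_per_month=50,
                     tasks_per_week=15, cost_per_task=0.40)
for name, value in costs.items():
    print(f"{name}: ${value:,.0f}/year (${value / 12:,.0f}/month)")
```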

When Claude + MCP is the wrong choice

Not every business should go through the effort of a full migration. There are situations where staying on ChatGPT Business, with proper configuration, is the right call.

Volume is too low. If your team runs fewer than 20 automated tasks per week, the implementation effort for MCP integrations is unlikely to pay back within 12 months.

Your data is not regulated. If your automation workflows only touch marketing content, general scheduling, or public-facing documents, the Privacy Act compliance case for local execution is much weaker.

You are not AFSL-licensed or ASIC-regulated. The urgency of moving off cloud-processed agents is proportional to how sensitive your data is. Many businesses can manage the risk with correct opt-out settings and quarterly audits.

The right answer for some businesses is to stay on ChatGPT Business, configure the data handling settings correctly, and review them quarterly. Don't spend $20,000 to $80,000 on a migration project to protect workflows that never needed protecting.
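The 12-month test is a simple payback calculation: one-off migration cost divided by the monthly benefit it produces. A sketch using the $20,000 and $80,000 ends of the range above; the $2,000 monthly benefit is a hypothetical placeholder, not a figure from the wealth management example.

```python
import math


def payback_months(one_off_cost: float, monthly_benefit: float) -> float:
    """Months until cumulative benefit covers the up-front cost (inf if it never does)."""
    if monthly_benefit <= 0:
        return math.inf
    return one_off_cost / monthly_benefit


# Hypothetical benefit figure: replace with your own monthly saving.
for cost in (20_000, 80_000):
    months = payback_months(cost, monthly_benefit=2_000)
    verdict = "within" if months <= 12 else "outside"
    print(f"${cost:,}: {months:.1f} months ({verdict} a 12-month payback window)")
```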

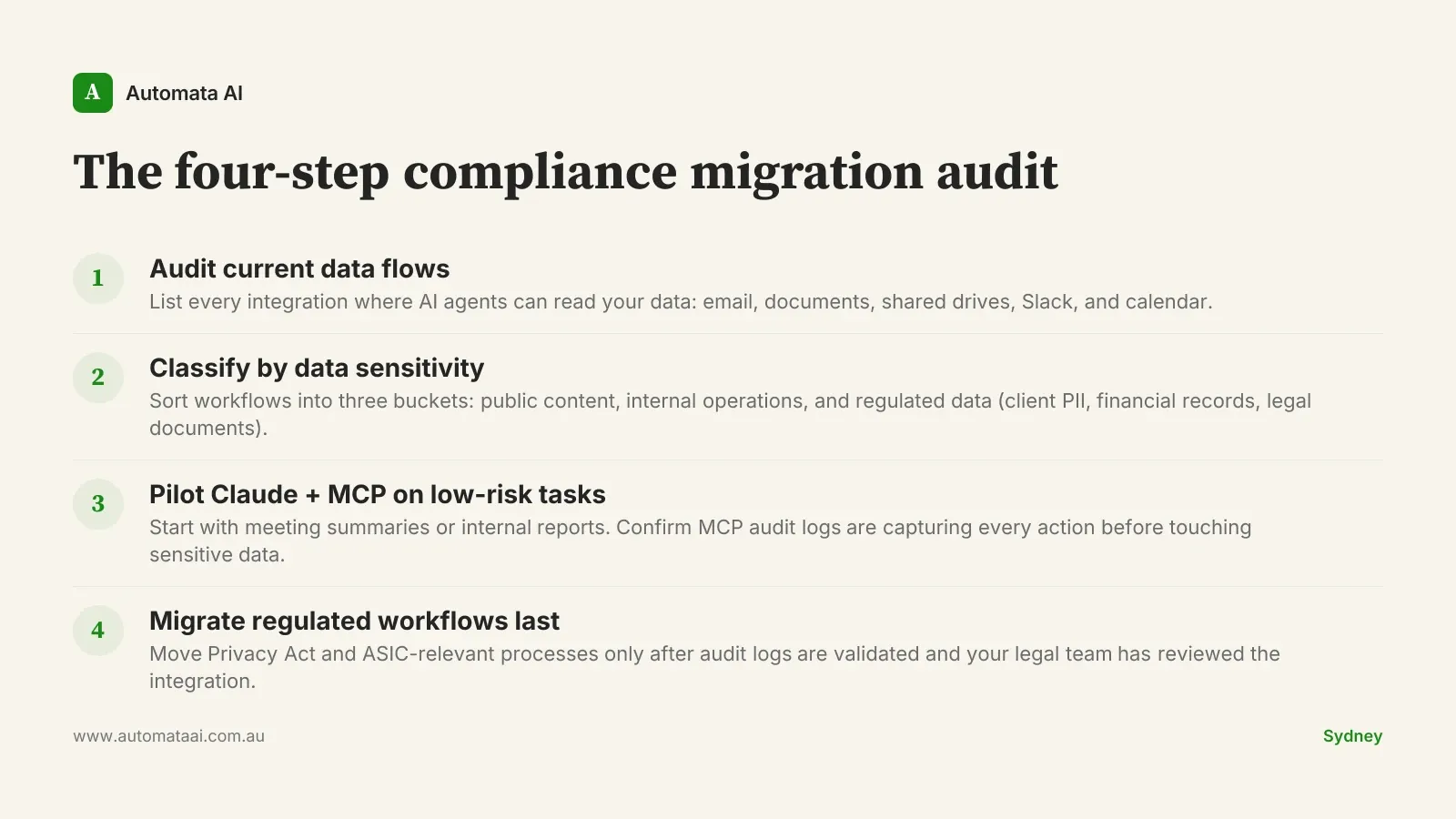

The compliance migration audit

Before changing any integration, run what we call the four-step compliance migration audit. It takes one person about a day and prevents the most common failure mode: creating new exposure while trying to fix the old one.

If you want a structured view of where your current automation stack sits against Privacy Act and ASIC obligations, the AI Readiness Assessment identifies the gaps in about 15 minutes. Start there before you commit to any vendor.