Your senior engineers have Claude Code running. So do the three other squads. Each squad has rebuilt the same prompt scaffolding from scratch and documented it nowhere, and each engineer carries their version out the door when they leave. When the lead from the Melbourne office joins a project in Sydney, the two command sets collide, and someone spends a Friday afternoon resolving the conflict rather than shipping.

That is what slash commands look like as a personal scratchpad. The teams extracting compounding value treat them differently: shared, versioned, code-reviewed. Internal tooling, not personal notes. The economics are not subtle.

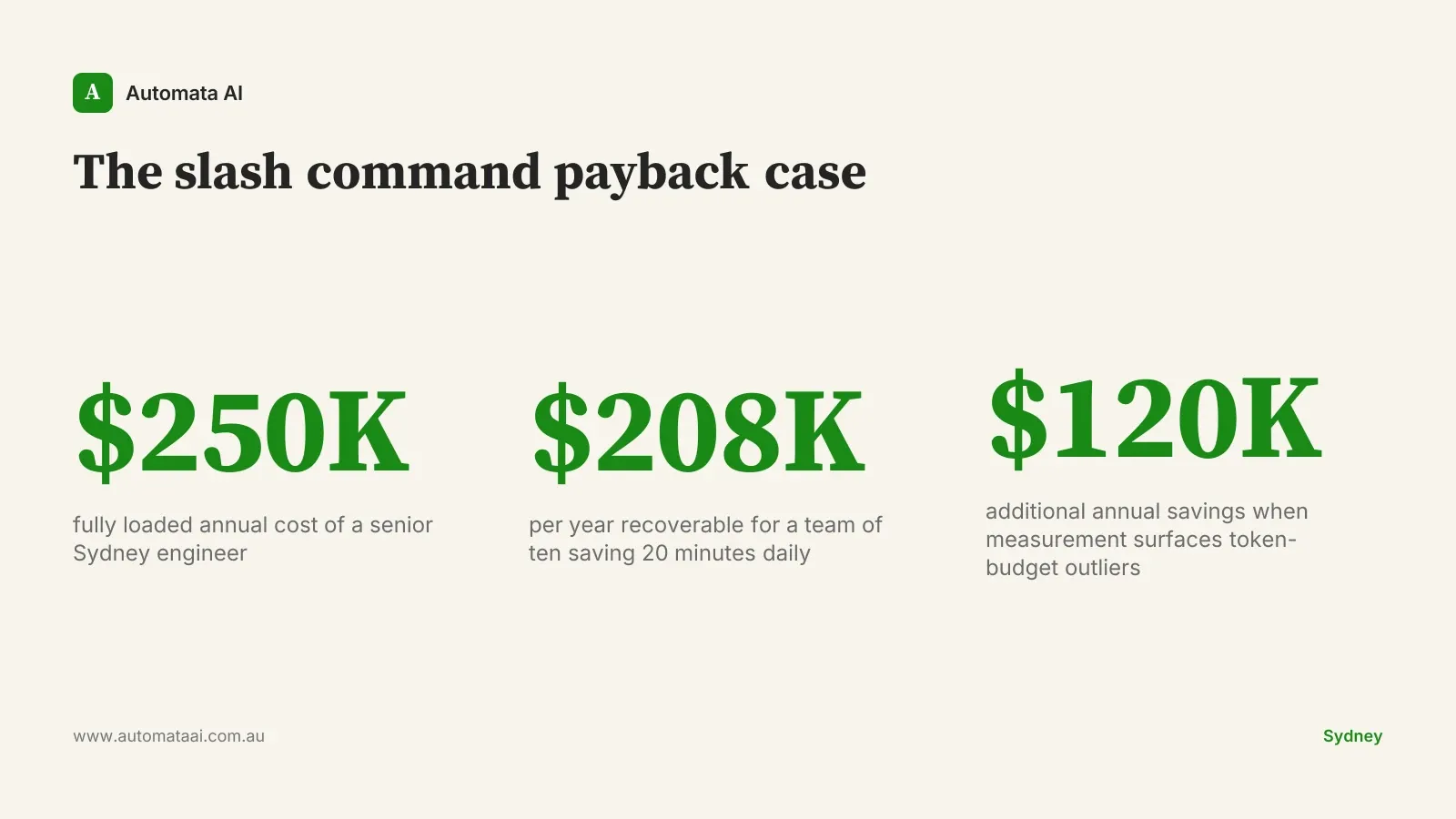

The economics justify owning this surface

A senior engineer in Sydney costs roughly $250,000 fully loaded. If a team of ten saves twenty minutes a day on prompt scaffolding, that is about 4 percent of an eight-hour day, or around $104,000 a year recovered. That maths assumes twenty minutes, not an hour. It assumes a mid-market rate, not top-of-market. It assumes the engineer was otherwise productively occupied. Even on conservative assumptions, the figure is worth taking seriously. You can run the payback calculation in our ROI Calculator with your own team size and hourly rate.
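For readers who want to check the arithmetic with their own inputs, here is a minimal sketch. It assumes time saved is valued as a share of fully loaded cost and that the working day is eight hours; the article does not state its working-day assumption, and the result scales inversely with `hours_per_day`. The function name and parameters are illustrative only.

```python
def annual_value_recovered(team_size, loaded_cost_per_engineer,
                           minutes_saved_per_day, hours_per_day=8.0):
    """Value of time saved per year, as a share of fully loaded cost.

    Assumption: every working day saves the same number of minutes, so the
    saving is simply (minutes saved / minutes worked) of annual cost.
    """
    if hours_per_day <= 0:
        raise ValueError("hours_per_day must be positive")
    share_of_day = (minutes_saved_per_day / 60.0) / hours_per_day
    return team_size * loaded_cost_per_engineer * share_of_day


# The article's inputs: ten engineers, $250,000 each, twenty minutes a day.
value = annual_value_recovered(10, 250_000, 20)
print(f"${value:,.0f} per year")
```

Halving `hours_per_day` to reflect only focused engineering time doubles the result, which is why the working-day assumption is worth stating alongside any figure taken to finance.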

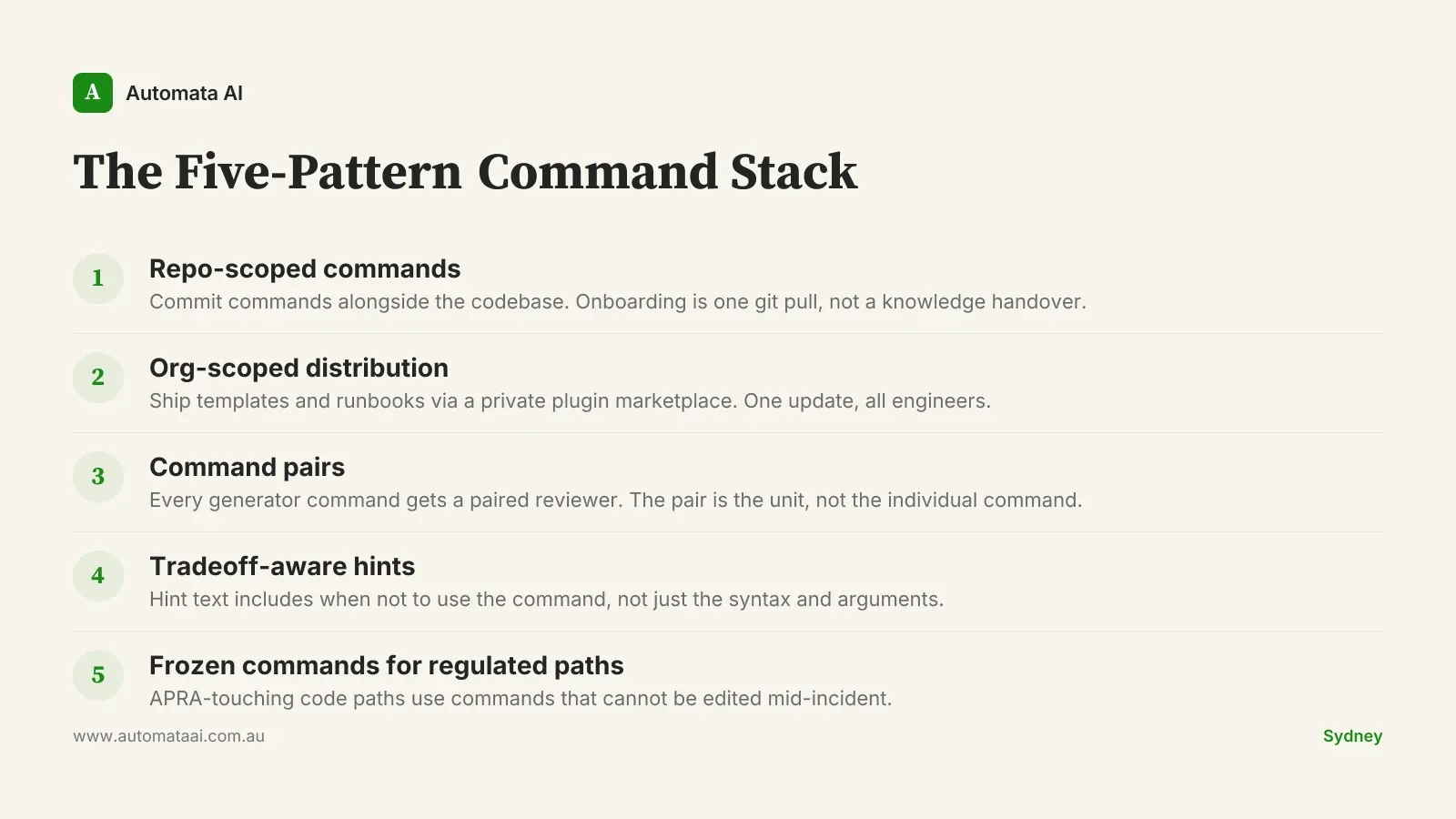

The Five-Pattern Command Stack

This is the framework that shows up in mature Claude Code rollouts across Australian engineering teams. Call it whatever you like internally. What matters is that these five patterns address five different failure modes: knowledge loss on departure, inconsistency across squads, unreviewed outputs, knowledge gaps for junior engineers, and unguarded regulated paths. Skipping any one of them is fine. Skipping all five means your team's Claude investment compounds for no one.

Repo-scoped commands committed alongside the codebase. Onboarding becomes one git pull rather than a knowledge-transfer session. The commands live where engineers already look. A new hire joining a Brisbane platform team has the full command vocabulary on day one, not six weeks in.

Org-scoped commands distributed through a private plugin marketplace. Security review templates, Terraform patterns, incident runbooks. One update in the marketplace reaches every engineer at once. No Slack thread. No manual prompt-file paste.

Command pairs: a generator command plus a paired reviewer. Any artefact Claude produces gets a one-keystroke review pass. Teams that skip the reviewer find that the time saved on generation is partially eaten by the manual review work the command was meant to eliminate. The pair is the unit.

Argument hints that include the tradeoffs, not just the syntax. A junior engineer reaching for a migration scaffold should see, in the hint text, when to reach for the manual alternative instead. If the hint only shows syntax, you have replaced one knowledge gap with another.

Frozen commands for regulated code paths. Any command that touches APRA CPS 230-relevant systems should not be editable mid-incident. Frozen commands give your CISO a hard answer when auditors ask what guardrails exist. They also show up well in a third-party security review.
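One way to make "frozen" enforceable is a check in CI that fails when a protected command file changes. The sketch below is an assumption about how a team might do this, not a built-in Claude Code feature: it compares SHA-256 hashes of command files against a manifest. The manifest format (a JSON object mapping file names to hashes) and both function names are hypothetical.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hex SHA-256 digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def find_frozen_violations(commands_dir: Path, manifest_path: Path) -> list[str]:
    """Return names of frozen command files that are missing or modified.

    The manifest is a hypothetical JSON file of the form
    {"incident-runbook.md": "<sha256 hex digest>", ...}.
    """
    manifest = json.loads(manifest_path.read_text(encoding="utf-8"))
    violations = []
    for name, expected_digest in sorted(manifest.items()):
        target = commands_dir / name
        if not target.is_file() or sha256_of(target) != expected_digest:
            violations.append(name)
    return violations
```

A CI step could call `find_frozen_violations(Path(".claude/commands"), Path(".claude/frozen-commands.json"))` and fail the build on a non-empty result. Changing a frozen command then requires a deliberate manifest update in the same pull request, which leaves the review trail an auditor would want to see.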

How to roll this out without stalling

Start with three commands that already exist as a wiki page somewhere. A review checklist, a deployment template, a PR description scaffold. Write the prompt in a .claude/commands/ directory, test it against a scenario your team runs weekly, then open a pull request. Most teams see payback inside a quarter.

The conversion itself is lightweight. Take the wiki page, extract the core prompt, write it to the commands directory, test it against a real scenario, open a pull request. The PR review is where the prompt gets sharpened. Junior engineers asking "why does it work this way?" in review comments is exactly the knowledge-transfer mechanism the wiki page was supposed to provide but usually did not.
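The conversion steps can be sketched as a small script. This assumes the commonly documented Claude Code layout, where a Markdown file under `.claude/commands/` becomes a command named after the file, optional YAML frontmatter carries `description` and `argument-hint`, and `$ARGUMENTS` stands in for whatever the engineer types after the command; check those details against the current documentation. The helper names and the example prompt are hypothetical.

```python
import json
from pathlib import Path


def render_command(description: str, argument_hint: str, prompt: str) -> str:
    """Render a command file: YAML frontmatter followed by the prompt body."""
    # json.dumps produces a double-quoted string that YAML parsers also
    # accept, so colons or brackets in the text are not misread.
    lines = [
        "---",
        f"description: {json.dumps(description, ensure_ascii=False)}",
        f"argument-hint: {json.dumps(argument_hint, ensure_ascii=False)}",
        "---",
        "",
        prompt.strip(),
        "",
    ]
    return "\n".join(lines)


def write_command(repo_root: Path, name: str, description: str,
                  argument_hint: str, prompt: str) -> Path:
    """Write the rendered command to <repo_root>/.claude/commands/<name>.md."""
    target = repo_root / ".claude" / "commands" / f"{name}.md"
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(render_command(description, argument_hint, prompt),
                      encoding="utf-8")
    return target


# Hypothetical wiki content, reduced to its core prompt.
PR_PROMPT = """
Write a pull request description for the change described here: $ARGUMENTS

Include a summary, the testing performed, and any rollout risks.
"""
```

Calling `write_command(Path("."), "pr-description", "Draft a PR description", "<change summary> (write by hand for one-line fixes)", PR_PROMPT)` produces a file ready to commit and review. The argument hint in that call carries a tradeoff as well as the syntax, in line with the fourth pattern above.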

Teams that try to design the full command catalogue upfront ship nothing. Three months of planning produces a diagram. Three months of usage produces seventeen commands, two of which account for eighty percent of the value. The wiki-to-command conversion also surfaces a useful signal: if a process has no wiki page, the team has not agreed on it. That means it is not ready to automate.

The second-order win is harder to quantify and more durable than the direct savings. A repository of committed slash commands is a readable inventory of how your team has agreed to use Claude. It answers the questions a CISO or general counsel will ask during a rollout signoff: What can Claude do? What guardrails exist? Can someone audit those guardrails? A wiki page invites drift. A committed command file with a pull-request history invites trust. If you are mapping that readiness right now, the AI Readiness Assessment is a structured starting point.

When slash commands are not worth the effort

Three situations where this investment does not pay:

The team has fewer than four engineers. Below that threshold, the coordination overhead approaches the benefit. A personal prompt file works fine.

Workflows are changing every six weeks. Versioned commands, and frozen ones in particular, need maintenance whenever the underlying process shifts. Stabilise the process before versioning the tooling around it.

The team has not agreed on what good looks like. A slash command wraps a prompt. If there is no rough consensus on the underlying standard, you are versioning a disagreement, not resolving it.

What to measure from week one

Three numbers belong on a shared dashboard from the moment you ship the first command: time-to-first-result on each agent flow, cost-per-task in tokens, and acceptance rate. Acceptance rate is how often an engineer takes Claude's output without significant revision. The three together tell you whether the command is saving time, saving money, and producing work the engineer trusts. Without all three, the conversation with finance is a faith argument, not a numbers argument.
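As a rough illustration of how the three numbers could be derived, the sketch below aggregates per-task records by command. The record fields, and the idea that a team logs one record per command invocation, are assumptions made for the example; how acceptance is captured in practice (a reaction, a survey, a diff threshold) is left open.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TaskRecord:
    command: str
    seconds_to_first_result: float
    tokens_used: int
    accepted: bool  # engineer took the output without significant revision


def summarise(records):
    """Return the three dashboard metrics for each command."""
    by_command = {}
    for record in records:
        by_command.setdefault(record.command, []).append(record)

    summary = {}
    for command, rows in by_command.items():
        summary[command] = {
            # Median rather than mean, so one slow run does not dominate.
            "median_seconds_to_first_result": median(
                r.seconds_to_first_result for r in rows
            ),
            "tokens_per_task": sum(r.tokens_used for r in rows) / len(rows),
            "acceptance_rate": sum(r.accepted for r in rows) / len(rows),
        }
    return summary
```

Multiplying `tokens_per_task` by the team's token price gives the cost-per-task figure finance will ask for; that price is deliberately left out because it varies by model and contract.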

A Sydney engineering platform team running this discipline found the dashboard itself drove roughly $120,000 in additional annual savings. Two flows were consuming 40 percent of the token budget while delivering about 10 percent of the value. The team lead described it as the easiest cost-reduction conversation he had all year. Killing the two flows took one day. Building the dashboard took a week. If you want someone to build and instrument the full setup, that is the kind of work we do through our Claude Code rollout services.
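The imbalance in that example, a large share of tokens against a small share of value, is straightforward to flag once both are tracked per flow. The sketch below is illustrative only: the article does not say how the team scored value, so `value_score` is a hypothetical input (accepted outputs per flow would be one proxy), and the default threshold of 2.0 is arbitrary.

```python
def flag_costly_flows(token_spend, value_score, ratio_threshold=2.0):
    """Flag flows whose share of tokens far exceeds their share of value.

    token_spend and value_score map flow name -> total for the period.
    Returns (flow, token_share, value_share) tuples, costliest first.
    """
    total_tokens = sum(token_spend.values())
    total_value = sum(value_score.values())
    if total_tokens <= 0 or total_value <= 0:
        raise ValueError("totals must be positive")

    flagged = []
    for flow, tokens in token_spend.items():
        token_share = tokens / total_tokens
        value_share = value_score.get(flow, 0.0) / total_value
        if token_share > ratio_threshold * value_share:
            flagged.append((flow, token_share, value_share))
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

A flagged flow is a prompt for a conversation, not an automatic retirement; some expensive flows are worth their cost for reasons a simple score will not capture.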

Review the command surface every quarter. Patterns that earned their keep stay. Patterns that did not are retired. The teams that do this consistently treat their Claude tooling as a product, not a project. That mindset is the compound interest.